What You Need to Know About Llama3 70B Performance on NVIDIA 4090 24GB

Introduction

The world of large language models (LLMs) is buzzing with excitement! These powerful AI tools can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way, and they're getting more sophisticated every day.

One of the most popular LLM families is Llama3, a set of open-weight models released by Meta. Llama3 comes in different sizes, from 8B parameters to 70B parameters, each with its own strengths. But how do these models perform on different devices?

In this deep dive, we'll be focusing on the Llama3 70B model and its performance on the NVIDIA 4090_24GB, a powerhouse GPU known for its speed and processing capabilities.

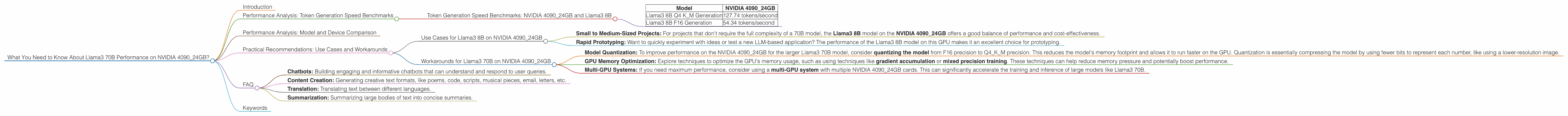

Performance Analysis: Token Generation Speed Benchmarks

Let's dive into the numbers and see how Llama3 70B performs on the NVIDIA 4090_24GB when generating text. The key metric is tokens per second, which shows how quickly the model can produce output.

Unfortunately, there's no data available on the Llama3 70B model's generation speed on the NVIDIA 4090_24GB. The most likely reason is memory capacity rather than raw speed: at F16 the 70B weights take roughly 140 GB, and even a 4-bit quantization takes roughly 40 GB, both well beyond the card's 24 GB of VRAM.
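To see why the 70B model is a poor fit for a single 24 GB card, here is a minimal back-of-the-envelope sketch. It only counts the weights (no KV cache or runtime buffers), uses the nominal 70 billion parameter count, and assumes an average of about 4.85 bits per weight for Q4_K_M. That last figure is an approximation, not a number from the benchmark data.

```python
VRAM_GB = 24.0        # memory capacity of the NVIDIA 4090_24GB
N_PARAMS_70B = 70e9   # nominal parameter count (rounded)

# Assumed average bits per weight. Q4_K_M mixes several block formats,
# so 4.85 is an approximation used only for this estimate.
FORMATS = {"F16": 16.0, "Q4_K_M": 4.85}


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def report():
    """Return (format, size in GB, fits in VRAM?) for each format."""
    rows = []
    for name, bits in FORMATS.items():
        size = weight_memory_gb(N_PARAMS_70B, bits)
        rows.append((name, round(size, 1), size <= VRAM_GB))
    return rows


if __name__ == "__main__":
    for name, size, fits in report():
        verdict = "fits" if fits else "does not fit"
        print(f"Llama3 70B {name}: ~{size} GB, {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions neither format fits, which is consistent with the missing benchmark numbers.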

However, we can look at the performance of the smaller Llama3 8B model on the same GPU to get a sense of the potential performance.

Token Generation Speed Benchmarks: NVIDIA 4090_24GB and Llama3 8B

| Model and precision | Generation speed on NVIDIA 4090_24GB |
|---|---|
| Llama3 8B Q4_K_M Generation | 127.74 tokens/second |
| Llama3 8B F16 Generation | 54.34 tokens/second |

Key Takeaways:

- The Llama3 8B model achieves a substantial token generation speed on the NVIDIA 4090_24GB, ranging from 54.34 tokens/second in F16 precision to 127.74 tokens/second in Q4_K_M precision.

- Q4_K_M precision delivers roughly 2.35 times the throughput of F16, so the model generates text noticeably faster. Quantization usually costs some accuracy, but depending on the use case the speedup can be a worthwhile trade-off.
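To make the gap between the two precisions concrete, the short sketch below computes the speedup from the two benchmark figures and the time each precision would need for a reply of a given length. The 500-token reply length is just an illustrative choice.

```python
# Generation speeds from the benchmark table, in tokens/second.
F16_TPS = 54.34
Q4_K_M_TPS = 127.74


def speedup(fast_tps: float, slow_tps: float) -> float:
    """How many times faster the first configuration generates tokens."""
    return fast_tps / slow_tps


def seconds_for(n_tokens: int, tps: float) -> float:
    """Time to generate n_tokens at a steady tokens/second rate."""
    return n_tokens / tps


if __name__ == "__main__":
    reply_len = 500  # illustrative reply length, in tokens
    print(f"Q4_K_M vs F16 speedup: {speedup(Q4_K_M_TPS, F16_TPS):.2f}x")
    print(f"{reply_len} tokens at Q4_K_M: {seconds_for(reply_len, Q4_K_M_TPS):.1f} s")
    print(f"{reply_len} tokens at F16:    {seconds_for(reply_len, F16_TPS):.1f} s")
```

In other words, a reply that takes about nine seconds at F16 arrives in about four at Q4_K_M.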

Performance Analysis: Model and Device Comparison

Let's compare the performance of the Llama3 8B and Llama3 70B models on the NVIDIA 4090_24GB. While we don't have complete performance data for the 70B model on this GPU, we can still draw some insights and make informed assumptions.

The larger size of the Llama3 70B model compared to the 8B model means it requires more computational resources and memory bandwidth. Consequently, we expect its performance on the same GPU to be significantly slower than the smaller model.
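As a rough way to put a number on "significantly slower", the sketch below scales the measured 8B Q4_K_M speed by the ratio of parameter counts. This rests on two loud assumptions: that generation speed is limited by how many weight bytes must be read per token, and that the whole 70B model could sit in GPU memory, which a single 24 GB card cannot do at this size. Treat the result as an optimistic ceiling, not a measurement.

```python
# Measured value from the benchmark table (tokens/second).
MEASURED_8B_Q4_TPS = 127.74

# Nominal parameter counts (rounded).
PARAMS_8B = 8e9
PARAMS_70B = 70e9


def scaled_tps(measured_tps: float, measured_params: float,
               target_params: float) -> float:
    """Scale throughput inversely with parameter count at equal precision.

    Assumes each generated token requires reading every weight once,
    so time per token grows in proportion to model size.
    """
    return measured_tps * measured_params / target_params


estimate = scaled_tps(MEASURED_8B_Q4_TPS, PARAMS_8B, PARAMS_70B)

if __name__ == "__main__":
    print(f"Optimistic 70B Q4_K_M ceiling: ~{estimate:.1f} tokens/second")
```

Any real single-card setup has to keep part of the model in system RAM, so actual speeds would be expected to land well below this ceiling.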

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 8B on NVIDIA 4090_24GB

- Small to Medium-Sized Projects: For projects that don't require the full complexity of a 70B model, the Llama3 8B model on the NVIDIA 4090_24GB offers a good balance of performance and cost-effectiveness.

- Rapid Prototyping: Want to quickly experiment with ideas or test a new LLM-based application? The performance of the Llama3 8B model on this GPU makes it an excellent choice for prototyping.

Workarounds for Llama3 70B on NVIDIA 4090_24GB

- Model Quantization: To make the larger Llama3 70B model more manageable on the NVIDIA 4090_24GB, use a Q4_K_M quantization instead of F16 precision. This cuts the model's memory footprint to well under a third of the F16 size and reduces the data the GPU has to read for every token. Quantization is essentially compressing the model by using fewer bits to represent each number, like using a lower-resolution image. Keep in mind that even at 4 bits the 70B weights are larger than 24 GB, so quantization alone will not fit the whole model on one card.

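- GPU Memory Optimization: Gradient accumulation and mixed precision training reduce memory during training, so they do not help when you only run the model. For inference, the options are to keep only some of the model's layers on the GPU and the rest in system RAM (slower, because the CPU-side layers become the bottleneck), and to use a shorter context window so the KV cache takes less memory.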

- Multi-GPU Systems: If you need maximum performance, consider using a multi-GPU system with multiple NVIDIA 4090_24GB cards. This can significantly accelerate the training and inference of large models like Llama3 70B.
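The workarounds above can be sized with a little arithmetic. The sketch below estimates how many 24 GB cards a model would need and how many layers would fit on a single card. The model sizes (about 42.4 GB for Q4_K_M, 140 GB for F16) are weight-only estimates, the 2 GB reserve for KV cache and buffers is a guess, and the layers are treated as equal in size, which ignores the embedding and output tensors. Llama3 70B has 80 transformer layers.

```python
import math

VRAM_GB = 24.0       # per-card memory on the NVIDIA 4090_24GB
N_LAYERS_70B = 80    # transformer layers in Llama3 70B


def gpus_needed(model_gb: float, vram_gb: float,
                overhead_gb: float = 2.0) -> int:
    """Cards needed to hold all weights, reserving overhead_gb per card."""
    usable = vram_gb - overhead_gb
    return math.ceil(model_gb / usable)


def layers_on_gpu(model_gb: float, n_layers: int, vram_gb: float,
                  overhead_gb: float = 2.0) -> int:
    """Layers that fit on one card, assuming equally sized layers."""
    per_layer_gb = model_gb / n_layers
    fit = int((vram_gb - overhead_gb) // per_layer_gb)
    return min(n_layers, fit)


if __name__ == "__main__":
    q4_gb, f16_gb = 42.4, 140.0  # assumed weight sizes, in GB
    print("Cards for Q4_K_M:", gpus_needed(q4_gb, VRAM_GB))
    print("Cards for F16:   ", gpus_needed(f16_gb, VRAM_GB))
    print("Q4_K_M layers on one card:",
          layers_on_gpu(q4_gb, N_LAYERS_70B, VRAM_GB), "of", N_LAYERS_70B)
```

Under these assumptions, two cards hold the quantized model, while a single card holds only about half of its layers.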

FAQ

Q: What is the difference between Llama3 8B and Llama3 70B?

A: Llama3 8B and Llama3 70B are both open-weight LLMs from Meta, but they differ in the number of parameters they have. The 70B model is significantly larger and more capable, while the 8B model is smaller and faster. This means the 70B model can handle more complex tasks, but requires far more memory and compute.

Q: What do "F16 precision" and "Q4_K_M precision" mean?

A: Precision refers to the number of bits used to represent each of the model's weights. F16 uses 16 bits per weight, while Q4_K_M is a 4-bit quantization format that averages somewhat more than 4 bits per weight once the per-block scaling data is included. Lower precision shrinks the model and speeds up generation but may lead to a slight decrease in accuracy.
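To show what "fewer bits per number" means in practice, here is a toy sketch of block-wise 4-bit quantization: a block of weights is stored as small integers plus one shared scale. This is a deliberately simplified scheme for illustration, not the actual Q4_K_M format, and the weight values are made up.

```python
def quantize_block(values, bits=4):
    """Symmetric quantization of one block: returns (scale, list of ints)."""
    qmax = 2 ** (bits - 1) - 1              # 7 for signed 4-bit
    peak = max(abs(v) for v in values)
    scale = peak / qmax if peak else 1.0    # one shared scale per block
    ints = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, ints


def dequantize_block(scale, ints):
    """Recover approximate weights from the stored integers and scale."""
    return [scale * q for q in ints]


# Made-up example weights for a single block.
weights = [0.12, -0.70, 0.33, 0.06, -0.21, 0.64, -0.02, 0.41]

scale, q = quantize_block(weights)
restored = dequantize_block(scale, q)
max_err = max(abs(a - b) for a, b in zip(weights, restored))

if __name__ == "__main__":
    print("4-bit integers:", q)
    print("shared scale:  ", scale)
    print("largest rounding error:", max_err)
```

Each weight now needs only 4 bits, but the block also stores its scale, which is why real 4-bit formats average a little more than 4 bits per weight. The rounding error is the accuracy cost mentioned above.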

Q: What are some real-world use cases for Llama3 models?

A: Llama3 models have a wide range of potential applications, including:

- Chatbots: Building engaging and informative chatbots that can understand and respond to user queries.

- Content Creation: Generating creative text formats, like poems, code, scripts, musical pieces, email, letters, etc.

- Translation: Translating text between different languages.

- Summarization: Summarizing large bodies of text into concise summaries.

Keywords

Llama3 70B, NVIDIA 4090_24GB, LLM, performance, token generation speed, GPU, benchmark, quantization, F16, Q4_K_M, model comparison, use cases, workarounds, practical recommendations, AI, deep dive, developers, geeks, memory optimization, multi-GPU systems.