What You Need to Know About Llama3 70B Performance on NVIDIA 4090 24GB x2

Let's dive into the world of large language models (LLMs) and see how the mighty Llama3 70B performs on a beefy NVIDIA 4090 24GB x2 setup, that is, two 24 GB cards with 48 GB of VRAM between them. This is where raw processing power meets the potential of LLMs. Imagine a supercomputer in your living room - that's the kind of power we're talking about!

Introduction

Local LLMs are like having your own personal AI assistant working right on your computer. They empower developers and researchers to experiment with cutting-edge AI technology without relying on cloud services. But the performance of these models is heavily dependent on the hardware you use.

This article explores the performance of the Llama3 70B model on the NVIDIA 4090 24GB x2 setup, covering token generation speed, a comparison with a smaller model on the same hardware, and practical recommendations. We'll use benchmark data to give you a clear picture of what you can expect.

Performance Analysis: Token Generation Speed Benchmarks: Llama3 70B on NVIDIA 4090 24GB x2

Let's get down to the nitty-gritty. We're interested in how fast the Llama3 70B model can generate text on this powerful NVIDIA 4090 24GB x2 setup.

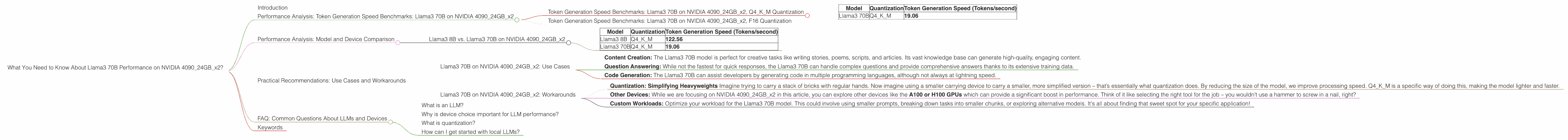

Token Generation Speed Benchmarks: Llama3 70B on NVIDIA 4090 24GB x2, Q4_K_M Quantization

| Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3 70B | Q4_K_M | 19.06 |
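How this figure was measured is not described here, but a tokens/second number is generally the count of generated tokens divided by the elapsed wall-clock time. Below is a minimal sketch of that calculation. Both `tokens_per_second` and `fake_generate` are illustrative names, not part of any library, and `fake_generate` is a hypothetical stand-in for a real model.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[int], Iterable[str]], n_tokens: int) -> float:
    """Consume a token stream and return generated tokens per second."""
    start = time.perf_counter()
    produced = sum(1 for _ in generate(n_tokens))  # count tokens as they arrive
    elapsed = time.perf_counter() - start
    return produced / elapsed if elapsed > 0 else 0.0


def fake_generate(n_tokens: int):
    """Hypothetical stand-in for a model: yields one token roughly every 5 ms."""
    for i in range(n_tokens):
        time.sleep(0.005)
        yield f"tok{i}"


print(f"{tokens_per_second(fake_generate, 50):.1f} tokens/second")
```

To benchmark a real model, you would swap `fake_generate` for whatever streaming call your inference library provides.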

Token Generation Speed Benchmarks: Llama3 70B on NVIDIA 4090 24GB x2, F16 Precision

There is no available data for Llama3 70B at F16 (unquantized half precision) on NVIDIA 4090 24GB x2.
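One plausible reason for the missing F16 result is memory: the weights alone would far exceed the 48 GB available across the two cards. The back-of-the-envelope sketch below makes that concrete. It assumes "70B" means 70 billion parameters and that Q4_K_M averages roughly 4.8 bits per weight. Both are approximations, and the estimate ignores the KV cache and other runtime overhead.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in GB (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS = 70e9         # assumption: "70B" taken as 70 billion parameters
TOTAL_VRAM_GB = 2 * 24  # two 24 GB cards

# Assumption: Q4_K_M averages about 4.8 bits per weight; F16 uses 16 bits.
for name, bits in [("Q4_K_M", 4.8), ("F16", 16.0)]:
    gb = weight_memory_gb(N_PARAMS, bits)
    verdict = "fits" if gb < TOTAL_VRAM_GB else "does not fit"
    print(f"{name}: ~{gb:.0f} GB of weights, {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions the Q4_K_M weights come to about 42 GB, which leaves little headroom, while F16 needs about 140 GB.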

Performance Analysis: Model and Device Comparison

Now, let's compare Llama3 70B with a smaller model on the same NVIDIA 4090 24GB x2 setup. Since this article focuses only on the setup named in the title, other devices are out of the picture.

Llama3 8B vs. Llama3 70B on NVIDIA 4090 24GB x2

| Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3 8B | Q4_K_M | 122.56 |
| Llama3 70B | Q4_K_M | 19.06 |

The numbers show that Llama3 8B generates tokens about 6.4 times faster than Llama3 70B on the NVIDIA 4090 24GB x2 setup (122.56 vs. 19.06 tokens/second). This is expected: the smaller model has far fewer parameters, so each generated token requires less computation and less memory traffic. It's like comparing a bicycle to a truck: the bicycle might be faster in a tight alley, but the truck can handle larger loads on the highway!
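To see what these rates mean in waiting time, the sketch below converts them into seconds per reply. The 500-token reply length is a hypothetical example, and the calculation covers token generation only, not the time spent processing the prompt.

```python
# Tokens/second at Q4_K_M, taken from the comparison table.
BENCHMARKS = {"Llama3 8B": 122.56, "Llama3 70B": 19.06}


def seconds_for(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a steady generation rate."""
    return n_tokens / tokens_per_second


REPLY_TOKENS = 500  # hypothetical reply length

for model, rate in BENCHMARKS.items():
    print(f"{model}: {seconds_for(REPLY_TOKENS, rate):.1f} s for {REPLY_TOKENS} tokens")

ratio = BENCHMARKS["Llama3 8B"] / BENCHMARKS["Llama3 70B"]
print(f"Speed ratio: {ratio:.1f}x")
```

At these rates a 500-token reply takes roughly 4 seconds on the 8B model and roughly 26 seconds on the 70B model.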

Practical Recommendations: Use Cases and Workarounds

Now that we've analyzed the performance, let's talk about how you can leverage this information for specific use cases.

Llama3 70B on NVIDIA 4090 24GB x2: Use Cases

Despite being slower than the Llama3 8B model, the Llama3 70B on NVIDIA 4090 24GB x2 still packs a punch!

- Content Creation: The Llama3 70B model is well suited to creative tasks like writing stories, poems, scripts, and articles. It can draw on its broad training data to produce high-quality, engaging content.

- Question Answering: While not the fastest for quick responses, the Llama3 70B can handle complex questions and provide comprehensive answers thanks to its extensive training data.

- Code Generation: The Llama3 70B can assist developers by generating code in multiple programming languages, although not always at lightning speed.

Llama3 70B on NVIDIA 4090 24GB x2: Workarounds

There are a few ways to work around the speed constraint:

- Quantization: Imagine trying to carry a stack of bricks by hand. Now imagine carrying a lighter, simplified version of that stack instead. That's essentially what quantization does: it stores the model's weights at lower precision, which shrinks the model and improves processing speed. Q4_K_M is one specific way of doing this, and it is the scheme used for the benchmarks in this article.

- Other Devices: While this article focuses on NVIDIA 4090 24GB x2, you can explore other devices such as the A100 or H100 GPUs, which can provide a significant boost in performance. Think of it like selecting the right tool for the job: you wouldn't use a hammer to drive in a screw, right?

- Custom Workloads: Optimize your workload for the Llama3 70B model. This could involve using shorter prompts, breaking tasks into smaller chunks, or switching to an alternative model such as Llama3 8B when speed matters most. It's all about finding that sweet spot for your specific application!
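As one way to break a task into smaller chunks, the sketch below splits a long text into pieces with a capped word count so each prompt stays short. `chunk_words` is an illustrative helper, not a library function, and word count is only a rough proxy for the token count a model actually sees.

```python
def chunk_words(text: str, max_words: int) -> list[str]:
    """Split text into consecutive chunks of at most max_words words."""
    if max_words < 1:
        raise ValueError("max_words must be at least 1")
    words = text.split()
    return [
        " ".join(words[i:i + max_words])
        for i in range(0, len(words), max_words)
    ]


document = "one two three four five six seven"
for part in chunk_words(document, max_words=3):
    print(part)
```

Each chunk can then be sent to the model as its own, smaller request.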

FAQ: Common Questions About LLMs and Devices

What is an LLM?

An LLM, or Large Language Model, is a type of artificial intelligence (AI) that excels at understanding and generating human-like text. Think of it like a superhuman language scholar with a vast knowledge base, able to write, translate, summarize, and much more!

Why is device choice important for LLM performance?

LLMs are hungry for processing power and memory! The device you choose determines whether a model fits at all, and how quickly it can process prompts and generate text. Just like a car needs a powerful engine to drive fast, LLMs need powerful devices to perform efficiently.

What is quantization?

Quantization is a technique used to compress large language models by storing their weights at lower numerical precision, which makes them smaller and usually faster at the cost of some accuracy. Imagine you have a huge library full of books. Quantization is like creating a smaller library with summaries of the original books. It makes the library easier to manage and access, though some detail is lost.
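To make the idea concrete, here is a deliberately simplified sketch of 4-bit quantization: a block of floating-point weights is mapped to small integers that share one scale factor, then mapped back. This is a toy illustration only. Q4_K_M uses a more elaborate scheme that this sketch does not reproduce, and the function names and sample weights are made up for the example.

```python
def quantize_block(weights: list[float], bits: int = 4) -> tuple[float, list[int]]:
    """Symmetric quantization: map floats to signed integers sharing one scale."""
    q_max = 2 ** (bits - 1) - 1  # 7 for 4-bit values
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / q_max if max_abs else 1.0
    codes = [max(-q_max, min(q_max, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Recover approximate weights from the integer codes."""
    return [c * scale for c in codes]


block = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]  # made-up weights
scale, codes = quantize_block(block)
restored = dequantize_block(scale, codes)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print(codes)
print(f"max round-trip error: {max_error:.3f}")
```

Each weight now needs only 4 bits plus a share of the block's scale, and the round-trip error shows the precision given up in exchange.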

How can I get started with local LLMs?

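There are many great resources available. You can find open-weight pre-trained models like Llama on hubs such as Hugging Face, with code and tooling on GitHub. (GPT-3 is not available for local use; it is only accessible through OpenAI's API.) Or, if you're feeling adventurous, you can train your own LLM from scratch! Just be prepared for a long journey.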

Keywords

Llama3 70B, NVIDIA 4090 24GB x2, local LLM, token generation speed, performance, quantization, Q4_K_M, F16, GPU, GPU cores, use cases, content creation, question answering, code generation, workarounds, device choice, LLM, large language model, AI, artificial intelligence.