What You Need to Know About Llama3 70B Performance on NVIDIA 4080 16GB

Introduction

The world of large language models (LLMs) is exploding, and it's not just about the hype. These powerful AI models are revolutionizing the way we interact with technology, from generating creative text to translating languages and even writing code. But with their sheer size and complexity, LLMs also demand a lot of computing power to run effectively.

One of the key factors in determining an LLM's performance is the device it's running on. This article dives deep into the performance of the Llama3 70B model on the NVIDIA 4080_16GB GPU, exploring its token generation speed and comparing it to other models and devices.

We'll also discuss practical recommendations for use cases and workarounds, helping you make the most of your own LLM deployments. Get ready to geek out on the fascinating world of local LLMs and their performance!

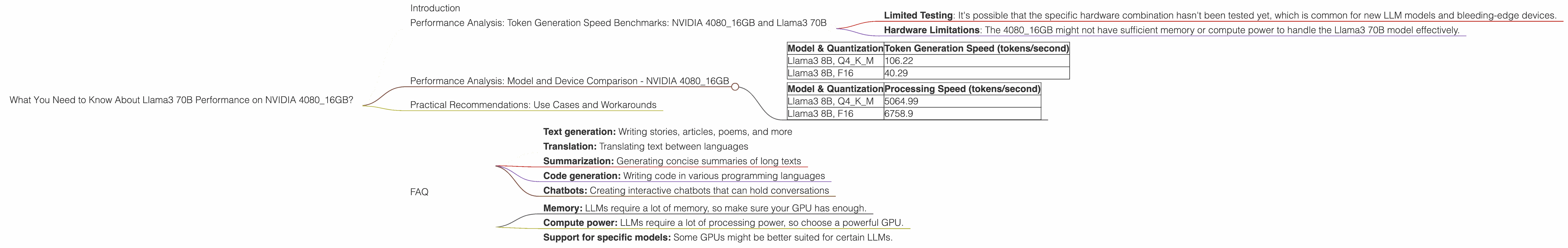

Performance Analysis: Token Generation Speed Benchmarks: NVIDIA 4080_16GB and Llama3 70B

Unfortunately, the data available doesn't include the performance metrics for Llama3 70B on the NVIDIA 4080_16GB. This is likely due to factors like:

- Limited Testing: It's possible that the specific hardware combination hasn't been tested yet, which is common for new LLM models and bleeding-edge devices.

- Hardware Limitations: The 4080_16GB has 16 GB of memory, which is not enough to hold the Llama3 70B weights. Even at roughly 4 to 5 bits per weight, 70 billion parameters need on the order of 40 GB, and about 140 GB at F16.

However, we can still get a sense of what this GPU is capable of by looking at the available data for Llama3 8B on the same card.
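
The hardware limitation mentioned above can be checked with a rough, weights-only estimate. The sketch below is illustrative: the parameter counts are the nominal 8 and 70 billion, the 4.85 bits per weight for Q4_K_M is an approximate average rather than an exact figure, the 16 GB card is treated as a 16 GiB budget, and the names `weights_gib` and `VRAM_GIB` are made up for this example. It ignores the KV cache and runtime overhead, so real requirements are higher.

```python
# Rough weights-only memory estimate (assumptions are labeled, not measured).
VRAM_GIB = 16.0  # assumed budget: the card's 16 GB treated as 16 GiB

# Nominal parameter counts (assumption: exact counts differ slightly).
MODELS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}

# Bits per weight; the Q4_K_M value is an approximate average (assumption).
BITS_PER_WEIGHT = {"Q4_K_M": 4.85, "F16": 16.0}


def weights_gib(n_params: float, bits_per_weight: float) -> float:
    """Size of the model weights alone, in GiB."""
    return n_params * bits_per_weight / 8 / 2**30


for model, n_params in MODELS.items():
    for quant, bits in BITS_PER_WEIGHT.items():
        size = weights_gib(n_params, bits)
        verdict = "fits" if size < VRAM_GIB else "does not fit"
        print(f"{model} {quant}: {size:.1f} GiB ({verdict} in {VRAM_GIB:.0f} GiB)")
```

Under these assumptions, both Llama3 8B variants come in under the budget, while Llama3 70B exceeds it by a wide margin at either precision.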

Performance Analysis: Model and Device Comparison - NVIDIA 4080_16GB

Let's take a look at the performance of Llama3 8B on the NVIDIA 4080_16GB. Remember, these numbers represent tokens generated per second, which is a good way to measure LLM performance.

| Model & Quantization | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B, Q4_K_M | 106.22 |
| Llama3 8B, F16 | 40.29 |
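
To put these figures in perspective, here is a minimal sketch that turns them into a speedup ratio and a wait time. The two speeds are copied from the table; the 500-token reply length is an arbitrary example, and the calculation assumes a steady generation rate with no other overhead.

```python
# Token generation speeds (tokens/second) copied from the table above.
GENERATION_TPS = {"Q4_K_M": 106.22, "F16": 40.29}


def generation_seconds(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a steady rate."""
    return n_tokens / tokens_per_second


speedup = GENERATION_TPS["Q4_K_M"] / GENERATION_TPS["F16"]
print(f"Q4_K_M vs F16 generation speedup: {speedup:.2f}x")

for quant, tps in GENERATION_TPS.items():
    # 500 tokens is a hypothetical reply length chosen for illustration.
    print(f"{quant}: {generation_seconds(500, tps):.1f} s for a 500-token reply")
```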

Important Considerations:

- Quantization: "Q4_K_M" and "F16" describe how the model's weights are stored. F16 keeps each weight as a 16-bit floating-point number and serves as the unquantized baseline here, while Q4_K_M is a 4-bit quantization format. Quantization means reducing the precision of the numbers in the model, which cuts memory usage and usually speeds up text generation. Think of it like using smaller buckets to hold water: you can fit more buckets in the same space!

- Processing Speed: While token generation speed is important, it's just one part of the story. Processing speed, measured in tokens processed per second, describes how quickly the model reads its input prompt before it starts generating, and it also plays a significant role. The table below shows Llama3 8B processing speeds on the NVIDIA 4080_16GB. Note that F16 comes out ahead here, the reverse of the generation results:

| Model & Quantization | Processing Speed (tokens/second) |
|---|---|
| Llama3 8B, Q4_K_M | 5064.99 |
| Llama3 8B, F16 | 6758.9 |
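
Processing and generation speed combine into the total time for a request. The sketch below uses a simple model, prompt tokens divided by processing speed plus output tokens divided by generation speed, with the benchmark numbers from this article. The 2000-token prompt and 500-token reply are hypothetical, and the model assumes steady rates and ignores model loading and other overhead.

```python
# Benchmark figures for Llama3 8B on the 4080_16GB (tokens/second).
PROCESSING_TPS = {"Q4_K_M": 5064.99, "F16": 6758.9}
GENERATION_TPS = {"Q4_K_M": 106.22, "F16": 40.29}


def request_seconds(quant: str, prompt_tokens: int, output_tokens: int) -> float:
    """Estimated time to read the prompt and then generate the reply."""
    prompt_time = prompt_tokens / PROCESSING_TPS[quant]
    generation_time = output_tokens / GENERATION_TPS[quant]
    return prompt_time + generation_time


for quant in PROCESSING_TPS:
    # Hypothetical workload: 2000-token prompt, 500-token reply.
    total = request_seconds(quant, prompt_tokens=2000, output_tokens=500)
    print(f"{quant}: about {total:.1f} s end to end")
```

For a workload like this, generation time dominates, so the F16 lead in processing speed does not make up for its slower generation.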

Practical Recommendations: Use Cases and Workarounds

Based on the available data, here's a breakdown of how to approach Llama3 70B on the NVIDIA 4080_16GB:

1. Prioritize Llama3 8B: For now, Llama3 8B is the more appropriate choice for the NVIDIA 4080_16GB. It runs at both Q4_K_M and F16 in the benchmarks above, reaching up to 106.22 tokens/second when generating text.

2. Explore Quantization: Quantization can significantly impact your model's performance. Consider using Q4_K_M quantization for Llama3 8B on the 4080_16GB. In the benchmarks above, Q4_K_M generates text about 2.6 times faster than F16 (106.22 vs. 40.29 tokens/second), although F16 is faster at processing.

3. Manage Expectations: Keep in mind that Llama3 70B, with its sheer size, needs far more memory than a single 16 GB card provides. Expect to need a GPU with more memory, several GPUs, or a specialized AI accelerator for good performance.

4. Workaround: Consider Smaller Models: If you're looking for a Llama3 model that fits on the 4080_16GB, go with the smaller Llama3 8B. Llama3 was released in 8B and 70B sizes, so the 8B is the smaller option, and it offers a good balance between performance and computational demands.

5. Explore Alternatives: Other GPU models like NVIDIA's A100 or H100 might provide better support for larger LLMs like Llama3 70B. Look into cloud-based solutions or use an on-premises AI accelerator for more demanding tasks.

FAQ

Q: What is an LLM?

A: An LLM (Large Language Model) is a type of artificial intelligence model trained on a massive amount of text data. This allows it to understand and generate human-like language, performing tasks like text generation, translation, and even code writing.

Q: What is quantization?

A: Quantization is a technique used to reduce the precision of the numbers in a model, for example by storing each weight in about 4 bits instead of 16. Think of it like using a smaller bucket to hold water. This saves memory and usually speeds up text generation, at the cost of some accuracy.
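
To make the idea concrete, here is a toy sketch of 4-bit quantization in plain Python. It is a deliberately simplified illustration (one shared scale per block, symmetric rounding) and not the actual Q4_K_M scheme, which is more elaborate. The function names and sample values are made up for this example.

```python
def quantize_block(values, bits=4):
    """Map floats to small signed integers that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit codes
    scale = max(abs(v) for v in values) / qmax or 1.0  # avoid a zero scale
    codes = [max(-qmax - 1, min(qmax, round(v / scale))) for v in values]
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


weights = [0.12, -0.53, 0.98, -0.07]  # hypothetical weights
codes, scale = quantize_block(weights)
restored = dequantize_block(codes, scale)

print("codes:   ", codes)
print("restored:", [round(r, 3) for r in restored])
```

Each weight is now a 4-bit integer plus one shared scale, which is where the memory saving comes from. The restored values are close to the originals but not identical, which is the precision being traded away.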

Q: Why is token generation speed important?

A: Token generation speed measures how quickly a model can produce text. A faster model can generate more text in the same amount of time, making it more efficient for various tasks.

Q: What are some use cases for Llama3 models?

A: Llama3 models can be used for a wide range of tasks, including:

- Text generation: Writing stories, articles, poems, and more

- Translation: Translating text between languages

- Summarization: Generating concise summaries of long texts

- Code generation: Writing code in various programming languages

- Chatbots: Creating interactive chatbots that can hold conversations

Q: What should I consider when choosing a GPU for LLM inference?

A: When choosing a GPU for running LLMs, consider the following factors:

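- Memory: The model's weights, plus working memory for the context, need to fit in the GPU's memory. Check the model's size at your chosen quantization against the memory your GPU has.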

- Compute power: LLMs require a lot of processing power, so choose a powerful GPU.

- Support for specific models: Some GPUs might be better suited for certain LLMs.

Keywords: LLM, Llama3, Llama3 70B, Llama3 8B, NVIDIA 4080_16GB, GPU, Token Generation Speed, Quantization, F16, Q4_K_M, Performance, Inference, NLP, AI, Machine Learning, Deep Learning, Model Size, Processing Speed, Computational Power, Memory, Use Cases, Workarounds, Recommendations, Developer, Geek, AI Accelerator, Cloud-Based Solutions, On-Premises, Local LLMs, Token Processing Speed, Developer Audience.