What You Need to Know About Llama3 70B Performance on the NVIDIA 4070 Ti 12GB

Introduction

The world of large language models (LLMs) is evolving rapidly, with new models and advancements emerging frequently. LLMs are revolutionizing natural language processing (NLP) tasks like text generation, translation, and question answering. One of the key factors influencing LLM performance is the hardware they run on. This article delves into the performance of Llama3 70B, a cutting-edge LLM, on the NVIDIA 4070 Ti 12GB graphics card.

For those unfamiliar with the intricate world of LLMs, imagine a super-powered language assistant that can understand and generate human-like text: writing emails, crafting stories, or generating code. These models learn from massive datasets of text and code, enabling them to perform tasks that were previously out of reach for software. But to unlock their full potential, you need powerful hardware.

This article will dissect the performance of Llama3 70B on the NVIDIA 4070 Ti 12GB, providing insights into token generation speeds, model and device comparisons, and practical recommendations for use cases and workarounds. Let's dive in!

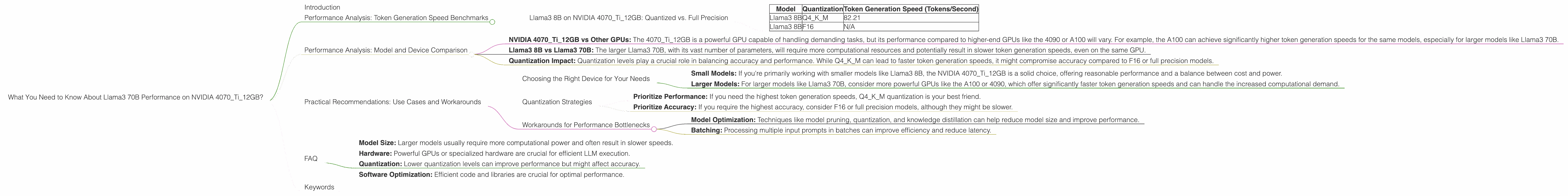

Performance Analysis: Token Generation Speed Benchmarks

This section explores the token generation speed of Llama3 70B on the NVIDIA 4070 Ti 12GB, a measure of how quickly the model generates output text. Since there's currently no publicly available data on Llama3 70B performance on this card, most likely because the model's weights do not fit in 12GB of VRAM, we'll focus on Llama3 8B, a smaller model from the same family, to understand the general performance trends:

Llama3 8B on NVIDIA 4070 Ti 12GB: Quantized (Q4_K_M) vs. F16

The table below presents token generation speeds for Llama3 8B on the NVIDIA 4070 Ti 12GB at two precision levels: Q4_K_M (llama.cpp's 4-bit "k-quant" format, medium variant) and F16 (16-bit floating point). Quantization is like "compressing" the model: storing the weights with fewer bits lets it run on hardware with less memory, at the cost of a small loss in accuracy.

| Model | Precision | Token Generation Speed (tokens/s) |
|---|---|---|
| Llama3 8B | Q4_K_M | 82.21 |
| Llama3 8B | F16 | N/A |

As the table shows, Llama3 8B at Q4_K_M generates 82.21 tokens per second on the NVIDIA 4070 Ti 12GB, or roughly one token every 12 milliseconds. No F16 result is available, so a direct comparison isn't possible. The likely reason is memory: at 2 bytes per weight, the roughly 8 billion parameters of Llama3 8B need about 16GB for the weights alone, more than the card's 12GB of VRAM.
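Why F16 and Q4_K_M behave so differently on a 12GB card comes down to simple arithmetic. The minimal Python sketch below estimates the size of the weights alone; the parameter count (about 8 billion) and the roughly 4.85 effective bits per weight for Q4_K_M are approximations assumed for illustration, not figures from the benchmark.

```python
# Rough size of a model's weights: parameters x bits per weight / 8 bytes.
# Assumptions (not from the benchmark): about 8e9 parameters for Llama3 8B,
# 16 bits per weight for F16, and about 4.85 effective bits per weight for Q4_K_M.
# The KV cache and CUDA context need extra VRAM on top of this.

VRAM_GB = 12.0  # NVIDIA 4070 Ti 12GB


def weight_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate weight size in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


LLAMA3_8B_PARAMS = 8e9

for name, bits in [("F16", 16.0), ("Q4_K_M", 4.85)]:
    size = weight_gb(LLAMA3_8B_PARAMS, bits)
    verdict = "fit" if size < VRAM_GB else "do not fit"
    print(f"Llama3 8B {name}: ~{size:.1f} GB of weights, which {verdict} in {VRAM_GB:.0f} GB")
```

Real VRAM use is higher than these numbers, because the KV cache and runtime buffers also need space, so treat the Q4_K_M estimate as a lower bound.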

Important Note: Llama3 8B is a much smaller model than Llama3 70B, so its results should not be extrapolated directly. Quantization does speed up generation on GPUs, mainly by shrinking the amount of data that must be read for each token, but it cannot offset a model that is nearly nine times larger. Llama3 70B in Q4_K_M will be considerably slower than Llama3 8B, not faster: its weights take over 40GB, so on a 12GB card most layers must be offloaded to the CPU and system RAM, which sharply reduces generation speed.
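Runtimes such as llama.cpp handle a model that is too big for VRAM by keeping only some of its layers on the GPU; the `--n-gpu-layers` (`-ngl`) option sets how many. The Python sketch below estimates that split for Llama3 70B at Q4_K_M. The layer count (80), the ~4.85 bits per weight, and the 1.5GB reserve for the KV cache and runtime buffers are assumptions for illustration, and real layer sizes vary slightly.

```python
import math

# Assumptions, not measurements: ~70e9 parameters and 80 transformer layers for
# Llama3 70B, ~4.85 effective bits per weight for Q4_K_M, and a hypothetical
# 1.5 GB of VRAM reserved for the KV cache, CUDA context, and other buffers.
# Layers are treated as equal-sized; embeddings and the output head are ignored.

VRAM_GB = 12.0
RESERVED_GB = 1.5
N_PARAMS = 70e9
N_LAYERS = 80
BITS_PER_WEIGHT = 4.85


def weight_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate weight size in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


total_gb = weight_gb(N_PARAMS, BITS_PER_WEIGHT)
per_layer_gb = total_gb / N_LAYERS

# How many whole layers fit in the VRAM left over after the reserve.
gpu_layers = min(N_LAYERS, math.floor((VRAM_GB - RESERVED_GB) / per_layer_gb))
cpu_layers = N_LAYERS - gpu_layers

print(f"Q4_K_M weights: ~{total_gb:.1f} GB, ~{per_layer_gb:.2f} GB per layer")
print(f"Layers on GPU: {gpu_layers}, layers left on CPU: {cpu_layers}")
```

Under these assumptions roughly three quarters of the layers stay on the CPU side, so generation speed would likely be limited by system RAM and CPU rather than by the GPU.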

Performance Analysis: Model and Device Comparison

To see how the NVIDIA 4070 Ti 12GB stacks up against other devices and how model size affects performance, consider the following comparisons:

NVIDIA 4070 Ti 12GB vs Other GPUs: The 4070 Ti 12GB is a capable consumer GPU, but higher-end cards such as the RTX 4090 (24GB) or the data-center A100 (40GB or 80GB) offer more VRAM and more memory bandwidth. The gap is largest for big models like Llama3 70B: an 80GB A100 can hold the entire Q4_K_M model in GPU memory, while a 12GB card cannot.

Llama3 8B vs Llama3 70B: Llama3 70B has nearly nine times as many parameters as Llama3 8B. Because generating each token requires reading essentially all of the model's weights, it will generate tokens more slowly on the same GPU, even before accounting for the memory limits of a 12GB card.

Quantization Impact: The quantization level trades accuracy for speed and memory. Q4_K_M gives faster token generation and a much smaller memory footprint, but it may reduce output quality compared to F16 or full-precision (FP32) models.
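A common rule of thumb ties these comparisons together: single-request generation is usually limited by memory bandwidth, because each new token requires reading roughly all of the weights. The Python sketch below turns that into an upper bound on tokens per second. The 504 GB/s bandwidth is the 4070 Ti's spec-sheet figure, and the ~4.85 bits per weight for Q4_K_M is an approximation; both are assumptions, and the bound ignores compute, caches, and the KV cache.

```python
# Rule of thumb: single-request decoding is usually memory-bandwidth bound,
# because producing each token reads (roughly) every weight once. That gives
# an upper bound of: tokens/s <= memory bandwidth / size of the weights.
# Assumptions: ~504 GB/s memory bandwidth for the 4070 Ti (spec-sheet value),
# ~4.85 bits per weight for Q4_K_M, and the whole model resident in VRAM.

BANDWIDTH_GB_S = 504.0


def weight_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate weight size in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def max_tokens_per_s(n_params: float, bits_per_weight: float = 4.85,
                     bandwidth_gb_s: float = BANDWIDTH_GB_S) -> float:
    """Bandwidth-bound ceiling on single-stream generation speed."""
    return bandwidth_gb_s / weight_gb(n_params, bits_per_weight)


for name, n_params in [("Llama3 8B", 8e9), ("Llama3 70B", 70e9)]:
    print(f"{name} Q4_K_M: at most ~{max_tokens_per_s(n_params):.0f} tokens/s")
```

For Llama3 8B the ceiling works out to about 104 tokens/s, so the measured 82.21 tokens/s is in a plausible range. For Llama3 70B the ceiling drops by the ratio of parameter counts (70 / 8 = 8.75) to about 12 tokens/s, and that already assumes the model fits in VRAM, which on this card it does not. Halving the bits per weight doubles the ceiling, which is the main reason quantization speeds up generation.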

Practical Recommendations: Use Cases and Workarounds

Choosing the Right Device for Your Needs

- Small Models: If you're primarily working with smaller models like Llama3 8B, the NVIDIA 4070 Ti 12GB is a solid choice, offering reasonable performance and a balance between cost and power.

- Larger Models: For larger models like Llama3 70B, you need more VRAM: a GPU such as the 80GB A100, or a multi-GPU setup. A single RTX 4090 (24GB) is faster than the 4070 Ti but still cannot hold the more than 40GB of Q4_K_M weights on its own, so some layers would still be offloaded to the CPU.

Quantization Strategies

- Prioritize Performance: If you need the highest token generation speeds, Q4_K_M quantization is your best friend.

- Prioritize Accuracy: If you require the highest accuracy, consider F16 or full-precision models, although they are slower and need considerably more VRAM.
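One way to combine these two strategies is to use the most precise format that still fits entirely in VRAM. The Python sketch below does exactly that; the bits-per-weight values and the 1.5GB reserve for the KV cache and runtime buffers are assumptions, and `pick_format` is a hypothetical helper, not part of any library.

```python
from typing import Optional

# Sketch of "prefer accuracy when it fits": pick the most precise format whose
# weights fit in VRAM. Assumptions: bits per weight (16 for F16, ~4.85 for Q4_K_M)
# and a hypothetical 1.5 GB reserve for the KV cache and runtime buffers.

FORMATS = [("F16", 16.0), ("Q4_K_M", 4.85)]  # most precise first


def weight_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate weight size in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def pick_format(n_params: float, vram_gb: float, reserved_gb: float = 1.5) -> Optional[str]:
    """Return the most precise format that fits fully in VRAM, or None if none does."""
    budget_gb = vram_gb - reserved_gb
    for name, bits in FORMATS:
        if weight_gb(n_params, bits) <= budget_gb:
            return name
    return None  # nothing fits: partial CPU offload (or more VRAM) is needed


print(pick_format(8e9, 12.0))   # Llama3 8B on a 12GB card
print(pick_format(8e9, 24.0))   # Llama3 8B on a 24GB card
print(pick_format(70e9, 12.0))  # Llama3 70B on a 12GB card
```

Falling back to `None` rather than silently picking a format makes the "doesn't fit at all" case explicit, which is exactly the situation Llama3 70B is in on a 12GB card.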

Workarounds for Performance Bottlenecks

- Model Optimization: Techniques like model pruning, quantization, and knowledge distillation can help reduce model size and improve performance.

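- Batching: Processing multiple prompts together in batches improves overall throughput (total tokens per second across all requests), though it does not reduce, and can slightly increase, the latency of each individual request.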
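The throughput-versus-latency trade-off of batching can be illustrated with a little arithmetic. In the Python sketch below, each decode step produces one token for every request in the batch. The step times are hypothetical numbers chosen only to reflect the assumption that a step gets somewhat slower as the batch grows; they are not measurements from the 4070 Ti.

```python
from typing import Tuple

# Illustrative throughput-vs-latency arithmetic for batched decoding.
# The step times are HYPOTHETICAL, chosen only to reflect the assumption that
# a decode step gets somewhat slower as the batch grows; they are not measurements.
# Each decode step produces one new token for every request in the batch.

HYPOTHETICAL_STEP_MS = {1: 12.0, 4: 14.0, 8: 18.0}


def batch_stats(batch_size: int, step_ms: float) -> Tuple[float, float]:
    """Return (total tokens/s across the batch, tokens/s seen by each request)."""
    per_request = 1000.0 / step_ms
    return batch_size * per_request, per_request


for batch_size, step_ms in HYPOTHETICAL_STEP_MS.items():
    total, each = batch_stats(batch_size, step_ms)
    print(f"batch={batch_size}: {total:.0f} tokens/s total, {each:.1f} tokens/s per request")
```

With these made-up step times, total throughput rises with batch size while each individual request sees fewer tokens per second.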

FAQ

Q: What is token generation speed?

A: Token generation speed, measured in tokens per second, is how quickly an LLM generates output text. A token is a word or a piece of a word, so this is roughly how many words or word fragments the model produces each second.
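To make tokens per second more tangible, the short Python sketch below converts the Llama3 8B Q4_K_M result from the benchmark table into time per token and time for a reply. The 500-token reply length is an arbitrary example, and the estimate ignores the time spent processing the prompt before generation starts.

```python
def generation_time_s(n_tokens: int, tokens_per_s: float) -> float:
    """Time to generate n_tokens at a steady speed (prompt processing excluded)."""
    return n_tokens / tokens_per_s


SPEED = 82.21  # Llama3 8B, Q4_K_M, NVIDIA 4070 Ti 12GB (from the benchmark table)

print(f"{1000 / SPEED:.1f} ms per token")
print(f"{generation_time_s(500, SPEED):.1f} s for a 500-token reply")
```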

Q: What is quantization?

A: Quantization is a technique that stores a model's weights with fewer bits, for example 4 bits instead of 16, reducing their precision. Like "compressing" the model, it lets the model run on hardware with less memory, while potentially sacrificing a bit of accuracy.
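The core idea can be shown with a toy example: each weight in a block is stored as a small integer plus one shared scale, instead of as a 16-bit float. The Python sketch below is a simplified symmetric 4-bit block quantizer for illustration only; llama.cpp's Q4_K_M uses a more elaborate block layout, and the sample weights are made up.

```python
# Toy symmetric 4-bit quantization of one block of weights. This is a simplified
# illustration of the idea only; llama.cpp's Q4_K_M uses a more elaborate layout.

from typing import List, Tuple


def quantize_block(weights: List[float]) -> Tuple[List[int], float]:
    """Map each weight to an integer in [-7, 7] that shares one float scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    q = [max(-7, min(7, round(w / scale))) for w in weights]
    return q, scale


def dequantize_block(q: List[int], scale: float) -> List[float]:
    """Recover approximate weights from the integers and the scale."""
    return [v * scale for v in q]


weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.05, 0.64]  # made-up sample
q, scale = quantize_block(weights)
restored = dequantize_block(q, scale)
max_err = max(abs(w - r) for w, r in zip(weights, restored))

print("4-bit codes:", q)
print(f"scale = {scale:.4f}, max reconstruction error = {max_err:.4f}")
```

Rounding to the nearest integer keeps each reconstruction error within half the scale; that small, bounded error is the "bit of accuracy" traded for a roughly fourfold reduction in storage compared with F16.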

Q: What are the factors affecting LLM performance?

A: LLM performance is influenced by several factors, including:

- Model Size: Larger models require more memory and computation and generally generate tokens more slowly.

- Hardware: GPUs with enough VRAM and memory bandwidth, or other specialized hardware, are crucial for efficient LLM execution.

- Quantization: Lower-bit quantization can improve speed and reduce memory use but might affect accuracy.

- Software Optimization: Efficient code and libraries are crucial for optimal performance.

Keywords

Llama3 70B, NVIDIA 4070 Ti 12GB, performance, LLM, token generation speed, quantization, GPU, Q4_K_M, F16, model size, hardware, software optimization.