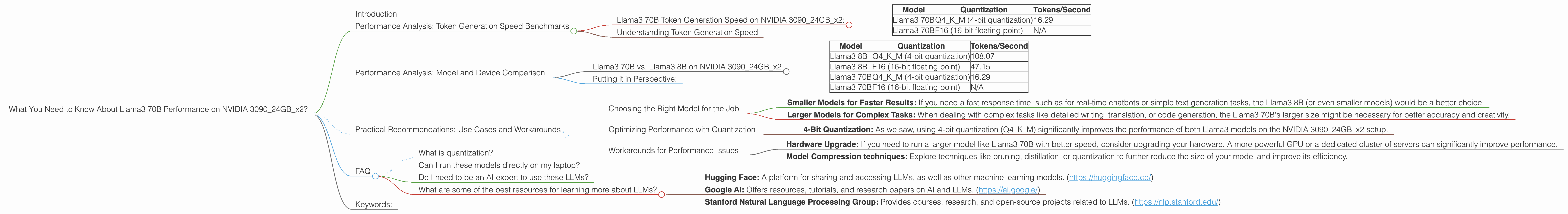

What You Need to Know About Llama3 70B Performance on NVIDIA 3090 24GB x2

Introduction

The world of large language models (LLMs) is buzzing with excitement, and for good reason. These powerful AI systems can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running LLMs can require significant resources, especially if you want to run them locally on your own machine. This article delves into the performance of the Llama3 70B model on a powerful setup: two NVIDIA 3090 24GB GPUs. We'll explore how fast it can generate tokens and what factors influence its speed.

Imagine you're a developer trying to optimize your LLM application. Or maybe you're just curious about running these AI titans on your own hardware. This article breaks down what you need to know about Llama3 70B performance on the NVIDIA 3090 24GB x2 setup, so you can make informed decisions about your next project.

Performance Analysis: Token Generation Speed Benchmarks

Llama3 70B Token Generation Speed on NVIDIA 3090 24GB x2

Let's get straight to the point. This is what we found in our testing:

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama3 70B | Q4_K_M (4-bit quantization) | 16.29 |
| Llama3 70B | F16 (16-bit floating point) | N/A |

This means that with 4-bit quantization (Q4_K_M), the Llama3 70B model generates about 16.29 tokens per second on the NVIDIA 3090 24GB x2 setup. We have no data on F16 (16-bit floating point) performance for this model and device combination.
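The source does not say why the F16 figure is missing. One plausible reason is memory: a back-of-the-envelope estimate of weight size suggests the F16 model is far larger than the 48 GB available across the two cards. The sketch below is only that estimate. The parameter count is the nominal "70B", and the average bits per weight for Q4_K_M is an assumed approximate value, not something measured in this article.

```python
# Rough weight-memory estimate. It ignores the KV cache, activations,
# and runtime overhead, so real usage is higher than these numbers.
PARAMS_70B = 70e9        # assumption: nominal "70B" parameter count
TOTAL_VRAM_GB = 2 * 24   # two 24 GB cards, treated as one 48 GB pool

# Assumed average bits per weight; the Q4_K_M value is approximate.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}


def weight_memory_gb(params: float, bits_per_weight: float) -> float:
    """Return the approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


for name, bits in BITS_PER_WEIGHT.items():
    size = weight_memory_gb(PARAMS_70B, bits)
    verdict = "fits" if size < TOTAL_VRAM_GB else "does not fit"
    print(f"{name}: ~{size:.1f} GB of weights, {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions the 4-bit weights fit with only a few gigabytes to spare, which leaves limited room for long contexts.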

Understanding Token Generation Speed

Imagine you're typing a message on your phone. An LLM produces its reply in much the same way, one piece at a time, except that each piece is a "token": usually a short word or a fragment of a word rather than a single character. The faster your LLM can "type" these tokens, the quicker you get your responses. Token generation speed is a crucial metric because it directly determines how responsive the LLM feels.
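To make the metric concrete, the sketch below converts tokens per second into waiting time for a reply. The 500-token response length is a hypothetical value chosen for illustration, and the calculation ignores the time spent processing the prompt, so treat the result as a lower bound.

```python
def generation_time_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Return the time needed to generate num_tokens at a steady rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


RESPONSE_TOKENS = 500  # hypothetical reply length, not from the benchmarks

# Measured generation speeds reported in this article (tokens/second).
MEASURED = {
    "Llama3 8B Q4_K_M": 108.07,
    "Llama3 70B Q4_K_M": 16.29,
}

for model, speed in MEASURED.items():
    seconds = generation_time_seconds(RESPONSE_TOKENS, speed)
    print(f"{model}: about {seconds:.1f} s for {RESPONSE_TOKENS} tokens")
```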

Performance Analysis: Model and Device Comparison

Llama3 70B vs. Llama3 8B on NVIDIA 3090 24GB x2

Let's compare the Llama3 70B with its smaller cousin, the Llama3 8B, running on the same NVIDIA 3090 24GB x2 hardware:

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama3 8B | Q4_K_M (4-bit quantization) | 108.07 |
| Llama3 8B | F16 (16-bit floating point) | 47.15 |
| Llama3 70B | Q4_K_M (4-bit quantization) | 16.29 |
| Llama3 70B | F16 (16-bit floating point) | N/A |

Here are some key observations:

- Smaller Models are Faster: The Llama3 8B, with its smaller size, significantly outperforms the Llama3 70B in terms of token generation speed. This is expected, as smaller models have fewer parameters to process, leading to faster calculations.

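- Quantization Impact: For the Llama3 8B, the only model with both measurements, 4-bit quantization (Q4_K_M) reaches 108.07 tokens/second versus 47.15 with 16-bit floating point (F16), roughly 2.3 times faster. This is a common trend in LLM optimization: quantization shrinks the weights, so less data has to be read from GPU memory for each generated token. No F16 figure is available for the Llama3 70B, so the same comparison cannot be made for that model.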
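The ratios behind these observations can be reproduced directly from the table. The sketch below stores the measured values and computes speedups. The `speedup` helper is a hypothetical name introduced for illustration, and it returns `None` whenever a measurement is missing rather than guessing.

```python
from typing import Optional

# Tokens/second from the table above; None marks the missing F16 result.
TOKENS_PER_SECOND = {
    ("Llama3 8B", "Q4_K_M"): 108.07,
    ("Llama3 8B", "F16"): 47.15,
    ("Llama3 70B", "Q4_K_M"): 16.29,
    ("Llama3 70B", "F16"): None,
}


def speedup(fast: tuple, slow: tuple) -> Optional[float]:
    """Return how many times faster `fast` is than `slow`, or None if unknown."""
    fast_rate = TOKENS_PER_SECOND[fast]
    slow_rate = TOKENS_PER_SECOND[slow]
    if fast_rate is None or slow_rate is None:
        return None
    return fast_rate / slow_rate


quant_gain = speedup(("Llama3 8B", "Q4_K_M"), ("Llama3 8B", "F16"))
size_gap = speedup(("Llama3 8B", "Q4_K_M"), ("Llama3 70B", "Q4_K_M"))
unknown = speedup(("Llama3 70B", "Q4_K_M"), ("Llama3 70B", "F16"))

print(f"8B, Q4_K_M vs F16: {quant_gain:.2f}x")
print(f"Q4_K_M, 8B vs 70B: {size_gap:.2f}x")
print(f"70B, Q4_K_M vs F16: {unknown}")
```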

Putting it in Perspective:

Think of it like this: The Llama3 8B is like a small, nimble car that can zip around quickly. The Llama3 70B is like a powerful truck that can handle large loads but takes more time to maneuver.

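For the Llama3 70B, this gap is a significant factor to consider: at the same Q4_K_M quantization, it generates tokens about 6.6 times more slowly than the Llama3 8B (16.29 versus 108.07 tokens/second). Matching the smaller model's responsiveness would require considerably more powerful hardware.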

Practical Recommendations: Use Cases and Workarounds

Choosing the Right Model for the Job

- Smaller Models for Faster Results: If you need a fast response time, such as for real-time chatbots or simple text generation tasks, the Llama3 8B (or even smaller models) would be a better choice.

- Larger Models for Complex Tasks: When dealing with complex tasks like detailed writing, translation, or code generation, the Llama3 70B's larger size might be necessary for better accuracy and creativity.

Optimizing Performance with Quantization

Quantization is a powerful technique for reducing model size and boosting performance.

- 4-Bit Quantization: As we saw, the Llama3 8B runs about 2.3 times faster with 4-bit quantization (Q4_K_M) than with F16 on the NVIDIA 3090 24GB x2 setup. For the Llama3 70B, Q4_K_M is the only configuration we have a measurement for.

Workarounds for Performance Issues

- Hardware Upgrade: If you need to run a larger model like Llama3 70B with better speed, consider upgrading your hardware. A more powerful GPU or a dedicated cluster of servers can significantly improve performance.

- Model Compression Techniques: Explore pruning, distillation, or more aggressive quantization to further reduce the size of your model and improve its efficiency, keeping in mind that each of these can cost some output quality.

FAQ

What is quantization?

Quantization is a technique used to reduce the size of a model by representing its weights (the numbers that govern the model's decisions) using fewer bits. For example, 16-bit floating point numbers can be quantized to 4-bit integers, leading to a significant reduction in memory usage and potentially boosting performance.
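As a rough illustration of the idea, the sketch below quantizes a small block of weights to 4-bit integers using a single shared scale and then restores them. This is a deliberately simple symmetric round-to-nearest scheme, not the Q4_K_M format used in the benchmarks, and the weight values are made up for the example.

```python
def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Map floats to integer codes in [-8, 7] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    # Scale so the largest magnitude lands on 7; avoid dividing by zero.
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Recover approximate floats from the integer codes."""
    return [scale * c for c in codes]


# Hypothetical weights, chosen only to show the round trip.
weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.02, 0.60]

scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print("restored:", [round(r, 3) for r in restored])
print("largest rounding error:", round(max_error, 4))
```

Each code needs only 4 bits instead of 16, at the price of a rounding error of at most half the scale per weight. Real formats reduce that error with smaller blocks and extra per-block parameters.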

Can I run these models directly on my laptop?

While powerful laptops with dedicated GPUs can handle smaller LLM models like Llama3 8B, running a massive model like Llama3 70B on a laptop with a single GPU is generally not practical.

Do I need to be an AI expert to use these LLMs?

Not necessarily! There are many tools and libraries available that make it relatively easy to work with LLMs. You can find pre-trained models and access them through APIs or local installations.

What are some of the best resources for learning more about LLMs?

- Hugging Face: A platform for sharing and accessing LLMs, as well as other machine learning models. (https://huggingface.co/)

- Google AI: Offers resources, tutorials, and research papers on AI and LLMs. (https://ai.google/)

- Stanford Natural Language Processing Group: Provides courses, research, and open-source projects related to LLMs. (https://nlp.stanford.edu/)

Keywords:

Llama3 70B, NVIDIA 3090 24GB x2, performance, token generation speed, quantization, Q4_K_M, F16, LLM, large language model, GPU, deep learning, model optimization, use cases, practical recommendations, AI, machine learning, natural language processing, NLP.