What You Need to Know About Llama3 70B Performance on the NVIDIA 3080 Ti 12GB

Introduction

The world of large language models (LLMs) is exploding, with new models and advancements appearing seemingly every day. One of the key challenges in this fast-paced field is understanding how these powerful models perform on different hardware. Today we're going to look at Llama3 70B and what we can say about running it on a popular graphics card, the NVIDIA 3080 Ti 12GB.

What's the big deal with LLMs and why does hardware matter? Think of LLMs as super-smart AI assistants capable of generating text, translating languages, writing creative content, and answering your questions in an informative way. However, these models are computationally demanding and need a lot of fast memory to run efficiently. This is where the NVIDIA 3080 Ti 12GB comes in: a high-end consumer graphics card with 12 GB of video memory.

In this article, we'll explore the performance of Llama3 70B on this specific GPU, breaking down the key metrics and providing practical insights for developers and enthusiasts. Buckle up!

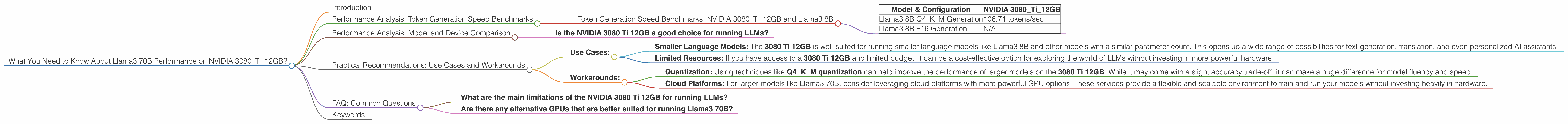

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA 3080 Ti 12GB and Llama3 8B

To understand the performance of Llama3 70B, we must first delve into the performance of its smaller sibling, Llama3 8B, which is a great starting point.

Why Llama3 8B? We have performance data available for Llama3 8B on the NVIDIA 3080 Ti 12GB, but unfortunately, the numbers for Llama3 70B are missing. While we're eager to dive into the 70B model, we can still extract valuable insights from the smaller model's performance.

Let's look at the numbers!

| Model & Configuration | NVIDIA 3080 Ti 12GB |
|---|---|
| Llama3 8B Q4_K_M Generation | 106.71 tokens/sec |
| Llama3 8B F16 Generation | N/A |

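What does this show us? This data indicates that Llama3 8B, using Q4_K_M quantization, can generate 106.71 tokens per second on the NVIDIA 3080 Ti 12GB. That is far faster than anyone can read, so for interactive use the 8B model is comfortably quick on this card. No F16 figure is available, most likely because the 8B model's 16-bit weights alone come to about 16 GB, more than the card's 12 GB of memory.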
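A tokens-per-second figure is just the number of tokens produced divided by the wall-clock time it took. The sketch below shows one way such a number could be measured. It is not the benchmark procedure behind the table, and `fake_generate` is a hypothetical stand-in for a real model's streaming output.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Time one generation run and return tokens produced per second."""
    start = time.perf_counter()
    n_tokens = sum(1 for _ in generate())  # consume the stream, counting tokens
    elapsed = time.perf_counter() - start
    return n_tokens / elapsed


def fake_generate(n: int = 50, delay_s: float = 0.001):
    """Hypothetical stand-in for a model: yields n dummy tokens with a fixed delay."""
    for i in range(n):
        time.sleep(delay_s)
        yield f"tok{i}"


if __name__ == "__main__":
    print(f"{tokens_per_second(fake_generate):.1f} tokens/sec")
```

To time a real model, you would pass a function that streams tokens from your inference library in place of `fake_generate`.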

Quantization: A Quick Explanation

Imagine a model like a giant recipe with billions of ingredients. Each ingredient is represented by a number. Quantization is like simplifying this recipe by rounding the ingredient quantities. For instance, instead of "2.345 grams of flour", we might simply say "2 grams". This slightly reduces the accuracy but makes the recipe much smaller to store and faster to prepare.
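To make the rounding idea concrete, here is a minimal sketch of 4-bit quantization on a small block of weights. It uses plain symmetric round-to-nearest with one scale per block, which is a simplification chosen for illustration and not the actual Q4_K_M scheme. The function names and sample weights are made up for this example.

```python
def quantize_block(weights, bits=4):
    """Symmetric round-to-nearest quantization of one block of floats."""
    qmax = 2 ** (bits - 1) - 1  # 7 for signed 4-bit integers
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Each weight becomes a small integer in [-qmax, qmax].
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale


def dequantize_block(q, scale):
    """Recover approximate floats from the integers and the shared scale."""
    return [v * scale for v in q]


if __name__ == "__main__":
    weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.44, 0.02]  # illustrative values
    q, scale = quantize_block(weights)
    restored = dequantize_block(q, scale)
    for w, r in zip(weights, restored):
        print(f"{w:+.3f} -> {r:+.3f}")
```

Each weight is stored as a 4-bit integer plus a shared scale, so the block takes about a quarter of the space of 16-bit floats, and no restored value is off by more than half a quantization step.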

A Real-World Analogy

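Think of it like this: a fast typist manages around 100 words per minute. Our Llama3 8B model, running on the NVIDIA 3080 Ti 12GB, produces around 6,400 tokens per minute (106.71 × 60). A token is usually a bit shorter than a word, so that works out to something like 4,800 words per minute if we assume the common rule of thumb of about 0.75 English words per token.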
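The per-minute figure is simply the benchmark rate multiplied by 60. The sketch below reproduces that arithmetic and also converts tokens to approximate words. The 0.75 words-per-token ratio is an assumed rule of thumb for English text, not a value from the benchmark, and the real ratio depends on the tokenizer and the text.

```python
TOKENS_PER_SEC = 106.71  # Llama3 8B Q4_K_M generation speed from the table
WORDS_PER_TOKEN = 0.75   # assumption: rough average for English text


def tokens_per_minute(tokens_per_sec: float) -> float:
    """Convert a per-second generation rate to a per-minute rate."""
    return tokens_per_sec * 60


def words_per_minute(tokens_per_sec: float, words_per_token: float = WORDS_PER_TOKEN) -> float:
    """Estimate words per minute from a token rate and an assumed ratio."""
    return tokens_per_minute(tokens_per_sec) * words_per_token


if __name__ == "__main__":
    print(f"tokens/min: {tokens_per_minute(TOKENS_PER_SEC):.1f}")
    print(f"words/min (estimated): {words_per_minute(TOKENS_PER_SEC):.1f}")
```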

Performance Analysis: Model and Device Comparison

Is the NVIDIA 3080 Ti 12GB a good choice for running LLMs?

The NVIDIA 3080 Ti 12GB is a powerful GPU with a lot to offer. However, we need more data to determine whether it is a good fit for Llama3 70B. The missing data points for the Llama3 70B model prevent us from making a direct comparison.

What's blocking us? Mostly memory. The card has 12 GB of VRAM, while the weights of a 70-billion-parameter model take roughly 35 GB even at 4 bits per weight (70 billion × 0.5 bytes), and about 140 GB at 16 bits. Imagine trying to fit 70 billion ingredients (model parameters) into a kitchen only designed for 8 billion! Running Llama3 70B would take GPUs with more memory, or techniques such as splitting the model across several GPUs or offloading part of it to CPU memory, which typically costs a lot of speed.
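A quick back-of-the-envelope check shows why model size matters so much on a 12 GB card. The sketch below estimates the memory needed for the weights alone from the nominal parameter count and the bits stored per weight. It ignores the KV cache, activations, and runtime overhead, and real quantized files such as Q4_K_M use somewhat more than 4 bits per weight on average, so treat the results as lower bounds.

```python
VRAM_GB = 12.0  # NVIDIA 3080 Ti


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Memory for the weights alone, in GB (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits_in_vram(n_params: float, bits_per_weight: float, vram_gb: float = VRAM_GB) -> bool:
    """True if the weights alone are smaller than the available VRAM."""
    return weight_memory_gb(n_params, bits_per_weight) < vram_gb


if __name__ == "__main__":
    models = [("Llama3 8B", 8e9), ("Llama3 70B", 70e9)]  # nominal parameter counts
    formats = [("F16", 16), ("4-bit", 4)]
    for name, n_params in models:
        for label, bits in formats:
            gb = weight_memory_gb(n_params, bits)
            verdict = "fits" if fits_in_vram(n_params, bits) else "does not fit"
            print(f"{name} {label}: {gb:.0f} GB of weights, {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions only the 4-bit 8B model fits on the card, which is consistent with the table having a Q4_K_M result but no F16 result.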

Practical Recommendations: Use Cases and Workarounds

So, while we don't have the exact Llama3 70B performance data for the NVIDIA 3080 Ti 12GB, we can still draw some practical conclusions.

Use Cases:

- Smaller Language Models: The 3080 Ti 12GB is well-suited for running smaller language models like Llama3 8B and other models with a similar parameter count. This opens up a wide range of possibilities for text generation, translation, and even personalized AI assistants.

- Limited Resources: If you have access to a 3080 Ti 12GB and a limited budget, it can be a cost-effective option for exploring the world of LLMs without investing in more powerful hardware.

Workarounds:

- Quantization: Formats like Q4_K_M shrink a model's memory footprint and usually speed up generation, at the cost of a slight accuracy trade-off. This is what lets Llama3 8B run comfortably on the 3080 Ti 12GB. For Llama3 70B, quantization alone is not enough: even at 4 bits per weight the model needs roughly 35 GB, about three times the card's memory.

- Cloud Platforms: For larger models like Llama3 70B, consider leveraging cloud platforms with more powerful GPU options. These services provide a flexible and scalable environment to train and run your models without investing heavily in hardware.

FAQ: Common Questions

What are the main limitations of the NVIDIA 3080 Ti 12GB for running LLMs?

The NVIDIA 3080 Ti 12GB is a great GPU for gaming and for running smaller models. However, when it comes to running the largest LLMs, its 12 GB of memory is the main limitation, with raw compute a secondary concern. Larger models like Llama3 70B simply do not fit in the memory the 3080 Ti 12GB provides.

Are there any alternative GPUs that are better suited for running Llama3 70B?

Yes, there are! GPUs with higher memory capacity, such as the NVIDIA A100 (available with 40 GB or 80 GB) or H100 (80 GB), are often preferred for running larger LLMs. These data-center GPUs can handle the computation and memory requirements of these models far better than a 12 GB consumer card, though the largest models may still need quantization or more than one GPU.
