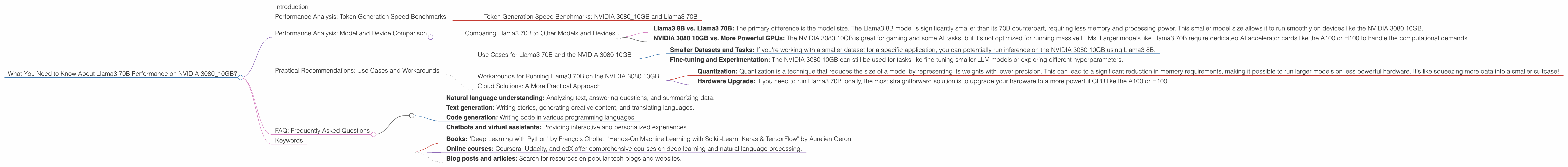

What You Need to Know About Llama3 70B Performance on the NVIDIA 3080 10GB

Introduction

The world of large language models (LLMs) is exploding, with new models and advancements being announced daily. One of the most exciting developments is the ability to run these powerful models locally on your own hardware, opening up a world of possibilities for developers, researchers, and anyone who wants to experiment with AI. But before you dive into the deep end of local LLM deployment, you need to understand the performance limitations of your hardware. This article will explore the performance of the Llama3 70B model specifically on the NVIDIA 3080 10GB graphics card, helping you make informed decisions about your LLM projects.

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA 3080 10GB and Llama3 70B

Let’s get down to the nitty-gritty. The real test of an LLM's power is its ability to generate text. The NVIDIA 3080 10GB card is a popular choice for AI enthusiasts, but how does it handle the demands of the Llama3 70B model?

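Unfortunately, there's no benchmark data available for the Llama3 70B model on the NVIDIA 3080 10GB card, and the reason is memory. At 16-bit precision, 70 billion parameters take roughly 140 GB for the weights alone, about fourteen times the card's 10 GB of VRAM. It's a bit like trying to fit an elephant in a hamster cage - the model is just too big for this particular GPU.

This means that the NVIDIA 3080 10GB is not suitable for running Llama3 70B on its own. You'll need far more GPU memory, which in practice means data-center GPUs like the A100 or H100. At 16-bit precision even a single 80 GB card cannot hold the weights, so the model is usually split across several GPUs or quantized first.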
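To make the size mismatch concrete, here is a minimal back-of-the-envelope sketch. It assumes a nominal 70 billion parameters, treats 1 GB as 10^9 bytes, and counts the weights only; the function name `weight_memory_gb` is made up for this illustration. Real usage is higher once activations and the KV cache are included.

```python
def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory for the model weights alone, in GB (10^9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


LLAMA3_70B_PARAMS = 70e9  # nominal count implied by the model name (assumption)
RTX_3080_VRAM_GB = 10     # the card discussed in this article

for label, bits in [("FP16", 16), ("8-bit", 8), ("4-bit", 4)]:
    need = weight_memory_gb(LLAMA3_70B_PARAMS, bits)
    verdict = "fits" if need <= RTX_3080_VRAM_GB else "does not fit"
    print(f"{label}: ~{need:.0f} GB of weights, {verdict} in {RTX_3080_VRAM_GB} GB")
```

Even the most aggressive precision in this sketch leaves the weights several times larger than the card's memory.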

Performance Analysis: Model and Device Comparison

Comparing Llama3 70B to Other Models and Devices

To understand why running Llama3 70B on the NVIDIA 3080 10GB might be a challenge, let's compare it to smaller LLM models and other devices.

- Llama3 8B vs. Llama3 70B: The primary difference is the model size. The Llama3 8B model has roughly one-ninth as many parameters as its 70B counterpart, so it needs far less memory and processing power. At 16-bit precision its weights still take about 16 GB, more than the card's 10 GB, but a 4-bit quantized version (roughly 4 to 5 GB of weights) can fit on devices like the NVIDIA 3080 10GB.

- NVIDIA 3080 10GB vs. More Powerful GPUs: The NVIDIA 3080 10GB is great for gaming and some AI tasks, but its 10 GB of VRAM is the limiting factor for massive LLMs. Larger models like Llama3 70B call for data-center GPUs like the A100 or H100, which offer far more memory per card and can be combined to handle the memory and computational demands.
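The comparison above can be sketched as a rough count of how many cards it would take just to hold each model's 16-bit weights. This is an illustration under stated assumptions, not a deployment guide: parameter counts are the nominal 8 and 70 billion, the A100 and H100 are taken to be their 80 GB variants (the article does not say which), the weights are assumed to split perfectly across cards, and activations and the KV cache are ignored. The helper names are hypothetical.

```python
import math


def weight_memory_gb(num_params: float, bits_per_weight: int = 16) -> float:
    """Approximate memory for the weights alone, in GB (10^9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


def gpus_needed(num_params: float, vram_gb: float, bits_per_weight: int = 16) -> int:
    """Minimum number of cards whose combined VRAM covers the weights."""
    return math.ceil(weight_memory_gb(num_params, bits_per_weight) / vram_gb)


# Nominal parameter counts and assumed VRAM sizes (see the lead-in above).
MODELS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}
GPUS = {"RTX 3080 10GB": 10, "A100 80GB": 80, "H100 80GB": 80}

for model, params in MODELS.items():
    for gpu, vram in GPUS.items():
        count = gpus_needed(params, vram)
        print(f"{model} at FP16 on {gpu}: at least {count} card(s)")
```

Treat the counts as lower bounds; a real setup needs headroom on every card.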

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 70B and the NVIDIA 3080 10GB

While the NVIDIA 3080 10GB isn't the ideal hardware for running the Llama3 70B model, there are still some creative ways you can leverage it for your LLM projects.

- Smaller Models and Tasks: If your application doesn't need the full capability of a 70B model, you can potentially run inference on the NVIDIA 3080 10GB using a quantized Llama3 8B instead.

- Fine-tuning and Experimentation: The NVIDIA 3080 10GB can still be used for tasks like fine-tuning smaller LLMs or exploring different hyperparameters, as long as the model and its training state fit in 10 GB.

Workarounds for Running Llama3 70B on the NVIDIA 3080 10GB

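- Quantization: Quantization is a technique that reduces the size of a model by representing its weights with lower precision. This can lead to a significant reduction in memory requirements, making it possible to run larger models on less powerful hardware. It's like squeezing more data into a smaller suitcase! For Llama3 70B, though, even 4-bit weights take roughly 35 GB, so quantization alone won't fit the model into 10 GB of VRAM. The remainder would have to be offloaded to system RAM, at a steep cost in speed.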

- Hardware Upgrade: If you need to run Llama3 70B locally, the most straightforward solution is to upgrade to hardware with much more GPU memory, such as an A100 or H100, or several of them if you want to run the model at 16-bit precision.
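To illustrate the quantization workaround above, here is a toy sketch of one simple scheme, absmax 8-bit quantization, written in plain Python. It is not the method any particular Llama3 release or inference library uses; production schemes (4-bit, group-wise scaling, and so on) are more elaborate. The function names and sample weights are made up for this example.

```python
def quantize_absmax_int8(weights):
    """Map floats to integers in [-127, 127] using a single shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the stored integers."""
    return [q * scale for q in quantized]


# Made-up sample weights, purely for illustration.
weights = [0.42, -1.27, 0.05, 0.88, -0.31]

quantized, scale = quantize_absmax_int8(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("stored integers:", quantized)
print("scale:", scale)
print("largest round-trip error:", max_error)
```

Each weight now needs one byte instead of the two it takes at 16-bit precision, at the price of a small rounding error bounded by half the scale.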

Cloud Solutions: A More Practical Approach

For most users, cloud-based solutions are a much more practical approach to running large LLMs like Llama3 70B. Cloud providers offer high-performance AI infrastructure with a range of GPU options, making it easy to scale your LLM projects without worrying about hardware limitations.

FAQ: Frequently Asked Questions

Q: What's the difference between a 7B and a 70B model?

A: The number represents the number of parameters in the model, in billions. A 70B model has ten times as many parameters as a 7B model, which generally makes it more capable but also means it needs roughly ten times the memory and considerably more processing power. It's like comparing a small car to a massive truck.

Q: What is quantization?

A: Quantization is a technique that reduces the precision of a model's weights, for example from 16 bits to 8 or 4 bits each, making the model smaller and often faster. Think of it like reducing the resolution of a photo - it takes up less space but might lose some detail.

Q: What are some good examples of using LLMs?

A: LLMs can be used for a variety of tasks, including:

- Natural language understanding: Analyzing text, answering questions, and summarizing data.

- Text generation: Writing stories, generating creative content, and translating languages.

- Code generation: Writing code in various programming languages.

- Chatbots and virtual assistants: Providing interactive and personalized experiences.

Q: How can I learn more about LLMs?

A: There are many resources available online to learn about LLMs, including:

- Books: "Deep Learning with Python" by François Chollet, "Hands-On Machine Learning with Scikit-Learn, Keras & TensorFlow" by Aurélien Géron

- Online courses: Coursera, Udacity, and edX offer comprehensive courses on deep learning and natural language processing.

- Blog posts and articles: Search for resources on popular tech blogs and websites.

Keywords

Large language model, LLM, Llama3, Llama3 70B, NVIDIA 3080 10GB, GPU, performance, token generation speed, model size, quantization, cloud computing, AI, deep learning, natural language processing.