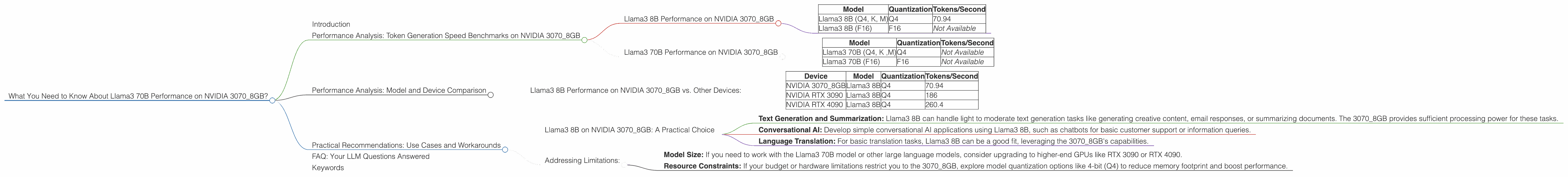

What You Need to Know About Llama3 70B Performance on NVIDIA 3070 8GB?

Introduction

The world of large language models (LLMs) is exploding, with new models and advancements emerging at breakneck speed. One of the most exciting developments is the arrival of Llama3, a family of powerful LLMs from Meta AI. But with great power comes the need for equally powerful hardware.

This article looks at how Llama3 70B, and its smaller sibling Llama3 8B, perform on a popular graphics card: the NVIDIA 3070_8GB. We'll examine the available performance metrics, explain what the gaps in the data suggest, and provide practical recommendations for use cases.

Consider this your guide to understanding how this powerful combination can shape your LLM applications. Buckle up, fellow developers!

Performance Analysis: Token Generation Speed Benchmarks on NVIDIA 3070_8GB

Llama3 8B Performance on NVIDIA 3070_8GB

Let's start with the smaller sibling - Llama3 8B. This model, while less resource-intensive than its 70B counterpart, still packs a punch.

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama3 8B (Q4_K_M) | Q4 | 70.94 |
| Llama3 8B (F16) | F16 | Not Available |

Key Observations:

- Q4 Quantization: With Q4 quantization, Llama3 8B reaches 70.94 tokens per second. That is comfortably fast for interactive text generation, even on a mid-range GPU like the 3070_8GB.

- F16 (Half Precision): No data is available for F16 on the 3070_8GB. F16 stores every weight in 16 bits rather than quantizing it, so the 8B weights alone take roughly 16 GB, about twice the card's 8 GB of VRAM. That most likely explains the missing result, and F16 would not be expected to generate tokens faster than Q4.
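To make the Q4 figure more concrete, here is a minimal sketch that converts tokens per second into an approximate wait time for a reply. The function name `generation_seconds` is made up for illustration, and the estimate covers token generation only; it assumes the measured rate stays constant and ignores the time spent processing the prompt.

```python
# Measured Q4 generation speed for Llama3 8B on the 3070_8GB (from the table above).
Q4_TOKENS_PER_SECOND = 70.94


def generation_seconds(num_tokens, tokens_per_second=Q4_TOKENS_PER_SECOND):
    """Estimate how long it takes to generate num_tokens at a steady rate.

    Assumption: generation speed is constant and prompt processing is ignored.
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


if __name__ == "__main__":
    for n in (100, 500, 2000):
        print(f"{n:>5} tokens -> {generation_seconds(n):.1f} s")
```

At this rate a 500-token reply takes about 7 seconds, which is why the 8B model feels responsive on this card.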

Llama3 70B Performance on NVIDIA 3070_8GB

The 70B model is a behemoth, capable of generating highly complex and nuanced text.

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama3 70B (Q4_K_M) | Q4 | Not Available |
| Llama3 70B (F16) | F16 | Not Available |

Key Observations:

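- Lack of Data: No results are available for Llama3 70B on the 3070_8GB at either Q4 or F16. The most likely reason is memory. At roughly 4 to 5 bits per weight, the Q4 weights of a 70B-parameter model come to around 40 GB, and F16 needs about 140 GB, both far beyond the card's 8 GB of VRAM. It is also possible that this configuration was simply never tested, or that the results were not published.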
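The memory argument can be checked with back-of-the-envelope arithmetic. The sketch below is an estimate under stated assumptions, not a measurement: the parameter counts are the nominal 8 billion and 70 billion, the Q4 cost of 4.5 bits per weight is an assumed average, and the helper names are invented for this example. It counts weights only, so real usage (with the KV cache and activations) would be higher.

```python
VRAM_GB = 8.0  # memory on the NVIDIA 3070_8GB

# Assumed average storage cost per weight. The Q4 value is an approximation.
BITS_PER_WEIGHT = {"Q4": 4.5, "F16": 16.0}

# Nominal parameter counts (assumption: rounded to 8B and 70B).
PARAMS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}


def weight_size_gb(num_params, bits_per_weight):
    """Size of the model weights in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


def fits_in_vram(num_params, bits_per_weight, vram_gb=VRAM_GB):
    """True if the weights alone fit in VRAM (ignores KV cache and activations)."""
    return weight_size_gb(num_params, bits_per_weight) <= vram_gb


if __name__ == "__main__":
    for model, params in PARAMS.items():
        for fmt, bits in BITS_PER_WEIGHT.items():
            size = weight_size_gb(params, bits)
            verdict = "fits" if fits_in_vram(params, bits) else "does not fit"
            print(f"{model} {fmt}: ~{size:.1f} GB, {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions, only Llama3 8B at Q4 fits on the card, which matches the one configuration that has a benchmark result.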

Performance Analysis: Model and Device Comparison

Llama3 8B Performance on NVIDIA 3070_8GB vs. Other Devices:

Data for other devices is limited, but we can compare Llama3 8B on the 3070_8GB against the same model on two other popular GPUs.

| Device | Model | Quantization | Tokens/Second |
|---|---|---|---|
| NVIDIA 3070_8GB | Llama3 8B | Q4 | 70.94 |
| NVIDIA RTX 3090 | Llama3 8B | Q4 | 186 |
| NVIDIA RTX 4090 | Llama3 8B | Q4 | 260.4 |

Key Observations:

- Higher-End GPUs Outperform: The 3070_8GB lags well behind both cards, delivering roughly 38% of the RTX 3090's throughput and 27% of the RTX 4090's. GPU capability clearly matters for LLM performance, and for larger models like Llama3 70B, memory capacity matters just as much as speed.

- Relative Performance: At about 71 tokens per second, the 3070_8GB still delivers respectable performance for a mid-range GPU.
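The gap between the cards is easier to judge as a ratio. This short sketch uses only the three numbers from the comparison table; the function name `relative_throughput` is made up for illustration.

```python
# Tokens per second for Llama3 8B at Q4, copied from the comparison table.
RESULTS = {
    "NVIDIA 3070_8GB": 70.94,
    "NVIDIA RTX 3090": 186.0,
    "NVIDIA RTX 4090": 260.4,
}


def relative_throughput(results, baseline):
    """Return each device's speed as a multiple of the baseline device."""
    base = results[baseline]
    return {device: tps / base for device, tps in results.items()}


ratios = relative_throughput(RESULTS, "NVIDIA 3070_8GB")

if __name__ == "__main__":
    for device, ratio in ratios.items():
        print(f"{device}: {ratio:.2f}x the 3070_8GB")
```

By this measure the RTX 3090 is about 2.6 times as fast as the 3070_8GB and the RTX 4090 about 3.7 times as fast.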

Practical Recommendations: Use Cases and Workarounds

Llama3 8B on NVIDIA 3070_8GB: A Practical Choice

While the 3070_8GB is not a realistic choice for Llama3 70B, it's a viable option for Llama3 8B.

Here's where it shines:

- Text Generation and Summarization: Llama3 8B can handle light to moderate text generation tasks like generating creative content, email responses, or summarizing documents. The 3070_8GB provides sufficient processing power for these tasks.

- Conversational AI: Develop simple conversational AI applications using Llama3 8B, such as chatbots for basic customer support or information queries.

- Language Translation: For basic translation tasks, Llama3 8B can be a good fit, leveraging the 3070_8GB's capabilities.

Addressing Limitations:

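- Model Size: Llama3 70B needs far more memory than the 3070_8GB offers. Even a 24 GB card such as the RTX 3090 or RTX 4090 cannot hold the roughly 40 GB of Q4 weights on its own, so plan for multiple GPUs, a data-center card with more memory, or offloading part of the model to system RAM at a significant cost in speed.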

- Resource Constraints: If your budget or hardware limitations restrict you to the 3070_8GB, explore model quantization options like 4-bit (Q4) to reduce memory footprint and boost performance.

FAQ: Your LLM Questions Answered

Q: What are the best GPUs for running large language models? A: It depends on the model size. For Llama3 8B, a consumer card such as the NVIDIA RTX 4090 offers ample speed and memory. For Llama3 70B, memory capacity is the main constraint, so data-center GPUs like the A100 (available with 40 GB or 80 GB of memory), or several consumer GPUs combined, are the more realistic options.

Q: What is quantization, and why is it important? A: Quantization is a technique used to compress LLMs by reducing the precision of their weights, for example from 16 bits down to about 4 bits each. This significantly reduces memory usage, enabling models to run on hardware with less memory, like the 3070_8GB. Think of it as compressing a large image for a smaller phone screen without losing too much detail: it saves space and resources!
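To show the idea in code, here is a deliberately simplified sketch of 4-bit quantization on a small block of weights. It is a toy illustration, not the Q4_K_M scheme used for the benchmarks above: the function names and sample weights are invented, and real formats use more elaborate block layouts.

```python
def quantize_block(weights, bits=4):
    """Map floats to signed integer codes that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed codes
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest code and clamp to the representable range [-8, 7].
    codes = [max(-qmax - 1, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate float weights from the integer codes."""
    return [c * scale for c in codes]


# Hypothetical sample weights, chosen only for illustration.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.44, 0.05]
codes, scale = quantize_block(weights)
restored = dequantize_block(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

if __name__ == "__main__":
    print("codes:   ", codes)
    print("restored:", [round(r, 3) for r in restored])
    print(f"largest rounding error: {max_error:.4f}")
```

Each weight is stored as a small integer plus one shared scale per block, which is where the memory savings come from. The price is a small rounding error in every weight, at most half the scale in this sketch.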

Q: What are LLMs good for? A: LLMs are revolutionizing various fields. They can generate human-quality text, translate languages, summarize documents, write different kinds of creative content, and even answer questions in an informative way.

Q: How do I choose the right LLM for my project? A: Consider factors like the size and complexity of the model, the available hardware, and the specific use case. For simpler tasks on less powerful hardware, smaller models like Llama3 8B could be sufficient. For more demanding tasks, you might need a larger model and a high-performance GPU.

Keywords

Llama3 70B, NVIDIA 3070_8GB, GPU, Performance, Token Generation Speed, Quantization, Q4, F16, LLM, Large Language Models, Text Generation, Summarization, Conversational AI, Use Cases