What You Need to Know About Llama3 70B Performance on Apple M3 Max

Introduction

The world of large language models (LLMs) is evolving rapidly. These AI systems are powering everything from chatbots to code generators and are increasingly being used in local environments. One of the key factors determining an LLM's performance is the hardware it's running on.

This article dives deep into the performance of the Llama3 70B model on the Apple M3 Max chip. We'll explore its token generation speed benchmarks, compare it with smaller Llama models running on the same chip, and offer practical recommendations for getting the most out of this powerhouse combination.

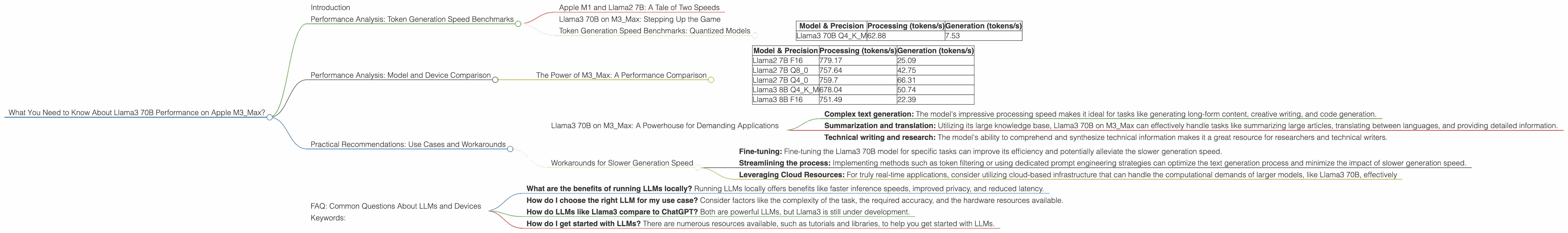

Performance Analysis: Token Generation Speed Benchmarks

Apple M3 Max and Llama2 7B: A Tale of Two Speeds

For those unfamiliar, think of tokens as the building blocks of text: whole words, pieces of words, or punctuation marks. LLM benchmarks report two speeds. Prompt processing measures how quickly the model reads the tokens you give it, which determines how long you wait before the answer starts. Token generation measures how quickly the model produces new tokens, which determines how fast the answer appears once it has started.

Let's start with a familiar baseline: Llama2 7B on the Apple M3 Max. With F16 precision, this combination generates text at 25.09 tokens per second (tokens/s).

How fast is that? Because a token is often only part of a word, 25 tokens/s works out to somewhat fewer than 25 words per second, which is still well ahead of most people's reading speed.
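
Both speeds can be read off a streaming response: the delay before the first token is dominated by prompt processing, and the pace of the tokens after it is the generation speed. The sketch below measures both from any iterable of tokens. `measure_stream`, `FakeClock`, and `fake_stream` are hypothetical helpers written for illustration, not part of any benchmarking tool; the simulated stream uses the Llama2 7B F16 figures from the M3 Max comparison table (779.17 tokens/s processing, 25.09 tokens/s generation) and an arbitrary 1,000-token prompt.

```python
import time
from typing import Callable, Iterable, Iterator, Tuple


def measure_stream(tokens: Iterable[str],
                   clock: Callable[[], float] = time.perf_counter) -> Tuple[float, float]:
    """Return (seconds to first token, generation speed in tokens/s) for a token stream."""
    start = clock()
    first_at = None
    count = 0
    for _ in tokens:
        count += 1
        if first_at is None:
            first_at = clock()  # prompt processing has finished by now
    end = clock()
    if first_at is None or count < 2:
        raise ValueError("need at least two tokens to measure generation speed")
    # Tokens after the first one, divided by the time it took to produce them.
    return first_at - start, (count - 1) / (end - first_at)


class FakeClock:
    """Manually advanced clock so the example is deterministic (hypothetical helper)."""

    def __init__(self) -> None:
        self.now = 0.0

    def __call__(self) -> float:
        return self.now


def fake_stream(clock: FakeClock, n_tokens: int,
                prefill_s: float, per_token_s: float) -> Iterator[str]:
    """Simulated model: a prompt-processing delay, then one token every per_token_s seconds."""
    clock.now += prefill_s
    yield "tok"
    for _ in range(n_tokens - 1):
        clock.now += per_token_s
        yield "tok"


if __name__ == "__main__":
    clock = FakeClock()
    stream = fake_stream(clock, 100, prefill_s=1000 / 779.17, per_token_s=1 / 25.09)
    ttft, rate = measure_stream(stream, clock)
    print(f"time to first token: {ttft:.2f} s, generation: {rate:.2f} tokens/s")
```

With a real model you would pass its token iterator and let `clock` default to `time.perf_counter`; the fake clock only exists to make the example repeatable.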

Llama3 70B on M3 Max: Stepping Up the Game

Now, let's crank up the model size with Llama3 70B on the M3 Max. With 70 billion parameters, Llama3 70B is ten times the size of Llama2 7B.

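However, because of the model's sheer size, running Llama3 70B at full F16 precision on the M3 Max is not feasible: at 2 bytes per parameter, the weights alone need about 140 GB, more than the M3 Max's maximum of 128 GB of unified memory. Instead, we focus on its performance with a quantized model.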
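
The memory arithmetic is short: weight memory is the parameter count times the bits stored per weight. The sketch below assumes exactly 70 billion parameters and nominal bits per weight (16 for F16; 8.5 and 4.5 for the Q8_0 and Q4_0 block formats, which store one 16-bit scale per 32 weights). It ignores the KV cache, activations, and the memory macOS itself needs, so real requirements are higher.

```python
PARAMS = 70e9               # assumption: exactly 70 billion weights
M3_MAX_MEMORY_CAP_GB = 128  # largest unified-memory option for the M3 Max

# Nominal storage cost per weight, in bits.
NOMINAL_BITS = {
    "F16": 16.0,
    "Q8_0": 8.5,  # 32 eight-bit values + one 16-bit scale per block
    "Q4_0": 4.5,  # 32 four-bit values + one 16-bit scale per block
}


def weight_gb(params: float, bits_per_weight: float) -> float:
    """Memory for the weights alone, in decimal gigabytes."""
    return params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    for name, bits in NOMINAL_BITS.items():
        size = weight_gb(PARAMS, bits)
        verdict = "fits within" if size < M3_MAX_MEMORY_CAP_GB else "exceeds"
        print(f"{name:5s} {size:6.1f} GB -> {verdict} {M3_MAX_MEMORY_CAP_GB} GB")
```

F16 comes to 140 GB and does not fit, while Q4_0 needs about 39 GB. Q4_K_M, the format benchmarked below, averages slightly more bits per weight than Q4_0 because it keeps some tensors at higher precision, so its weights are somewhat larger but still far below the limit.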

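Quantization is a bit like compressing a video file: it shrinks the model by storing each weight with fewer bits (roughly 4 to 5 bits instead of 16 for the Q4 formats below), accepting some loss in accuracy in exchange. That is what allows a large model to run on a device with limited memory.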
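
To make the idea concrete, here is a simplified 4-bit block quantizer. It is loosely modeled on the block formats used by llama.cpp (whose Q4_0 format also groups 32 weights under one scale factor), but it is an illustrative sketch, not llama.cpp's actual Q4_0 or Q4_K_M code. The 16-bit storage of each scale is an assumption used only for the bits-per-weight figure, and random numbers stand in for real model weights.

```python
import random

BLOCK_SIZE = 32  # weights that share one scale factor
# 32 four-bit values plus one scale (assumed stored as 16 bits) per block.
BITS_PER_WEIGHT = (BLOCK_SIZE * 4 + 16) / BLOCK_SIZE


def quantize_block(block):
    """Map floats to integers in [-8, 7] plus one scale factor for the block."""
    amax = max(abs(x) for x in block)
    scale = amax / 7 if amax > 0 else 1.0
    q = [max(-8, min(7, round(x / scale))) for x in block]
    return scale, q


def quantize(weights):
    """Split the weights into blocks and quantize each block independently."""
    return [quantize_block(weights[i:i + BLOCK_SIZE])
            for i in range(0, len(weights), BLOCK_SIZE)]


def dequantize(blocks):
    """Recover approximate floats: each integer times its block's scale."""
    return [scale * v for scale, q in blocks for v in q]


if __name__ == "__main__":
    random.seed(0)
    weights = [random.gauss(0.0, 0.02) for _ in range(1024)]
    restored = dequantize(quantize(weights))
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"bits per weight: {BITS_PER_WEIGHT}, max abs error: {max_err:.5f}")
```

Each weight now costs 4.5 bits instead of 16, about a 3.6x reduction, and every restored value lies within half a scale step of the original. Per-block scales are the key design choice: one outlier only coarsens the 32 weights in its own block rather than the whole tensor.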

Token Generation Speed Benchmarks: Quantized Models

| Model & Precision | Prompt processing (tokens/s) | Text generation (tokens/s) |
|---|---|---|
| Llama3 70B Q4_K_M | 62.88 | 7.53 |

Take a closer look at the Llama3 70B performance with Q4_K_M quantization:

- Processing speed: 62.88 tokens/s. This is far below the 779.17 tokens/s that Llama2 7B F16 reaches on the same chip, but it still means a 1,000-token prompt is read in about 16 seconds.

- Generation speed: 7.53 tokens/s. This is about one-third of the 25.09 tokens/s of Llama2 7B F16, so answers appear noticeably more slowly.
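
Putting both speeds together gives a rough feel for a full request. The sketch below assumes end-to-end time is prompt tokens divided by processing speed plus output tokens divided by generation speed; it ignores model loading and the slowdown that comes with longer contexts. The Llama2 7B F16 figures come from the comparison table later in this article, and the 1,000-token prompt and 500-token answer are arbitrary example sizes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Speeds:
    processing_tps: float  # prompt tokens read per second
    generation_tps: float  # output tokens produced per second


# Benchmark figures for the Apple M3 Max from this article.
LLAMA3_70B_Q4_K_M = Speeds(62.88, 7.53)
LLAMA2_7B_F16 = Speeds(779.17, 25.09)


def response_seconds(speeds: Speeds, prompt_tokens: int, output_tokens: int) -> float:
    """Rough end-to-end time: read the prompt, then generate the answer.

    Assumes constant speeds; real throughput drops as the context grows,
    and model loading time is ignored.
    """
    return prompt_tokens / speeds.processing_tps + output_tokens / speeds.generation_tps


if __name__ == "__main__":
    models = [("Llama3 70B Q4_K_M", LLAMA3_70B_Q4_K_M), ("Llama2 7B F16", LLAMA2_7B_F16)]
    for name, speeds in models:
        t = response_seconds(speeds, prompt_tokens=1000, output_tokens=500)
        print(f"{name}: about {t:.0f} s for a 1,000-token prompt and a 500-token answer")
```

Under these assumptions the request takes about 82 seconds on Llama3 70B Q4_K_M versus about 21 seconds on Llama2 7B F16, and most of the 70B model's time goes to generation rather than prompt processing.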

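What's happening here? Q4_K_M quantization is what lets the massive Llama3 70B model fit on the M3 Max at all, but it cannot make a 70-billion-parameter model as fast as a 7-billion-parameter one. Both speeds fall because the model is roughly ten times larger. Prompt processing is limited mainly by computation, and each token needs about ten times more arithmetic. Generation is limited mainly by memory bandwidth, because producing each new token requires reading essentially all of the model's weights from memory.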
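
A back-of-the-envelope check shows why generation is so much slower for the 70B model. If producing each token requires reading every weight from memory once, generation speed cannot exceed memory bandwidth divided by model size. The sketch assumes 400 GB/s (Apple's published bandwidth for the top M3 Max configuration; other configurations are lower) and a model size of about 42 GB for a 70B Q4_K_M file. Both are assumptions for illustration, not values measured in this benchmark.

```python
BANDWIDTH_GB_S = 400.0  # assumption: top M3 Max configuration
MODEL_GB = 42.0         # assumption: approximate size of a 70B Q4_K_M model file
MEASURED_TPS = 7.53     # Llama3 70B Q4_K_M generation speed from the table above


def generation_ceiling_tps(bandwidth_gb_s: float, model_gb: float) -> float:
    """Upper bound on tokens/s if every weight is read once per generated token."""
    return bandwidth_gb_s / model_gb


if __name__ == "__main__":
    ceiling = generation_ceiling_tps(BANDWIDTH_GB_S, MODEL_GB)
    print(f"ceiling ~{ceiling:.1f} tokens/s; measured {MEASURED_TPS} tokens/s "
          f"({MEASURED_TPS / ceiling:.0%} of the ceiling)")
```

Under these assumptions the ceiling is about 9.5 tokens/s, and the measured 7.53 tokens/s is roughly 80% of it, which suggests that generation is bounded mainly by how fast the weights can be streamed from memory.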

Performance Analysis: Model Comparison

The Power of M3 Max: A Performance Comparison

The Apple M3 Max is a powerhouse for smaller LLMs. We can see this by comparing the performance of Llama2 7B and Llama3 8B on the M3 Max:

| Model & Precision | Prompt processing (tokens/s) | Text generation (tokens/s) |
|---|---|---|
| Llama2 7B F16 | 779.17 | 25.09 |
| Llama2 7B Q8_0 | 757.64 | 42.75 |
| Llama2 7B Q4_0 | 759.7 | 66.31 |
| Llama3 8B Q4_K_M | 678.04 | 50.74 |
| Llama3 8B F16 | 751.49 | 22.39 |

Here are some key takeaways:

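- Llama2 7B vs. Llama3 8B: Llama3 8B with Q4_K_M quantization is somewhat slower than Llama2 7B with Q4_0 (678.04 vs. 759.7 tokens/s for processing and 50.74 vs. 66.31 tokens/s for generation), which is expected from a model with more parameters stored in a slightly larger quantization format. Even so, at over 50 tokens/s it remains very responsive.

- The Trade-off of F16: Full F16 precision gives Llama2 7B its highest processing speed (779.17 tokens/s), but only by a small margin, and it is the slowest option for generation: 25.09 tokens/s versus 66.31 tokens/s with Q4_0. Llama3 8B shows the same pattern (22.39 tokens/s at F16 versus 50.74 with Q4_K_M). F16 is the choice when output quality matters most, not speed, and as Llama3 models grow in size, quantization becomes essential just to fit them in memory.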
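
The generation column fits a simple memory-bandwidth picture: if every generated token requires reading all of the weights once, then generation speed times bytes per token should give a similar figure for every precision. The sketch below assumes about 6.74 billion parameters for Llama2 7B and the nominal bits per weight of each format (16, 8.5, and 4.5), ignoring any tensors kept at other precisions.

```python
LLAMA2_7B_PARAMS = 6.74e9  # assumption: approximate parameter count

# (nominal bits per weight, measured generation tokens/s on M3 Max from the table)
RESULTS = {
    "F16": (16.0, 25.09),
    "Q8_0": (8.5, 42.75),
    "Q4_0": (4.5, 66.31),
}


def effective_bandwidth_gb_s(params: float, bits_per_weight: float,
                             tokens_per_s: float) -> float:
    """GB of weights read per second, assuming each token reads every weight once."""
    bytes_per_token = params * bits_per_weight / 8
    return bytes_per_token * tokens_per_s / 1e9


if __name__ == "__main__":
    for name, (bits, tps) in RESULTS.items():
        bw = effective_bandwidth_gb_s(LLAMA2_7B_PARAMS, bits, tps)
        print(f"{name:5s} {tps:6.2f} tokens/s -> about {bw:.0f} GB/s of weights read")
```

This prints roughly 338, 306, and 251 GB/s. The weights shrink by a factor of about 3.6 from F16 to Q4_0 while the data read per second stays in the same range, so smaller weights translate fairly directly into faster generation.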

Practical Recommendations: Use Cases and Workarounds

Llama3 70B on M3 Max: A Powerhouse for Demanding Applications

Despite its modest generation speed, Llama3 70B on the M3 Max is still a valuable tool for many applications. Here are some use cases:

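- Complex text generation: The 70B model's stronger output quality suits tasks like long-form content, creative writing, and code generation, particularly when you can let it work in the background: at 7.53 tokens/s, a 2,000-token draft takes roughly four and a half minutes.

- Summarization and translation: Drawing on its large knowledge base, Llama3 70B on the M3 Max can effectively summarize long articles, translate between languages, and provide detailed information. At 62.88 tokens/s of prompt processing, reading a 5,000-token article takes a little under 80 seconds.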

- Technical writing and research: The model's ability to comprehend and synthesize technical information makes it a great resource for researchers and technical writers.

Workarounds for Slower Generation Speed

While the M3 Max is a powerful device, Llama3 70B's generation speed of 7.53 tokens/s, which stems from the model's size rather than from quantization, can be a drawback in applications that require real-time responses. Here are some workarounds:

- Fine-tuning: Fine-tuning does not make each token faster to generate, but a Llama3 70B model fine-tuned for a specific task can often work with shorter prompts and give more concise answers, reducing the total number of tokens per request.

- Streamlining the process: Prompt engineering strategies such as keeping prompts short, asking for concise answers, and capping the maximum output length reduce the work per request and minimize the impact of the slower generation speed.

- Leveraging cloud resources: For truly real-time applications, consider cloud-based infrastructure that can handle the computational demands of larger models like Llama3 70B.
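
Capping output length is the most direct of these levers. Given a latency budget, the number of output tokens you can afford follows from the two benchmark speeds. The helper below is a hypothetical sketch that defaults to the Llama3 70B Q4_K_M figures from this article and assumes constant speeds, ignoring model loading and context-length effects.

```python
import math


def max_output_tokens(budget_s: float, prompt_tokens: int,
                      processing_tps: float = 62.88,
                      generation_tps: float = 7.53) -> int:
    """Largest output length that fits in budget_s seconds (0 if the prompt alone exceeds it)."""
    remaining_s = budget_s - prompt_tokens / processing_tps  # time left after reading the prompt
    return max(0, math.floor(remaining_s * generation_tps))


if __name__ == "__main__":
    print(max_output_tokens(60, 500))
```

With a 60-second budget and a 500-token prompt, the helper returns 391 tokens. A value like this could be passed to your runtime's output-length limit, such as llama.cpp's `--n-predict` option.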

FAQ: Common Questions About LLMs and Devices

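- What are the benefits of running LLMs locally? Running LLMs locally offers benefits like improved privacy (your data never leaves your machine), offline availability, and no network round trips or per-request fees. Local inference is not automatically faster, though: as the 70B results show, large models can run slowly on local hardware.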

- How do I choose the right LLM for my use case? Consider factors like the complexity of the task, the required accuracy, and the hardware resources available.

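- How do LLMs like Llama3 compare to ChatGPT? Both are powerful LLMs, but they are delivered differently: Llama3 is an openly available model family from Meta that you can download and run on your own hardware, as in this article, while ChatGPT is a hosted service from OpenAI that you use over the internet.

- How do I get started with LLMs? There are numerous tutorials and libraries available. Open-source tools such as llama.cpp, which defines the Q4_0, Q8_0, and Q4_K_M quantization formats used in this article, and Ollama are a common starting point for running quantized models locally.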
