What You Need to Know About Llama3 70B Performance on Apple M2 Ultra

Introduction

The world of Large Language Models (LLMs) is exploding, with new models and advancements popping up faster than you can say "tokenization." But beyond the hype, there’s a crucial question for developers: how do these LLMs perform on real-world hardware, especially when running locally?

This article dives deep into the performance of the Llama3 70B model on the Apple M2 Ultra chip, a powerful option for local LLM deployment. We'll analyze token generation speeds, compare different quantization levels, and give you practical recommendations for using Llama3 70B on your M2 Ultra machine. Buckle up, it's going to be a wild ride through the fascinating world of local AI!

Performance Analysis: Token Generation Speed Benchmarks

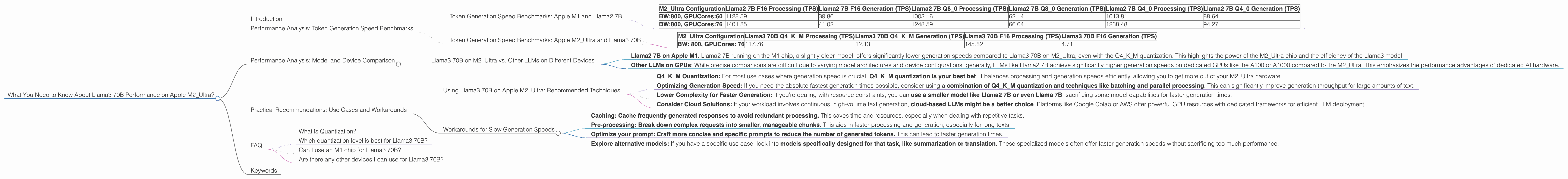

Token Generation Speed Benchmarks: Apple M2 Ultra and Llama2 7B

Before we dive into Llama3 70B, let's establish a baseline with the popular Llama2 7B model. The following table shows Llama2 7B speeds at three precision levels (F16, Q8_0, Q4_0) on two M2 Ultra configurations, measured in tokens per second (TPS). "Processing" is prompt processing (reading the input), and "Generation" is producing new output tokens one at a time.

| M2 Ultra configuration | Llama2 7B F16 Processing (TPS) | Llama2 7B F16 Generation (TPS) | Llama2 7B Q8_0 Processing (TPS) | Llama2 7B Q8_0 Generation (TPS) | Llama2 7B Q4_0 Processing (TPS) | Llama2 7B Q4_0 Generation (TPS) |
|---|---|---|---|---|---|---|
| 800 GB/s bandwidth, 60 GPU cores | 1128.59 | 39.86 | 1003.16 | 62.14 | 1013.81 | 88.64 |
| 800 GB/s bandwidth, 76 GPU cores | 1401.85 | 41.02 | 1248.59 | 66.64 | 1238.48 | 94.27 |

Key Observations:

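- F16 (half-precision floating point) delivers the fastest prompt processing, roughly 10-12% ahead of the quantized formats, but the slowest generation.

- Q8_0 (8-bit, block-wise quantization with one scale per block of weights) sits in the middle: prompt processing comparable to Q4_0, and about 1.6x the generation speed of F16.

- Q4_0 (4-bit, same block-wise scheme) matches Q8_0 on prompt processing and boasts the highest generation speed, about 2.2-2.3x that of F16.

The pattern follows from how the two phases work. Prompt processing handles many tokens at once and is largely limited by compute, so F16 benefits from not having to dequantize weights. Generation produces one token at a time and is largely limited by how fast the weights can be read from memory, so smaller weights mean more tokens per second.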
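To put numbers on these observations, here is a small Python sketch that divides each result in the Llama2 7B table by the F16 result for the same configuration. The dictionary simply copies the table; the helper name `relative_to_f16` is our own.

```python
# Llama2 7B on M2 Ultra, copied from the table above: (processing TPS, generation TPS)
BENCH = {
    "60 GPU cores": {"F16": (1128.59, 39.86), "Q8_0": (1003.16, 62.14), "Q4_0": (1013.81, 88.64)},
    "76 GPU cores": {"F16": (1401.85, 41.02), "Q8_0": (1248.59, 66.64), "Q4_0": (1238.48, 94.27)},
}


def relative_to_f16(results):
    """Return {quant: (processing ratio, generation ratio)}, each relative to F16."""
    base_pp, base_tg = results["F16"]
    return {
        quant: (round(pp / base_pp, 2), round(tg / base_tg, 2))
        for quant, (pp, tg) in results.items()
    }


for config, results in BENCH.items():
    print(config, relative_to_f16(results))
```

Running it shows Q8_0 and Q4_0 at about 0.88-0.90x of F16's processing speed, while generation climbs to about 1.6x for Q8_0 and 2.2-2.3x for Q4_0.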

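Think of it like this: F16 is a sports car, quick off the line (prompt processing) but thirsty for memory bandwidth once it's cruising (generation). Q8_0 is a reliable sedan with a good balance of speed and efficiency. And Q4_0 is the economy car: about the same start as the sedan, but it covers the most distance per tank.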

Token Generation Speed Benchmarks: Apple M2 Ultra and Llama3 70B

Now, let's get to the real star of the show: Llama3 70B. With ten times as many parameters as Llama2 7B, it will be much slower. Here’s a breakdown of token generation speeds for Llama3 70B on the 76-core M2 Ultra:

| M2 Ultra configuration | Llama3 70B Q4_K_M Processing (TPS) | Llama3 70B Q4_K_M Generation (TPS) | Llama3 70B F16 Processing (TPS) | Llama3 70B F16 Generation (TPS) |
|---|---|---|---|---|
| 800 GB/s bandwidth, 76 GPU cores | 117.76 | 12.13 | 145.82 | 4.71 |

Key Observations:

- Q4_K_M (llama.cpp's 4-bit "k-quant" format; the _M, or medium, variant keeps some tensors at higher precision) processes prompts about 19% slower than F16 (117.76 vs. 145.82 TPS) but generates about 2.6x faster (12.13 vs. 4.71 TPS).

- F16 (half-precision floating point) has the faster prompt processing, but its generation is extremely slow. This is expected given the model's size: at 16 bits per weight, Llama3 70B's weights take roughly 140 GB, and all of them must be read from memory for every generated token.
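A back-of-envelope check makes the F16 result less surprising. If generating one token requires reading every weight once, single-stream generation speed can't exceed memory bandwidth divided by model size. The sketch below assumes about 70.6 billion parameters for Llama3 70B and an average of roughly 4.85 bits per weight for Q4_K_M (an approximation, since the format mixes quantization types). It ignores KV-cache reads and compute time, so it gives an upper bound, not a prediction.

```python
def max_generation_tps(n_params, bits_per_weight, bandwidth_gb_s):
    """Upper bound on single-stream generation speed if every weight is read once per token.

    Ignores KV-cache traffic, compute time and other overheads, so real speeds are lower.
    """
    bytes_per_token = n_params * bits_per_weight / 8
    return bandwidth_gb_s * 1e9 / bytes_per_token


N_PARAMS = 70.6e9  # assumption: approximate Llama3 70B parameter count
BANDWIDTH = 800    # GB/s, M2 Ultra (from the benchmark table)

# (label, assumed average bits per weight, measured generation TPS from the table)
for label, bpw, measured in [("F16", 16.0, 4.71), ("Q4_K_M", 4.85, 12.13)]:
    ceiling = max_generation_tps(N_PARAMS, bpw, BANDWIDTH)
    print(f"{label}: ceiling ~{ceiling:.1f} TPS, measured {measured} TPS "
          f"({measured / ceiling:.0%} of ceiling)")
```

With these assumptions the ceilings come out near 5.7 TPS for F16 and 18.7 TPS for Q4_K_M, so the measured 4.71 TPS is about 83% of the F16 limit and 12.13 TPS about 65% of the Q4_K_M one. F16 generation looks almost purely bandwidth-bound; the larger gap for the quantized model suggests other costs, such as dequantization, matter more there.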

Note: There is no Llama3 70B Q8_0 data for the M2 Ultra, so that quantization level is missing from this comparison.

Performance Analysis: Model and Device Comparison

Llama3 70B on M2 Ultra vs. Other LLMs on Different Devices

Let's put these numbers into context by comparing Llama3 70B on the M2 Ultra with other LLMs on different devices.

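- Llama2 7B on Apple M1: This article has no M1 measurements, so the tables above can't support a direct comparison. The bandwidth gap is telling, though: the base M1 has 68.25 GB/s of memory bandwidth, less than a tenth of the M2 Ultra's 800 GB/s, and because token generation is largely memory-bandwidth bound, Llama2 7B on an M1 generates far more slowly than the 88-94 TPS it reaches at Q4_0 on the M2 Ultra. This highlights the power of the M2 Ultra chip.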

- Other LLMs on GPUs: Precise comparisons are difficult because model architectures, software stacks, and device configurations differ, and this article has no GPU measurements. In general, though, models like Llama2 7B generate tokens faster on data-center GPUs such as the NVIDIA A100 than on the M2 Ultra, largely because the A100's memory bandwidth (about 2 TB/s on the 80 GB model) is more than twice the M2 Ultra's 800 GB/s. This emphasizes the performance advantages of dedicated AI hardware.

It's important to note that the performance differences between Llama3 70B on the M2 Ultra and other LLMs on other devices go beyond raw hardware specs. The specific model architecture, per-device optimizations, and even nuances in the codebase all contribute to the final results.

Practical Recommendations: Use Cases and Workarounds

Using Llama3 70B on Apple M2 Ultra: Recommended Techniques

Here's how you can leverage the performance characteristics of Llama3 70B on your M2 Ultra for optimal results:

- Q4_K_M Quantization: For most use cases where generation speed is crucial, Q4_K_M is your best bet. It generates about 2.6x faster than F16 while giving up only about 19% of prompt-processing speed, and its much smaller weights leave more of the M2 Ultra's memory free.

- Optimizing Throughput: Once you're on Q4_K_M, batching several independent requests (for example, through a server that decodes multiple sequences in parallel) can significantly raise total throughput, because each decoding step reads the weights once and reuses them for every sequence in the batch. This increases tokens per second across all requests; it does not make any single response arrive faster.

- Lower Complexity for Faster Generation: If you're dealing with resource constraints, you can use a smaller model such as Llama2 7B, which at Q4_0 generates nearly 8x faster than Llama3 70B at Q4_K_M on the 76-core M2 Ultra (94.27 vs. 12.13 TPS), at the cost of some model capability.

- Consider Cloud Solutions: If your workload involves continuous, high-volume text generation, cloud-based LLMs might be a better choice. Platforms like Google Colab or AWS offer powerful GPU resources with dedicated frameworks for efficient LLM deployment.
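The batching idea in the list above can be sketched from the client side. This minimal example assumes llama.cpp's `llama-server` is running locally on its default port 8080 with several parallel slots (for example `llama-server -m <model>.gguf --parallel 4`). It sends prompts concurrently to the server's `/completion` endpoint so the server can decode them together; the helper names, `n_predict` value, and timeout are illustrative choices, not recommendations from the benchmark source.

```python
import json
import urllib.request
from concurrent.futures import ThreadPoolExecutor

SERVER_URL = "http://localhost:8080/completion"  # assumption: local llama-server, default port


def complete(prompt, n_predict=128):
    """Send one prompt to llama.cpp's /completion endpoint and return the generated text."""
    body = json.dumps({"prompt": prompt, "n_predict": n_predict}).encode("utf-8")
    req = urllib.request.Request(
        SERVER_URL, data=body, headers={"Content-Type": "application/json"}
    )
    # Generous timeout: a long answer from a 70B model can take minutes.
    with urllib.request.urlopen(req, timeout=600) as resp:
        return json.load(resp)["content"]


def complete_batch(prompts, send=complete, max_workers=4):
    """Issue prompts concurrently so the server can decode them in the same batch.

    max_workers should match the server's --parallel slot count.
    Results come back in the same order as the prompts.
    """
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(send, prompts))
```

Calling `complete_batch(["Summarize A.", "Summarize B."])` returns the generated texts in prompt order. Threads are enough on the client because it only waits on HTTP; the actual batching happens inside the server.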

Workarounds for Slow Generation Speeds

Let's be real, sometimes those generation speeds can be agonizingly slow. Here are some tricks you can use to work around those limitations:

- Caching: Cache frequently generated responses to avoid redundant processing. This saves time and resources, especially when dealing with repetitive tasks.

- Pre-processing: Break long or complex requests into smaller, self-contained chunks. Shorter contexts are handled faster, since both prompt processing and generation slow down as the context grows, and independent chunks can be batched together.

- Optimize your prompt: Craft more concise and specific prompts to reduce the number of generated tokens. This can lead to faster generation times.

- Explore alternative models: If you have a specific use case, such as summarization or translation, look into models designed for that task. These specialized models are often much smaller, so they generate faster without sacrificing too much output quality.
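Every trick in this list ultimately reduces how many tokens the model must process or generate, and output tokens are the expensive ones. A rough latency model using the Llama3 70B Q4_K_M figures from the table above (prompt tokens at the processing rate plus output tokens at the generation rate) shows why. It's a simplification, since real speeds drop as the context grows; the function name and token counts are illustrative.

```python
# Llama3 70B Q4_K_M on M2 Ultra (76 GPU cores), from the benchmark table
PROMPT_TPS = 117.76     # prompt processing, tokens per second
GENERATION_TPS = 12.13  # token generation, tokens per second


def estimated_seconds(prompt_tokens, output_tokens,
                      prompt_tps=PROMPT_TPS, generation_tps=GENERATION_TPS):
    """Rough response time: time to process the prompt plus one decode step per output token."""
    return prompt_tokens / prompt_tps + output_tokens / generation_tps


# Same 1,000-token prompt, with a 500-token answer vs. a 200-token answer
print(f"{estimated_seconds(1000, 500):.1f} s")
print(f"{estimated_seconds(1000, 200):.1f} s")
```

Each output token costs about 82 ms versus about 8.5 ms per prompt token, so trimming a 500-token answer to 200 tokens cuts the estimate from roughly 50 s to roughly 25 s, while the 1,000-token prompt accounts for only about 8.5 s of either.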

FAQ

What is Quantization?

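Quantization is a technique used to reduce the size of a model by storing its weights (and, in some schemes, activations) with fewer bits, for example 8 or 4 bits instead of 16. In block-wise formats such as llama.cpp's Q8_0 and Q4_0, weights are grouped into small blocks, and each block stores low-bit integers plus a shared scale factor used to reconstruct approximate values. Think of it as rounding every measurement to the nearest tick on a coarser ruler: you lose a little precision, but the model takes far less space, and because generation is limited by how fast weights can be read from memory, it also runs faster.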
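Here is a simplified Python illustration of symmetric block-wise 8-bit quantization, modeled on the idea behind llama.cpp's Q8_0: each block of 32 weights stores one scale (the block's largest absolute value divided by 127) and 32 small integers. It is not llama.cpp's actual code, which packs blocks into binary with a 16-bit float scale, and the weights are made up.

```python
BLOCK_SIZE = 32  # llama.cpp's Q8_0 and Q4_0 formats group weights in blocks of 32


def quantize_q8_blocks(weights):
    """Symmetric 8-bit block quantization: one float scale plus integers in [-127, 127] per block."""
    blocks = []
    for start in range(0, len(weights), BLOCK_SIZE):
        block = weights[start:start + BLOCK_SIZE]
        amax = max(abs(w) for w in block)
        scale = amax / 127 if amax > 0 else 1.0  # all-zero block: any scale works
        # Python's round() rounds exact halves to even, unlike C's roundf().
        blocks.append((scale, [round(w / scale) for w in block]))
    return blocks


def dequantize(blocks):
    """Rebuild approximate weights as scale * integer for every value in every block."""
    return [scale * q for scale, qs in blocks for q in qs]


weights = [0.12, -0.5, 0.33, 0.01] * 16  # 64 made-up weights -> 2 blocks
restored = dequantize(quantize_q8_blocks(weights))
print(max(abs(a - b) for a, b in zip(weights, restored)))
```

With a 16-bit scale, each block costs 32 bytes of integers plus 2 bytes of scale, about 8.5 bits per weight instead of 16, and each reconstructed weight stays within half a scale step of the original.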

Which quantization level is best for Llama3 70B?

For most use cases, Q4_K_M quantization provides the best balance between processing speed and generation speed on the M2 Ultra.

Can I use an M1 chip for Llama3 70B?

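It depends on which M1. The base M1 tops out at 16 GB of unified memory, which can't hold Llama3 70B even at 4-bit precision. An M1 Max with 64 GB or an M1 Ultra can load a 4-bit build such as Q4_K_M. Since generation speed is mostly bound by memory bandwidth, the M1 Max (400 GB/s) has roughly half the M2 Ultra's ceiling, while the M1 Ultra matches the M2 Ultra's 800 GB/s and should come closer, though it has fewer GPU cores for prompt processing.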
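A quick way to check the memory side of that answer is to compare the size of the weights with each chip's maximum unified memory. This sketch assumes about 70.6 billion parameters, roughly 4.85 bits per weight for Q4_K_M, and that about 75% of RAM is usable for the model (a rough, hypothetical budget: macOS, the KV cache, and runtime buffers need the rest). The helper names are illustrative.

```python
def weights_gb(n_params, bits_per_weight):
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits(n_params, bits_per_weight, ram_gb, usable_fraction=0.75):
    """True if the weights fit in the share of unified memory assumed usable for the model.

    usable_fraction is an assumption: the OS, KV cache and runtime buffers need headroom.
    """
    return weights_gb(n_params, bits_per_weight) <= ram_gb * usable_fraction


LLAMA3_70B = 70.6e9  # assumption: approximate parameter count
CHIPS = [("M1 (16 GB)", 16), ("M1 Max (64 GB)", 64),
         ("M1 Ultra (128 GB)", 128), ("M2 Ultra (192 GB)", 192)]

for chip, ram in CHIPS:
    print(chip, "Q4_K_M:", fits(LLAMA3_70B, 4.85, ram), "F16:", fits(LLAMA3_70B, 16, ram))
```

Under these assumptions the base M1 can't hold even the 4-bit model, the 64 GB M1 Max and the M1 Ultra can hold Q4_K_M but not F16, and only a 192 GB M2 Ultra has room for F16.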

Are there any other devices I can use for Llama3 70B?

Yes, there are other options! Data-center GPUs like the NVIDIA A100 offer much faster processing and generation than the M2 Ultra. However, they come with a much higher cost and require more specialized setup and configuration, and a single 80 GB card can't hold Llama3 70B at F16, so you'd run a quantized build or split the model across several GPUs.

Keywords

Llama3 70B, Apple M2 Ultra, token generation speed, LLM performance, quantization, F16, Q8_0, Q4_0, Q4_K_M, local LLM, GPU, processing speed, generation speed, practical recommendations, use cases, workarounds, AI hardware, performance analysis, model comparison, developer tools.