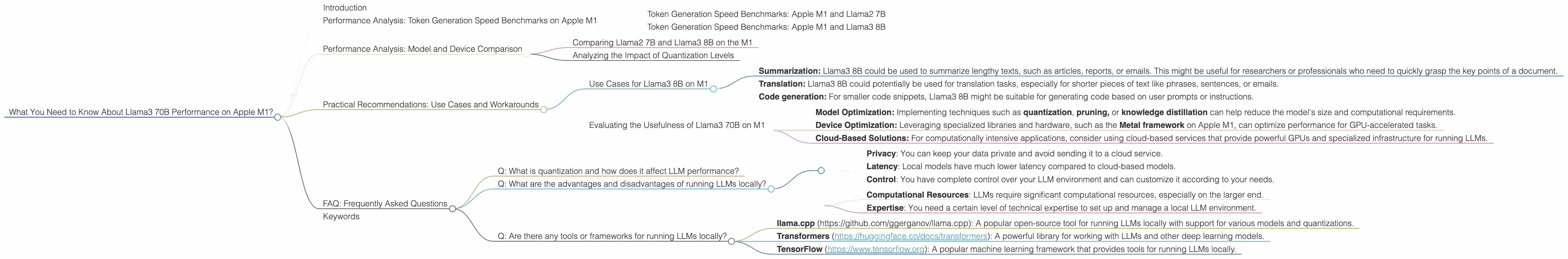

What You Need to Know About Llama3 70B Performance on Apple M1?

Introduction

The world of large language models (LLMs) is evolving rapidly, with new models and advancements emerging at a breakneck pace. One of the latest breakthroughs is the Llama3 70B model, which boasts impressive capabilities and the potential to revolutionize various industries.

However, running these colossal models locally on your own machine can be a significant challenge. This is where the ubiquitous Apple M1 chip, known for its impressive performance and power efficiency, comes into play. In this article, we'll delve into local LLM deployment and look at what token-speed benchmarks on the Apple M1 can tell us about running Llama3 70B, given that no direct measurements for the 70B model are available.

Performance Analysis: Token Generation Speed Benchmarks on Apple M1

Token Generation Speed Benchmarks: Apple M1 and Llama2 7B

Before we dive into Llama3 70B, let's first establish a baseline by examining the performance of the popular Llama2 7B model on the Apple M1.

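The data shows that Llama2 7B with Q8_0 quantization achieves a processing speed of 117.25 tokens/second and a generation speed of 7.91 tokens/second on the M1 with 8 GPU cores and a memory bandwidth of 68 GB/s. The 7-GPU-core configuration, with the same 68 GB/s bandwidth, reaches 108.21 tokens/second for processing and 7.92 tokens/second for generation. In other words, the extra GPU core speeds up prompt processing by about 8%, while generation speed is essentially unchanged, which suggests that generation is limited by memory bandwidth rather than by GPU compute.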

Token Generation Speed Benchmarks: Apple M1 and Llama3 8B

Now, let's move on to Llama3 8B. Unfortunately, we have no data for Llama3 70B on the Apple M1. But we can gain some insight by looking at the performance metrics for Llama3 8B with Q4_K_M quantization.

The Llama3 8B Q4_K_M configuration achieves a processing speed of 87.26 tokens/second and a generation speed of 9.72 tokens/second on the Apple M1 with 7 GPU cores and 68 GB/s bandwidth.

Performance Analysis: Model and Device Comparison

Comparing Llama2 7B and Llama3 8B on the M1

When comparing the processing speeds of Llama2 7B (Q8_0) and Llama3 8B (Q4_K_M) on the 7-GPU-core M1, Llama2 7B is clearly faster: 108.21 versus 87.26 tokens/second, roughly 24% higher. Because the two configurations differ in model architecture, parameter count, and quantization at the same time, the data cannot tell us how much each factor contributes.

Generation speed tells the opposite story: Llama3 8B reaches 9.72 tokens/second against 7.92 for Llama2 7B, about 23% faster. Since the reply is produced one token at a time at this much lower rate, Llama3 8B can finish longer replies sooner despite its lower processing speed.
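
To make this trade-off concrete, here is a minimal Python sketch that turns the two speeds into an estimated response time. It uses only the 7-GPU-core figures quoted above; the workload of 512 prompt tokens and 128 output tokens is a hypothetical example, and the simple model (prompt time plus generation time) ignores model loading and other overhead.

```python
# Speeds quoted above for the 7-GPU-core M1, in tokens/second.
BENCHMARKS = {
    "Llama2 7B Q8_0": {"processing": 108.21, "generation": 7.92},
    "Llama3 8B Q4_K_M": {"processing": 87.26, "generation": 9.72},
}


def response_time(speeds, prompt_tokens, output_tokens):
    """Seconds to process the prompt plus seconds to generate the reply."""
    return (prompt_tokens / speeds["processing"]
            + output_tokens / speeds["generation"])


def break_even_output_tokens(a, b, prompt_tokens):
    """Reply length at which configurations a and b take the same total time."""
    prompt_gap = prompt_tokens / b["processing"] - prompt_tokens / a["processing"]
    per_token_gap = 1 / a["generation"] - 1 / b["generation"]
    return prompt_gap / per_token_gap


# Hypothetical workload: 512 prompt tokens, 128 generated tokens.
PROMPT_TOKENS, OUTPUT_TOKENS = 512, 128

for name, speeds in BENCHMARKS.items():
    seconds = response_time(speeds, PROMPT_TOKENS, OUTPUT_TOKENS)
    print(f"{name}: {seconds:.1f} s")

crossover = break_even_output_tokens(
    BENCHMARKS["Llama2 7B Q8_0"], BENCHMARKS["Llama3 8B Q4_K_M"], PROMPT_TOKENS
)
print(f"Llama3 8B is faster once the reply exceeds ~{crossover:.0f} tokens")
```

For this workload the estimate comes to about 20.9 seconds for Llama2 7B and about 19.0 seconds for Llama3 8B. With a 512-token prompt, Llama3 8B pulls ahead once the reply is longer than roughly 49 tokens; longer prompts push that crossover point higher.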

Analyzing the Impact of Quantization Levels

The performance data also hints at the impact of quantization, although the comparison is not clean because the two configurations use different models. The Q8_0 quantization of Llama2 7B gives the higher processing speed, while the Q4_K_M quantization of Llama3 8B gives the higher generation speed. The generation result is what you would expect from a lower-bit format: generating each token requires reading the model weights from memory, so storing each weight in fewer bits reduces memory traffic, which matters most on a bandwidth-limited device like the M1.
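
A rough way to see how memory bandwidth caps generation speed is to divide the bandwidth by the size of the weights, since every generated token needs roughly one full pass over them. The sketch below does this in Python. The 68 GB/s bandwidth and the measured speeds come from the figures above; the parameter counts (6.74B and 8.03B) and the 4.85 bits per weight for Q4_K_M are approximations assumed for illustration, while 8.5 bits per weight for Q8_0 follows from its layout of 32 eight-bit weights plus a 16-bit scale per block.

```python
BANDWIDTH_GB_S = 68.0  # M1 memory bandwidth quoted above

# name: (parameters in billions, bits per weight, measured generation tokens/s)
# Parameter counts and the Q4_K_M bit width are assumed approximations.
CONFIGS = {
    "Llama2 7B Q8_0": (6.74, 8.5, 7.92),
    "Llama3 8B Q4_K_M": (8.03, 4.85, 9.72),
}


def weight_size_gb(params_billions, bits_per_weight):
    """Approximate size of the quantized weights in gigabytes."""
    return params_billions * bits_per_weight / 8


def generation_ceiling(params_billions, bits_per_weight,
                       bandwidth_gb_s=BANDWIDTH_GB_S):
    """Upper bound on tokens/second if each token reads all weights once."""
    return bandwidth_gb_s / weight_size_gb(params_billions, bits_per_weight)


for name, (params, bits, measured) in CONFIGS.items():
    size = weight_size_gb(params, bits)
    ceiling = generation_ceiling(params, bits)
    print(f"{name}: ~{size:.1f} GB of weights, "
          f"ceiling ~{ceiling:.1f} tok/s, measured {measured} tok/s")
```

Under these assumptions both measured generation speeds sit below their estimated ceilings, and the smaller Q4_K_M weights give Llama3 8B the higher ceiling, which is consistent with its faster generation. The estimate ignores the KV cache and other memory traffic, so it is an upper bound rather than a prediction.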

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 8B on M1

Despite the lack of data for Llama3 70B, the performance trends seen with Llama3 8B suggest a potential use case for smaller scale applications. Here are some examples:

- Summarization: Llama3 8B could be used to summarize lengthy texts, such as articles, reports, or emails. This might be useful for researchers or professionals who need to quickly grasp the key points of a document.

- Translation: Llama3 8B could potentially be used for translation tasks, especially for shorter pieces of text like phrases, sentences, or emails.

- Code generation: For smaller code snippets, Llama3 8B might be suitable for generating code based on user prompts or instructions.

Evaluating the Usefulness of Llama3 70B on M1

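While data for Llama3 70B on the M1 is unavailable, the Llama3 8B results suggest that Llama3 70B would struggle to run on this chip. Memory is the first obstacle: at around 4 to 5 bits per weight, a 70B-parameter model needs roughly 40 GB for its weights alone, while the base M1 offers at most 16 GB of unified memory. To achieve better performance, you might need to consider: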

- Model Optimization: Implementing techniques such as quantization, pruning, or knowledge distillation can help reduce the model's size and computational requirements.

- Device Optimization: Leveraging specialized libraries and hardware, such as the Metal framework on Apple M1, can optimize performance for GPU-accelerated tasks.

- Cloud-Based Solutions: For computationally intensive applications, consider using cloud-based services that provide powerful GPUs and specialized infrastructure for running LLMs.
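
To put numbers on how far quantization alone can go, the following Python sketch estimates the weight size of a 70B model at several precisions and compares it with the memory of the M1. It is a back-of-the-envelope estimate under stated assumptions: about 70.6 billion parameters, about 4.85 bits per weight for Q4_K_M, and a 16 GB machine (the 68 GB/s figure in the benchmarks matches the base M1, which was sold with 8 GB or 16 GB of unified memory). Only the 68 GB/s bandwidth comes from the data above.

```python
PARAMS_BILLIONS = 70.6   # assumed parameter count for Llama3 70B
M1_MEMORY_GB = 16        # assumed: largest base-M1 memory configuration
BANDWIDTH_GB_S = 68.0    # bandwidth quoted in the benchmarks above

# Bits per weight; the Q4_K_M value is an approximation.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_K_M": 4.85}


def weight_size_gb(params_billions, bits_per_weight):
    """Approximate size of the weights in gigabytes."""
    return params_billions * bits_per_weight / 8


def max_bits_per_weight(params_billions, memory_gb):
    """Bits per weight at which the weights alone would fill the memory."""
    return memory_gb * 8 / params_billions


report = {}
for name, bits in BITS_PER_WEIGHT.items():
    size = weight_size_gb(PARAMS_BILLIONS, bits)
    report[name] = {
        "size_gb": size,
        "fits": size <= M1_MEMORY_GB,
        # Bandwidth-bound generation ceiling if the weights did fit.
        "ceiling_tok_s": BANDWIDTH_GB_S / size,
    }
    print(f"{name}: ~{size:.0f} GB, fits in {M1_MEMORY_GB} GB: "
          f"{report[name]['fits']}, ceiling ~{report[name]['ceiling_tok_s']:.1f} tok/s")

limit = max_bits_per_weight(PARAMS_BILLIONS, M1_MEMORY_GB)
print(f"Weights alone fit only below ~{limit:.1f} bits per weight")
```

Under these assumptions none of the three formats fits: even Q4_K_M needs roughly 43 GB, and the weights would have to shrink to under 2 bits each before they fit in 16 GB, with nothing left over for the KV cache or the operating system. Even if memory were not a limit, 68 GB/s would cap Q4_K_M generation at under 2 tokens/second, which is why cloud-based or larger-memory hardware is the more realistic route for the 70B model.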

FAQ: Frequently Asked Questions

Q: What is quantization and how does it affect LLM performance?

A: Quantization is a technique used to reduce the size of a model by representing its weights and activations using fewer bits. This can lead to faster inference speeds and lower memory usage. While quantization can improve performance, it can sometimes come at the cost of accuracy.
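
As an illustration of the idea, here is a minimal Python sketch of symmetric block quantization: one shared scale per block of weights, with each weight stored as a small integer. It is a simplified teaching example in the spirit of block formats such as Q8_0, not the actual llama.cpp implementation, and the sample weights are made up.

```python
def quantize_block(values, bits=8):
    """Symmetric quantization of one block: returns (scale, integer codes)."""
    qmax = 2 ** (bits - 1) - 1                 # 127 for 8 bits, 7 for 4 bits
    largest = max(abs(v) for v in values)
    scale = largest / qmax if largest else 1.0  # avoid dividing by zero
    codes = [round(v / scale) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate weights from the scale and integer codes."""
    return [scale * c for c in codes]


def max_error(values, bits):
    """Largest absolute difference between original and restored weights."""
    scale, codes = quantize_block(values, bits)
    restored = dequantize_block(scale, codes)
    return max(abs(a - b) for a, b in zip(values, restored))


# Made-up weights for illustration only.
weights = [0.12, -0.53, 0.97, -0.08, 0.31, -0.76, 0.44, 0.05]

for bits in (8, 4):
    print(f"{bits}-bit: max error {max_error(weights, bits):.4f}")
```

Running this shows the trade-off described above: the 4-bit version stores each weight in half the space of the 8-bit version, but its worst-case rounding error is noticeably larger.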

Q: What are the advantages and disadvantages of running LLMs locally?

A: Running LLMs locally offers several advantages:

- Privacy: You can keep your data private and avoid sending it to a cloud service.

- Latency: Local models avoid network round trips and queueing on a shared service, although generation speed on modest hardware can still be the bottleneck.

- Control: You have complete control over your LLM environment and can customize it according to your needs.

However, running LLMs locally also has some downsides:

- Computational Resources: LLMs require significant computational resources, especially on the larger end.

- Expertise: You need a certain level of technical expertise to set up and manage a local LLM environment.

Q: Are there any tools or frameworks for running LLMs locally?

A: Yes, there are several tools and frameworks available for running LLMs locally:

- llama.cpp (https://github.com/ggerganov/llama.cpp): A popular open-source tool for running LLMs locally with support for various models and quantizations.

- Transformers (https://huggingface.co/docs/transformers): A powerful library for working with LLMs and other deep learning models.

- TensorFlow (https://www.tensorflow.org): A popular machine learning framework that provides tools for running LLMs locally.

Keywords

Large Language Models, LLMs, Llama3, Llama2, Apple M1, M1, GPU, Token Generation Speed, Quantization, Q8_0, Q4_K_M, Metal Framework, Cloud, Performance Benchmarks, Inference, Local Deployment, Model Optimization, Device Optimization, Privacy, Latency, Computational Resources, Transformers, llama.cpp, TensorFlow, Deep Learning