What You Need to Know About Llama3 70B Performance on Apple M1 Max

Introduction

The world of large language models (LLMs) is buzzing with excitement, and for good reason. These powerful AI systems can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running these models locally on your own machine can be a challenge, especially for larger models like Llama3 70B.

In this deep dive, we'll explore the performance of Llama3 70B on the Apple M1 Max, a popular chip for creative professionals and tech enthusiasts who want a balance between power and portability. We'll analyze token generation speeds, compare it to other model and device combinations, and provide practical recommendations for use cases and potential workarounds.

So, grab your favorite beverage, get comfy, and let's dive into the fascinating world of LLMs and local performance!

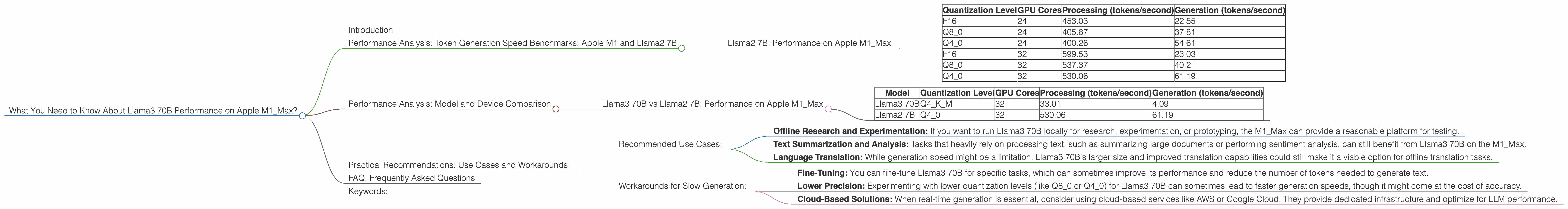

Performance Analysis: Token Generation Speed Benchmarks: Apple M1 Max and Llama2 7B

Let's start with the basics: token generation speed is how quickly a model can produce text. This metric is crucial for real-time applications, such as chatbots and text editors. You can think of token generation speed as the "words per minute" of an LLM, but instead of words, it's about tokens, which are the building blocks of text for LLMs.
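To make the "words per minute" analogy concrete, here is a minimal sketch that converts a tokens/second figure into per-token latency and an approximate words-per-minute rate. The `words_per_token` value of 0.75 is a common rule of thumb for English text and is an assumption, not a measurement from this article; the function names are hypothetical.

```python
def ms_per_token(tokens_per_second: float) -> float:
    """Average time spent on one token, in milliseconds."""
    return 1000.0 / tokens_per_second


def approx_words_per_minute(tokens_per_second: float,
                            words_per_token: float = 0.75) -> float:
    """Rough words-per-minute estimate.

    words_per_token=0.75 is an assumed rule of thumb for English;
    the real ratio depends on the tokenizer and the text.
    """
    return tokens_per_second * 60.0 * words_per_token


if __name__ == "__main__":
    # Example rates; substitute any tokens/second figure you measure.
    for rate in (4.0, 20.0, 60.0):
        print(f"{rate:5.1f} tok/s -> {ms_per_token(rate):6.1f} ms/token, "
              f"~{approx_words_per_minute(rate):.0f} words/min")
```

A rate that looks small in tokens/second can still be comfortable for reading along, so it helps to look at both numbers when judging whether a setup feels responsive.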

To establish a baseline, we'll first look at the token generation speed of Llama2 7B, a popular and well-documented model, and use it later to judge how Llama3 70B performs. We'll also consider different quantization levels (F16, Q8_0, Q4_0) and their impact on performance.

Llama2 7B: Performance on Apple M1 Max

| Quantization Level | GPU Cores | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|---|
| F16 | 24 | 453.03 | 22.55 |
| Q8_0 | 24 | 405.87 | 37.81 |
| Q4_0 | 24 | 400.26 | 54.61 |
| F16 | 32 | 599.53 | 23.03 |
| Q8_0 | 32 | 537.37 | 40.2 |
| Q4_0 | 32 | 530.06 | 61.19 |

Observations:

- Quantization Matters: The quantization level strongly affects generation speed on Llama2 7B. On 32 GPU cores, generation rises from 23.03 tokens/second at F16 to 40.2 at Q8_0 and 61.19 at Q4_0, while prompt processing is slightly slower for the quantized variants. F16 keeps the highest precision and uses the most memory; Q4_0 needs far less memory but can sacrifice accuracy.

- More GPU Cores = Faster Processing: Going from 24 to 32 GPU cores makes prompt processing about 32% faster at every quantization level, which is expected because more tokens can be processed in parallel. Generation benefits much less, improving by roughly 2% at F16, 6% at Q8_0, and 12% at Q4_0.
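The effects described in these observations can be read straight off the table. The sketch below copies the benchmark figures from the table above and computes the speedup ratios; the dictionary layout and function names are illustrative choices, not part of any benchmark tool.

```python
# Benchmark figures copied from the Llama2 7B table above (tokens/second).
# Keys are (quantization, gpu_cores); values are (processing, generation).
BENCHMARKS = {
    ("F16", 24): (453.03, 22.55),
    ("Q8_0", 24): (405.87, 37.81),
    ("Q4_0", 24): (400.26, 54.61),
    ("F16", 32): (599.53, 23.03),
    ("Q8_0", 32): (537.37, 40.2),
    ("Q4_0", 32): (530.06, 61.19),
}


def core_scaling(quant: str) -> tuple[float, float]:
    """(processing, generation) speedup going from 24 to 32 GPU cores."""
    p24, g24 = BENCHMARKS[(quant, 24)]
    p32, g32 = BENCHMARKS[(quant, 32)]
    return p32 / p24, g32 / g24


def quant_scaling(cores: int) -> float:
    """Generation speedup of Q4_0 over F16 at a fixed core count."""
    return BENCHMARKS[("Q4_0", cores)][1] / BENCHMARKS[("F16", cores)][1]


if __name__ == "__main__":
    for quant in ("F16", "Q8_0", "Q4_0"):
        proc, gen = core_scaling(quant)
        print(f"{quant}: 24->32 cores = {proc:.2f}x processing, {gen:.2f}x generation")
    for cores in (24, 32):
        print(f"{cores} cores: Q4_0 generates {quant_scaling(cores):.2f}x faster than F16")
```

Keeping the raw numbers in one structure makes it easy to add rows for other chips or models and rerun the same comparison.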

Performance Analysis: Model and Device Comparison

Now, let's compare the performance of Llama3 70B to Llama2 7B on the Apple M1 Max. We'll focus on the most relevant quantization levels for these models: Q4_K_M for Llama3 70B and Q4_0 for Llama2 7B.

Llama3 70B vs Llama2 7B: Performance on Apple M1 Max

| Model | Quantization Level | GPU Cores | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|---|---|
| Llama3 70B | Q4_K_M | 32 | 33.01 | 4.09 |
| Llama2 7B | Q4_0 | 32 | 530.06 | 61.19 |

Observations:

- Generation Speed: A Significant Drop: Llama3 70B generates 4.09 tokens/second against 61.19 for Llama2 7B, roughly 15 times slower. This is expected: with ten times as many parameters, far more weight data has to be read and computed for every generated token.

- Processing Speed: A Similar Drop: Prompt processing falls from 530.06 tokens/second for Llama2 7B (Q4_0) to 33.01 for Llama3 70B (Q4_K_M), roughly 16 times slower. The M1 Max can run Llama3 70B, but both processing and generation slow down by more than an order of magnitude.

Practical Recommendations: Use Cases and Workarounds

While Llama3 70B on the Apple M1 Max might not be ideal for real-time applications due to its slower generation speeds, it still has potential for specific use cases.

Recommended Use Cases:

- Offline Research and Experimentation: If you want to run Llama3 70B locally for research, experimentation, or prototyping, the M1 Max can provide a reasonable platform for testing.

- Text Summarization and Analysis: Tasks that heavily rely on processing text, such as summarizing large documents or performing sentiment analysis, can still benefit from Llama3 70B on the M1 Max.

- Language Translation: While generation speed might be a limitation, Llama3 70B's larger size and improved translation capabilities could still make it a viable option for offline translation tasks.
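To judge whether one of these offline use cases is practical, it helps to estimate the wall-clock time of a job from the two benchmark rates. The following is a back-of-the-envelope sketch: the tokens/second figures come from the comparison table above, while the 2,000-token prompt and 200-token output are hypothetical workload sizes chosen for illustration. It ignores model load time and any slowdown at long context lengths.

```python
def estimate_seconds(prompt_tokens: int, output_tokens: int,
                     processing_tps: float, generation_tps: float) -> float:
    """Time to read the prompt plus time to generate the output."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


if __name__ == "__main__":
    # (processing, generation) tokens/second on a 32-core M1 Max, from the table.
    models = {
        "Llama3 70B Q4_K_M": (33.01, 4.09),
        "Llama2 7B Q4_0": (530.06, 61.19),
    }
    # Hypothetical summarization job: 2,000-token document, 200-token summary.
    for name, (proc, gen) in models.items():
        total = estimate_seconds(2000, 200, proc, gen)
        print(f"{name}: ~{total:.0f} s")
```

Under these assumptions the 70B model needs about 109 seconds for the job and the 7B model about 7 seconds, which is workable for batch tasks but not for interactive use.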

Workarounds for Slow Generation:

- Fine-Tuning: You can fine-tune Llama3 70B for specific tasks, which can sometimes improve its performance and reduce the number of tokens needed to generate text.

- Lower Precision: Lower-bit quantization than Q4_K_M (for example Q4_0) can sometimes lead to faster generation speeds, though it might come at the cost of accuracy. Q8_0 is higher precision than Q4_K_M, so it needs more memory and would likely generate more slowly.

- Cloud-Based Solutions: When real-time generation is essential, consider using cloud-based services like AWS or Google Cloud. They provide dedicated infrastructure and optimize for LLM performance.
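When weighing a different precision, a useful first check is whether the weights fit in memory at all. The sketch below estimates weight size from parameter count and bits per weight. It assumes a nominal 70 billion parameters, uses the llama.cpp block layouts for Q8_0 (8.5 bits per weight) and Q4_0 (4.5 bits per weight), and takes roughly 4.85 bits per weight for Q4_K_M as an approximate figure. It ignores the KV cache and runtime overhead, so real usage will be higher.

```python
# Bits per weight for each format. The Q4_K_M value is an approximation
# (assumption); Q8_0 and Q4_0 follow from their 32-weight block layouts.
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": 8.5,
    "Q4_K_M": 4.85,
    "Q4_0": 4.5,
}


def weights_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (10**9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    n_params = 70e9       # nominal parameter count (assumption)
    memory_gb = 64        # largest M1 Max unified-memory configuration
    for name, bits in BITS_PER_WEIGHT.items():
        size = weights_gb(n_params, bits)
        verdict = "fits" if size < memory_gb else "does not fit"
        print(f"{name:7s} ~{size:6.1f} GB -> {verdict} in {memory_gb} GB")
```

On these assumptions only the 4-bit variants leave headroom on a 64 GB machine, which is why the benchmark above uses Q4_K_M.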

FAQ: Frequently Asked Questions

Q: Is Llama3 70B available for everyone?

A: Yes. Meta released the Llama 3 8B and 70B weights in April 2024 under the Meta Llama 3 Community License. You can download them after accepting the license terms.

Q: What is quantization and why does it affect performance?

A: Quantization is a technique used to reduce the size of LLMs by using fewer bits to represent the model's parameters. This makes the model smaller and can sometimes make it faster, but it can also lead to reduced accuracy, especially at the lowest bit widths. Think of it like using a lower resolution image: you lose some detail for a smaller file size.
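The trade-off can be seen in a toy example. The sketch below applies simple symmetric quantization with a single shared scale to a handful of made-up weights and measures the round-trip error at 8 and 4 bits. It is an illustration of the general idea only and is not the block-wise scheme used by formats such as Q8_0 or Q4_0.

```python
def quantize(values: list[float], bits: int = 8) -> tuple[list[int], float]:
    """Map floats to signed integers that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1          # 127 for 8 bits, 7 for 4 bits
    peak = max(abs(v) for v in values)
    scale = peak / qmax if peak else 1.0
    return [round(v / scale) for v in values], scale


def dequantize(codes: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


def max_error(values: list[float], bits: int) -> float:
    """Largest absolute difference after a quantize/dequantize round trip."""
    codes, scale = quantize(values, bits)
    restored = dequantize(codes, scale)
    return max(abs(a - b) for a, b in zip(values, restored))


if __name__ == "__main__":
    weights = [0.12, -0.5, 0.33, 0.9, -0.77]   # made-up example values
    for bits in (8, 4):
        print(f"{bits}-bit: max round-trip error {max_error(weights, bits):.4f}")
```

Fewer bits mean fewer representable levels, so the 4-bit round trip loses noticeably more detail than the 8-bit one while storing half as much data per weight.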

Q: Can I download and run Llama3 70B locally on my Apple M1 Max?

A: Yes, provided your machine has enough memory for a quantized version of the model. The Q4_K_M figures in this article were measured on a 32-core M1 Max, so expect around 4 tokens/second for generation.

Q: What are the best alternatives to Llama3 70B for local deployment on the Apple M1 Max?

A: Llama2 7B is a solid choice for local deployment on the M1 Max. You can find pre-trained models and instructions online for running it locally, and it offers excellent performance. Other popular options include Mistral AI's models and smaller, more efficient models available through Hugging Face.

Keywords:

Llama3, 70B, Apple M1 Max, LLM, performance, token generation, quantization, GPU cores, processing speed, generation speed, use cases, practical recommendations, workarounds, fine-tuning, cloud-based solutions, FAQ, alternatives, Mistral AI, Hugging Face.