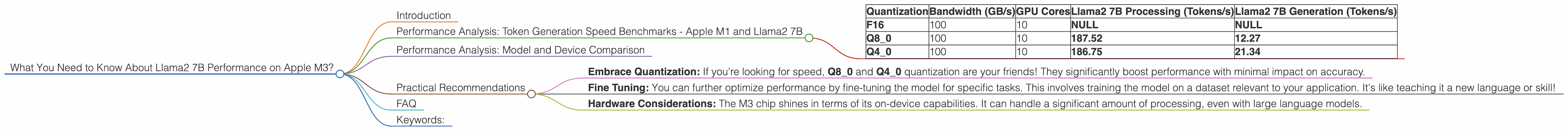

What You Need to Know About Llama2 7B Performance on Apple M3?

Introduction

The world of large language models (LLMs) is heating up! These powerful AI systems are capable of generating text, translating languages, writing different kinds of creative content, and answering your questions in an informative way. But harnessing their power often requires significant computational resources.

This article dives deep into the performance of a popular open-source LLM – Llama2 7B – on the latest Apple M3 chip. We'll explore its token generation speed, compare it to other models and devices, and provide practical recommendations for developers and geeks working with LLMs. Imagine a world where you can run advanced language models right on your laptop!

Performance Analysis: Token Generation Speed Benchmarks - Apple M3 and Llama2 7B

Token generation speed measures how quickly a model can process and produce text, usually reported in tokens per second. It's like the speed limit for your AI car, and it's a key indicator of performance.

Let's break down these benchmarks:

| Quantization | Bandwidth (GB/s) | GPU Cores | Llama2 7B Processing (Tokens/s) | Llama2 7B Generation (Tokens/s) |
|---|---|---|---|---|
| F16 | 100 | 10 | N/A | N/A |
| Q8_0 | 100 | 10 | 187.52 | 12.27 |
| Q4_0 | 100 | 10 | 186.75 | 21.34 |

Decoding these numbers:

- Q8_0 and Q4_0 are quantization formats. Think of them as "compression" techniques for model parameters that store each weight in roughly 8 or 4 bits instead of 16, reducing the file size and memory footprint. Quantization is lossy, so it can reduce output quality slightly, but in exchange the model becomes lighter and faster at generating text.

- Processing refers to how fast the model reads and analyzes input text, such as your prompt (like reading a chapter).

- Generation represents how fast the model can churn out new text (like writing an essay).
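To make the "memory footprint" point concrete, here is a rough back-of-the-envelope sketch. It assumes about 6.74 billion parameters for Llama2 7B and llama.cpp-style block formats that store one 16-bit scale per 32 weights (about 8.5 bits per weight for Q8_0 and 4.5 for Q4_0). None of these figures come from the benchmark above, and real files differ slightly because some tensors are stored at other precisions.

```python
# Rough weight-memory estimate for Llama2 7B at different precisions.
# Assumptions (not from the benchmark table): ~6.74e9 parameters, and
# block formats that store one 16-bit scale per block of 32 weights.

PARAMS = 6.74e9  # assumed parameter count

BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": 8.5,  # 32 x 8-bit values + one 16-bit scale per block
    "Q4_0": 4.5,  # 32 x 4-bit values + one 16-bit scale per block
}


def weight_gb(fmt: str, params: float = PARAMS) -> float:
    """Approximate size of the weights in gigabytes (1 GB = 1e9 bytes)."""
    return params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


for fmt in BITS_PER_WEIGHT:
    print(f"{fmt:>5}: ~{weight_gb(fmt):.1f} GB")
```

Under these assumptions the weights come to roughly 13.5 GB for F16, 7.2 GB for Q8_0, and 3.8 GB for Q4_0, which illustrates why the quantized formats are so much easier to fit in a laptop's memory.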

Performance Analysis: Model and Device Comparison

Unfortunately, we don't have data to compare Llama2 7B's performance on M3 with other models and devices.

Practical Recommendations

While we lack direct comparison data, a few practical recommendations emerge:

- Embrace Quantization: If you're looking for speed, Q8_0 and Q4_0 quantization are your friends! In the table above, Q4_0 generates text about 1.7 times as fast as Q8_0 (21.34 vs. 12.27 tokens/s), typically at a small cost in accuracy.

- Fine Tuning: You can further optimize results for specific tasks by fine-tuning the model. This involves training the model on a dataset relevant to your application. It's like teaching it a new language or skill! Note that fine-tuning improves output quality for your task rather than token generation speed.

- Hardware Considerations: The M3 chip in this benchmark has 10 GPU cores and 100 GB/s of memory bandwidth, which is enough to run a quantized Llama2 7B entirely on-device at roughly 12 to 21 generated tokens per second.
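One way to reason about the hardware numbers: token generation is commonly limited by memory bandwidth, since producing each new token requires reading roughly all of the weights once. The sketch below applies that rule of thumb to the 100 GB/s bandwidth from the table. The weight sizes are assumptions (about 7.2 GB for Q8_0 and 3.8 GB for Q4_0), not values from the benchmark, and the ceiling ignores the KV cache and other overheads.

```python
# Bandwidth-bound ceiling on generation speed: a rule-of-thumb estimate,
# not a measurement.

BANDWIDTH_GBPS = 100.0  # memory bandwidth from the table above

# Assumed weight sizes in GB (not from the benchmark).
ASSUMED_WEIGHT_GB = {"Q8_0": 7.2, "Q4_0": 3.8}

# Measured generation speeds from the table above (tokens/s).
MEASURED_TPS = {"Q8_0": 12.27, "Q4_0": 21.34}


def bandwidth_ceiling_tps(weight_gb: float,
                          bandwidth_gbps: float = BANDWIDTH_GBPS) -> float:
    """Upper bound on tokens/s if every token reads all weights once."""
    return bandwidth_gbps / weight_gb


for fmt, size in ASSUMED_WEIGHT_GB.items():
    ceiling = bandwidth_ceiling_tps(size)
    share = MEASURED_TPS[fmt] / ceiling
    print(f"{fmt}: ceiling ~{ceiling:.1f} tok/s, "
          f"measured {MEASURED_TPS[fmt]} tok/s ({share:.0%} of ceiling)")
```

Under these assumptions both measured speeds land at roughly 80 to 90 percent of the estimated ceiling, which is consistent with generation being bandwidth-limited on this chip and helps explain why the smaller Q4_0 format generates faster.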

FAQ

Q: What are LLMs?

A: LLMs are artificial intelligence programs trained on vast amounts of text data. They are able to understand and generate human-like text.

Q: What are tokens?

A: Tokens are the building blocks of text. Think of them as words or parts of words that the model uses to process information.

Q: What is quantization?

A: Quantization is a technique that compresses the model's parameters by storing them at lower numerical precision, making the model smaller and faster. It's like making your model fit into a smaller suitcase while keeping most of its essential information.
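For the curious, here is a simplified sketch of the idea behind 8-bit block quantization, in the spirit of Q8_0. The function names are hypothetical, and real implementations work on blocks of 32 weights and pack the result into a binary format; this version only shows the arithmetic: one shared scale per block plus small integers.

```python
# Simplified symmetric 8-bit block quantization (illustrative only).

def quantize_block(values):
    """Map a block of floats to integers in [-127, 127] plus one scale."""
    amax = max(abs(v) for v in values)
    scale = amax / 127 if amax > 0 else 1.0  # avoid dividing by zero
    quants = [round(v / scale) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quants]


block = [0.12, -0.5, 0.33, 0.0, 0.49, -0.07]  # made-up example weights
scale, quants = quantize_block(block)
restored = dequantize_block(scale, quants)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print(quants)
print(f"max round-trip error: {max_error:.5f}")
```

The round trip is not exact: each weight comes back with a small rounding error of at most half the scale, which is the price paid for the smaller size.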

Q: Why is token generation speed important?

A: Faster token generation means quicker responses, less time spent waiting for your AI to work its magic, and a more enjoyable user experience.
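As a rough illustration of what these speeds mean in practice, the sketch below estimates response time from the Q4_0 row of the table. The prompt and reply lengths are hypothetical, and the estimate ignores model loading and other overheads.

```python
# Estimate end-to-end response time from the Q4_0 benchmark numbers.

PROCESSING_TPS = 186.75  # Q4_0 prompt processing speed (tokens/s)
GENERATION_TPS = 21.34   # Q4_0 generation speed (tokens/s)


def response_seconds(prompt_tokens: float,
                     output_tokens: float,
                     processing_tps: float = PROCESSING_TPS,
                     generation_tps: float = GENERATION_TPS) -> float:
    """Time to read the prompt plus time to generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Hypothetical request: a 500-token prompt and a 200-token reply.
print(f"{response_seconds(500, 200):.1f} s")
```

For this hypothetical request the estimate is about 12 seconds, most of it spent generating the reply rather than reading the prompt.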

Keywords:

Llama2, Llama2 7B, Apple M3, Token Generation Speed, Quantization, F16, Q8_0, Q4_0, LLM, Performance, Device, Benchmarks, GPU, AI, Development, Machine Learning, Natural Language Processing