What You Need to Know About Llama2 7B Performance on Apple M3 Pro

Introduction

The world of large language models (LLMs) is rapidly evolving, with new models and optimizations emerging frequently. One of the most exciting developments is running LLMs locally, directly on your device. This opens up possibilities for faster response times, greater privacy, and reduced reliance on cloud infrastructure.

This article dives deep into the performance of Llama2 7B, a powerful open-source LLM, on the Apple M3 Pro chip. We'll look at prompt processing and token generation speeds, compare quantization levels and GPU core counts, and offer practical recommendations for use cases.

Let's get started!

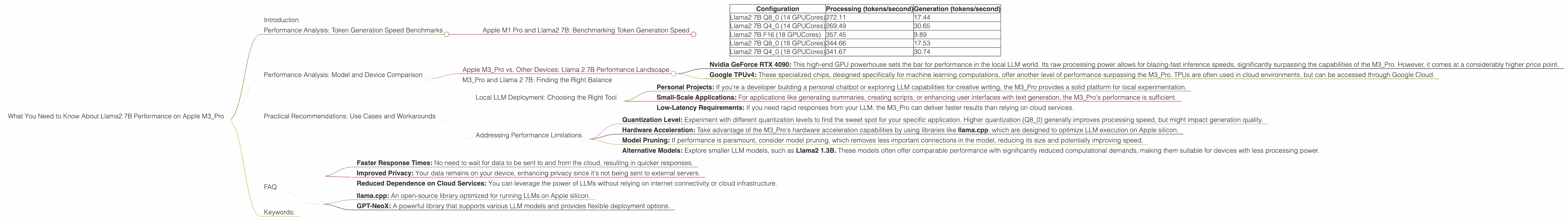

Performance Analysis: Token Generation Speed Benchmarks

Apple M3 Pro and Llama2 7B: Benchmarking Token Generation Speed

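The Apple M3 Pro delivers impressive performance, making it a compelling option for running LLMs locally. It ships with either a 14-core or an 18-core GPU, and the benchmark data below covers both variants. For each quantization level it reports two speeds: prompt processing (how fast the model reads your input) and generation (how fast it produces new tokens):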

| Llama2 7B format | GPU cores | Prompt processing (tokens/s) | Generation (tokens/s) |
|---|---|---|---|
| Q8_0 | 14 | 272.11 | 17.44 |
| Q4_0 | 14 | 269.49 | 30.65 |
| F16 | 18 | 357.45 | 9.89 |
| Q8_0 | 18 | 344.66 | 17.53 |
| Q4_0 | 18 | 341.67 | 30.74 |
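To see what the table means for a single request, the sketch below turns the two throughput columns into a rough wall-clock estimate: prompt tokens divided by the processing speed, plus output tokens divided by the generation speed. It assumes both speeds stay constant for the whole request (in practice generation tends to slow somewhat as the context grows), and the 1,000-token prompt with a 300-token reply is a hypothetical example, not part of the benchmark.

```python
# Measured speeds from the table above: (prompt processing t/s, generation t/s),
# keyed by (quantization format, GPU cores).
BENCHMARKS = {
    ("Q8_0", 14): (272.11, 17.44),
    ("Q4_0", 14): (269.49, 30.65),
    ("F16", 18): (357.45, 9.89),
    ("Q8_0", 18): (344.66, 17.53),
    ("Q4_0", 18): (341.67, 30.74),
}


def estimate_request_seconds(quant: str, gpu_cores: int,
                             prompt_tokens: int, output_tokens: int) -> float:
    """Rough wall-clock time for one request, assuming the measured
    throughputs hold constant for the entire prompt and reply."""
    pp_speed, tg_speed = BENCHMARKS[(quant, gpu_cores)]
    return prompt_tokens / pp_speed + output_tokens / tg_speed


if __name__ == "__main__":
    # Hypothetical request: a 1,000-token prompt and a 300-token answer.
    for quant, cores in BENCHMARKS:
        seconds = estimate_request_seconds(quant, cores, 1000, 300)
        print(f"{quant:5s} on {cores} GPU cores: ~{seconds:.1f} s")
```

With these inputs, generation accounts for most of the time in every configuration, which is why the generation column matters most for chat-style use.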

Here's a quick breakdown of the important terms:

- Quantization: A technique to reduce the precision of model weights, leading to smaller file sizes and potentially faster inference. Think of it like using less ink to write a detailed book; you might lose some fine details, but you save space and make it lighter to carry.

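- Q8_0: llama.cpp's 8-bit format. Weights are stored as 8-bit integers in blocks of 32 that share one 16-bit scale factor, which works out to about 8.5 bits per weight.

- Q4_0: The 4-bit counterpart. Each weight is a 4-bit integer, again with one shared 16-bit scale per block of 32, for about 4.5 bits per weight.

- F16: Half-precision floating point, using 16 bits per weight. This is the unquantized baseline.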
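The block-based idea behind Q8_0 and Q4_0 can be sketched in a few lines. This is a simplified illustration, assuming symmetric rounding to a shared per-block scale; it is not llama.cpp's exact code or bit layout, and the `sin` values are a hypothetical stand-in for real model weights.

```python
import math
from typing import List, Tuple

BLOCK_SIZE = 32  # Q8_0 and Q4_0 both group weights into blocks of 32


def quantize_block_q8(block: List[float]) -> Tuple[float, List[int]]:
    """Q8_0-like: one shared scale per block, values stored as ints in [-127, 127]."""
    amax = max(abs(x) for x in block)
    scale = amax / 127 if amax > 0 else 1.0
    return scale, [max(-127, min(127, round(x / scale))) for x in block]


def quantize_block_q4(block: List[float]) -> Tuple[float, List[int]]:
    """Q4_0-like: one shared scale per block, values limited to the 4-bit range [-8, 7]."""
    amax = max(abs(x) for x in block)
    scale = amax / 7 if amax > 0 else 1.0
    return scale, [max(-8, min(7, round(x / scale))) for x in block]


def dequantize(scale: float, q: List[int]) -> List[float]:
    """Recover approximate weights by multiplying back by the shared scale."""
    return [scale * v for v in q]


def bits_per_weight(bits: int, scale_bits: int = 16) -> float:
    """Average storage per weight when BLOCK_SIZE weights share one 16-bit scale."""
    return (BLOCK_SIZE * bits + scale_bits) / BLOCK_SIZE


def max_error(block: List[float], scale: float, q: List[int]) -> float:
    """Largest absolute difference between original and reconstructed weights."""
    return max(abs(a - b) for a, b in zip(block, dequantize(scale, q)))


if __name__ == "__main__":
    weights = [math.sin(i) for i in range(BLOCK_SIZE)]  # stand-in for real weights
    for name, fn, bits in [("Q8_0-like", quantize_block_q8, 8),
                           ("Q4_0-like", quantize_block_q4, 4)]:
        scale, q = fn(weights)
        print(f"{name}: {bits_per_weight(bits)} bits/weight, "
              f"max error {max_error(weights, scale, q):.4f}")
```

The 4-bit version uses roughly half the storage of the 8-bit one but reconstructs each weight less precisely, which is the speed-versus-quality trade-off discussed below.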

Key Observations:

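- Q8_0 and Q4_0 process prompts at almost the same speed: Prompt processing (evaluating your input before the first output token) differs by only about 1% between the two formats at each core count (272.11 vs. 269.49 tokens/s on 14 cores, 344.66 vs. 341.67 tokens/s on 18 cores), and unquantized F16 is slightly faster still at 357.45 tokens/s. Prompt processing handles many tokens at once, so it is limited mainly by GPU compute rather than by the size of the weights.

- Q4_0 leads in generation: Producing output one token at a time runs at about 30.7 tokens/s with Q4_0, about 17.5 tokens/s with Q8_0, and 9.89 tokens/s with F16. Each new token requires reading all of the model's weights from memory, so smaller weights translate almost directly into faster generation. The trade-off is quality: Q4_0 discards more precision than Q8_0, which can degrade output.

- GPU cores mainly affect prompt processing: Going from 14 to 18 GPU cores raises prompt processing by about 27% (272.11 → 344.66 tokens/s for Q8_0), close to the 29% increase in core count, but generation barely moves (17.44 → 17.53 tokens/s). More cores are like more workers: they speed up the compute-heavy prompt stage, but not a generation stage that is waiting on memory.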
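The memory-bound explanation for generation can be checked with back-of-the-envelope arithmetic: if every token reads all weights once, tokens per second cannot exceed memory bandwidth divided by model size. The sketch below assumes about 6.74 billion parameters for Llama 2 7B and 150 GB/s of memory bandwidth for the M3 Pro (Apple's published figure); neither number comes from the benchmark, and the estimate ignores KV-cache traffic and other overheads.

```python
# Assumptions (not part of the benchmark data):
#   - about 6.74e9 parameters in Llama 2 7B
#   - 150 GB/s unified-memory bandwidth for the M3 Pro
#   - each generated token reads every weight exactly once
PARAMS = 6.74e9
BANDWIDTH_GBPS = 150.0
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Measured generation speeds (tokens/s) from the 18-core rows of the table
MEASURED_TG_18 = {"F16": 9.89, "Q8_0": 17.53, "Q4_0": 30.74}

# (14-core, 18-core) speeds from the table, used to compare core scaling
PP = {"Q8_0": (272.11, 344.66), "Q4_0": (269.49, 341.67)}
TG = {"Q8_0": (17.44, 17.53), "Q4_0": (30.65, 30.74)}


def model_gigabytes(quant: str) -> float:
    """Approximate weight size in GB for the given format."""
    return PARAMS * BITS_PER_WEIGHT[quant] / 8 / 1e9


def generation_ceiling(quant: str) -> float:
    """Upper bound on tokens/s if generation were purely bandwidth-limited."""
    return BANDWIDTH_GBPS / model_gigabytes(quant)


if __name__ == "__main__":
    for quant, measured in MEASURED_TG_18.items():
        print(f"{quant:5s} {model_gigabytes(quant):5.2f} GB  "
              f"ceiling ~{generation_ceiling(quant):4.1f} t/s  measured {measured} t/s")
    print(f"GPU core ratio 18/14 = {18 / 14:.3f}")
    for quant in PP:
        pp14, pp18 = PP[quant]
        tg14, tg18 = TG[quant]
        print(f"{quant}: processing x{pp18 / pp14:.3f}, generation x{tg18 / tg14:.3f}")
```

Under these assumptions each measured generation speed sits below its bandwidth ceiling and shrinks roughly in proportion to model size, while prompt processing scales with core count and generation does not.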

Performance Analysis: Model and Device Comparison

Apple M3 Pro vs. Other Devices: Llama 2 7B Performance Landscape

While the Apple M3 Pro delivers solid performance with Llama2 7B, it's important to understand how it compares to other hardware commonly used to run LLMs.

- Nvidia GeForce RTX 4090: This high-end GPU sets the bar for consumer hardware in the local LLM world. Its raw processing power allows for blazing-fast inference, well beyond what the M3 Pro can deliver. However, it comes at a considerably higher price point.

- Google TPU v4: These accelerators, designed specifically for machine learning workloads, also outperform the M3 Pro. However, they are not sold for local use; you can access them only through Google Cloud.

M3 Pro and Llama 2 7B: Finding the Right Balance

The Apple M3 Pro offers a compelling middle ground, balancing performance and affordability. While it cannot match the raw power of high-end GPUs or specialized accelerators, its generation speeds of roughly 17 to 31 tokens per second with Q8_0 and Q4_0 are adequate for many LLM applications, especially those serving individual users or small teams.

Practical Recommendations: Use Cases and Workarounds

Local LLM Deployment: Choosing the Right Tool

The Apple M3 Pro can be a good choice for local LLM deployment in several scenarios:

- Personal Projects: If you're a developer building a personal chatbot or exploring LLM capabilities for creative writing, the M3 Pro provides a solid platform for local experimentation.

- Small-Scale Applications: For tasks like generating summaries, drafting scripts, or adding text generation to user interfaces, the M3 Pro's performance is sufficient.

- Low-Latency Requirements: Running locally removes the network round trip to a cloud service, so responses can start immediately. For long outputs, however, a fast cloud deployment may still finish sooner.

Addressing Performance Limitations

While the M3 Pro performs well with Llama2 7B, there are ways to optimize it and work around its limitations:

- Quantization Level: Experiment with different quantization levels to find the sweet spot for your application. In the benchmarks above, Q4_0 generates text about 75% faster than Q8_0 (30.74 vs. 17.53 tokens/s on 18 cores), while Q8_0 retains more precision and therefore tends to produce higher-quality output.

- Hardware Acceleration: Take advantage of the M3 Pro's GPU by using runtimes like llama.cpp, whose Metal backend is designed to run LLMs efficiently on Apple silicon.

- Model Pruning: If performance is paramount, consider model pruning, which removes the least important weights from the model, reducing its size and potentially improving speed.

- Alternative Models: Explore smaller models. Llama 2's smallest official size is 7B, but models in the 1B to 3B parameter range need far less memory and compute and can be good enough for simpler tasks, making them suitable for devices with less processing power.
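Magnitude pruning, one common form of the pruning idea above, can be sketched as follows: rank weights by absolute value and zero out the smallest fraction. This is a minimal illustration on a plain list of hypothetical weights, assuming unstructured pruning of a single tensor; real pruning pipelines usually fine-tune afterward to recover quality.

```python
from typing import List


def magnitude_prune(weights: List[float], sparsity: float) -> List[float]:
    """Zero out the `sparsity` fraction of weights with the smallest magnitude.

    Unstructured magnitude pruning: exactly int(len(weights) * sparsity)
    entries are set to 0.0; the rest are left unchanged.
    """
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    k = int(len(weights) * sparsity)  # number of weights to remove
    # Indices ordered from least to most important (smallest |w| first)
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:k]:
        pruned[i] = 0.0
    return pruned


if __name__ == "__main__":
    w = [0.8, -0.05, 0.3, -0.9, 0.01, 0.4, -0.2, 0.07]  # hypothetical weights
    print(magnitude_prune(w, 0.5))
```

Zeroing weights in a dense tensor does not by itself shrink the file or speed up dense matrix multiplication; gains depend on the runtime exploiting sparsity or on structured pruning that removes whole rows, heads, or layers.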

FAQ

Q: What is quantization? A: Quantization is a technique used to reduce the precision of model weights, typically by representing them using fewer bits. Think of it like using fewer colors in a painting to reduce the file size. While some details might be lost, it allows for faster processing and smaller model sizes.

Q: What are the benefits of running LLMs locally? A: Running LLMs locally offers several advantages:

- Faster Response Times: No need to wait for data to be sent to and from the cloud, resulting in quicker responses.

- Improved Privacy: Your data remains on your device, enhancing privacy since it's not being sent to external servers.

- Reduced Dependence on Cloud Services: You can leverage the power of LLMs without relying on internet connectivity or cloud infrastructure.

Q: Is the Apple M3 Pro suitable for all LLM applications? A: The M3 Pro handles many applications well, but it may be insufficient for large, complex models such as Llama 2 70B, whose weights may not fit in its unified memory or would run slowly. For high-demand applications, consider dedicated GPUs or cloud accelerators such as TPUs.

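Q: What are some recommended tools for running LLMs on the Apple M3 Pro? A: Some popular tools for LLM deployment include:

- llama.cpp: An open-source C/C++ inference library with a Metal backend for Apple silicon. The Q8_0 and Q4_0 formats discussed above are its quantization types.

- GPT-NeoX: EleutherAI's library for training and running large models. It is built around multi-GPU NVIDIA setups rather than Apple silicon, so it suits cloud or workstation deployments better than local use on an M3 Pro.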
