What You Need to Know About Llama2 7B Performance on Apple M3 Max

Introduction

The world of large language models (LLMs) is evolving faster than ever, with new models and hardware popping up like mushrooms after a spring rain. If you're a developer or a tech enthusiast who's interested in running LLMs locally, you might be wondering: "What's the best way to make these powerful language models sing on my machine?"

This article dives deep into the performance of Llama2 7B on the Apple M3 Max, a powerhouse chip with impressive capabilities. We'll explore how this combination performs with different quantization levels, analyze speed benchmarks for token generation, and discuss practical recommendations for use cases.

Think of an LLM as a very capable assistant you can chat with, ask questions, and even have write code for you. That capability comes at a cost: it needs powerful hardware to run. This article will help you understand how well the M3 Max runs Llama2 7B and how you can make the most of it.

Let's dive in!

Performance Analysis: Token Generation Speed Benchmarks: Apple M3 Max and Llama2 7B

Token generation speed is a crucial metric for evaluating LLM performance. It measures how fast the model can process text and generate new tokens (words or sub-words).

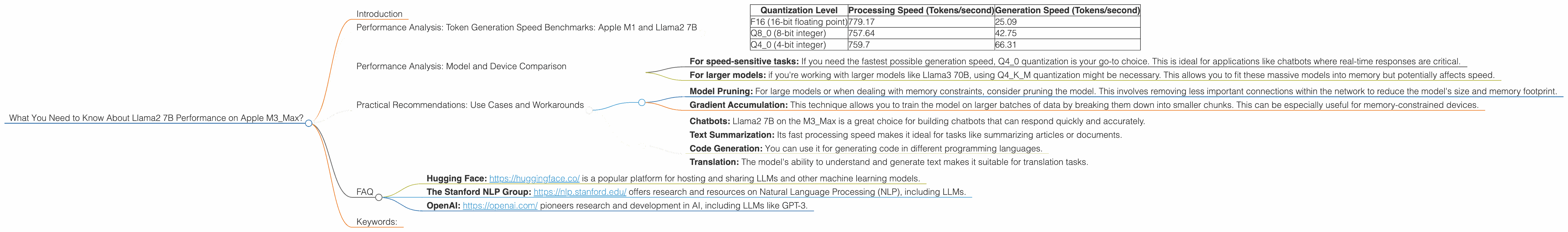

Here are the token generation speed benchmarks for Llama2 7B on the Apple M3 Max, broken down by quantization level:

| Quantization Level | Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|
| F16 (16-bit floating point) | 779.17 | 25.09 |
| Q8_0 (8-bit integer) | 757.64 | 42.75 |
| Q4_0 (4-bit integer) | 759.7 | 66.31 |

What do these numbers tell us?

- F16 is fastest for processing: The F16 format shows the highest processing speed at 779.17 tokens/second. The margin is small, though: all three levels land within about 3% of each other (757.64 to 779.17 tokens/second), so the choice of quantization barely changes how quickly the model reads a prompt.

- Q4_0 is fastest for generation: The Q4_0 quantization level takes the crown for generation speed, reaching 66.31 tokens/second. That is about 2.6 times the F16 rate of 25.09 tokens/second, and well ahead of Q8_0 at 42.75 tokens/second.

Think of it this way: Imagine the model is a super-fast typist. The processing speed is how quickly the typist can read the text, while the generation speed is how quickly they can type the response.
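To make the typist analogy concrete, here is a minimal sketch that turns the two speeds into a rough response time. It assumes total time is simply reading time plus typing time and ignores model loading and other overhead. The `estimate_latency` helper and the 500-token prompt with a 200-token reply are hypothetical, chosen only for illustration; the throughput figures come from the table above.

```python
# Throughput figures (tokens/second) copied from the benchmark table.
BENCHMARKS = {
    "F16": {"processing": 779.17, "generation": 25.09},
    "Q8_0": {"processing": 757.64, "generation": 42.75},
    "Q4_0": {"processing": 759.7, "generation": 66.31},
}


def estimate_latency(quant: str, prompt_tokens: int, output_tokens: int) -> float:
    """Return a rough end-to-end time in seconds for one request."""
    speeds = BENCHMARKS[quant]
    read_time = prompt_tokens / speeds["processing"]   # time to ingest the prompt
    write_time = output_tokens / speeds["generation"]  # time to emit the reply
    return read_time + write_time


# Hypothetical workload: a 500-token prompt and a 200-token reply.
for quant in BENCHMARKS:
    seconds = estimate_latency(quant, prompt_tokens=500, output_tokens=200)
    print(f"{quant}: {seconds:.2f} s")
```

For a workload like this one, the typing term dominates the total, which is why generation speed matters more than processing speed for chat-style use.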

Key Takeaway: The M3 Max delivers impressive token generation speeds for Llama2 7B, especially with Q4_0 quantization.
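One way to put the takeaway in numbers is to express each generation speed relative to F16. The short sketch below does that with the figures from the table; the `speedup_over` helper is a hypothetical name used only for this illustration.

```python
# Generation speeds (tokens/second) from the benchmark table.
GENERATION_TPS = {"F16": 25.09, "Q8_0": 42.75, "Q4_0": 66.31}


def speedup_over(baseline: str, speeds: dict) -> dict:
    """Return each entry's speed divided by the baseline entry's speed."""
    base = speeds[baseline]
    return {name: tps / base for name, tps in speeds.items()}


for name, ratio in speedup_over("F16", GENERATION_TPS).items():
    print(f"{name}: {ratio:.2f}x")
```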

Performance Analysis: Model and Device Comparison

The benchmark data in this article covers only Llama2 7B on the M3 Max. Results for other LLMs and other devices are not included, so no cross-model or cross-device comparison is made here.

Practical Recommendations: Use Cases and Workarounds

Choosing the Right Quantization Level:

- For speed-sensitive tasks: If you need the fastest possible generation speed, Q4_0 quantization is your go-to choice. This is ideal for applications like chatbots where real-time responses are critical.

- For larger models: If you're working with larger models like Llama3 70B, using Q4_K_M quantization might be necessary. This allows you to fit these massive models into memory, but it can affect speed.
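A back-of-the-envelope estimate shows why quantization decides whether a model fits in memory. The sketch below is an approximation under stated assumptions: parameter counts are the nominal 7 billion and 70 billion, the bits-per-weight figures for Q8_0 and Q4_0 (8.5 and 4.5) reflect llama.cpp's block layout of 32 weights plus one 16-bit scale as I understand it, and only the weights are counted, not the context cache or runtime overhead. Q4_K_M is left out because its mixed layout does not reduce to a single simple figure. The `weight_size_gb` helper is hypothetical.

```python
# Assumed bits per weight, including per-block scale overhead for Q8_0 and Q4_0.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weight_size_gb(n_params: float, quant: str) -> float:
    """Approximate size of the weights alone, in gigabytes (10**9 bytes)."""
    return n_params * BITS_PER_WEIGHT[quant] / 8 / 1e9


# Nominal parameter counts; real checkpoints differ slightly.
for label, n_params in [("7B", 7e9), ("70B", 70e9)]:
    for quant in BITS_PER_WEIGHT:
        print(f"{label} {quant}: ~{weight_size_gb(n_params, quant):.1f} GB")
```

Comparing the printed sizes with your machine's unified memory gives a quick first check before downloading a model.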

Workarounds for Memory Limitations:

- Model Pruning: For large models or when dealing with memory constraints, consider pruning the model. This involves removing less important connections within the network to reduce the model's size and memory footprint.

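- Gradient Accumulation: This technique applies to training or fine-tuning rather than to inference. It lets you train with a larger effective batch size by splitting each batch into smaller chunks and accumulating their gradients before updating the weights, which is especially useful on memory-constrained devices.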
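As an illustration of the pruning idea, here is a minimal sketch of magnitude pruning on a toy list of weights. It assumes "less important" means "smallest absolute value", which is one common heuristic and not necessarily what any particular tool does. The `magnitude_prune` function and the toy values are hypothetical.

```python
def magnitude_prune(weights: list[float], sparsity: float) -> list[float]:
    """Zero out the smallest-magnitude fraction of weights given by `sparsity`."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_drop = int(len(weights) * sparsity)
    # Indices ordered from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:n_drop])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


toy = [0.8, -0.05, 0.3, 0.01, -0.6, 0.02]
print(magnitude_prune(toy, sparsity=0.5))
```

Note that zeroed weights only save memory if the result is stored in a sparse or otherwise compacted form; a dense array full of zeros is the same size as before.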

Use Cases:

- Chatbots: Llama2 7B on the M3 Max is a great choice for building chatbots that can respond quickly and accurately.

- Text Summarization: Its fast processing speed makes it ideal for tasks like summarizing articles or documents.

- Code Generation: You can use it for generating code in different programming languages.

- Translation: The model's ability to understand and generate text makes it suitable for translation tasks.

FAQ

Q: What is quantization, and how does it affect performance?

Quantization is a technique used to reduce the size of an LLM by converting its weights (parameters) from higher-precision formats like 32-bit floating point to lower-precision formats like 8-bit integers. While it can significantly reduce memory requirements, it can also affect model accuracy and performance.
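The idea can be sketched in a few lines. The example below is a simplified illustration, not the scheme behind Q8_0 or Q4_0: it uses a single scale for the whole list, whereas real formats typically work on small blocks of weights. The function names and sample values are hypothetical.

```python
def quantize_int8(values: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    return [round(v / scale) for v in values], scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quantized]


weights = [0.42, -1.27, 0.003, 0.9]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print("quantized:", quantized)
print("max round-trip error:", max_error)
```

Each value now needs one byte instead of four, and the round-trip error shows the precision given up in exchange; very small weights such as 0.003 can collapse to zero.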

Q: Can I run Llama2 7B on my laptop or desktop?

The ability to run Llama2 7B locally depends on your hardware. The M3 Max is a high-end chip designed for demanding tasks. If you have a newer, powerful computer with dedicated graphics processing units (GPUs), chances are you can run Llama2 7B locally. However, it's recommended to refer to specific device specifications and model requirements before attempting to run large models on lower-powered machines.

Q: Where can I find more information about LLMs?

Several excellent resources are available for learning more about LLMs:

- Hugging Face: https://huggingface.co/ is a popular platform for hosting and sharing LLMs and other machine learning models.

- The Stanford NLP Group: https://nlp.stanford.edu/ offers research and resources on Natural Language Processing (NLP), including LLMs.

- OpenAI: https://openai.com/ pioneers research and development in AI, including LLMs like GPT-3.

Keywords:

Llama2, Apple M3 Max, LLM, performance, token generation, benchmark, quantization, F16, Q8_0, Q4_0, processing speed, generation speed, use cases, chatbots, text summarization, code generation, translation, memory limitations, pruning, gradient accumulation, Hugging Face, Stanford NLP Group, OpenAI, NLP, AI.