What You Need to Know About Llama2 7B Performance on Apple M2 Ultra

Introduction

The world of artificial intelligence is buzzing with excitement over Large Language Models (LLMs) like Llama2 7B. But how do these powerful models actually perform on real-world hardware?

This deep dive looks at Llama2 7B performance on the Apple M2 Ultra, one of the hottest chips around. We'll explore token generation speed benchmarks, compare performance across different quantization levels, and provide practical recommendations for developers.

So, buckle up, grab a coffee, and let's see how local LLMs shine on the M2 Ultra!

Performance Analysis: Token Generation Speed Benchmarks

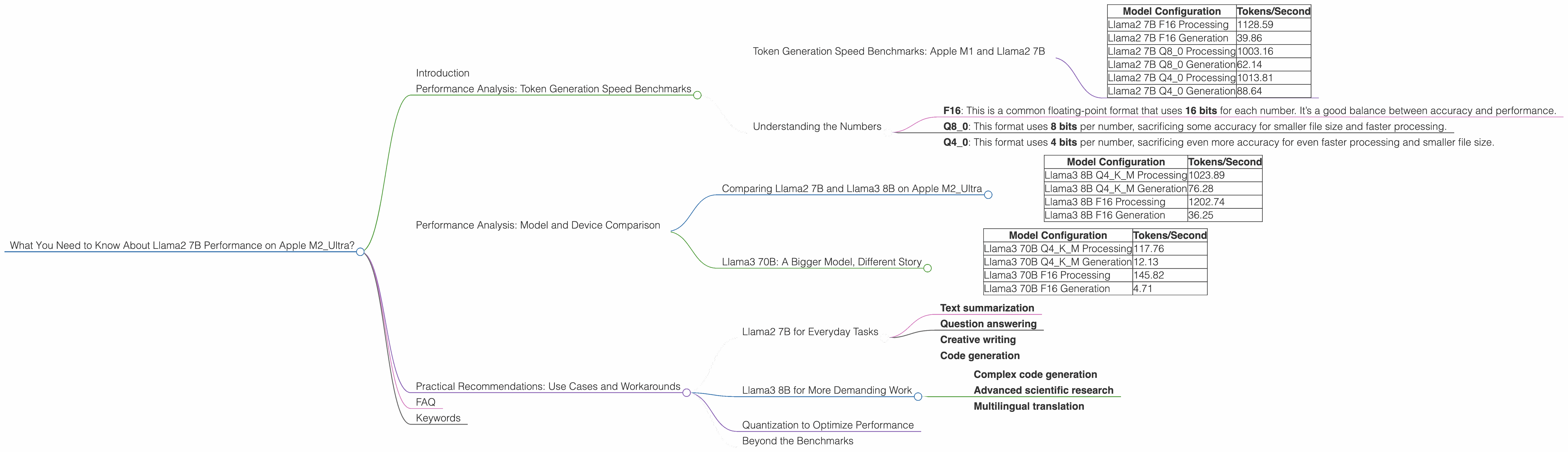

Token Generation Speed Benchmarks: Apple M2 Ultra and Llama2 7B

The first thing we want to look at is token generation speed, measured in tokens per second. Think of it like how fast a car can go from 0 to 60 mph: the more tokens per second, the quicker the model can respond to your requests. The benchmarks report two numbers for each configuration: processing (how fast the model reads your input prompt) and generation (how fast it writes new output tokens).

| Model Configuration | Tokens/Second |
|---|---|
| Llama2 7B F16 Processing | 1128.59 |
| Llama2 7B F16 Generation | 39.86 |
| Llama2 7B Q8_0 Processing | 1003.16 |
| Llama2 7B Q8_0 Generation | 62.14 |
| Llama2 7B Q4_0 Processing | 1013.81 |
| Llama2 7B Q4_0 Generation | 88.64 |

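As you can see, the M2 Ultra, with 800GB/s of memory bandwidth and 60 GPU cores, delivers impressive results. Prompt processing is fastest at F16, reaching 1128.59 tokens/second, so a prompt of about 1,000 tokens is read in under a second. Generation tells a different story: it is far slower, and it speeds up as the weights get smaller, from 39.86 tokens/second at F16 to 62.14 at Q8_0 and 88.64 at Q4_0. Generation speed is what determines how quickly a response appears, so it is the number that matters most for real-time applications.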
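Why does generation speed up as the weights shrink? While generating, the model has to read essentially all of its weights from memory for every new token, so memory bandwidth puts a rough ceiling on generation speed: bandwidth divided by the size of the weights. The Python sketch below checks the measured numbers against that ceiling. It assumes roughly 6.74 billion parameters for Llama2 7B and the nominal bits per weight of the llama.cpp formats with these names; neither figure comes from the benchmark itself. It also ignores KV-cache traffic and other overheads, so treat the ceiling as optimistic.

```python
# Rough, bandwidth-bound ceiling on generation speed.
# ASSUMPTIONS (not from the benchmark source): ~6.74e9 parameters for Llama2 7B
# and the nominal bits per weight of the F16 / Q8_0 / Q4_0 formats.
# KV-cache reads and other overheads are ignored, so real speeds will be lower.

BANDWIDTH_GB_S = 800.0  # M2 Ultra memory bandwidth quoted in the article
PARAMS = 6.74e9         # assumed Llama2 7B parameter count

BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": 8.5,  # 32 int8 values + one 16-bit scale per block of 32
    "Q4_0": 4.5,  # 32 4-bit values + one 16-bit scale per block of 32
}

# Measured generation speeds (tokens/second) from the benchmark table.
MEASURED_GEN = {"F16": 39.86, "Q8_0": 62.14, "Q4_0": 88.64}


def weight_bytes(params: float, bits: float) -> float:
    """Approximate size of the model weights in bytes."""
    return params * bits / 8


def ceiling_tokens_per_s(bandwidth_gb_s: float, size_bytes: float) -> float:
    """Upper bound on tokens/second if each token streams all weights once."""
    return bandwidth_gb_s * 1e9 / size_bytes


if __name__ == "__main__":
    for name, bits in BITS_PER_WEIGHT.items():
        size = weight_bytes(PARAMS, bits)
        ceiling = ceiling_tokens_per_s(BANDWIDTH_GB_S, size)
        measured = MEASURED_GEN[name]
        print(f"{name:5s} ~{size / 1e9:5.2f} GB  ceiling ~{ceiling:6.1f} tok/s  "
              f"measured {measured:6.2f} ({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions the ceilings come out at roughly 59, 112, and 211 tokens/second, so the measured generation speeds land at about 67%, 56%, and 42% of the bound. The exact percentages depend on the assumed sizes, but the trend matches the table: roughly halving the bytes per weight roughly doubles the ceiling.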

Understanding the Numbers

But let's take a closer look at these numbers. F16, Q8_0, and Q4_0 describe how precisely the model's weights are stored. Quantization is like simplifying a complex recipe by using fewer ingredients: here, it means storing each weight with fewer bits, so the model takes up less memory. Smaller weights also mean less data to move for every generated token, which is why generation speeds up in the table, and they make it easier to fit a model on devices with limited memory.

- F16: A 16-bit floating-point format for each weight. It is the highest-precision format benchmarked here and serves as the unquantized baseline.

- Q8_0: Roughly 8 bits per weight, trading a small amount of accuracy for a file about half the size and faster generation (62.14 vs. 39.86 tokens/second), although prompt processing is slightly slower than at F16.

- Q4_0: Roughly 4 bits per weight, giving up more accuracy for the smallest file and the fastest generation (88.64 tokens/second).
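To make the accuracy trade-off concrete, here is a simplified Python sketch of symmetric "absmax" block quantization, the general idea behind formats like Q8_0: weights are split into blocks of 32, and each block stores one scale plus small signed integers. This is an illustration, not llama.cpp's actual code; for example, the real Q4_0 format maps values to 16 unsigned levels with an offset rather than the symmetric -7 to 7 range used here.

```python
import random

BLOCK_SIZE = 32  # Q8_0 and Q4_0 both group weights in blocks of 32


def quantize_block(block: list[float], bits: int) -> tuple[float, list[int]]:
    """Symmetric absmax quantization of one block to signed `bits`-bit integers."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    amax = max(abs(x) for x in block)
    scale = amax / qmax if amax > 0 else 1.0  # avoid dividing by zero
    q = [max(-qmax, min(qmax, round(x / scale))) for x in block]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Reconstruct approximate weights from the scale and integers."""
    return [scale * v for v in q]


def max_error(weights: list[float], bits: int) -> float:
    """Largest absolute reconstruction error over all blocks."""
    worst = 0.0
    for start in range(0, len(weights), BLOCK_SIZE):
        block = weights[start:start + BLOCK_SIZE]
        scale, q = quantize_block(block, bits)
        restored = dequantize_block(scale, q)
        worst = max(worst, max(abs(a - b) for a, b in zip(block, restored)))
    return worst


if __name__ == "__main__":
    random.seed(0)
    weights = [random.gauss(0.0, 0.02) for _ in range(4096)]  # toy "layer"
    for bits in (8, 4):
        print(f"{bits}-bit: max error {max_error(weights, bits):.6f}")
```

Running it shows a much larger worst-case error at 4 bits than at 8 bits, because each block has only 15 integer levels to work with instead of 255. In the real formats each block's scale is stored as a 16-bit float, which is why Q8_0 and Q4_0 average 8.5 and 4.5 bits per weight.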

Performance Analysis: Model and Device Comparison

Comparing Llama2 7B and Llama3 8B on Apple M2 Ultra

So, we've seen how Llama2 7B performs on the M2 Ultra. But what about other models? Let's compare it to Llama3 8B, another popular LLM:

| Model Configuration | Tokens/Second |
|---|---|
| Llama3 8B Q4_K_M Processing | 1023.89 |
| Llama3 8B Q4_K_M Generation | 76.28 |
| Llama3 8B F16 Processing | 1202.74 |
| Llama3 8B F16 Generation | 36.25 |

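At F16, Llama3 8B processes prompts slightly faster than Llama2 7B (1202.74 vs. 1128.59 tokens/second) but generates slightly slower (36.25 vs. 39.86 tokens/second), which is consistent with it having more weights to read for every generated token. Both models perform well. Note that the Llama3 Q4_K_M results are not an apples-to-apples comparison with Llama2's Q4_0 numbers, since the two use different 4-bit quantization schemes.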

Llama3 70B: A Bigger Model, Different Story

Now, let's jump to a much larger model, Llama3 70B. This model is significantly bigger and more complex than the previous ones.

| Model Configuration | Tokens/Second |
|---|---|
| Llama3 70B Q4_K_M Processing | 117.76 |
| Llama3 70B Q4_K_M Generation | 12.13 |
| Llama3 70B F16 Processing | 145.82 |
| Llama3 70B F16 Generation | 4.71 |

The Llama3 70B results show the limits of the M2 Ultra. Generation drops to 12.13 tokens/second at Q4_K_M and just 4.71 at F16, compared with 36.25 to 88.64 tokens/second for the smaller models, and prompt processing falls to 117.76 to 145.82 tokens/second. This is expected: with roughly ten times as many parameters, the model has roughly ten times as much weight data to read and compute with for every token.

Practical Recommendations: Use Cases and Workarounds

Llama2 7B for Everyday Tasks

Llama2 7B is a fantastic choice for everyday tasks like:

- Text summarization

- Question answering

- Creative writing

- Code generation

It handles these tasks efficiently: with generation at roughly 40 to 89 tokens/second depending on quantization, responses arrive quickly. The M2 Ultra provides ample processing power for these use cases, and the model runs entirely on a local machine, eliminating the need for cloud-based services.

Llama3 8B and 70B for More Demanding Work

Llama3 8B and Llama3 70B, with their newer architecture and (in the case of 70B) much larger size, are suitable for more demanding tasks:

- Complex code generation

- Advanced scientific research

- Multilingual translation

However, Llama3 70B in particular requires far more computational power and memory: its generation speed falls to 4.71 to 12.13 tokens/second in the benchmarks above. The M2 Ultra can run it, but it might not be the most efficient option. For large-scale deployments, consider cloud computing or dedicated GPU servers.
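Whether a model fits in memory at all comes down to a simple calculation: parameter count times bits per weight. The Python sketch below applies it with a hypothetical `fits` helper to the M2 Ultra's 64, 128, and 192 GB memory options. Assumptions, none taken from the benchmarks: about 8.03 billion parameters for Llama3 8B and 70.6 billion for Llama3 70B, an average of roughly 4.85 bits per weight for Q4_K_M (which mixes several quantization types), and that only about 75% of unified memory is usable by the GPU. It counts weights only, not the KV cache or other running apps.

```python
# Hypothetical helper: do a model's weights fit in a given memory budget?
# ASSUMED values (not from the benchmark source): parameter counts, an average
# of ~4.85 bits/weight for Q4_K_M, and a 75% usable share of unified memory.

PARAMS = {"Llama3 8B": 8.03e9, "Llama3 70B": 70.6e9}
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}


def weights_gb(params: float, bits: float) -> float:
    """Approximate weight footprint in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits / 8 / 1e9


def fits(params: float, bits: float, memory_gb: float,
         usable_fraction: float = 0.75) -> bool:
    """True if the weights alone fit in the assumed usable share of memory."""
    return weights_gb(params, bits) <= memory_gb * usable_fraction


if __name__ == "__main__":
    for memory_gb in (64, 128, 192):
        for model, params in PARAMS.items():
            for quant, bits in BITS_PER_WEIGHT.items():
                size = weights_gb(params, bits)
                verdict = "fits" if fits(params, bits, memory_gb) else "does not fit"
                print(f"{memory_gb:3d} GB  {model:10s} {quant:6s} ~{size:6.1f} GB  {verdict}")
```

Under these assumptions, Llama3 70B at F16 needs about 141 GB for its weights alone and fits only the 192 GB configuration, whereas the Q4_K_M version (about 43 GB) fits even in 64 GB.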

Quantization to Optimize Performance

Quantization is a powerful technique for optimizing performance, especially on devices with limited memory. The M2 Ultra has ample memory, but lower-precision formats like Q8_0 or Q4_0 can still pay off for smaller models: in the Llama2 7B benchmarks they speed up generation considerably, while prompt processing is slightly slower than at F16.
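To put numbers on that boost, the Python sketch below expresses each Llama2 7B configuration from the benchmark table as a ratio to F16. The data comes directly from the table; the `relative_to_f16` helper is a hypothetical name used only for illustration.

```python
# Speed of each quantization level relative to F16, using the Llama2 7B
# numbers (tokens/second) from the benchmark table in this article.

BENCH = {
    "F16":  {"processing": 1128.59, "generation": 39.86},
    "Q8_0": {"processing": 1003.16, "generation": 62.14},
    "Q4_0": {"processing": 1013.81, "generation": 88.64},
}


def relative_to_f16(bench: dict, metric: str) -> dict:
    """Ratio of each configuration's speed to the F16 baseline for one metric."""
    base = bench["F16"][metric]
    return {quant: row[metric] / base for quant, row in bench.items()}


if __name__ == "__main__":
    for metric in ("processing", "generation"):
        ratios = relative_to_f16(BENCH, metric)
        print(metric, {q: round(r, 2) for q, r in ratios.items()})
```

The ratios work out to about 1.56x (Q8_0) and 2.22x (Q4_0) for generation, and about 0.89x and 0.90x for prompt processing: the gain shows up where responses are produced, at a small cost when reading the prompt.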

Beyond the Benchmarks

These benchmarks provide a good starting point for understanding how LLMs perform on the M2 Ultra. However, real-world performance can vary depending on the specific application, the data being processed, and the optimization techniques used. Experimenting with different settings and configurations is crucial to finding the best setup for your needs.

FAQ

Q: What is quantization?

A: Quantization is a technique that reduces the size of a model by storing its weights with fewer bits, for example 8 or 4 bits instead of 16. Think of it like simplifying a recipe by using fewer ingredients: the result is close to the original, though not identical. For LLMs, this means a smaller file and faster generation, at the cost of some accuracy.

Q: Why is the token generation speed different for processing and generation?

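A: Processing (often called prompt processing) is the speed at which the model reads your input text, while generation is the speed at which it writes new text. Processing is much faster because all the prompt tokens are known up front and can be handled in parallel batches, which keeps the GPU busy with large matrix multiplications. Generation is sequential: each new token depends on the ones before it, so the model must make a full pass, reading essentially all of its weights from memory, for every single token. That makes generation limited mainly by memory bandwidth.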
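Since the two phases run at different rates, the time for a request is roughly the prompt length divided by the processing rate plus the response length divided by the generation rate. The Python sketch below applies this to Llama2 7B Q4_0 from the benchmark table. The 512-token prompt and 128-token response are illustrative assumptions, and model loading and sampling overhead are ignored.

```python
def request_seconds(prompt_tokens: int, output_tokens: int,
                    processing_tps: float, generation_tps: float) -> float:
    """Approximate wall-clock time for one request.

    The prompt is read at the (fast, parallel) processing rate; the response
    is produced one token at a time at the (slower) generation rate.
    """
    return prompt_tokens / processing_tps + output_tokens / generation_tps


if __name__ == "__main__":
    # Llama2 7B Q4_0 rates from the benchmark table; token counts are hypothetical.
    t = request_seconds(512, 128, processing_tps=1013.81, generation_tps=88.64)
    print(f"~{t:.2f} s")
```

With these numbers the estimate is about 1.95 seconds, of which roughly 1.44 seconds is generation, even though the prompt is four times longer than the response.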

Q: Can I run LLMs on a Mac with an M2 Ultra chip?

A: Yes, you can! The M2 Ultra (found in the Mac Studio and Mac Pro, not in laptops) has enough power to run LLMs locally, though larger models like Llama3 70B run much more slowly. For smaller models like Llama2 7B, you can expect good performance across a range of use cases.

Q: Are there any other devices that can handle LLMs well?

A: Yes, there are! High-end GPUs such as the NVIDIA RTX 4090 are excellent for running LLMs. However, the M2 Ultra is a great option for developers who want to explore LLMs on Apple devices.

Q: What's the future of LLMs on local devices?

A: The future looks bright! As LLM technology evolves and hardware continues to improve, we can expect to see even better performance on local devices. This will open up exciting possibilities for developers and users who want to take advantage of real-time AI capabilities without relying on cloud services.

Keywords

Apple M2 Ultra, Llama2 7B, Llama3 8B, Llama3 70B, Large Language Model, LLM, token generation speed, quantization, F16, Q8_0, Q4_0, performance benchmarks, GPU, processing, generation, use cases, local AI, developer, AI applications, machine learning, deep learning.