What You Need to Know About Llama2 7B Performance on Apple M2 Pro

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement, but running these models locally can be a challenge. You need the right hardware, and that's where the Apple M2 Pro chip comes in. It is becoming a popular choice for developers tinkering with LLMs.

But how well does the M2 Pro run Llama2 7B, one of the hottest LLMs on the market? Let's dive into the benchmark numbers, compare different quantization levels, and look at what the results mean for your use cases.

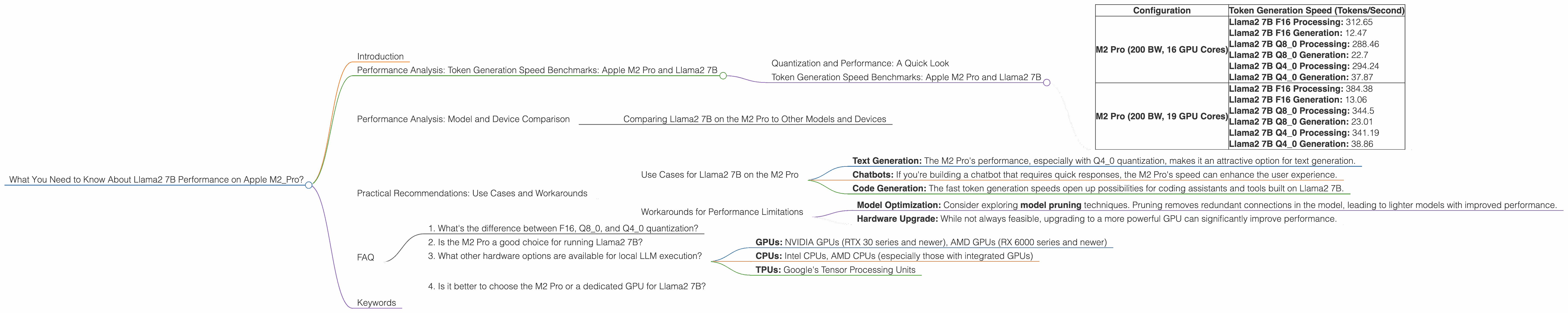

Performance Analysis: Token Generation Speed Benchmarks: Apple M2 Pro and Llama2 7B

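To get a clear picture of how Llama2 7B performs on the M2 Pro, we'll start by examining token speed benchmarks. Each benchmark reports two numbers, both in tokens per second: processing speed (how fast the model reads the input prompt) and generation speed (how fast it produces new tokens). Generation speed is usually the number that determines how responsive the model feels.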

Quantization and Performance: A Quick Look

Before we jump into the numbers, let's clarify what quantization means. Think of it as a compression technique for the model's weights (the knowledge it stores). Quantization reduces the number of bits needed to represent each weight, making the model smaller and potentially faster. The trade-off is a decrease in accuracy, which is often small. We'll be looking at three common formats: F16 (16-bit floating point), Q8_0 (8-bit integer quantization), and Q4_0 (4-bit integer quantization).
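To make the idea concrete, here is a minimal sketch of symmetric block quantization: one floating-point scale is stored per block, and each weight becomes a small integer. This is a simplified illustration, not the exact storage format behind the Q8_0 or Q4_0 results in this article; the function names and the sample weights are made up for the example.

```python
def quantize_block(weights, bits):
    """Symmetric quantization of one block: a float scale plus small integers."""
    qmax = 2 ** (bits - 1) - 1              # 127 for 8-bit, 7 for 4-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale, q):
    """Recover approximate weights from the scale and the integers."""
    return [scale * v for v in q]


def max_error(weights, bits):
    """Largest absolute difference after a quantize/dequantize round trip."""
    scale, q = quantize_block(weights, bits)
    restored = dequantize_block(scale, q)
    return max(abs(a - b) for a, b in zip(weights, restored))


# Hypothetical block of weights, chosen only for illustration.
block = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.44, 0.05]

for bits in (8, 4):
    print(f"{bits}-bit max round-trip error: {max_error(block, bits):.4f}")
```

Running it shows the trade-off described above: fewer bits means a coarser grid of representable values, so the round-trip error is larger at 4 bits than at 8 bits.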

Token Generation Speed Benchmarks: Apple M2 Pro and Llama2 7B

| Configuration | Quantization | Processing Speed (Tokens/Second) | Generation Speed (Tokens/Second) |
|---|---|---|---|
| M2 Pro (200 GB/s bandwidth, 16 GPU cores) | F16 | 312.65 | 12.47 |
| M2 Pro (200 GB/s bandwidth, 16 GPU cores) | Q8_0 | 288.46 | 22.7 |
| M2 Pro (200 GB/s bandwidth, 16 GPU cores) | Q4_0 | 294.24 | 37.87 |
| M2 Pro (200 GB/s bandwidth, 19 GPU cores) | F16 | 384.38 | 13.06 |
| M2 Pro (200 GB/s bandwidth, 19 GPU cores) | Q8_0 | 344.5 | 23.01 |
| M2 Pro (200 GB/s bandwidth, 19 GPU cores) | Q4_0 | 341.19 | 38.86 |

Key Takeaways:

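- Quantization level has a large effect on generation speed and a small effect on processing speed: generation roughly triples from F16 to Q4_0, while processing stays within about 12% of the F16 figure.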

- Q8_0 consistently outperforms F16 for generation (about 1.8x faster on both configurations), while F16 leads for processing.

- Q4_0 provides the highest generation speed, achieving over 37 tokens per second on the M2 Pro with 16 GPU cores.

- The speed gains from Q4_0 are confined to generation: it is about 3x faster than F16 there, but slightly slower than F16 at processing.
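The takeaways above can be checked with a few lines of Python. This sketch simply divides each row of the benchmark table by the F16 row for the same configuration; the dictionary layout and function name are just one convenient way to organize the published numbers.

```python
# Tokens/second from the benchmark table: (processing, generation).
results = {
    "16 GPU cores": {"F16": (312.65, 12.47), "Q8_0": (288.46, 22.70), "Q4_0": (294.24, 37.87)},
    "19 GPU cores": {"F16": (384.38, 13.06), "Q8_0": (344.50, 23.01), "Q4_0": (341.19, 38.86)},
}


def relative_to_f16(config):
    """Return {quantization: (processing_ratio, generation_ratio)} versus F16."""
    base_pp, base_tg = results[config]["F16"]
    return {
        quant: (pp / base_pp, tg / base_tg)
        for quant, (pp, tg) in results[config].items()
    }


for config in results:
    print(config)
    for quant, (pp_ratio, tg_ratio) in relative_to_f16(config).items():
        print(f"  {quant}: processing x{pp_ratio:.2f}, generation x{tg_ratio:.2f}")
```

A ratio above 1 means faster than F16, and a ratio below 1 means slower.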

Performance Analysis: Model and Device Comparison

While we're focused on Llama2 7B on the M2 Pro, it's helpful to have a broader perspective. The available data limits how far that comparison can go.

Comparing Llama2 7B on the M2 Pro to Other Models and Devices

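We only have data for Llama2 7B on the M2 Pro, and we couldn't find publicly available benchmarks for other models or devices. The one comparison the data does support is between the two M2 Pro variants: moving from 16 to 19 GPU cores raises processing speed by roughly 16% to 23% depending on quantization, while generation speed rises by 5% or less.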

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama2 7B on the M2 Pro

- Text Generation: The M2 Pro's performance, especially with Q4_0 quantization, makes it an attractive option for text generation.

- Chatbots: If you're building a chatbot that requires quick responses, Q4_0 generation at roughly 38 tokens per second is fast enough to stream replies smoothly.

- Code Generation: The fast token generation speeds open up possibilities for coding assistants and tools built on Llama2 7B.
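To judge whether these speeds fit a given use case, it helps to turn tokens per second into wall-clock time. The sketch below uses a simple two-phase model: the prompt is read at the processing speed, then the reply is written at the generation speed. The 512-token prompt and 256-token reply are hypothetical sizes picked for illustration, and the model ignores load time and any other overhead.

```python
def response_time_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimate end-to-end time: read the prompt, then generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# 16-GPU-core M2 Pro figures from the benchmark table: (processing, generation).
speeds_16_core = {
    "F16": (312.65, 12.47),
    "Q8_0": (288.46, 22.70),
    "Q4_0": (294.24, 37.87),
}

# Hypothetical chat turn, chosen only for illustration.
PROMPT_TOKENS = 512
OUTPUT_TOKENS = 256

for quant, (pp, tg) in speeds_16_core.items():
    seconds = response_time_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, pp, tg)
    print(f"{quant}: about {seconds:.1f} s for the full reply")
```

Because generation is much slower than processing, the length of the reply dominates the total, which is why the quantization level matters most for chat-style workloads.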

Workarounds for Performance Limitations

- Model Optimization: Consider exploring model pruning techniques. Pruning removes redundant connections in the model, leading to lighter models with improved performance.

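- Hardware Upgrade: While not always feasible, moving to a machine with a more powerful GPU can improve performance. On Apple silicon the GPU cannot be swapped out, so this means choosing a different chip configuration. Note that in the benchmarks above, the 19-core M2 Pro mainly improves processing speed; generation speed rises by 5% or less, so extra GPU cores alone help little with generation.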
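One way to reason about where a hardware upgrade would help is a common rule of thumb: generating each token requires reading roughly all of the model's weights from memory, so memory bandwidth divided by model size gives a rough ceiling on generation speed. The sketch below applies it under several assumptions that are not stated in the benchmark source: that "200 BW" means 200 GB/s, that the model has about 7 billion parameters, and that Q8_0 and Q4_0 cost about 8.5 and 4.5 bits per weight (32-weight blocks with a 16-bit scale, as in the ggml/llama.cpp formats of the same names).

```python
PARAMS = 7e9              # assumed parameter count for Llama2 7B
BANDWIDTH_GB_S = 200      # assumed meaning of "200 BW" in the table

# Assumed effective bits per weight, including per-block scales.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Measured generation speed on the 16-GPU-core M2 Pro (from the table).
MEASURED_16_CORE = {"F16": 12.47, "Q8_0": 22.70, "Q4_0": 37.87}


def weights_gb(quant):
    """Approximate size of the weights in gigabytes."""
    return PARAMS * BITS_PER_WEIGHT[quant] / 8 / 1e9


def bandwidth_ceiling_tps(quant):
    """Rough upper bound on tokens/second if every token reads all weights once."""
    return BANDWIDTH_GB_S / weights_gb(quant)


for quant, measured in MEASURED_16_CORE.items():
    ceiling = bandwidth_ceiling_tps(quant)
    print(f"{quant}: ~{weights_gb(quant):.1f} GB, "
          f"ceiling ~{ceiling:.1f} tok/s, measured {measured} tok/s")
```

Under these assumptions the measured generation speeds sit below the estimated ceilings, which is consistent with generation being limited mainly by memory bandwidth rather than GPU core count. If that holds, a chip with higher memory bandwidth would be the more effective upgrade for generation speed.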

FAQ

1. What's the difference between F16, Q8_0, and Q4_0 quantization?

Quantization is a technique to reduce the size of LLMs by representing their weights with fewer bits. F16 uses 16-bit floating point numbers, while Q8_0 and Q4_0 use 8-bit and 4-bit integers, respectively. The lower the bit precision, the smaller the model and, potentially, the faster it runs. This often comes with a slight drop in accuracy.
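As a small illustration of why 4-bit weights take half the space of 8-bit ones, the sketch below packs two 4-bit integers into each byte and unpacks them again. The function names and the low-nibble-first layout are choices made for this example, not a description of any particular model file format.

```python
def pack_nibbles(values):
    """Pack unsigned 4-bit integers (0..15) two per byte, low nibble first."""
    if len(values) % 2 != 0:
        raise ValueError("need an even number of values")
    if any(not 0 <= v <= 15 for v in values):
        raise ValueError("each value must fit in 4 bits")
    return bytes(
        values[i] | (values[i + 1] << 4) for i in range(0, len(values), 2)
    )


def unpack_nibbles(data):
    """Inverse of pack_nibbles: split each byte back into two 4-bit integers."""
    out = []
    for byte in data:
        out.append(byte & 0x0F)   # low nibble
        out.append(byte >> 4)     # high nibble
    return out


# Hypothetical quantized values, chosen only for illustration.
quantized = [0, 15, 7, 8, 3, 12, 1, 9]
packed = pack_nibbles(quantized)

print(f"{len(quantized)} values stored in {len(packed)} bytes")
print("round trip ok:", unpack_nibbles(packed) == quantized)
```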

2. Is the M2 Pro a good choice for running Llama2 7B?

Yes, the M2 Pro runs Llama2 7B at usable speeds, especially for text generation tasks. In the benchmarks above, generation ranges from about 12 to 13 tokens per second with F16 to about 38 to 39 tokens per second with Q4_0, so Q4_0 is the attractive choice when speed matters.

3. What other hardware options are available for local LLM execution?

You have a variety of options, including:

- GPUs: NVIDIA GPUs (RTX 30 series and newer), AMD GPUs (RX 6000 series and newer)

- CPUs: Intel CPUs, AMD CPUs (especially those with integrated GPUs)

- TPUs: Google's Tensor Processing Units (mainly offered through Google Cloud rather than as hardware you run locally)

4. Is it better to choose the M2 Pro or a dedicated GPU for Llama2 7B?

The best choice depends on your specific use case. Generally, a dedicated GPU like an NVIDIA RTX 40 series or AMD RX 7000 series card will offer superior performance, especially for demanding tasks like text generation with larger models. However, the M2 Pro can be a more affordable and energy-efficient option, suitable for lighter workloads or developers with budget constraints.

Keywords

Llama2 7B, Apple M2 Pro, LLM, Token Generation Speed, Quantization, F16, Q8_0, Q4_0, Local LLMs, Performance Benchmarks, Model Optimization, Hardware Upgrade.