What You Need to Know About Llama2 7B Performance on Apple M2 Max?

Introduction

The world of large language models (LLMs) is abuzz with excitement. These powerful AI systems can generate human-quality text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But with great power comes the need for serious processing muscle, and the computational demands of LLMs can make them a challenge to run locally on your own machine. This article looks at local LLMs and their performance on the Apple M2 Max, using the popular Llama2 7B model as the test case. Imagine having a capable text generator running entirely on your laptop.

Performance Analysis: Token Generation Speed Benchmarks: Apple M2 Max and Llama2 7B

Before we dive into the numbers, let's talk about what we mean by "token generation speed." A language model doesn't read or write text a whole sentence at a time. Instead, text is broken down into "tokens": short chunks that may be a whole word, part of a word, or a single character. The faster the model can process and generate these tokens, the faster it can produce text. We're going to investigate how the Apple M2 Max performs in this crucial area, and we'll also explore the impact of different quantization levels (a technique for reducing the storage size and memory usage of the model).

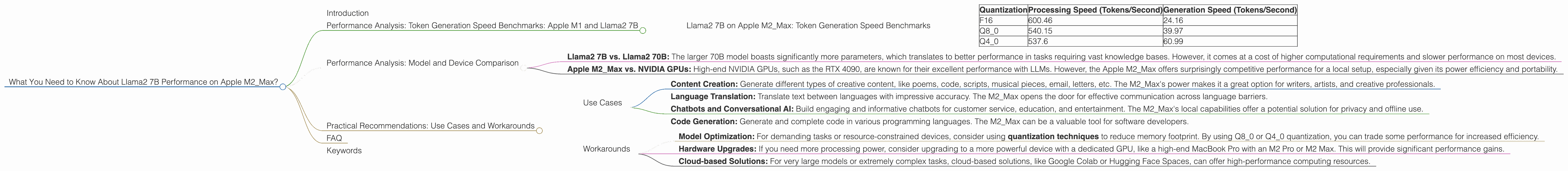

Llama2 7B on Apple M2 Max: Token Generation Speed Benchmarks

| Quantization | Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|
| F16 | 600.46 | 24.16 |
| Q8_0 | 540.15 | 39.97 |
| Q4_0 | 537.60 | 60.99 |

Observations:

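- Impressive Processing Power: Processing speed, the rate at which the model reads the input prompt, exceeds 500 tokens/second at all three precision levels on the M2 Max. That is a strong figure for a local setup.

- Trade-off Between Quantization and Generation Speed: Q4_0 gives the fastest generation speed (60.99 tokens/second, about 2.5 times the F16 figure), with processing speed about 10% lower than F16 (537.6 vs. 600.46 tokens/second). In practice, responses stream out much faster while long prompts take slightly longer to read. Lower-bit quantization can also cost some output quality, which this benchmark doesn't measure.

- F16 (No Quantization): F16 stores the weights as 16-bit floating-point numbers rather than quantizing them. It has the fastest processing speed (600.46 tokens/second) but the slowest generation speed (24.16 tokens/second) and the largest memory footprint. It is a reasonable choice when keeping the original weights matters more than generation speed.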

Think of it like choosing a car: the F16 model is the full-size original that carries every weight at full precision, while the Q4_0 model is a stripped-down dragster built for fast output, with Q8_0 sitting in between. Choosing the right quantization level depends on your specific needs.
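The benchmark figures can be turned into rough response-time estimates. The sketch below is only an illustration: it assumes that "processing speed" applies to the prompt, that the two phases simply add up, and that the workload (a 512-token prompt and a 256-token reply) is a made-up example rather than anything measured for this article.

```python
# Benchmark figures copied from the table above (tokens/second).
BENCHMARKS = {
    "F16":  {"prompt": 600.46, "generation": 24.16},
    "Q8_0": {"prompt": 540.15, "generation": 39.97},
    "Q4_0": {"prompt": 537.60, "generation": 60.99},
}


def estimate_seconds(quant, prompt_tokens, output_tokens):
    """Rough end-to-end time: prompt phase plus generation phase."""
    speeds = BENCHMARKS[quant]
    prompt_time = prompt_tokens / speeds["prompt"]
    generation_time = output_tokens / speeds["generation"]
    return prompt_time + generation_time


if __name__ == "__main__":
    # Hypothetical workload: 512-token prompt, 256-token reply.
    for quant in BENCHMARKS:
        total = estimate_seconds(quant, 512, 256)
        print(f"{quant:5s} ~{total:.1f} s")
```

For this hypothetical workload the estimate comes to roughly 11.4 seconds for F16, 7.4 seconds for Q8_0, and 5.1 seconds for Q4_0. Generation dominates the total, which is why the small drop in processing speed under quantization barely matters for chat-style use.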

Performance Analysis: Model and Device Comparison

We've seen the impressive performance of the Llama2 7B model on the Apple M2 Max. But how does it compare to other devices and models? While it's not within the scope of this article to provide a comprehensive comparison, it's worth highlighting a few key points:

- Llama2 7B vs. Llama2 70B: The larger 70B model has ten times as many parameters, which generally translates to better output quality on tasks that require broad knowledge. However, it needs far more memory and compute, and it generates text more slowly on most devices.

- Apple M2 Max vs. NVIDIA GPUs: High-end NVIDIA GPUs, such as the RTX 4090, are known for their excellent performance with LLMs. However, the Apple M2 Max offers surprisingly competitive performance for a local setup, especially given its power efficiency and portability.

Practical Recommendations: Use Cases and Workarounds

Now that we understand the capabilities of the Llama2 7B model on the M2 Max, let's explore some practical use cases and workarounds:

Use Cases

- Content Creation: Generate different types of creative content, like poems, scripts, song lyrics, emails, and letters. The M2 Max's power makes it a great option for writers, artists, and creative professionals.

- Language Translation: Translate text between languages. Quality varies by language pair for a model of this size, but the M2 Max makes fast, private translation practical on a laptop.

- Chatbots and Conversational AI: Build engaging and informative chatbots for customer service, education, and entertainment. Running the model locally on the M2 Max keeps conversations on your own machine and works offline.

- Code Generation: Generate and complete code in various programming languages. The M2 Max can be a valuable tool for software developers.

Workarounds

- Model Optimization: For demanding tasks or resource-constrained devices, consider using quantization to reduce the memory footprint. With Q8_0 or Q4_0 quantization, you trade a small amount of output quality for a much smaller model and faster generation.

- Hardware Upgrades: If you need more processing power than your current machine offers, consider a device with a more capable GPU and more memory, such as a MacBook Pro with an M2 Pro or M2 Max, or a desktop with a dedicated GPU. This can provide significant performance gains.

- Cloud-based Solutions: For very large models or extremely complex tasks, cloud-based solutions, like Google Colab or Hugging Face Spaces, can offer high-performance computing resources.
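To make the Model Optimization point concrete, here is a rough estimate of how much memory the weights alone need at each precision level. It is a sketch under stated assumptions: the bits-per-weight figures assume the llama.cpp block layout (32 weights sharing one 16-bit scale), the parameter count is the nominal 7 billion rather than an exact figure, and the context cache and other runtime overhead are ignored.

```python
# Bits per weight for each format. The quantized figures assume the
# llama.cpp block layout: 32 weights share one 16-bit scale.
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": (32 * 8 + 16) / 32,  # 8.5
    "Q4_0": (32 * 4 + 16) / 32,  # 4.5
}

NOMINAL_PARAMS = 7e9  # assumption: "7B" taken at face value


def weight_size_gb(n_params, quant):
    """Approximate size of the weights alone, in gigabytes (10**9 bytes)."""
    return n_params * BITS_PER_WEIGHT[quant] / 8 / 1e9


if __name__ == "__main__":
    for quant in BITS_PER_WEIGHT:
        print(f"{quant:5s} ~{weight_size_gb(NOMINAL_PARAMS, quant):.1f} GB")
```

Under these assumptions the weights take about 14 GB at F16, 7.4 GB at Q8_0, and 3.9 GB at Q4_0, which is why quantization is usually the first thing to try on a memory-constrained machine.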

FAQ

What is an LLM?

An LLM, or Large Language Model, is a type of artificial intelligence that is trained on massive amounts of text data. This training allows it to understand and generate human-like text, perform various language-related tasks, and even engage in conversations.

What is Quantization?

Quantization is a technique used to reduce the size of a model by representing its parameters (the numbers that define the model) with fewer bits. This can significantly reduce the amount of memory required to store the model and, as the benchmarks above show, speed up text generation, usually at a small cost in output quality.
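The idea can be sketched in a few lines. This is a simplified illustration of symmetric 8-bit quantization over one block of weights, not the exact scheme any particular tool uses; the function names and sample values are made up for the example.

```python
def quantize_block(values):
    """Symmetric 8-bit quantization: one shared scale plus small integers."""
    max_abs = max(abs(v) for v in values)
    # Map the largest magnitude to 127; fall back to 1.0 for an all-zero block.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quants = [round(v / scale) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floating-point values from the stored integers."""
    return [q * scale for q in quants]


if __name__ == "__main__":
    weights = [0.5, -1.27, 0.0, 0.33]  # illustrative values only
    scale, quants = quantize_block(weights)
    restored = dequantize_block(scale, quants)
    for original, approx in zip(weights, restored):
        print(f"{original:+.4f} -> {approx:+.4f}")
```

Each weight is stored as a small integer, and only the shared scale is kept in floating point. The restored values differ from the originals by at most half a scale step, which is the source of the small quality loss.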

Is Llama2 7B the best model for everyone?

Not necessarily. The best model for you depends on your specific needs. If you require a model with vast knowledge and complex capabilities, a larger model like Llama2 70B might be more suitable. However, for tasks requiring a balance of performance and efficiency, Llama2 7B on the M2 Max is an excellent option.

What are the limitations of local LLMs?

Local LLMs are limited by the memory and compute available on your machine, which caps the size of model you can run efficiently. Running them also draws power and generates heat on your own device, whereas cloud-based solutions move that load to a remote server.

How can I get started with using LLMs locally?

There are several tools available for running LLMs locally. One popular option is llama.cpp, which provides a lightweight and efficient C/C++ implementation of LLM inference.
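As a starting point, the sketch below assembles a one-shot llama.cpp command line from Python without running it. Treat the details as assumptions: the binary name varies between llama.cpp releases (older builds name it `main`), the model path is a placeholder for a model file you have downloaded yourself, and the helper function is hypothetical.

```python
import shlex


def build_llama_command(model_path, prompt, max_tokens=128, binary="./llama-cli"):
    """Return the argument list for a one-shot llama.cpp text generation run."""
    return [
        binary,                  # assumed binary name; check your build
        "-m", model_path,        # path to the model file
        "-p", prompt,            # prompt text
        "-n", str(max_tokens),   # number of tokens to generate
    ]


if __name__ == "__main__":
    # Placeholder path: point this at your own quantized model file.
    cmd = build_llama_command("models/llama-2-7b.Q4_0.gguf", "Hello")
    print(shlex.join(cmd))
```

Printing the command first makes it easy to check before passing the list to `subprocess.run` or pasting it into a terminal.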

Can I run LLMs on my phone?

While it's possible to run smaller LLMs on mobile devices, their limited processing power and memory make it challenging to run larger models.

Keywords

Apple M2 Max, Llama2 7B, LLM, Large Language Model, Performance, Token Generation Speed, Quantization, F16, Q8_0, Q4_0, Local LLMs, Content Creation, Language Translation, Chatbots, Conversational AI, Code Generation, Use Cases, Workarounds, Hardware Upgrades, Cloud-based Solutions, llama.cpp