What You Need to Know About Llama2 7B Performance on Apple M1

Introduction

The world of large language models (LLMs) is evolving rapidly. If you're a developer who wants to put LLMs to work, you're probably wondering: "How can I run these models locally on my machine?" If you're using an Apple M1, the good news is that you can. This article looks at how Llama2 7B, a popular openly available LLM, performs on the Apple M1 chip.

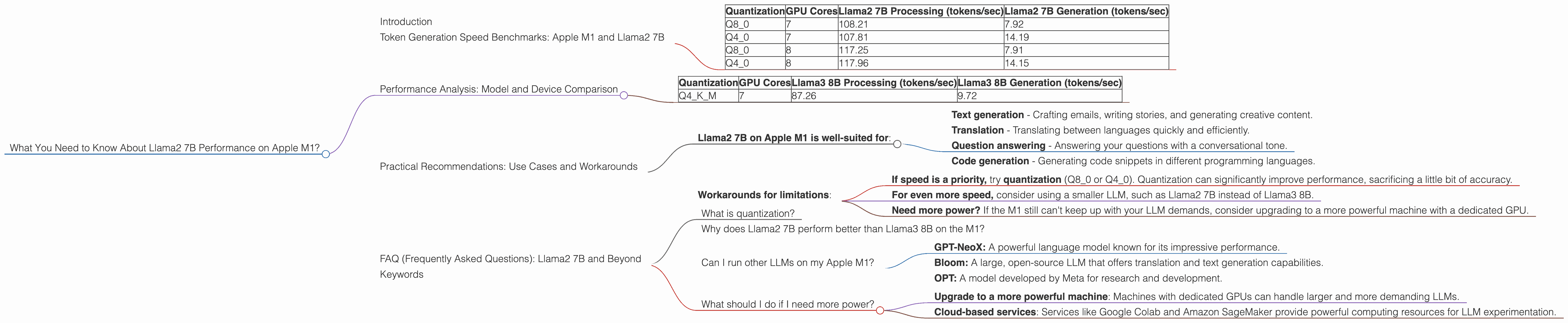

Token Generation Speed Benchmarks: Apple M1 and Llama2 7B

Imagine an LLM as a super-fast typist. The more tokens (words or pieces of words) it can produce per second, the faster it can write text, translate languages, and answer your questions. That rate is its token generation speed: the higher it is, the less time you wait for each response.

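Here are Llama2 7B's speeds on the Apple M1, in tokens per second (tokens/sec), for both the 7-core and 8-core GPU versions of the chip. "Processing" measures how quickly the model reads your prompt; "generation" measures how quickly it writes new tokens: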

| Quantization | GPU Cores | Llama2 7B Processing (tokens/sec) | Llama2 7B Generation (tokens/sec) |
|---|---|---|---|
| Q8_0 | 7 | 108.21 | 7.92 |
| Q4_0 | 7 | 107.81 | 14.19 |
| Q8_0 | 8 | 117.25 | 7.91 |
| Q4_0 | 8 | 117.96 | 14.15 |

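Two patterns stand out. First, the quantization level drives generation speed: Q4_0 generates about 14.2 tokens/sec versus about 7.9 for Q8_0, roughly 1.8 times faster, while prompt processing speed is nearly identical for both. Second, the extra GPU core raises processing speed by about 8 to 9% but leaves generation speed unchanged. This is consistent with generation being limited mainly by memory bandwidth, which is the same on both M1 variants, rather than by GPU compute. Quantization is like compressing the LLM's brain to make it smaller and faster, much like swapping a bulky textbook for a pocket-sized summary.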
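As a rough illustration of what these numbers mean in practice, the sketch below estimates how long one response takes: prompt tokens are read at the processing speed, and reply tokens are produced at the generation speed. It uses the 7-core figures from the table; the 500-token prompt and 250-token reply are hypothetical, and the estimate assumes both rates stay constant, which real runs only approximate.

```python
# Llama2 7B speeds on the 7-core-GPU M1, copied from the table above (tokens/sec).
SPEEDS = {
    "Q8_0": {"processing": 108.21, "generation": 7.92},
    "Q4_0": {"processing": 107.81, "generation": 14.19},
}


def estimate_response_seconds(prompt_tokens: int, output_tokens: int, quant: str) -> float:
    """Rough latency: time to read the prompt plus time to generate the reply.

    Assumes both rates are constant, ignoring model load time and the
    slowdown that longer contexts usually cause.
    """
    speed = SPEEDS[quant]
    prompt_seconds = prompt_tokens / speed["processing"]
    reply_seconds = output_tokens / speed["generation"]
    return prompt_seconds + reply_seconds


if __name__ == "__main__":
    # Hypothetical request: a 500-token prompt and a 250-token reply.
    for quant in SPEEDS:
        seconds = estimate_response_seconds(500, 250, quant)
        print(f"{quant}: about {seconds:.1f} s")
```

Under these assumptions the reply takes roughly 36 seconds with Q8_0 and 22 seconds with Q4_0; almost all of the difference comes from the generation phase.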

Performance Analysis: Model Comparison

Let's compare Llama2 7B with a newer model, Llama3 8B (8 billion parameters), on the same M1 chip (7-core GPU variant):

| Quantization | GPU Cores | Llama3 8B Processing (tokens/sec) | Llama3 8B Generation (tokens/sec) |
|---|---|---|---|
| Q4_K_M | 7 | 87.26 | 9.72 |

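Llama3 8B has somewhat more parameters than Llama2 7B (about 8.0 billion versus 6.7 billion). Compared with Llama2 7B at Q4_0 on the same 7-core GPU, Llama3 8B at Q4_K_M processes prompts about 19% slower (87.26 vs 107.81 tokens/sec) and generates text roughly 30% slower (9.72 vs 14.19 tokens/sec). Because the two models were measured with different quantization formats, this is an approximate rather than like-for-like comparison.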

Important Note: We don't have performance data for F16 (half-precision floating-point) for Llama2 7B and Llama3 8B on the Apple M1.

Practical Recommendations: Use Cases and Workarounds

Now, let's translate these numbers into real-world scenarios:

Llama2 7B on Apple M1 is well-suited for:

- Text generation - Crafting emails, writing stories, and generating creative content.

- Translation - Translating between languages quickly and efficiently.

- Question answering - Answering your questions with a conversational tone.

- Code generation - Generating code snippets in different programming languages.

Workarounds for limitations:

- If speed is a priority, choose a more aggressive quantization. On the M1, Q4_0 generates tokens about 1.8 times faster than Q8_0 (14.19 vs 7.92 tokens/sec), at the cost of a little accuracy.

- For even more speed, consider using a smaller LLM, such as Llama2 7B instead of Llama3 8B.

- Need more power? If the M1 still can't keep up with your LLM demands, consider upgrading to a more powerful machine with a dedicated GPU.

FAQ (Frequently Asked Questions): Llama2 7B and Beyond

What is quantization?

Quantization is a technique for reducing the memory footprint and computational demands of LLMs. Think of it as compressing a large file to make it smaller and easier to manage. LLM weights are usually distributed as 16-bit floating-point numbers (F16 or BF16). Quantization stores them with fewer bits, such as 8 bits (Q8_0) or 4 bits (Q4_0) per weight plus a small per-block scale factor, which significantly reduces the model's size and speeds up processing at the cost of some accuracy.
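To make the idea concrete, here is a minimal sketch of 8-bit block quantization in the style of llama.cpp's Q8_0 format. As we understand that format, weights are grouped into blocks of 32, and each block stores one 16-bit scale plus 32 signed 8-bit integers; Q4_0 is analogous with 4-bit integers. This sketch keeps the scale as a Python float and uses Python's round-half-to-even, so it illustrates the technique rather than reimplementing the format bit for bit. The `bits_per_weight` helper shows why these formats cost about 8.5 and 4.5 bits per weight rather than exactly 8 and 4.

```python
import random

BLOCK_SIZE = 32  # assumed block size, matching llama.cpp's Q8_0 and Q4_0


def quantize_block_q8(values: list[float]) -> tuple[float, list[int]]:
    """Map one block of floats to a shared scale and signed 8-bit integers."""
    amax = max(abs(v) for v in values)
    scale = amax / 127 if amax > 0 else 0.0  # largest magnitude maps to +/-127
    inv_scale = 1 / scale if scale else 0.0
    quants = [round(v * inv_scale) for v in values]  # each value lands in [-127, 127]
    return scale, quants


def dequantize_block_q8(scale: float, quants: list[int]) -> list[float]:
    """Recover approximate floats; each error is at most about scale / 2."""
    return [q * scale for q in quants]


def bits_per_weight(block_size: int, quant_bits: int, scale_bits: int = 16) -> float:
    """Average storage per weight when every block also stores one scale."""
    return (block_size * quant_bits + scale_bits) / block_size


if __name__ == "__main__":
    random.seed(0)
    block = [random.uniform(-1.0, 1.0) for _ in range(BLOCK_SIZE)]
    scale, quants = quantize_block_q8(block)
    restored = dequantize_block_q8(scale, quants)
    max_error = max(abs(a - b) for a, b in zip(block, restored))
    print(f"scale={scale:.5f}  max round-trip error={max_error:.5f}")
    print("Q8_0-style:", bits_per_weight(BLOCK_SIZE, 8), "bits/weight")
    print("Q4_0-style:", bits_per_weight(BLOCK_SIZE, 4), "bits/weight")
```

Sharing one scale across a block is the design choice that keeps the overhead small: 16 extra bits spread over 32 weights adds only half a bit per weight.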

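Why does Llama2 7B run faster than Llama3 8B on the M1?

Llama3 8B has more parameters, which can make it more capable, but that extra size has a speed cost. When generating text, the model has to read essentially all of its weights from memory for every new token, so a larger model (stored here in a slightly heavier format, Q4_K_M) means more data to move through the M1's shared memory per token, and therefore fewer tokens per second.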
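A back-of-the-envelope check supports this explanation. If generating each token requires streaming all of the weights from memory once, then memory bandwidth divided by model size gives a ceiling on generation speed. The sketch below assumes the commonly cited M1 bandwidth of about 68.25 GB/s, parameter counts of about 6.74 billion (Llama2 7B) and 8.03 billion (Llama3 8B), and approximate storage costs of 8.5, 4.5 and roughly 4.9 bits per weight for Q8_0, Q4_0 and Q4_K_M. The Q4_K_M figure in particular is an estimate, since that format mixes precisions across tensors, and the sketch ignores the KV cache and other overheads.

```python
# Assumptions (see lead-in): bandwidth, parameter counts and bits per weight are approximate.
M1_BANDWIDTH_GB_S = 68.25

MODELS = {
    # name: (parameter count, approximate bits per weight)
    "Llama2 7B Q8_0": (6.74e9, 8.5),
    "Llama2 7B Q4_0": (6.74e9, 4.5),
    "Llama3 8B Q4_K_M": (8.03e9, 4.9),
}

# Measured generation speeds on the 7-core-GPU M1, from the tables above (tokens/sec).
MEASURED_TOKENS_PER_SEC = {
    "Llama2 7B Q8_0": 7.92,
    "Llama2 7B Q4_0": 14.19,
    "Llama3 8B Q4_K_M": 9.72,
}


def weight_bytes(params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in bytes."""
    return params * bits_per_weight / 8


def generation_ceiling(params: float, bits_per_weight: float,
                       bandwidth_gb_s: float = M1_BANDWIDTH_GB_S) -> float:
    """Upper bound on tokens/sec if every token reads all weights once."""
    return bandwidth_gb_s * 1e9 / weight_bytes(params, bits_per_weight)


if __name__ == "__main__":
    for name, (params, bpw) in MODELS.items():
        ceiling = generation_ceiling(params, bpw)
        measured = MEASURED_TOKENS_PER_SEC[name]
        print(f"{name}: ceiling ~{ceiling:.1f} tok/s, measured {measured} "
              f"({measured / ceiling:.0%} of ceiling)")
```

With these assumptions the ceilings come out at roughly 9.5, 18.0 and 13.9 tokens/sec; every measured speed sits below its ceiling, and the ordering between the three configurations is preserved. Treat this as a sanity check, not a performance model.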

Can I run other LLMs on my Apple M1?

Yes, you can! Many other open LLMs run on the Apple M1, as long as the model, at your chosen quantization, fits in memory. Examples include:

- GPT-NeoX: EleutherAI's open-source model family, best known for GPT-NeoX-20B.

- Bloom: BigScience's open multilingual LLM, which offers translation and text generation capabilities; its smaller variants are the practical choice on an M1.

- OPT: A family of open models developed by Meta for research.
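Whether a particular model runs comfortably is mostly a question of whether its weights fit in the M1's unified memory (M1 machines ship with 8 GB or 16 GB). The sketch below estimates weight size from the parameter count and an approximate bits-per-weight figure, then checks it against a RAM budget. The bits-per-weight values and the 4 GB reserved for macOS, other apps and the context cache are assumptions chosen for illustration, not measured limits.

```python
# Approximate storage per weight (assumed; real model files add some overhead).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weights_gb(params_billions: float, fmt: str) -> float:
    """Billions of parameters times bits per weight / 8 gives gigabytes (10**9 bytes)."""
    return params_billions * BITS_PER_WEIGHT[fmt] / 8


def fits_in_memory(params_billions: float, fmt: str, ram_gb: float,
                   reserved_gb: float = 4.0) -> bool:
    """True if the weights fit after setting aside a hypothetical reserve for everything else."""
    return weights_gb(params_billions, fmt) <= ram_gb - reserved_gb


if __name__ == "__main__":
    # Llama2 7B (about 6.74 billion parameters) on the two M1 memory sizes.
    for ram in (8, 16):
        for fmt in BITS_PER_WEIGHT:
            size = weights_gb(6.74, fmt)
            verdict = "fits" if fits_in_memory(6.74, fmt, ram) else "too large"
            print(f"{ram} GB M1, {fmt}: ~{size:.1f} GB of weights, {verdict}")
```

Under these assumptions, an 8 GB M1 only has room for the Q4_0 build of Llama2 7B, a 16 GB M1 can also hold Q8_0, and the F16 weights (about 13.5 GB) fit on neither. The same arithmetic applies to any other model once you know its parameter count.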

What should I do if I need more power?

If your M1 can't handle your LLM ambitions, don't despair! Consider these options:

- Upgrade to a more powerful machine: Machines with dedicated GPUs can handle larger and more demanding LLMs.

- Cloud-based services: Services like Google Colab and Amazon SageMaker provide powerful computing resources for LLM experimentation.

Keywords

Llama2 7B, Apple M1, LLM performance, token generation speed, quantization, GPU cores, text generation, translation, question answering, code generation, GPT-NeoX, Bloom, OPT