What You Need to Know About Llama2 7B Performance on Apple M1 Ultra

Introduction

The world of large language models (LLMs) is evolving rapidly, with new models and devices constantly pushing the boundaries of what's possible. One of the most exciting developments is the emergence of local LLMs—models that can run on your own hardware, allowing you to experiment with AI without relying on cloud services.

In this deep dive, we'll explore the performance of the Llama2 7B model on the Apple M1 Ultra chip, a powerful processor designed for demanding tasks like machine learning. We'll examine the token generation speeds, compare different model configurations, and delve into practical use cases, all while keeping things accessible for developers and tech enthusiasts alike.

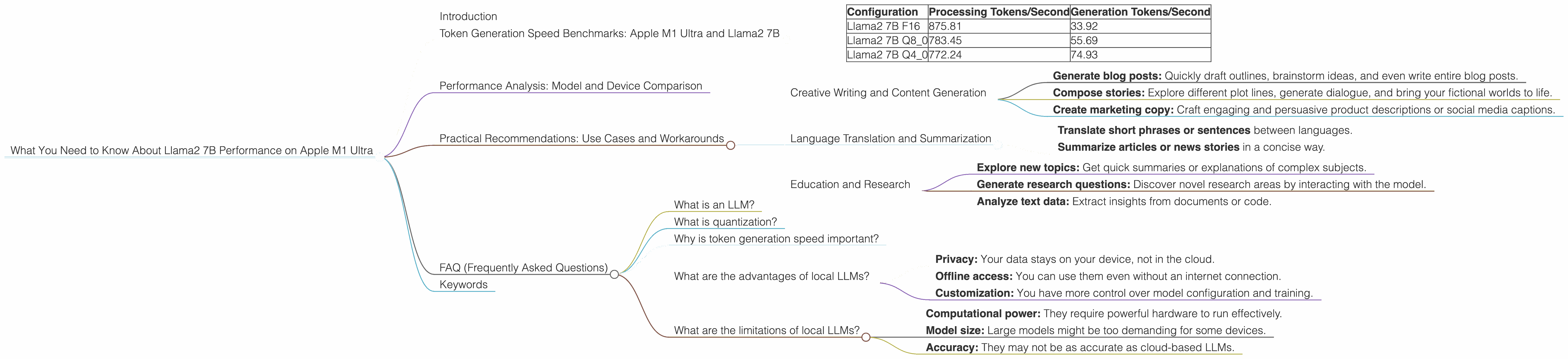

Token Generation Speed Benchmarks: Apple M1 Ultra and Llama2 7B

Let's cut to the chase: how fast can the M1 Ultra generate text? We're focusing on token generation speed, measured as the number of new tokens (words or parts of words) the model produces per second in response to the input. Higher token generation speed means smoother and faster interactions with your LLM.

Here's a breakdown of the Llama2 7B performance on the M1 Ultra, using various quantization levels (F16, Q8_0, and Q4_0):

| Configuration | Processing Tokens/Second | Generation Tokens/Second |
|---|---|---|
| Llama2 7B F16 | 875.81 | 33.92 |
| Llama2 7B Q8_0 | 783.45 | 55.69 |
| Llama2 7B Q4_0 | 772.24 | 74.93 |

Explanation:

- F16 (Half Precision): This is the unquantized baseline, which stores each model weight as a 16-bit floating-point number.

- Q8_0 (Quantized 8-bit): This uses a technique called quantization to reduce the size of the model by representing weights with fewer bits. Think of it like compressing a file to save space: you give up a little accuracy in exchange for a smaller model and faster generation.

- Q4_0 (Quantized 4-bit): This is even more aggressive quantization, shrinking the model further at a greater potential cost to accuracy.
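
To make the idea concrete, here is a minimal sketch of symmetric 8-bit quantization applied to one small block of weights. It is a simplified illustration in the spirit of block-wise schemes such as Q8_0, not the exact llama.cpp implementation; the function names and the sample values are hypothetical.

```python
def quantize_block(values):
    """Map a block of floats to integer codes in [-127, 127] plus one shared scale."""
    amax = max(abs(v) for v in values)
    # Assumption: one scale per block, chosen so the largest value maps to +/-127.
    scale = amax / 127 if amax > 0 else 1.0
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [scale * c for c in codes]


# Hypothetical weights, chosen only for illustration.
block = [0.12, -0.53, 0.98, -1.27, 0.0, 0.44]
scale, codes = quantize_block(block)
restored = dequantize_block(scale, codes)

# The round-trip error shows the accuracy given up for the smaller representation.
max_error = max(abs(a - b) for a, b in zip(block, restored))
print(codes)
print(f"max round-trip error: {max_error:.6f}")
```

Each weight now needs 8 bits instead of 16, plus a small shared scale per block, and the round-trip error stays within half a quantization step.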

Observations:

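- The M1 Ultra generates between 33.92 and 74.93 tokens per second across the three Llama2 7B configurations, demonstrating its capability for handling LLM workloads.

- As we go from F16 to Q4_0, processing speed decreases slightly (875.81 to 772.24 tokens/second), while generation speed more than doubles (33.92 to 74.93 tokens/second). Generation is typically limited by how fast the weights can be read from memory, so smaller quantized weights mean less data to move for each token. Processing is more compute-bound, and the extra work of unpacking quantized weights likely explains the small slowdown there.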
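
The relative changes are easier to see as ratios against the F16 baseline. The short sketch below computes them from the figures in the table above; the helper name `relative_to_baseline` is ours, introduced only for illustration.

```python
# Figures copied from the benchmark table above (tokens per second).
benchmarks = {
    "F16": {"processing": 875.81, "generation": 33.92},
    "Q8_0": {"processing": 783.45, "generation": 55.69},
    "Q4_0": {"processing": 772.24, "generation": 74.93},
}


def relative_to_baseline(results, metric, baseline="F16"):
    """Return each configuration's speed as a multiple of the baseline's speed."""
    base = results[baseline][metric]
    return {name: row[metric] / base for name, row in results.items()}


gen_ratio = relative_to_baseline(benchmarks, "generation")
proc_ratio = relative_to_baseline(benchmarks, "processing")

for name in benchmarks:
    print(f"{name}: generation x{gen_ratio[name]:.2f}, processing x{proc_ratio[name]:.2f}")
```

With these numbers, Q4_0 generates at roughly 2.2 times the F16 speed, while its processing speed is about 12% lower.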

Performance Analysis: Model and Device Comparison

A detailed comparison with other devices is beyond the scope of this article. Keep in mind that published benchmark figures come from different sources and test setups, so cross-device comparisons might not be completely accurate.

Even so, the numbers above suggest that the M1 Ultra is a strong contender for running local LLMs, especially when considering its power consumption and compact design.

Practical Recommendations: Use Cases and Workarounds

Now let's get into the practicalities. How can you leverage the M1 Ultra and Llama2 7B for real-world applications?

Creative Writing and Content Generation

The Llama2 7B model, with its fast token generation speeds on the M1 Ultra, is ideal for creative writing and content generation applications. You can:

- Generate blog posts: Quickly draft outlines, brainstorm ideas, and even write entire blog posts.

- Compose stories: Explore different plot lines, generate dialogue, and bring your fictional worlds to life.

- Create marketing copy: Craft engaging and persuasive product descriptions or social media captions.

Language Translation and Summarization

While the Llama2 7B model is not specialized for translation or summarization, its ability to understand and generate text makes it suitable for basic tasks:

- Translate short phrases or sentences between languages.

- Summarize articles or news stories in a concise way.

Education and Research

LLMs can be powerful tools for learning and research. Here's how you can use the Llama2 7B model on the M1 Ultra:

- Explore new topics: Get quick summaries or explanations of complex subjects.

- Generate research questions: Discover novel research areas by interacting with the model.

- Analyze text data: Extract insights from documents or code.

Note: Always keep the limitations of an LLM's knowledge in mind, and fact-check generated content, especially for educational or research purposes.

FAQ (Frequently Asked Questions)

What is an LLM?

An LLM is a type of artificial intelligence model that excels at understanding and generating human-like text. It learns patterns from massive datasets, allowing it to perform tasks like writing, translation, and code generation.

What is quantization?

Quantization is a technique used to reduce the size of a model by representing its weights with fewer bits. Think of it like compressing a file: it sacrifices some accuracy for a smaller model and faster token generation.
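
As a rough illustration of how much space this saves, the sketch below estimates the weight storage for each format. The parameter count (about 6.74 billion) and the bits-per-weight values are our assumptions, not figures from the benchmarks: 8.5 and 4.5 bits assume blocks of 32 weights that each carry one extra 16-bit scale, which is how we understand the Q8_0 and Q4_0 layouts.

```python
# Assumed figures, not taken from the article's benchmark data.
PARAMS = 6.74e9  # approximate parameter count of Llama2 7B
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": 8.5,  # (32 * 8 + 16) / 32, assuming one 16-bit scale per 32 weights
    "Q4_0": 4.5,  # (32 * 4 + 16) / 32, same block assumption
}


def model_size_gb(params, bits_per_weight):
    """Estimate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


sizes = {name: model_size_gb(PARAMS, bits) for name, bits in BITS_PER_WEIGHT.items()}
for name, size in sizes.items():
    print(f"{name}: ~{size:.2f} GB")
```

Under these assumptions the weights shrink from roughly 13.5 GB at F16 to about 7.2 GB at Q8_0 and 3.8 GB at Q4_0. Actual file sizes will differ somewhat, since this estimate ignores metadata and any tensors stored in other formats.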

Why is token generation speed important?

Token generation speed determines how quickly an LLM can create new text. Faster speeds lead to smoother and more responsive user experiences.
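
To see what these speeds mean in practice, here is a back-of-the-envelope estimate of how long one response takes. The speeds come from the benchmark table earlier in this article; the prompt and output lengths are hypothetical, and the estimate ignores model loading and other overhead.

```python
def response_time_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimate total time: read the prompt, then generate the output token by token."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Hypothetical workload: a 512-token prompt and a 256-token reply.
PROMPT_TOKENS = 512
OUTPUT_TOKENS = 256

# Processing and generation speeds from the benchmark table (tokens per second).
q4 = response_time_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, 772.24, 74.93)
f16 = response_time_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, 875.81, 33.92)

print(f"Q4_0: ~{q4:.1f} s, F16: ~{f16:.1f} s")
```

Under these assumptions the Q4_0 response takes about 4 seconds and the F16 response about 8 seconds, with generation accounting for most of the time in both cases.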

What are the advantages of local LLMs?

- Privacy: Your data stays on your device, not in the cloud.

- Offline access: You can use them even without an internet connection.

- Customization: You have more control over model configuration and training.

What are the limitations of local LLMs?

- Computational power: They require powerful hardware to run effectively.

- Model size: Large models might be too demanding for some devices.

- Accuracy: Smaller local models may not be as accurate as the larger models offered by cloud services.

Keywords

Llama2, LLMs, Apple M1 Ultra, local LLMs, token generation speed, quantization, F16, Q8_0, Q4_0, creative writing, content generation, language translation, summarization, education, research, performance analysis, model comparison, device comparison, practical recommendations, use cases.