What Are the Limitations of Apple M3 Pro for AI Tasks?

Introduction

The Apple M3 Pro is a powerful chip designed for demanding tasks like video editing, 3D modeling, and gaming. But what about AI tasks? Can this chip handle the computationally intense world of large language models (LLMs)? The answer depends on factors like the specific model and its quantization level. In this article, we'll explore the limitations of the Apple M3 Pro for AI tasks by looking at the processing and generation speeds it achieves with Llama 2, a popular LLM.

Understanding LLM Performance

Before diving into the specifics, let's understand what we're measuring. For LLMs, we care about two main aspects:

Processing Speed (Tokens/Second): This measures how quickly the model can ingest the input text (the prompt). Because the whole prompt is available up front, its tokens can be processed in parallel. It's like reading a book at a blazing speed, understanding every word.

Generation Speed (Tokens/Second): This measures how quickly the model can create new text based on the input. Output is produced one token at a time, and each new token depends on the ones before it. Think of it as writing a coherent story based on a prompt.

Both these speeds are important for a smooth and efficient AI experience.
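To see how the two speeds combine, here is a minimal sketch that estimates the total response time for one request. The function name, the workload size, and the throughput values are illustrative assumptions, not measurements; the model simply adds the time spent processing the prompt to the time spent generating the reply.

```python
def estimate_latency_seconds(prompt_tokens, output_tokens,
                             processing_tps, generation_tps):
    """Rough end-to-end time: prompt processing phase plus generation phase."""
    if processing_tps <= 0 or generation_tps <= 0:
        raise ValueError("throughput values must be positive")
    prompt_time = prompt_tokens / processing_tps      # seconds to read the prompt
    generation_time = output_tokens / generation_tps  # seconds to write the reply
    return prompt_time + generation_time


# Hypothetical workload: a 512-token prompt and a 256-token reply,
# with assumed throughputs of 270 and 30 tokens/second.
total = estimate_latency_seconds(512, 256, 270.0, 30.0)
print(f"Estimated response time: {total:.1f} s")
```

With these assumed numbers the prompt takes under 2 seconds while the reply takes more than 8, so generation speed dominates the wait even though the prompt is twice as long as the reply.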

Apple M3 Pro for Llama 2

We'll focus on Llama 2, a popular open-source LLM, to understand the M3 Pro's capabilities. The data we'll use is gathered from various sources, including ggerganov's llama.cpp repository and XiongjieDai's GPU Benchmarks on LLM Inference.

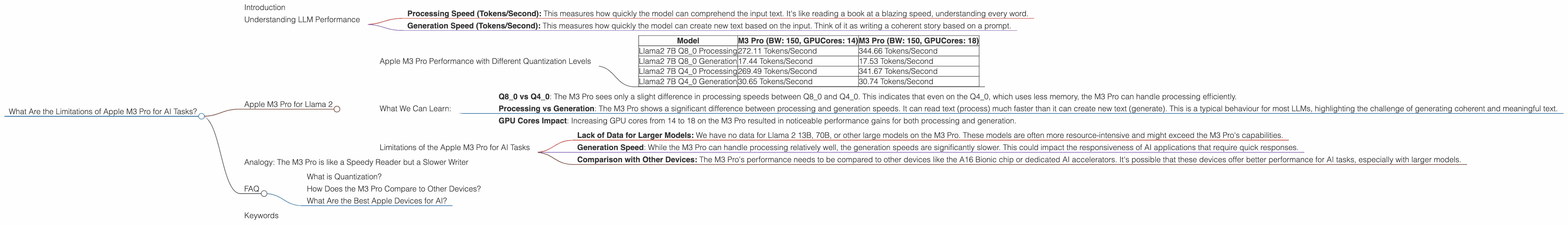

Apple M3 Pro Performance with Different Quantization Levels

LLMs can be compressed (or quantized) to reduce their memory footprint and improve performance. Let's see how the Apple M3 Pro performs with Llama 2 at different quantization levels:

| Model | M3 Pro (150 GB/s bandwidth, 14 GPU cores) | M3 Pro (150 GB/s bandwidth, 18 GPU cores) |
|---|---|---|
| Llama2 7B Q8_0 Processing | 272.11 Tokens/Second | 344.66 Tokens/Second |
| Llama2 7B Q8_0 Generation | 17.44 Tokens/Second | 17.53 Tokens/Second |
| Llama2 7B Q4_0 Processing | 269.49 Tokens/Second | 341.67 Tokens/Second |
| Llama2 7B Q4_0 Generation | 30.65 Tokens/Second | 30.74 Tokens/Second |

Note: This data covers Llama 2 7B only. Data for other models and configurations is unavailable.

What We Can Learn:

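Q8_0 vs Q4_0: The M3 Pro's processing speed is nearly identical for Q8_0 and Q4_0 (within about 1%), so the quantization level has little effect on how fast it reads a prompt. Generation is where quantization pays off: Q4_0, which uses less memory, generates about 1.76 times as fast as Q8_0 (30.65 vs 17.44 Tokens/Second on the 14-core chip).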

Processing vs Generation: The M3 Pro shows a significant difference between processing and generation speeds. It reads text (processes) roughly 9 to 20 times faster than it creates new text (generates), depending on the configuration. This is typical behaviour for LLM inference: prompt tokens can be processed in parallel, while generation produces one token at a time and has to read the model's weights from memory for each one.

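GPU Cores Impact: Increasing GPU cores from 14 to 18 on the M3 Pro raised processing speed by about 27%, but generation speed barely moved (a gain of less than 1%). Both configurations have the same 150 GB/s memory bandwidth, which suggests that generation is limited by memory bandwidth rather than by GPU compute.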
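The ratios behind these observations can be checked directly from the table. The short script below is a sketch that copies the table's values into a dictionary (the layout and the helper name `percent_gain` are our own choices) and computes the gain from the extra GPU cores and the generation speedup of Q4_0 over Q8_0.

```python
# Tokens/second copied from the table: (14 GPU cores, 18 GPU cores).
results = {
    ("Q8_0", "processing"): (272.11, 344.66),
    ("Q8_0", "generation"): (17.44, 17.53),
    ("Q4_0", "processing"): (269.49, 341.67),
    ("Q4_0", "generation"): (30.65, 30.74),
}


def percent_gain(before, after):
    """Relative improvement of `after` over `before`, in percent."""
    return (after - before) / before * 100.0


# Gain from going from 14 to 18 GPU cores, per row of the table.
core_gains = {key: percent_gain(*speeds) for key, speeds in results.items()}
for (quant, phase), gain in core_gains.items():
    print(f"{quant} {phase}: {gain:+.1f}% with 18 instead of 14 GPU cores")

# Generation speedup of Q4_0 over Q8_0 on the 14-core chip.
q4_over_q8 = results[("Q4_0", "generation")][0] / results[("Q8_0", "generation")][0]
print(f"Q4_0 generates {q4_over_q8:.2f}x as fast as Q8_0 (14 GPU cores)")
```

Run against the table's numbers, processing gains come out near 27% for both quantization levels, while generation gains stay below 1%.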

Limitations of the Apple M3 Pro for AI Tasks

While the M3 Pro offers respectable performance, it's important to acknowledge its limitations:

- Lack of Data for Larger Models: We have no data for Llama 2 13B, 70B, or other large models on the M3 Pro. These models are often more resource-intensive and might exceed the M3 Pro's capabilities.

- Generation Speed: While the M3 Pro handles processing relatively well, generation is far slower, at roughly 17 to 31 Tokens/Second for a 7B model, and the extra GPU cores of the 18-core variant do not improve it. This could impact the responsiveness of AI applications that require quick responses.

- Comparison with Other Devices: The data here does not compare the M3 Pro with other hardware, such as the A16 Bionic chip or dedicated AI accelerators. Dedicated accelerators and GPUs with higher memory bandwidth may well offer better performance for AI tasks, especially with larger models.
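The first two limitations can be put into rough numbers with a back-of-the-envelope estimate. The sketch below rests on several assumptions that are not in the benchmark data: effective sizes of about 4.5 and 8.5 bits per weight for Q4_0 and Q8_0 (our understanding of llama.cpp's block formats), approximate parameter counts for the Llama 2 family, and a simple model in which every generated token requires reading all weights once from memory. It ignores the KV cache and other runtime memory, so treat the results as ballpark figures only.

```python
# Assumed effective bits per weight, including per-block scale factors.
BITS_PER_WEIGHT = {"Q4_0": 4.5, "Q8_0": 8.5}
# Approximate parameter counts for Llama 2 models (assumption).
PARAMS = {"7B": 6.74e9, "13B": 13.0e9, "70B": 69.0e9}
# Memory bandwidth of the M3 Pro, as listed in the benchmark table.
BANDWIDTH_GB_S = 150.0


def weights_gb(params, bits_per_weight):
    """Approximate size of the quantized weights in GB (10^9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def generation_ceiling_tps(size_gb, bandwidth_gb_s=BANDWIDTH_GB_S):
    """Upper bound on tokens/second if each token reads all weights once."""
    return bandwidth_gb_s / size_gb


for model, params in PARAMS.items():
    for quant, bits in BITS_PER_WEIGHT.items():
        size = weights_gb(params, bits)
        ceiling = generation_ceiling_tps(size)
        print(f"Llama 2 {model} {quant}: ~{size:.1f} GB of weights, "
              f"generation ceiling ~{ceiling:.1f} tokens/s")
```

Under these assumptions the 7B ceilings land at roughly 40 (Q4_0) and 21 (Q8_0) tokens/second, in the same range as the measured 30.65 and 17.44. The same arithmetic puts Llama 2 70B at Q4_0 near 39 GB of weights, which would not fit in 36 GB, to our knowledge the largest memory configuration offered for the M3 Pro.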

Analogy: The M3 Pro is like a Speedy Reader but a Slower Writer

Imagine the M3 Pro as a speed reader who can devour books at an incredible pace. It understands the text quickly, but its writing speed is much slower. It takes time to formulate new ideas and create coherent text, like writing a novel. This analogy highlights the M3 Pro's strength in processing information and its challenge in generating text.

FAQ

What is Quantization?

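Quantization is like compressing a file, with one difference: it is lossy. It reduces the number of bits used to represent each number in the LLM's weights (roughly 8 bits per weight for Q8_0 and roughly 4 for Q4_0, instead of the usual 16), making the model smaller and usually faster, at the cost of some precision. Think of it as saving a photo at lower quality. The file takes up less space and loads faster, but some fine detail is lost.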
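The idea can be illustrated in a few lines of Python. This is a simplified sketch of symmetric quantization with a single shared scale factor, not the actual Q8_0 or Q4_0 layout used by llama.cpp; the function names and the weight values are made up for illustration.

```python
def quantize_block(weights, bits=8):
    """Map floats to signed integers that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1            # 127 for 8 bits, 7 for 4 bits
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the representable range.
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize_block(quantized, scale):
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quantized]


weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.44, 0.02]  # made-up values

for bits in (8, 4):
    q, scale = quantize_block(weights, bits)
    restored = dequantize_block(q, scale)
    max_error = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"{bits}-bit: ints={q}, max error={max_error:.4f}")
```

Fewer bits mean fewer distinct integer levels, so the 4-bit version stores each weight in half the space but reconstructs it less precisely than the 8-bit version.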

How Does the M3 Pro Compare to Other Devices?

The M3 Pro's performance depends on the LLM model and its quantization. We don't have data to compare it to other devices for all LLMs. However, dedicated AI accelerators or powerful GPUs might offer better performance for larger models or with high-precision calculations.

What Are the Best Apple Devices for AI?

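Current information suggests that the M2 Pro and M2 Max chips offer better performance for AI tasks compared to the M3 Pro, particularly with large LLMs. One likely reason is memory bandwidth: the M2 Pro and M2 Max provide 200 GB/s and 400 GB/s respectively, compared with 150 GB/s on the M3 Pro. However, the choice depends on the specific LLM and your application's needs.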

Keywords

Apple M3 Pro, AI, LLM, Llama 2, Quantization, Processing Speed, Generation Speed, Tokens/Second, GPU Cores, Limitations, Performance, AI Accelerator, M2 Max, M2 Pro, M1