What Are the Limitations of Apple M3 Max for AI Tasks?

Introduction

The Apple M3 Max chip is a powerhouse for various tasks, including video editing, graphic design, and gaming. But how does it perform when it comes to AI, specifically when running large language models (LLMs)?

LLMs are like the brains of AI applications, capable of understanding and generating human-like text, translating languages, writing different kinds of creative content, and answering your questions in an informative way.

This article dives into the capabilities of the Apple M3 Max chip for running LLMs, analyzing its benchmark results and highlighting its limitations. We'll explore how the M3 Max handles different LLMs and investigate potential bottlenecks that could impact your AI projects.

Apple M3 Max Performance for LLMs

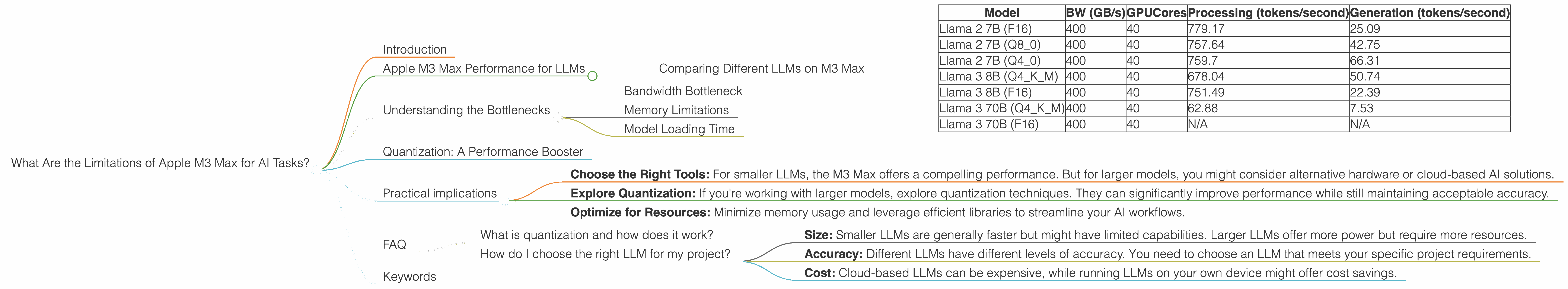

Let's get down to the nitty-gritty. In its top configuration, the M3 Max has a 40-core GPU and 400 GB/s of unified memory bandwidth (BW). How do these specs translate to real-world AI performance?

Comparing Different LLMs on M3 Max

The data we're analyzing comes from two sources: llama.cpp benchmark results and the GPU Benchmarks on LLM Inference project. These benchmarks show how the M3 Max performs with various LLMs, including Llama 2 and Llama 3.

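A few notes on the labels used in the table: "BW" is the memory bandwidth in GB/s, "GPUCores" is the number of GPU cores, and the suffix in parentheses after each model name is the number format, where "F16" means unquantized 16-bit floats and names starting with "Q" denote the quantization method used for the LLM. "Processing" is the speed at which the prompt is read, and "Generation" is the speed at which new tokens are produced.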

| Model | BW (GB/s) | GPUCores | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|---|---|
| Llama 2 7B (F16) | 400 | 40 | 779.17 | 25.09 |
| Llama 2 7B (Q8_0) | 400 | 40 | 757.64 | 42.75 |
| Llama 2 7B (Q4_0) | 400 | 40 | 759.7 | 66.31 |
| Llama 3 8B (Q4_K_M) | 400 | 40 | 678.04 | 50.74 |
| Llama 3 8B (F16) | 400 | 40 | 751.49 | 22.39 |
| Llama 3 70B (Q4_K_M) | 400 | 40 | 62.88 | 7.53 |
| Llama 3 70B (F16) | 400 | 40 | N/A | N/A |

Key Observations:

- Smaller Models Shine: The M3 Max excels with smaller LLMs like Llama 2 7B and Llama 3 8B. It processes prompts at roughly 680 to 780 tokens per second across all of the 7B and 8B configurations tested.

- Quantization Impacts Performance: Quantization formats like Q8_0 and Q4_0 reduce precision but boost generation speed. For Llama 2 7B, token generation rises from 25.09 tokens per second with F16 to 42.75 with Q8_0 and 66.31 with Q4_0, while prompt processing speed stays roughly the same.

- Larger Models Struggle: As the LLM size increases, the M3 Max's performance takes a hit. Even with 4-bit quantization, Llama 3 70B generates only 7.53 tokens per second, highlighting a potential bandwidth bottleneck. No F16 result is available for Llama 3 70B, most likely because 70 billion 16-bit weights occupy about 140 GB, more than the 128 GB of unified memory the M3 Max tops out at.
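To put the quantization observation in numbers, here is a small sketch that computes how much faster each format generates tokens relative to F16. The speeds are copied from the Llama 2 7B rows of the table; the function and variable names are ours, chosen only for illustration.

```python
# Generation speeds (tokens/second) for Llama 2 7B, copied from the table above.
generation_tps = {"F16": 25.09, "Q8_0": 42.75, "Q4_0": 66.31}


def speedup_over_f16(tps_by_format):
    """Return each format's generation speed relative to the F16 baseline."""
    baseline = tps_by_format["F16"]
    return {fmt: tps / baseline for fmt, tps in tps_by_format.items()}


speedups = speedup_over_f16(generation_tps)
for fmt, ratio in speedups.items():
    print(f"{fmt}: {ratio:.2f}x")
```

On this data, Q8_0 comes out at about 1.7 times the F16 generation speed and Q4_0 at about 2.6 times.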

Understanding the Bottlenecks

Why does the M3 Max struggle with larger LLMs despite its impressive specifications? The answer lies in a combination of factors:

Bandwidth Bottleneck

Bandwidth is the rate at which data can be transferred between the CPU, GPU, and memory. Imagine a highway with a limited number of lanes. If you have a lot of cars trying to use the same highway at the same time, you'll experience traffic jams. Similarly, if the bandwidth is insufficient, the data flow between the M3 Max's components can become congested, leading to slower performance.

In the case of the M3 Max, the 400 GB/s of bandwidth becomes the limiting factor for larger LLMs. Generating each new token requires reading essentially all of the model's weights from memory, so generation speed cannot exceed roughly the bandwidth divided by the model's size in memory. The larger the model, the lower that ceiling.
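A rough way to see the bandwidth limit is to estimate a ceiling on generation speed, assuming every generated token reads all model weights from memory exactly once. This is a back-of-the-envelope sketch, not a performance model: it uses nominal parameter counts (7, 8, and 70 billion), treats 1 GB as 10^9 bytes, and ignores the KV cache and any compute cost. The function name is ours.

```python
def generation_ceiling_tps(bandwidth_gb_s, n_params_billion, bits_per_weight):
    """Upper bound on tokens/second if each token reads all weights once."""
    # billions of parameters * bytes per parameter = size in GB (1 GB = 1e9 bytes)
    model_gb = n_params_billion * bits_per_weight / 8
    return bandwidth_gb_s / model_gb


# F16 stores 16 bits per weight; 400 GB/s is the bandwidth from the table.
for name, params in [("7B", 7), ("8B", 8), ("70B", 70)]:
    ceiling = generation_ceiling_tps(400, params, 16)
    print(f"{name} F16: at most about {ceiling:.1f} tokens/second")
```

Under these assumptions the ceiling is about 28.6 tokens per second for a 7B model and 25.0 for an 8B model at F16, which sits just above the measured 25.09 and 22.39 in the table. For a 70B model at F16 it falls below 3 tokens per second.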

Memory Limitations

Memory stores data that the CPU and GPU need to access. Imagine a library with limited shelf space. If you try to store too many books, you'll run out of space. Similarly, if the M3 Max's memory is insufficient, it might not be able to store all the data required for a large LLM, leading to performance degradation.

While the M3 Max offers a generous amount of memory (up to 128 GB of unified memory, shared by the CPU and GPU), larger LLMs can easily overwhelm it. The weights alone for a 70B model at F16 need about 140 GB, before counting the memory used for the context.
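As a sketch of the arithmetic, the snippet below estimates how much memory the weights need for a given format and checks it against a memory budget. The bits-per-weight figures follow from the block layouts of these formats (Q8_0 and Q4_0 store 32 weights plus one 16-bit scale per block, giving 8.5 and 4.5 bits per weight). Q4_K_M is left out because its mix of block types does not reduce to a single exact figure. Parameter counts are nominal, the budget is whatever your machine has, and the helper names are ours.

```python
# Bits stored per weight, including the per-block scale for Q8_0 and Q4_0.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weights_gb(n_params_billion, fmt):
    """Approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return n_params_billion * BITS_PER_WEIGHT[fmt] / 8


def fits(n_params_billion, fmt, memory_gb):
    """True if the weights alone fit in the given memory budget."""
    return weights_gb(n_params_billion, fmt) <= memory_gb


for params in (7, 70):
    for fmt in BITS_PER_WEIGHT:
        size = weights_gb(params, fmt)
        verdict = "fits" if fits(params, fmt, 128) else "does not fit"
        print(f"{params}B {fmt}: {size:.1f} GB, {verdict} in 128 GB")
```

This counts weights only. The context (KV cache) and the operating system also need memory, so a model that barely fits by this estimate may still not run comfortably.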

Model Loading Time

Loading time is the time it takes to read the LLM from storage into memory before it can start processing. Imagine a huge library where you need time to find the specific book you need. Similarly, if the model is very large, it takes time for the M3 Max to load it, causing delays in your AI tasks.

The M3 Max's hardware might be capable of processing large LLMs at a decent speed, but the initial loading process might become a major bottleneck, slowing down your AI workflows.
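For a first estimate of loading time, you can divide the model's size by the sustained read speed of your storage. The sketch below does only that. The 5 GB/s figure is a placeholder assumption, not a measured value for any Mac, and the function name is ours; substitute a throughput you have measured on your own machine.

```python
def load_time_seconds(model_gb, read_gb_s):
    """Estimate load time as model size divided by sustained read throughput."""
    if read_gb_s <= 0:
        raise ValueError("read_gb_s must be positive")
    return model_gb / read_gb_s


ASSUMED_READ_GB_S = 5.0  # placeholder assumption; measure your own storage

# Model sizes in GB; 14 GB is roughly a 7B model stored as F16.
for size_gb in (14, 40, 140):
    seconds = load_time_seconds(size_gb, ASSUMED_READ_GB_S)
    print(f"{size_gb} GB model: about {seconds:.1f} s at {ASSUMED_READ_GB_S} GB/s")
```

The estimate scales linearly with model size, so a model ten times larger takes about ten times longer to load on the same storage.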

Quantization: A Performance Booster

Quantization is a technique that reduces the storage size and computational requirements of LLMs by using smaller data representations. Imagine a library with a special program that compresses books without losing much information. This compression allows you to store more books in the same space, achieving a higher "storage efficiency."

Quantization works similarly for LLMs, reducing the size of the model and speeding up processing. The M3 Max demonstrates improved performance with quantized LLMs, as shown in the benchmark results.

But be aware: Quantization comes with a tradeoff. While it significantly improves performance, it can also reduce accuracy, and the loss generally grows as fewer bits are used. Think of it as reducing the resolution of a photo. You save space, but you lose some quality.
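To make the tradeoff concrete, here is a minimal sketch of symmetric 8-bit quantization of a single block of weights. It illustrates the general idea behind block-wise formats (one shared scale per block plus small integer codes); it is not llama.cpp's implementation, and the sample weights and function names are made up for illustration.

```python
def quantize_block(values):
    """Map floats to integer codes in [-127, 127] with one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [round(v / scale) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the scale and integer codes."""
    return [scale * c for c in codes]


# Made-up example weights for one block.
weights = [0.12, -0.5, 0.33, 0.07, -0.21, 0.44, -0.02, 0.5]
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print(f"largest rounding error: {max_error:.5f}")
```

Each weight is now stored as a small integer instead of a 16-bit float, and the rounding error per weight is at most half the scale. Using fewer bits means a coarser grid and larger errors, which is where the accuracy loss comes from.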

Practical Implications

The M3 Max is a capable chip for AI, particularly for smaller and quantized LLMs. However, its limitations become apparent when dealing with large LLMs. Here are some key takeaways for developers working on AI projects:

- Choose the Right Tools: For smaller LLMs, the M3 Max offers compelling performance. But for larger models, you might consider alternative hardware or cloud-based AI solutions.

- Explore Quantization: If you're working with larger models, explore quantization techniques. They can significantly improve performance while still maintaining acceptable accuracy.

- Optimize for Resources: Minimize memory usage and leverage efficient libraries to streamline your AI workflows.

FAQ

What is quantization and how does it work?

Quantization is a technique that reduces the precision of the numbers used in a neural network, for example by storing weights as 8-bit or 4-bit values instead of 16-bit floats, to make the model smaller and faster. Imagine a library with books written in different languages. Quantization is like using a translator to condense all the books into a single language, making the library more efficient.

How do I choose the right LLM for my project?

Consider the following factors:

- Size: Smaller LLMs are generally faster but might have limited capabilities. Larger LLMs offer more power but require more resources.

- Accuracy: Different LLMs have different levels of accuracy. You need to choose an LLM that meets your specific project requirements.

- Cost: Cloud-based LLMs can be expensive, while running LLMs on your own device might offer cost savings.

Keywords

Apple M3 Max, LLM, AI, Natural Language Processing, Machine Learning, Token Processing, Quantization, F16, Q8, Q4, Llama 2, Llama 3, GPU, Performance Benchmark, Bandwidth, Memory, Bottleneck, Model Loading Time, Cloud-based AI, AI Project Optimization, AI Development, AI Hardware, AI Software, AI Resources.