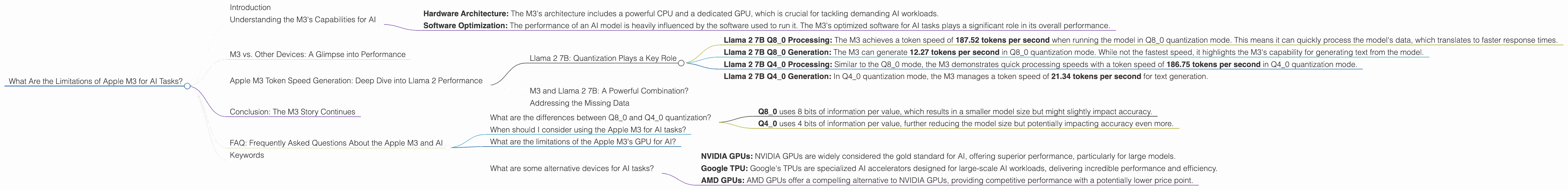

What Are the Limitations of Apple M3 for AI Tasks?

Introduction

The Apple M3 chip is a powerhouse of processing capabilities, promising to revolutionize how we interact with technology. With its advanced architecture and integrated GPU, it's tempting to think the M3 can handle any AI task thrown its way. But when it comes to running large language models (LLMs), the reality is a bit more nuanced.

This article dives deep into the limitations of the Apple M3 for AI tasks, specifically focusing on its performance with popular LLMs like Llama 2. We'll use real-world data to illustrate the challenges the M3 faces and help you decide whether it's the right choice for your AI projects.

Understanding the M3's Capabilities for AI

The Apple M3 is a powerful chip, but it's not a magic bullet for every AI task. Think of it like a high-performance sports car: it excels on the open road, but it might not be the best choice for off-road adventures.

To understand the M3's limitations for AI, we need to look at two key factors:

- Hardware Architecture: The M3 combines a CPU, an integrated GPU, and a Neural Engine on a single chip, all sharing the same unified memory. The GPU and the memory it can reach are what matter most for demanding AI workloads.

- Software Optimization: The performance of an AI model is heavily influenced by the software used to run it. How well the inference software is optimized for Apple silicon plays a significant role in the M3's overall performance.

M3 vs. Other Devices: A Glimpse into Performance

Comparing the M3 with other popular devices would give a more complete picture of its strengths and weaknesses in the AI realm. Unfortunately, the data we have access to does not include measurements from other devices, so this article is restricted to examining the M3's performance with specific AI models. Stay tuned for future updates that might include comparisons!

Apple M3 Token Generation Speed: Deep Dive into Llama 2 Performance

To understand the limitations of the M3 for AI tasks, we need to look at how it handles specific workloads. One crucial metric is token speed, which measures how many tokens (sub-word pieces of text, not whole words) the chip can handle per second. Two figures matter when running LLMs: how quickly the chip processes the input prompt, and how quickly it generates new output tokens.

Our focus will be on the Llama 2 family of LLMs. Llama 2 is popular for its impressive performance and accessibility, making it a good candidate for testing the M3's capabilities.

Llama 2 7B: Quantization Plays a Key Role

We'll begin with Llama 2 7B, the smallest model in the Llama 2 family. The M3 shows promising results when running Llama 2 7B in quantized form, which is the only configuration our data covers.

Quantization is a technique that shrinks a model by storing its weights at lower numerical precision, usually with only a small loss in output quality. Imagine it like compressing a large image to make it easier to store and share. Running a quantized model lets the M3 handle Llama 2 7B more efficiently.
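To make the idea concrete, here is a minimal Python sketch of block-wise quantization. It is a simplified illustration, not the exact scheme used by any specific runtime: the function names, the sample weights, and the choice of one shared scale per block are assumptions made for this example.

```python
# Simplified sketch of block-wise quantization (illustrative only;
# not the exact storage layout of any particular runtime).

def quantize_block(values, bits=8):
    """Map a block of floats to signed integers plus one shared scale."""
    qmax = 2 ** (bits - 1) - 1              # 127 for 8 bits, 7 for 4 bits
    peak = max(abs(v) for v in values)
    scale = peak / qmax if peak > 0 else 1.0
    quants = [round(v / scale) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quants]


def max_round_trip_error(values, bits):
    """Largest absolute difference after quantizing and dequantizing."""
    scale, quants = quantize_block(values, bits)
    restored = dequantize_block(scale, quants)
    return max(abs(a - b) for a, b in zip(values, restored))


# Hypothetical weights, chosen only to demonstrate the effect.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]

for bits in (8, 4):
    error = max_round_trip_error(weights, bits)
    print(f"{bits}-bit: max round-trip error = {error:.4f}")
```

Running it shows the 4-bit round trip losing more precision than the 8-bit one, which is the trade-off you accept in exchange for a smaller model.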

- Llama 2 7B Q8_0 Processing: The M3 achieves 187.52 tokens per second when processing the input prompt in Q8_0 quantization mode. Faster prompt processing means a shorter wait before the first word of the response appears.

- Llama 2 7B Q8_0 Generation: The M3 generates 12.27 tokens per second in Q8_0 quantization mode. While not the fastest speed, it is usable for generating text from the model.

- Llama 2 7B Q4_0 Processing: Similar to the Q8_0 mode, the M3 processes the prompt quickly, at 186.75 tokens per second in Q4_0 quantization mode.

- Llama 2 7B Q4_0 Generation: In Q4_0 quantization mode, the M3 generates 21.34 tokens per second, roughly 1.7 times the Q8_0 generation speed.
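The four figures above can be turned into a rough end-to-end estimate. The sketch below is illustrative arithmetic only: the 512-token prompt and 256-token reply are hypothetical workload sizes, and the helper name is made up for this example.

```python
# Throughput figures quoted in this article, in tokens per second.
SPEEDS = {
    "8-bit": {"prompt": 187.52, "generation": 12.27},
    "4-bit": {"prompt": 186.75, "generation": 21.34},
}


def response_time(fmt, prompt_tokens, output_tokens):
    """Estimated seconds to read the prompt and then write the reply."""
    speed = SPEEDS[fmt]
    prompt_seconds = prompt_tokens / speed["prompt"]
    generation_seconds = output_tokens / speed["generation"]
    return prompt_seconds + generation_seconds


# Hypothetical workload: these sizes are assumptions, not measurements.
PROMPT_TOKENS = 512
OUTPUT_TOKENS = 256

for fmt in SPEEDS:
    seconds = response_time(fmt, PROMPT_TOKENS, OUTPUT_TOKENS)
    print(f"{fmt}: about {seconds:.1f} s for the full response")

# Ratio of 4-bit to 8-bit generation speed.
generation_ratio = SPEEDS["4-bit"]["generation"] / SPEEDS["8-bit"]["generation"]
print(f"4-bit generation is {generation_ratio:.2f}x the 8-bit speed")
```

For a workload like this one, generation dominates the total time, so the generation figure is the one to watch when choosing between the two formats.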

M3 and Llama 2 7B: A Powerful Combination?

Looking at the numbers, the M3 shows decent performance with Llama 2 7B, particularly when using quantization. However, it's essential to note that the data doesn't reveal performance for the full F16 precision mode. This information is crucial for understanding the limitations of the M3.

Because every figure we have comes from a quantized model, we cannot say how well the M3 handles Llama 2 7B at full F16 precision. Applications requiring the highest accuracy might need F16, and for those the available data offers no guidance on whether the M3 is the right choice.

Addressing the Missing Data

The data we have available does not provide information on the Llama 2 7B model's performance in F16 precision. This absence of information makes it difficult to present a complete picture of the M3's limitations.

Stay tuned for future updates that might include this crucial missing data, allowing for a more comprehensive analysis of the M3's capabilities with Llama 2 7B in F16 precision.

Conclusion: The M3 Story Continues

The Apple M3 is a powerful chip that opens up possibilities for AI development, but it's not without its limitations. We've seen how the M3 excels when running Llama 2 7B in quantized formats, but the missing data on F16 performance leaves some unanswered questions.

The M3 remains a promising player in the AI landscape, but its true potential for AI tasks will continue to unfold as we gather more data and test its capabilities with different models.

FAQ: Frequently Asked Questions About the Apple M3 and AI

What are the differences between Q8_0 and Q4_0 quantization?

Quantization is a technique used to reduce the size of a model while largely maintaining its output quality. Q8_0 and Q4_0 refer to the number of bits used to represent each of the model's weights:

- Q8_0 uses 8 bits per value, which results in a much smaller model than F16 with only a slight impact on accuracy.

- Q4_0 uses 4 bits per value, reducing the model size further but potentially impacting accuracy more.
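A back-of-the-envelope size estimate shows why the bit width matters. This sketch assumes a nominal 7 billion parameters and a block layout in which every 32 weights share one 16-bit scale, as llama.cpp-style 8-bit and 4-bit block formats do. Both are assumptions, since the data behind this article does not state them.

```python
# Rough model-size estimate for different weight formats.
# PARAMS, BLOCK_SIZE and SCALE_BITS are assumptions for illustration.
PARAMS = 7_000_000_000   # nominal parameter count for a "7B" model
BLOCK_SIZE = 32          # weights that share one scale
SCALE_BITS = 16          # size of the per-block scale


def bits_per_weight(value_bits):
    """Effective bits per weight once the shared scale is included."""
    return value_bits + SCALE_BITS / BLOCK_SIZE


def size_gb(bpw, params=PARAMS):
    """Approximate weight storage in gigabytes (10**9 bytes)."""
    return params * bpw / 8 / 1e9


FORMATS = {
    "F16": 16.0,                    # no block scale needed
    "8-bit": bits_per_weight(8),
    "4-bit": bits_per_weight(4),
}

for name, bpw in FORMATS.items():
    print(f"{name}: {bpw} bits/weight, about {size_gb(bpw):.1f} GB")
```

Under these assumptions the 4-bit model needs a little over a quarter of the memory of the F16 model, which is a large part of why quantized models are practical on a laptop-class chip.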

When should I consider using the Apple M3 for AI tasks?

The Apple M3 can be a good choice for AI tasks involving smaller LLMs such as Llama 2 7B, especially if you're willing to use quantization. However, if you need the highest accuracy or plan to run much larger models, you might want to explore other options.

What are the limitations of the Apple M3's GPU for AI?

While the M3's GPU is powerful, it might not be as specialized for AI tasks as GPUs from dedicated AI chip manufacturers. This could lead to slower training times for large models and might require more optimization for specific applications.

What are some alternative devices for AI tasks?

Several other devices offer excellent performance for AI tasks:

- NVIDIA GPUs: NVIDIA GPUs are widely considered the gold standard for AI, offering superior performance, particularly for large models.

- Google TPU: Google's TPUs are specialized AI accelerators designed for large-scale AI workloads, delivering incredible performance and efficiency.

- AMD GPUs: AMD GPUs offer a compelling alternative to NVIDIA GPUs, providing competitive performance with a potentially lower price point.

Keywords

Apple M3, AI, LLM, Llama 2, Token Speed, Quantization, Q8_0, Q4_0, F16, Performance, Limitations, GPU, AI tasks, LLM models, devices, token generation