What Are the Limitations of Apple M2 Ultra for AI Tasks?

Introduction

The world of AI has exploded with the rise of Large Language Models (LLMs), and these models are hungry for computing power and memory! Apple's M2 Ultra chip promises lightning-fast performance, but is it enough to tame the demands of LLMs? Let's dive into LLM benchmarks and see how the M2 Ultra stacks up. We'll focus on where its power falls short and on the factors that limit its performance for AI tasks.

Apple M2 Ultra: A Powerhouse Unveiled

The Apple M2 Ultra is a colossal chip, boasting a 24-core CPU, up to 76 GPU cores, up to 192GB of unified memory (64GB in the base configuration), and 800GB/s of memory bandwidth. Think of it as a supercharged brain with an unusually large working memory shared by the CPU and GPU. This combination makes it a compelling contender for AI tasks, but does it truly deliver? Let's find out!
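A rough way to see what that unified memory buys is to estimate how much space each model's weights take. The sketch below multiplies approximate parameter counts by bits per weight and checks the result against the 64GB, 128GB, and 192GB M2 Ultra configurations. The parameter counts are rounded, the 4.9 bits per weight for Q4_K_M is an approximation for a mixed-precision format, and the 75% `headroom` reserved for weights is a hypothetical value, since the KV cache and the operating system need memory too.

```python
# Rough weight-memory estimates for the models benchmarked in this article.
# Parameter counts are rounded approximations.
PARAMS = {
    "Llama 2 7B": 6.74e9,
    "Llama 3 8B": 8.03e9,
    "Llama 3 70B": 70.6e9,
}

# Storage cost per weight in bits. Q8_0 and Q4_0 add one 16-bit scale per
# block of 32 weights (8.5 and 4.5 bits). Q4_K_M mixes precisions, so 4.9 is
# only an approximation.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5, "Q4_K_M": 4.9}


def weight_gb(model: str, fmt: str) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return PARAMS[model] * BITS_PER_WEIGHT[fmt] / 8 / 1e9


def fits(model: str, fmt: str, memory_gb: float, headroom: float = 0.75) -> bool:
    """True if the weights fit in `headroom` (a hypothetical fraction) of memory.

    The remaining memory is left for the OS, the KV cache, and other buffers.
    """
    return weight_gb(model, fmt) <= memory_gb * headroom


for memory_gb in (64, 128, 192):  # M2 Ultra unified memory configurations
    for model in PARAMS:
        for fmt in ("F16", "Q4_K_M"):
            verdict = "fits" if fits(model, fmt, memory_gb) else "does not fit"
            print(f"{memory_gb:>3} GB | {model:<11} {fmt:<6} "
                  f"~{weight_gb(model, fmt):6.1f} GB -> {verdict}")
```

Under these assumptions, only the 192GB configuration can hold Llama 3 70B in F16 (about 141GB), while a 4-bit Llama 3 70B (about 43GB) fits even the 64GB base model.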

Performance of Apple M2 Ultra for Large Language Models

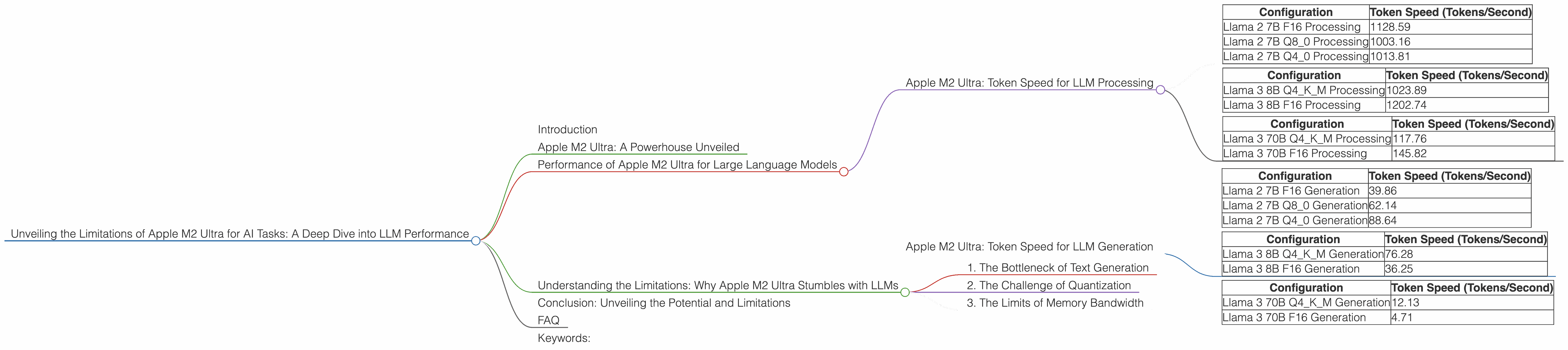

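To assess the M2 Ultra's prowess for LLMs, we'll use benchmark results for Llama 2 7B, Llama 3 8B, and Llama 3 70B in several weight formats: F16 (16-bit floating point) and the quantized formats Q8_0, Q4_0, and Q4_K_M. All numbers are in tokens per second and cover two distinct phases: processing, where the model reads the input prompt, and generation, where it produces new output tokens one at a time.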

Apple M2 Ultra: Token Speed for LLM Processing

Llama 2 7B:

| Configuration | Token Speed (Tokens/Second) |
|---|---|
| Llama 2 7B F16 Processing | 1128.59 |
| Llama 2 7B Q8_0 Processing | 1003.16 |
| Llama 2 7B Q4_0 Processing | 1013.81 |

Llama 3 8B:

| Configuration | Token Speed (Tokens/Second) |
|---|---|
| Llama 3 8B Q4_K_M Processing | 1023.89 |
| Llama 3 8B F16 Processing | 1202.74 |

Llama 3 70B:

| Configuration | Token Speed (Tokens/Second) |
|---|---|
| Llama 3 70B Q4_K_M Processing | 117.76 |
| Llama 3 70B F16 Processing | 145.82 |

Notes:

- No data is available for Llama 7B on Apple M2 Ultra.

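- Across all three models, F16 (half-precision floating point) processes prompts about 11% to 24% faster than the quantized configurations (Q8_0, Q4_0, and Q4_K_M). A likely reason is that prompt processing is limited mainly by raw compute, so F16 weights avoid the extra work of unpacking (dequantizing) quantized values.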
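The size of the F16 advantage in prompt processing can be read straight off the tables. This short script, using only the published numbers above, computes how much faster F16 is than each quantized format for every model.

```python
# Prompt-processing speeds (tokens/second) from the tables above.
PROCESSING = {
    "Llama 2 7B": {"F16": 1128.59, "Q8_0": 1003.16, "Q4_0": 1013.81},
    "Llama 3 8B": {"F16": 1202.74, "Q4_K_M": 1023.89},
    "Llama 3 70B": {"F16": 145.82, "Q4_K_M": 117.76},
}


def f16_advantage(speeds: dict[str, float]) -> dict[str, float]:
    """Percentage by which F16 outpaces each quantized format."""
    f16 = speeds["F16"]
    return {fmt: (f16 / tps - 1) * 100 for fmt, tps in speeds.items() if fmt != "F16"}


for model, speeds in PROCESSING.items():
    for fmt, pct in f16_advantage(speeds).items():
        print(f"{model}: F16 processes prompts {pct:.1f}% faster than {fmt}")
```

It reports F16 leads of roughly 12.5% over Q8_0 and 11.3% over Q4_0 for Llama 2 7B, 17.5% over Q4_K_M for Llama 3 8B, and 23.8% over Q4_K_M for Llama 3 70B.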

Apple M2 Ultra: Token Speed for LLM Generation

Llama 2 7B:

| Configuration | Token Speed (Tokens/Second) |
|---|---|
| Llama 2 7B F16 Generation | 39.86 |
| Llama 2 7B Q8_0 Generation | 62.14 |
| Llama 2 7B Q4_0 Generation | 88.64 |

Llama 3 8B:

| Configuration | Token Speed (Tokens/Second) |
|---|---|
| Llama 3 8B Q4_K_M Generation | 76.28 |
| Llama 3 8B F16 Generation | 36.25 |

Llama 3 70B:

| Configuration | Token Speed (Tokens/Second) |
|---|---|
| Llama 3 70B Q4_K_M Generation | 12.13 |
| Llama 3 70B F16 Generation | 4.71 |

Notes:

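- Generation is far slower than processing: roughly 10 to 33 times slower depending on model and format (for example, 1128.59 vs. 39.86 tokens/second for Llama 2 7B F16). Unlike processing, generation gets faster with quantization: Q4_0 more than doubles Llama 2 7B's F16 generation speed (88.64 vs. 39.86 tokens/second).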
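The same tables show how large the gap between processing and generation is, and that quantization turns from a small penalty into a large gain once the chip is generating. The script below, again using only the published numbers, computes both ratios.

```python
# Speeds in tokens/second, copied from the processing and generation tables.
PROCESSING = {
    "Llama 2 7B": {"F16": 1128.59, "Q8_0": 1003.16, "Q4_0": 1013.81},
    "Llama 3 8B": {"F16": 1202.74, "Q4_K_M": 1023.89},
    "Llama 3 70B": {"F16": 145.82, "Q4_K_M": 117.76},
}
GENERATION = {
    "Llama 2 7B": {"F16": 39.86, "Q8_0": 62.14, "Q4_0": 88.64},
    "Llama 3 8B": {"F16": 36.25, "Q4_K_M": 76.28},
    "Llama 3 70B": {"F16": 4.71, "Q4_K_M": 12.13},
}


def slowdown(model: str, fmt: str) -> float:
    """How many times slower generation is than prompt processing."""
    return PROCESSING[model][fmt] / GENERATION[model][fmt]


def generation_speedup(model: str, fmt: str) -> float:
    """Generation speed of a quantized format relative to F16."""
    return GENERATION[model][fmt] / GENERATION[model]["F16"]


for model, formats in GENERATION.items():
    for fmt in formats:
        line = f"{model} {fmt}: generation {slowdown(model, fmt):.1f}x slower than processing"
        if fmt != "F16":
            line += f", {generation_speedup(model, fmt):.2f}x faster than F16 generation"
        print(line)
```

The slowdown ranges from about 9.7x (Llama 3 70B Q4_K_M) to about 33x (Llama 3 8B F16), and every quantized format generates faster than F16, from 1.56x (Llama 2 7B Q8_0) up to 2.58x (Llama 3 70B Q4_K_M).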

Understanding the Limitations: Why Apple M2 Ultra Stumbles with LLMs

While the Apple M2 Ultra showcases impressive processing power, it faces limitations when handling the complex demands of LLMs. Here's a breakdown of the key factors that hold it back:

1. The Bottleneck of Text Generation

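The numbers reveal a stark contrast between processing and generation speeds. The M2 Ultra processes prompts at over 1,000 tokens/second for the 7B and 8B models, but generates only 36 to 89 tokens/second with them, and just 4.71 to 12.13 tokens/second with Llama 3 70B. The gap comes from how the two phases use the hardware. During processing, all prompt tokens are known up front, so each layer's weights are read from memory once and applied to many tokens in parallel. During generation, each new token depends on the previous one, so the chip must stream essentially all of the model's weights from memory for every single token. Generation is therefore limited mainly by memory bandwidth rather than by raw compute.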
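One way to quantify the difference between the two phases is arithmetic intensity: the number of floating-point operations performed per byte moved from memory. The sketch below computes it for a single linear layer, assuming the weights are read once per call, activations are read and written once at the same 16-bit precision, and a multiply-add counts as two operations. The 4096 x 4096 shape is an illustrative choice matching the hidden size of Llama 2 7B and Llama 3 8B; a real transformer layer has several projections of different shapes.

```python
def linear_layer_intensity(d_in: int, d_out: int, n_tokens: int,
                           bytes_per_elem: float = 2.0) -> float:
    """FLOPs per byte of memory traffic for Y = X @ W.T over n_tokens tokens.

    Assumptions: W (d_out x d_in) is read once per call, activations are read
    and written once, all at bytes_per_elem bytes, and one multiply-add = 2 FLOPs.
    """
    flops = 2 * d_in * d_out * n_tokens
    bytes_moved = bytes_per_elem * (d_in * d_out + n_tokens * (d_in + d_out))
    return flops / bytes_moved


hidden = 4096  # illustrative square projection
print(f"generation (1 token):    {linear_layer_intensity(hidden, hidden, 1):6.1f} FLOPs/byte")
print(f"processing (512 tokens): {linear_layer_intensity(hidden, hidden, 512):6.1f} FLOPs/byte")
```

Under these assumptions, generating one token performs about 1 FLOP per byte, while processing a 512-token prompt performs about 410 FLOPs per byte. A GPU needs far more than 1 FLOP per byte to keep its cores busy, so during generation the cores spend most of their time waiting on memory.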

Imagine it like this: the M2 Ultra is a superfast train. During processing, it carries a full load of passengers (prompt tokens) on every trip. During generation, it must make a complete round trip for each individual passenger, so even a very fast train moves far fewer passengers per hour.

2. The Challenge of Quantization

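Quantization stores model parameters in fewer bits: roughly 8.5 bits per weight for Q8_0 and 4.5 for Q4_0, versus 16 for F16. On the M2 Ultra it cuts both ways. Prompt processing gets slightly slower with quantized weights (F16 is 11% to 24% faster in the benchmarks), likely because the compressed values must be unpacked before the chip can compute with them. Token generation, however, gets much faster: 4-bit formats run about 2.1 to 2.6 times faster than F16, because every generated token requires reading fewer bytes from memory. The real cost of quantization is a possible small loss of accuracy, not a loss of generation speed.

Think of quantization as condensing information into smaller packages. Smaller luggage makes every trip lighter, which pays off most during generation, even though each package takes a moment to unpack on arrival.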
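To make "condensing information into smaller packages" concrete, here is a simplified sketch of 4-bit block quantization. Like llama.cpp's Q4_0 and Q8_0 formats, it groups weights into blocks of 32 and stores one 16-bit scale per block, which is where the 4.5 and 8.5 bits-per-weight figures come from. The rounding scheme itself is a simplified symmetric variant, not the byte-exact Q4_0 layout, and the random weights are synthetic.

```python
import random

BLOCK_SIZE = 32  # weights per block, as in the Q4_0 and Q8_0 formats


def quantize_block_4bit(block: list[float]) -> tuple[float, list[int]]:
    """Simplified symmetric 4-bit quantization: one scale plus ints in [-8, 7]."""
    amax = max(abs(x) for x in block)
    scale = amax / 7 if amax > 0 else 1.0  # map the largest magnitude to +/-7
    return scale, [max(-8, min(7, round(x / scale))) for x in block]


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Reconstruct approximate weights from the scale and the 4-bit integers."""
    return [scale * v for v in q]


def bits_per_weight(value_bits: int, scale_bits: int = 16,
                    block_size: int = BLOCK_SIZE) -> float:
    """Storage per weight when every block also stores one scale."""
    return value_bits + scale_bits / block_size


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]  # synthetic block
scale, q = quantize_block_4bit(weights)
restored = dequantize_block(scale, q)
max_err = max(abs(w - r) for w, r in zip(weights, restored))
print(f"bits/weight: F16 16, 8-bit blocks {bits_per_weight(8)}, 4-bit blocks {bits_per_weight(4)}")
print(f"max error {max_err:.5f} <= half a step {scale / 2:.5f}")
```

Every weight is reconstructed to within half a scale step, which is the small accuracy cost paid for storing about 3.5 times fewer bits than F16.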

3. The Limits of Memory Bandwidth

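The sheer size of modern LLMs demands substantial memory bandwidth. The M2 Ultra's 800GB/s is high for a desktop chip, but because generation must stream the whole model for every token, bandwidth sets a hard ceiling. The F16 weights of Llama 3 70B occupy roughly 141GB, which caps generation at about 800 / 141, or roughly 5.7 tokens/second, close to the measured 4.71. The large memory capacity lets the M2 Ultra load models that would not fit on a single consumer GPU, but it does not make them fast.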

Picture the memory bandwidth as a highway connecting the train station to the platform. A narrow highway will cause traffic jams, hindering the rapid delivery of passengers (data) to their destination (text generation).
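If every generated token requires streaming all model weights from memory once, bandwidth divided by model size gives an upper bound on generation speed. The sketch below applies that rule with Apple's published 800GB/s figure and compares the result with the measured numbers. It ignores KV-cache traffic and assumes the full theoretical bandwidth is usable, so real ceilings are lower; the parameter counts and the Q4_K_M bits-per-weight value are approximations.

```python
BANDWIDTH_GB_S = 800.0  # Apple's published M2 Ultra memory bandwidth

# Approximate parameter counts and bits per weight (Q4_K_M is a rough estimate).
PARAMS = {"Llama 2 7B": 6.74e9, "Llama 3 8B": 8.03e9, "Llama 3 70B": 70.6e9}
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5, "Q4_K_M": 4.9}

# Measured generation speeds (tokens/second) from the tables above.
MEASURED = {
    ("Llama 2 7B", "F16"): 39.86, ("Llama 2 7B", "Q8_0"): 62.14,
    ("Llama 2 7B", "Q4_0"): 88.64, ("Llama 3 8B", "F16"): 36.25,
    ("Llama 3 8B", "Q4_K_M"): 76.28, ("Llama 3 70B", "F16"): 4.71,
    ("Llama 3 70B", "Q4_K_M"): 12.13,
}


def max_tokens_per_second(model: str, fmt: str,
                          bandwidth_gb_s: float = BANDWIDTH_GB_S) -> float:
    """Upper bound if each token must read every weight from memory once."""
    weight_bytes = PARAMS[model] * BITS_PER_WEIGHT[fmt] / 8
    return bandwidth_gb_s * 1e9 / weight_bytes


for (model, fmt), measured in MEASURED.items():
    ceiling = max_tokens_per_second(model, fmt)
    print(f"{model:<11} {fmt:<6} ceiling ~{ceiling:6.1f} tok/s, "
          f"measured {measured:6.2f} ({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions, Llama 3 70B in F16 reaches about 83% of its roughly 5.7 tokens/second ceiling, consistent with bandwidth being the binding limit. The smaller quantized models land further below their ceilings (about 42% for Llama 2 7B Q4_0), which hints that other overheads, such as unpacking quantized weights, start to matter once less data has to move.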

Conclusion: Unveiling the Potential and Limitations

The Apple M2 Ultra, with its powerful processing capabilities and generous unified memory, is a formidable chip for AI tasks; the benchmarks show it can even run Llama 3 70B at full F16 precision. Nonetheless, its LLM performance, particularly with larger models, is held back mainly by memory bandwidth during text generation. Quantization eases this bottleneck at a small cost in accuracy, but it does not remove it. As AI models continue to grow, their demands for memory bandwidth and capacity will only escalate, requiring even more efficient and powerful solutions.

FAQ

Q: What are the best LLM models for the Apple M2 Ultra?

A: The Apple M2 Ultra performs best with smaller LLMs like Llama 2 7B and Llama 3 8B, which process prompts at over 1,000 tokens/second and generate 36 to 89 tokens/second. Llama 3 70B is still usable, especially when quantized (12.13 tokens/second with Q4_K_M versus 4.71 with F16), though future software optimizations and hardware advances could improve it significantly.

Q: What is quantization and how does it impact LLM performance on the Apple M2 Ultra?

A: Quantization stores model parameters in fewer bits, shrinking the model's memory footprint. On the Apple M2 Ultra, it speeds up token generation substantially (Llama 3 8B goes from 36.25 to 76.28 tokens/second with Q4_K_M), while slightly reducing prompt-processing speed and often causing a small loss in accuracy.

Q: Why does Apple M2 Ultra struggle with text generation?

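A: Text generation is sequential: each token depends on the one before it, so the chip must read essentially all of the model's weights from memory for every token it produces. This makes generation limited mainly by memory bandwidth rather than by raw compute, and the effect grows with model size, which is why Llama 3 70B generates far fewer tokens per second than the 7B and 8B models.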

Keywords:

Apple M2 Ultra, LLM, Large Language Model, Llama 2, Llama 3, Token Speed, Processing, Generation, Quantization, Memory Bandwidth, AI, Deep Learning, Neural Network, Performance, Limitations, Bottleneck, GPU Cores, Unified Memory.