What Are the Limitations of the Apple M2 Pro for AI Tasks?

Introduction

The Apple M2 Pro is a system-on-chip that combines CPU cores, a GPU, and a Neural Engine in one package, and it has been praised for its processing power and energy efficiency. But how well does it handle AI workloads, specifically running large language models (LLMs)? This article examines the M2 Pro's strengths and limitations for running LLMs.

Understanding LLMs and Token Speed

LLMs, such as the models behind ChatGPT, are AI models trained on massive amounts of text to understand and generate human-like text. They process text as a sequence of "tokens": words, punctuation marks, or pieces of words. Speed is measured in tokens per second in two phases: prompt processing (reading the input, which can be done in parallel) and text generation (producing the output one token at a time). Together, these two rates determine how fast a model feels in practice.

The M2 Pro's AI Capabilities: A Closer Look at Token Speeds

The M2 Pro includes a GPU (16 or 19 cores, depending on the configuration) and a dedicated 16-core Neural Engine, both intended to accelerate compute-heavy work such as training and running AI models. The table below shows measured token throughput for Llama 2 7B on both GPU configurations.

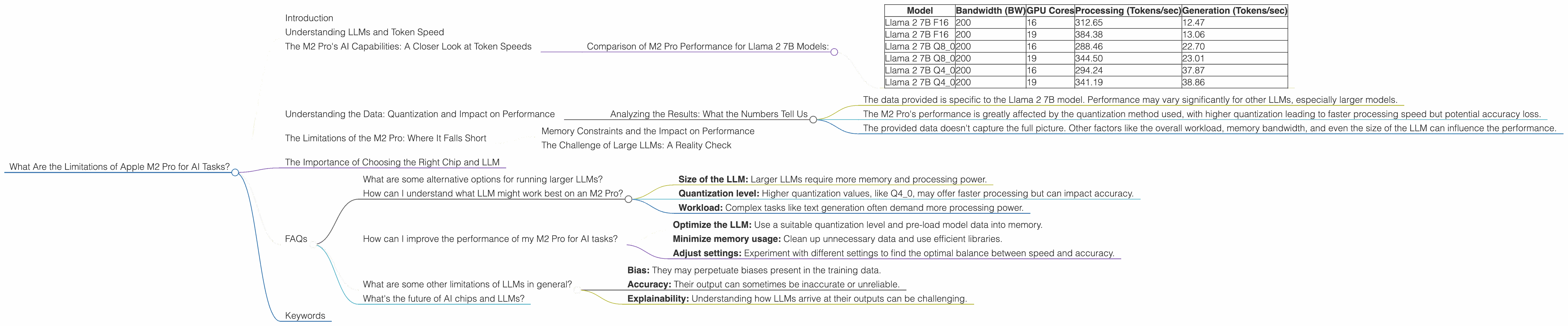

Comparison of M2 Pro performance for Llama 2 7B models:

| Model (format) | Memory bandwidth (GB/s) | GPU cores | Prompt processing (tokens/s) | Text generation (tokens/s) |
|---|---|---|---|---|
| Llama 2 7B F16 | 200 | 16 | 312.65 | 12.47 |
| Llama 2 7B F16 | 200 | 19 | 384.38 | 13.06 |
| Llama 2 7B Q8_0 | 200 | 16 | 288.46 | 22.70 |
| Llama 2 7B Q8_0 | 200 | 19 | 344.50 | 23.01 |
| Llama 2 7B Q4_0 | 200 | 16 | 294.24 | 37.87 |
| Llama 2 7B Q4_0 | 200 | 19 | 341.19 | 38.86 |

Important note: These figures cover only Llama 2 7B. Performance can vary considerably with the specific LLM and its quantization format (F16, Q8_0, or Q4_0 in this table).

Understanding the Data: Quantization and Impact on Performance

Quantization compresses an LLM by storing its weights with fewer bits. The model then needs less memory, which lets larger models fit and speeds up text generation, because fewer bytes must be read from memory for each new token. As the table shows, it does not make prompt processing faster. The trade-off is that aggressive quantization can reduce the accuracy of the LLM.

- F16: 16-bit ("half-precision") floating point, or 2 bytes per weight. In this table it is the unquantized baseline: its accuracy loss compared with 32-bit weights is usually negligible for inference, but it needs the most memory and gives the slowest generation.

- Q8_0: Each weight is stored as an 8-bit integer, in blocks of 32 weights that share one 16-bit scale factor (the "_0" variant stores only a scale, with no offset). That works out to about 8.5 bits per weight, roughly half the size of F16, and the accuracy loss is usually small.

- Q4_0: Each weight is stored as a 4-bit integer, again in blocks of 32 with one shared 16-bit scale, or about 4.5 bits per weight. This cuts storage to roughly a quarter of F16 and gives the fastest generation, but it can cause a more noticeable accuracy loss than F16 or Q8_0.

Remember: More aggressive quantization (fewer bits per weight, as in Q4_0) generally gives faster text generation but may reduce the accuracy of the LLM's outputs.
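To make the block formats concrete, here is a minimal sketch of Q8_0-style quantization in plain Python. It assumes llama.cpp's reference layout (32 weights per block sharing one 16-bit scale, with the largest magnitude in the block mapped to ±127). The weights are made-up values, the scale is kept as a Python float rather than a true 16-bit number, rounding details may differ slightly from llama.cpp, and the function names are illustrative rather than llama.cpp's API. The script also computes the effective bits per weight, including the scale, for 8-bit and 4-bit blocks.

```python
# Minimal sketch of Q8_0-style block quantization (assumed llama.cpp layout:
# 32 weights per block, one shared scale stored in 16 bits).
BLOCK_SIZE = 32

def quantize_q8_0(block):
    """Map 32 floats to one scale plus 32 signed 8-bit integers in [-127, 127]."""
    if len(block) != BLOCK_SIZE:
        raise ValueError(f"expected {BLOCK_SIZE} weights, got {len(block)}")
    amax = max(abs(x) for x in block)
    scale = amax / 127.0  # the largest magnitude maps to +/-127
    if scale == 0.0:
        return 0.0, [0] * BLOCK_SIZE
    quants = [round(x / scale) for x in block]
    return scale, quants

def dequantize_q8_0(scale, quants):
    """Recover approximate weights from the scale and the 8-bit integers."""
    return [q * scale for q in quants]

def bits_per_weight(bits_per_quant, scale_bits=16, block_size=BLOCK_SIZE):
    """Effective storage cost per weight, including the per-block scale."""
    return (block_size * bits_per_quant + scale_bits) / block_size

# Made-up example weights within the range [-0.32, 0.31].
weights = [((i * 37) % 64 - 32) / 100.0 for i in range(BLOCK_SIZE)]
scale, quants = quantize_q8_0(weights)
restored = dequantize_q8_0(scale, quants)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(f"scale = {scale:.5f}, max abs error = {max_error:.5f}")
print(f"Q8_0 ~ {bits_per_weight(8)} bits/weight, Q4_0 ~ {bits_per_weight(4)} bits/weight")
```

The extra 16 bits per block are why these formats cost about 8.5 and 4.5 bits per weight rather than exactly 8 and 4. Q4_0 applies the same idea with 4-bit values, which halves storage again but leaves only 16 levels per block, hence its larger accuracy loss.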

Analyzing the Results: What the Numbers Tell Us

The data shows that the M2 Pro runs a 7B model at usable speeds. Llama 2 7B reaches 288.46 to 384.38 tokens per second for prompt processing and 12.47 to 38.86 tokens per second for generation, with the fastest generation coming from Q4_0 and the fastest processing from F16 on the 19-core GPU.
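The ranges above hide a clearer pattern. The short script below only recomputes ratios from the table's own numbers (no new measurements): it compares the 19-core and 16-core GPUs for each format, and each quantized format against F16 on the 16-core GPU.

```python
# Throughput figures copied from the table above (tokens per second).
# Key: (format, gpu_cores) -> (prompt_processing, text_generation)
RESULTS = {
    ("F16", 16): (312.65, 12.47),
    ("F16", 19): (384.38, 13.06),
    ("Q8_0", 16): (288.46, 22.70),
    ("Q8_0", 19): (344.50, 23.01),
    ("Q4_0", 16): (294.24, 37.87),
    ("Q4_0", 19): (341.19, 38.86),
}

def core_speedup(fmt):
    """Ratio of 19-core to 16-core throughput for one format."""
    pp16, tg16 = RESULTS[(fmt, 16)]
    pp19, tg19 = RESULTS[(fmt, 19)]
    return pp19 / pp16, tg19 / tg16

def quant_speedup(fmt, cores=16):
    """Ratio of a quantized format's throughput to F16 on the same GPU."""
    pp_base, tg_base = RESULTS[("F16", cores)]
    pp, tg = RESULTS[(fmt, cores)]
    return pp / pp_base, tg / tg_base

for fmt in ("F16", "Q8_0", "Q4_0"):
    pp, tg = core_speedup(fmt)
    print(f"{fmt}: 19 vs 16 cores -> processing x{pp:.2f}, generation x{tg:.2f}")

for fmt in ("Q8_0", "Q4_0"):
    pp, tg = quant_speedup(fmt)
    print(f"{fmt} vs F16 (16 cores) -> processing x{pp:.2f}, generation x{tg:.2f}")
```

The ratios show the 19-core GPU speeding up prompt processing by roughly 16% to 23% but generation by only 1% to 5%, while Q8_0 and Q4_0 raise generation speed by about 1.8x and 3.0x and leave prompt processing slightly slower than F16. This suggests that prompt processing scales with GPU compute, while generation tracks how many bytes must be read per token.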

However, it is essential to consider the following:

- The data provided is specific to the Llama 2 7B model. Performance may vary significantly for other LLMs, especially larger models.

- Quantization strongly affects performance: fewer bits per weight means faster generation but potentially lower accuracy.

- The data doesn't capture the full picture. Memory bandwidth is the same (200 GB/s) in every row, so its effect is not visible here, and factors such as context length, the inference software, and other workloads running at the same time can also influence performance.
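Memory bandwidth matters because, for a single request, generating each token requires reading roughly all of the model's weights from memory. Dividing bandwidth by model size therefore gives a rough ceiling on generation speed. The sketch below assumes about 6.74 billion parameters for Llama 2 7B, treats 200 GB/s as 200 × 10^9 bytes per second, and assumes llama.cpp's effective sizes of 16, 8.5, and 4.5 bits per weight for F16, Q8_0, and Q4_0. It ignores the KV cache and any tensors kept at higher precision, so it is an estimate only.

```python
# Rough upper bound on single-stream generation speed, assuming every
# generated token requires reading all model weights from memory once.
BANDWIDTH_GB_PER_S = 200      # M2 Pro memory bandwidth, from the table
LLAMA2_7B_PARAMS = 6.74e9     # approximate parameter count (assumption)

# Assumed effective bits per weight (llama.cpp block layouts).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}
# Measured generation speed, 16-core GPU, from the table.
MEASURED_TG_16_CORES = {"F16": 12.47, "Q8_0": 22.70, "Q4_0": 37.87}

def model_size_gb(params, bits_per_weight):
    """Weight storage in GB (10^9 bytes)."""
    return params * bits_per_weight / 8 / 1e9

def generation_ceiling(params, bits_per_weight, bandwidth_gb_s=BANDWIDTH_GB_PER_S):
    """Tokens/s if memory bandwidth were the only limit."""
    return bandwidth_gb_s / model_size_gb(params, bits_per_weight)

for fmt, bpw in BITS_PER_WEIGHT.items():
    size = model_size_gb(LLAMA2_7B_PARAMS, bpw)
    ceiling = generation_ceiling(LLAMA2_7B_PARAMS, bpw)
    measured = MEASURED_TG_16_CORES[fmt]
    print(f"{fmt}: ~{size:.1f} GB, ceiling ~{ceiling:.1f} tok/s, "
          f"measured {measured} tok/s ({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions the ceilings are roughly 14.8, 27.9, and 52.8 tokens/s, and the measured 16-core results reach about 84%, 81%, and 72% of them. Generation on the M2 Pro therefore appears to be limited mainly by memory bandwidth, which is consistent with the table showing almost no generation gain from the extra GPU cores.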

The Limitations of the M2 Pro: Where It Falls Short

While the M2 Pro handles a 7B model like Llama 2 7B well, larger LLMs, especially those above 13B parameters, quickly run into its limits. The bottleneck is memory rather than raw GPU compute: the M2 Pro comes with at most 32 GB of unified memory, shared between the CPU, the GPU, and macOS, and its memory bandwidth is 200 GB/s.

Memory Constraints and the Impact on Performance

The M2 Pro's 16 GB or 32 GB of unified memory is plenty for 7B models but can fall short for very large LLMs. When a model does not fit, the system has to swap data between memory and storage, which slows everything down dramatically. This is especially noticeable during generation, because producing each new token requires reading essentially all of the model's weights.
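A back-of-the-envelope check shows where the memory limit bites: weight memory is roughly parameters × bits per weight ÷ 8. The sketch below assumes llama.cpp's effective sizes (16, 8.5, and 4.5 bits per weight), nominal parameter counts, and, as a loose assumption, that only about 75% of unified memory is available for the weights once macOS, other apps, and the KV cache take their share. The helper names are illustrative.

```python
# Rough weight-memory estimate for common model sizes.
# Assumed effective bits per weight for llama.cpp's F16, Q8_0 and Q4_0 formats.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}
MODEL_PARAMS = {"7B": 7e9, "13B": 13e9, "34B": 34e9, "70B": 70e9}  # nominal sizes

def weights_gb(params, bits_per_weight):
    """Weight storage in GB (10^9 bytes)."""
    return params * bits_per_weight / 8 / 1e9

def fits(params, fmt, memory_gb, usable_fraction=0.75):
    """True if the weights fit in the share of memory left for the model.

    usable_fraction is an assumption: macOS, other apps, the KV cache and
    activations all need memory too, so the model cannot use all of RAM.
    """
    return weights_gb(params, BITS_PER_WEIGHT[fmt]) <= memory_gb * usable_fraction

for name, params in MODEL_PARAMS.items():
    row = []
    for fmt, bpw in BITS_PER_WEIGHT.items():
        size = weights_gb(params, bpw)
        status = "fits" if fits(params, fmt, memory_gb=32) else "too big"
        row.append(f"{fmt} {size:5.1f} GB ({status})")
    print(f"{name:>4}: " + ", ".join(row))
```

With a 32 GB M2 Pro and these assumptions, 7B fits in every format, 13B fits at Q8_0 or Q4_0, 34B fits only at Q4_0 (about 19 GB), and 70B does not fit even at Q4_0 (about 39 GB). On a 16 GB machine, even 7B at F16 (14 GB) exceeds the assumed budget.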

The Challenge of Large LLMs: A Reality Check

Think of it this way: Imagine trying to fit a massive library of books (a large LLM) into a small room (the M2 Pro's memory). You'd likely have to keep moving books in and out of the room to accommodate everything, slowing down the process.

The Importance of Choosing the Right Chip and LLM

The M2 Pro's AI performance depends heavily on the specific LLM and its configuration. For smaller LLMs like Llama 2 7B, it delivers solid results. Larger models, however, run into significant limits set by the chip's memory capacity and bandwidth.

FAQs

What are some alternative options for running larger LLMs?

For larger LLMs, consider machines with data-center GPUs such as the NVIDIA A100 (40 or 80 GB) or A40 (48 GB), which offer much higher memory bandwidth and, combined across several GPUs if needed, enough memory for these models. Alternatively, cloud services like Google Colab or Amazon SageMaker provide on-demand access to more powerful hardware.

How can I tell which LLM will work best on an M2 Pro?

Consider the following factors:

- Size of the LLM: Larger LLMs require more memory and processing power.

- Quantization level: Lower-bit formats like Q4_0 use less memory and generate faster but can reduce accuracy.

- Workload: Long prompts depend mostly on prompt-processing speed, while long answers depend mostly on generation speed.
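For the workload factor, a rough estimate of response time is prompt tokens ÷ processing speed plus output tokens ÷ generation speed. This sketch uses the table's Llama 2 7B Q4_0 figures for the 16-core GPU; the two workloads and their token counts are hypothetical, and real speeds fall somewhat as the context grows.

```python
# Rough response-time estimate: the prompt is processed in parallel first,
# then the answer is generated one token at a time.
PP_TOKENS_PER_S = 294.24  # Llama 2 7B Q4_0, 16-core GPU (from the table)
TG_TOKENS_PER_S = 37.87   # same configuration, text generation

def estimate_seconds(prompt_tokens, output_tokens,
                     pp_tok_s=PP_TOKENS_PER_S, tg_tok_s=TG_TOKENS_PER_S):
    """Return (total, prompt-processing, generation) time in seconds."""
    processing = prompt_tokens / pp_tok_s
    generation = output_tokens / tg_tok_s
    return processing + generation, processing, generation

# Hypothetical workloads: (prompt tokens, output tokens).
workloads = {
    "summarize a long document": (3000, 200),
    "short chat answer": (100, 300),
}

for name, (prompt, output) in workloads.items():
    total, proc, gen = estimate_seconds(prompt, output)
    print(f"{name}: ~{total:.1f} s total "
          f"({proc:.1f} s processing, {gen:.1f} s generating)")
```

Under these numbers, the 3,000-token summary takes about 15.5 s, roughly two-thirds of it spent processing the prompt, while the short chat answer takes about 8.3 s, almost all of it spent generating.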

How can I improve the performance of my M2 Pro for AI tasks?

Here are some tips:

- Optimize the LLM: Use a suitable quantization level and pre-load model data into memory.

- Minimize memory usage: Close unnecessary applications and use memory-efficient libraries.

- Adjust settings: Experiment with different settings to find the optimal balance between speed and accuracy.
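When experimenting with settings, it helps to measure throughput the same way each time. Below is a minimal, library-agnostic timing harness. It assumes your inference library can stream output as a Python iterable that yields one token per item; `fake_generate` is a hypothetical stand-in that simply sleeps per token so the harness runs without a model, and you would replace it with a wrapper around your own library's streaming call.

```python
import time
from typing import Callable, Dict, Iterable

def tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Consume one streamed generation and return its throughput in tokens/s."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")

def compare(settings: Dict[str, Callable[[], Iterable[str]]], runs: int = 3) -> Dict[str, float]:
    """Best-of-`runs` throughput for each named configuration."""
    return {
        name: max(tokens_per_second(generate) for _ in range(runs))
        for name, generate in settings.items()
    }

def fake_generate(n_tokens: int = 20, delay_s: float = 0.001):
    """Hypothetical stand-in for a model: yields tokens with a fixed delay."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"

results = compare({
    "config A (1 ms/token)": lambda: fake_generate(delay_s=0.001),
    "config B (20 ms/token)": lambda: fake_generate(delay_s=0.020),
})
for name, tps in results.items():
    print(f"{name}: {tps:.0f} tokens/s")
```

Taking the best of several runs reduces noise from background activity. For a fair comparison, keep the prompt and output length fixed across configurations, since both affect measured speed.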

What are some other limitations of LLMs in general?

LLMs can have limitations in terms of:

- Bias: They may perpetuate biases present in the training data.

- Accuracy: Their output can sometimes be inaccurate or unreliable.

- Explainability: Understanding how LLMs arrive at their outputs can be challenging.

What's the future of AI chips and LLMs?

The field of AI is rapidly evolving. We can expect to see advancements in hardware design and LLM algorithms. New chips with improved memory capacity and specialized architectures are likely to emerge, making it easier to run larger and more complex LLMs locally.
