What Are the Limitations of Apple M2 Max for AI Tasks?

Introduction

The Apple M2 Max is a powerful chip that boasts impressive performance for a wide range of tasks, including running AI models. However, while it excels in certain areas, it has limitations when it comes to handling the demands of large language models (LLMs). This article will delve into these limitations, exploring how the M2 Max performs with different LLMs and quantization levels, using publicly available benchmark data.

Remember, the world of AI is always evolving, so these limitations can change as new models and optimizations emerge.

Apple M2 Max: A Powerhouse with Potential

The Apple M2 Max is a marvel of chip engineering, designed to push the boundaries of performance for both creative and demanding workloads. It's packed with features like a GPU with up to 38 cores and up to 96 GB of unified memory, enabling it to tackle complex tasks with ease.

But is it truly the AI powerhouse we dream of? While it can handle smaller LLMs with aplomb, the M2 Max faces challenges when it comes to processing the giants of AI. Let's dive deeper into the specifics.

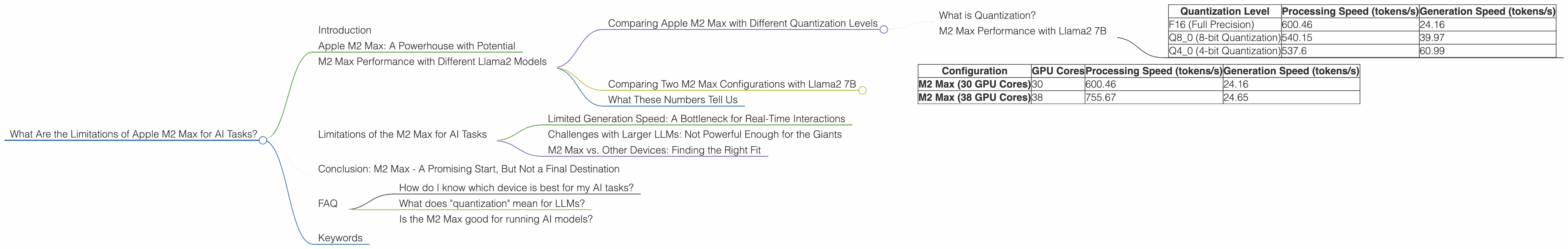

M2 Max Performance with Different Llama2 Models

Our focus will be on the performance of the Apple M2 Max with the popular Llama 2 family of LLMs, specifically the 7B variant. We'll examine how different quantization levels affect performance, and how the M2 Max stacks up against other options.

The numbers we'll be working with are in tokens per second (tokens/s). Tokens are essentially the building blocks of text, so a higher tokens/s number means faster processing and quicker results.

Comparing Apple M2 Max with Different Quantization Levels

What is Quantization?

Think of quantization like squeezing a giant water balloon into a smaller container. LLMs are massive, taking up a lot of space and requiring tons of computing power. Quantization compresses the model by storing its weights with fewer bits, making it smaller and more efficient but sacrificing some accuracy in the process. More aggressive compression (fewer bits per weight, for example 4-bit instead of 8-bit) typically means a smaller model and faster text generation, with potentially reduced quality.
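To make the trade-off concrete, here is a minimal sketch of one simple quantization scheme: each block of weights shares a single scale, and every weight is rounded to a small signed integer. This is an illustration only, not the exact format behind the Q8_0 or Q4_0 files benchmarked in this article, and the sample weights are hypothetical.

```python
def quantize_block(values, bits):
    """Map floats to signed integers using one shared scale (absmax scheme)."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8-bit, 7 for 4-bit
    absmax = max(abs(v) for v in values)
    scale = absmax / qmax if absmax > 0 else 1.0
    quants = [round(v / scale) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the stored integers and scale."""
    return [q * scale for q in quants]


def max_roundtrip_error(values, bits):
    """Largest absolute difference after quantizing and dequantizing."""
    scale, quants = quantize_block(values, bits)
    restored = dequantize_block(scale, quants)
    return max(abs(v - r) for v, r in zip(values, restored))


# Hypothetical weights, chosen only for illustration.
weights = [0.12, -0.53, 0.97, -0.08, 0.31, -0.76, 0.44, 0.05]

for bits in (8, 4):
    error = max_roundtrip_error(weights, bits)
    print(f"{bits}-bit max round-trip error: {error:.4f}")
```

With fewer bits there are fewer integer levels to round to, so the round-trip error grows. That is the accuracy cost described above.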

M2 Max Performance with Llama2 7B

Let's examine the token speed performance of the M2 Max with different quantization levels for the Llama2 7B model.

| Quantization Level | Processing Speed (tokens/s) | Generation Speed (tokens/s) |
|---|---|---|
| F16 (16-bit float, unquantized) | 600.46 | 24.16 |
| Q8_0 (8-bit Quantization) | 540.15 | 39.97 |
| Q4_0 (4-bit Quantization) | 537.6 | 60.99 |

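We see that the M2 Max achieves impressive processing speeds at every precision level, peaking with the unquantized F16 model. Generation speed, which is how fast the model can produce new text, is far lower: about 24 tokens/s at F16, rising to about 61 tokens/s at Q4_0. In other words, quantization speeds up generation considerably while slightly reducing processing speed. This is an important consideration, especially for applications like chatbots where real-time text generation is crucial.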
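The trend is easier to see when each row is expressed relative to the F16 baseline. The short sketch below does that arithmetic using only the figures from the table; the function and variable names are illustrative.

```python
# (processing tokens/s, generation tokens/s) for Llama2 7B, from the table above.
benchmarks = {
    "F16": (600.46, 24.16),
    "Q8_0": (540.15, 39.97),
    "Q4_0": (537.6, 60.99),
}


def relative_to_baseline(results, baseline="F16"):
    """Return each entry's speeds as a multiple of the baseline entry's speeds."""
    base_processing, base_generation = results[baseline]
    return {
        name: (processing / base_processing, generation / base_generation)
        for name, (processing, generation) in results.items()
    }


ratios = relative_to_baseline(benchmarks)
for name, (processing_ratio, generation_ratio) in ratios.items():
    print(f"{name}: processing x{processing_ratio:.2f}, generation x{generation_ratio:.2f}")
```

Relative to F16, Q4_0 generates roughly 2.5 times as fast while processing at about 90% of the F16 rate.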

Comparing Two M2 Max Configurations with Llama2 7B

Now, let's compare two different M2 Max configurations with Llama2 7B.

| Configuration | GPU Cores | Processing Speed (tokens/s) | Generation Speed (tokens/s) |
|---|---|---|---|
| M2 Max (30 GPU Cores) | 30 | 600.46 | 24.16 |
| M2 Max (38 GPU Cores) | 38 | 755.67 | 24.65 |

The configuration with 38 GPU cores delivers a noticeably faster processing speed, offering a 25.8% improvement compared to the 30 GPU core configuration. However, the generation speed is almost unchanged (about 2% faster), suggesting that generation is limited by something other than GPU core count, most likely memory bandwidth.
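The percentages in this comparison can be reproduced with a few lines of arithmetic. The sketch below uses only the numbers from the table; the helper name is illustrative.

```python
def percent_change(old, new):
    """Percentage increase (or decrease, if negative) going from old to new."""
    return (new - old) / old * 100


core_gain = percent_change(30, 38)                # GPU cores
processing_gain = percent_change(600.46, 755.67)  # processing tokens/s
generation_gain = percent_change(24.16, 24.65)    # generation tokens/s

print(f"GPU cores:        +{core_gain:.1f}%")
print(f"Processing speed: +{processing_gain:.1f}%")
print(f"Generation speed: +{generation_gain:.1f}%")
```

Processing speed grows almost in step with the core count (roughly 26% versus 27%), while generation speed barely moves.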

What These Numbers Tell Us

The M2 Max exhibits impressive processing power when dealing with Llama2 7B at every quantization level tested. However, its performance in text generation, which is often the most crucial aspect of LLM applications, is not as remarkable: it ranges from about 24 tokens/s at F16 to about 61 tokens/s at Q4_0.

Limitations of the M2 Max for AI Tasks

The M2 Max, while capable, faces limitations that might restrict its suitability for certain AI workloads, particularly when it comes to large and complex LLMs.

Limited Generation Speed: A Bottleneck for Real-Time Interactions

The relatively low generation speed of the M2 Max is a significant limitation. While it can process information quickly, it struggles to generate text at a pace that is ideal for real-time AI interaction. This means that applications demanding fast response times, such as chatbots, might find the M2 Max lacking.

For a simple analogy, imagine a super-fast conveyor belt bringing you a massive amount of raw ingredients. The M2 Max is great at quickly processing these ingredients, but it’s a bit slow at cooking them into a finished dish.
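A rough way to judge whether these speeds are fast enough is to estimate how long a single reply would take. The sketch below assumes that "processing speed" applies to reading the prompt and "generation speed" applies to producing the reply, and it uses hypothetical prompt and reply lengths. It ignores model loading and any other overhead.

```python
def estimate_response_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimated time to read the prompt plus time to generate the reply."""
    prompt_seconds = prompt_tokens / processing_tps
    generation_seconds = output_tokens / generation_tps
    return prompt_seconds + generation_seconds


# Hypothetical workload: a 512-token prompt and a 256-token reply.
PROMPT_TOKENS = 512
OUTPUT_TOKENS = 256

# Speeds for Llama2 7B on the 30-core M2 Max, taken from the benchmark table.
speeds = {
    "F16": (600.46, 24.16),
    "Q8_0": (540.15, 39.97),
    "Q4_0": (537.6, 60.99),
}

for name, (processing_tps, generation_tps) in speeds.items():
    seconds = estimate_response_seconds(
        PROMPT_TOKENS, OUTPUT_TOKENS, processing_tps, generation_tps
    )
    print(f"{name}: about {seconds:.1f} s per reply")
```

Under these assumptions nearly all of the wait comes from generation rather than prompt processing, which is why generation speed matters most for chat-style use.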

Challenges with Larger LLMs: Not Powerful Enough for the Giants

The M2 Max struggles to handle larger LLMs, like the 13B and 70B variants of Llama2, which are capable of more complex and nuanced responses.

Imagine a chef trying to cook a multi-course meal for a large party. While they might be skilled and efficient for smaller gatherings, they might lack the equipment and resources to handle a large feast. Similarly, the M2 Max might not have enough processing power to effectively handle the larger, more complex LLMs.
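One resource that can be checked with simple arithmetic is memory, since a model's weights must fit in memory before it can run at all. The sketch below estimates weight storage from the parameter count and a nominal number of bits per weight. The bit widths are nominal assumptions (real quantized files carry some extra overhead), the estimate ignores the working memory needed during inference, and the memory budget is a hypothetical value to be replaced with your own machine's figure.

```python
def weight_gigabytes(parameter_count, bits_per_weight):
    """Approximate size of the model weights in gigabytes (10**9 bytes)."""
    return parameter_count * bits_per_weight / 8 / 1e9


# Nominal bits per weight (assumption: format overhead is ignored).
NOMINAL_BITS = {"F16": 16, "Q8_0": 8, "Q4_0": 4}

# Parameter counts for the Llama2 sizes mentioned in this article.
MODEL_PARAMETERS = {
    "7B": 7_000_000_000,
    "13B": 13_000_000_000,
    "70B": 70_000_000_000,
}

# Hypothetical memory budget; substitute the memory of your own machine.
memory_budget_gb = 32.0

for model, parameter_count in MODEL_PARAMETERS.items():
    for level, bits in NOMINAL_BITS.items():
        size_gb = weight_gigabytes(parameter_count, bits)
        verdict = "fits" if size_gb <= memory_budget_gb else "does not fit"
        print(f"Llama2 {model} at {level}: ~{size_gb:.1f} GB of weights, {verdict}")
```

The same arithmetic shows why quantization matters more as models grow: halving the bits per weight halves the memory the weights require.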

M2 Max vs. Other Devices: Finding the Right Fit

The M2 Max is a powerful chip, but it's not the best choice for every AI task. Other devices, like powerful GPUs with more processing power, might be more suitable for handling larger LLMs or demanding AI applications.

Consider it like choosing the right tool for the job. A hammer might be effective for pounding nails, but it's not the best choice for driving screws. Similarly, the M2 Max might be perfect for some AI tasks, but it's not ideal for everything.

Conclusion: M2 Max - A Promising Start, But Not a Final Destination

The Apple M2 Max offers a compelling combination of performance and efficiency, especially for processing smaller LLMs at different quantization levels. However, its generation speed limitations and struggles with larger models highlight the need for more powerful devices when working with demanding AI workloads.

The world of AI is constantly evolving, and it's likely that future generations of chips, including those from Apple, will be even better equipped to handle the increasing complexity and scale of LLMs. For now, the M2 Max is a good starting point for those exploring the realm of local LLMs, particularly for smaller models.

FAQ

How do I know which device is best for my AI tasks?

The optimal device depends on the specific LLM you are using, the size of your model, and your desired performance levels. For smaller LLMs, the M2 Max might be sufficient. However, if you require high generation speed or need to handle large LLMs, consider other devices like GPUs with more processing power.

What does "quantization" mean for LLMs?

Quantization is a technique for reducing the size of LLMs by storing their weights with fewer bits, without significantly degrading the quality of their output. Think of it like compressing a large image file into a smaller version without losing too much detail. This makes the models lighter and faster at generating text, but some accuracy might be lost in the process. In the benchmarks above, going from F16 to Q4_0 raised generation speed from about 24 to about 61 tokens/s, while processing speed dropped slightly.

Is the M2 Max good for running AI models?

The M2 Max is a capable chip for running AI models, particularly for smaller LLMs. It offers excellent processing speed, even at lower quantization levels. However, its limitations in generation speed and struggles with larger models might make it less suitable for demanding AI workloads.

Keywords

Apple M2 Max, AI, LLMs, Llama2, token speed, quantization, generation speed, processing speed, limitations, benchmarks, performance, GPU, GPU cores, F16, Q8_0, Q4_0, real-time interaction, AI tasks, chatbots, local LLMs