What Are the Limitations of Apple M2 for AI Tasks?

Introduction

The Apple M2 chip is a powerhouse of a processor, offering phenomenal performance for everyday tasks and even professional workflows. But what about its capabilities for AI tasks, specifically for working with large language models (LLMs)? This is a question that's on the minds of many developers and AI enthusiasts.

In this article, we'll look at how LLMs run on the M2 chip, dissecting its strengths and limitations when it comes to AI performance. We'll use measured benchmark numbers for Llama 2 7B at three precision levels to see how the M2 handles processing and generating text. Let's dive right in!

Understanding the M2 Chip and LLMs

The Apple M2 combines an 8-core CPU, up to 10 GPU cores, a 16-core Neural Engine, and unified memory shared by all of them, with a focus on efficiency. How quickly that memory can feed the processors matters a great deal for AI workloads.

Large language models are a type of AI model that can understand and generate human-like text. They're basically super-smart algorithms that learn from vast amounts of data, allowing them to do things like translate languages, write different kinds of creative content, and even answer your questions in a comprehensive, informative way.

Apple M2 Performance with Llama 2: A Detailed Look

To evaluate the M2's performance for AI tasks, we're going to look at a popular LLM called Llama 2. The Llama 2 series includes models with varying sizes – we'll be focusing on the Llama 2 7B model (7 billion parameters) in this article.

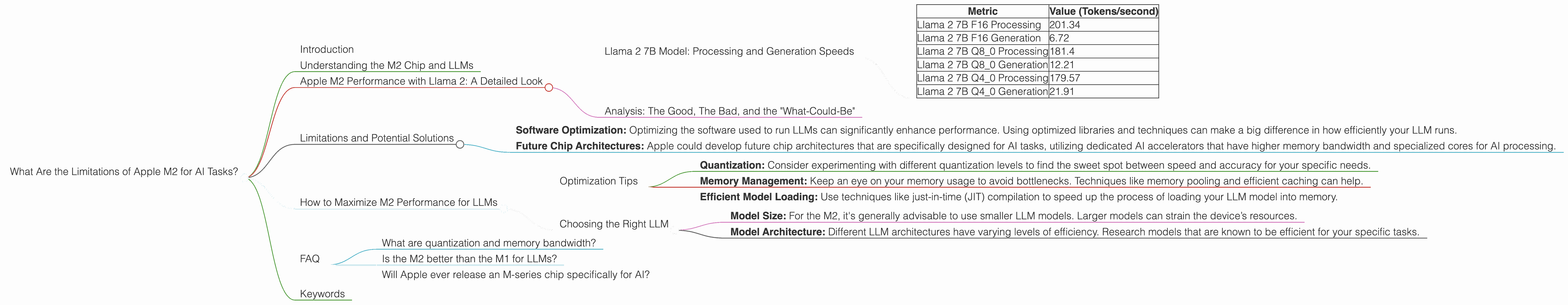

Llama 2 7B Model: Processing and Generation Speeds

Let's get into the nitty-gritty! We'll look at two speeds for Llama 2 7B on the M2: processing (how fast the chip reads the prompt you give it) and generation (how fast it produces new text). Both are measured in tokens per second, where a token is a small chunk of text such as a word or word fragment.

| Metric | Value (tokens/second) |
|---|---|
| Llama 2 7B F16 Processing | 201.34 |
| Llama 2 7B F16 Generation | 6.72 |
| Llama 2 7B Q8_0 Processing | 181.4 |
| Llama 2 7B Q8_0 Generation | 12.21 |
| Llama 2 7B Q4_0 Processing | 179.57 |
| Llama 2 7B Q4_0 Generation | 21.91 |

The table shows the results for three weight formats:

- F16: This is the unquantized baseline. It uses a 16-bit floating-point representation, offering good accuracy at the largest memory footprint.

- Q8_0: This uses 8-bit quantization, which roughly halves memory usage while potentially impacting accuracy slightly.

- Q4_0: This uses 4-bit quantization, further reducing memory footprint but potentially sacrificing accuracy more than Q8_0.
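To make the memory savings concrete, here is a rough back-of-the-envelope sketch of how much space the weights take in each format. It assumes a nominal 7 billion parameters and the bits-per-weight figures implied by llama.cpp's block layouts (each block of 32 weights carries one extra 16-bit scale); treat both as assumptions, not measurements.

```python
# Approximate weight-storage footprint of a 7B-parameter model per format.
# Assumptions: a nominal 7e9 parameters, and block layouts where every
# 32 weights share one 16-bit scale (as in llama.cpp's Q8_0 and Q4_0).
PARAMS = 7_000_000_000

BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": (32 * 8 + 16) / 32,  # 8.5 bits per weight
    "Q4_0": (32 * 4 + 16) / 32,  # 4.5 bits per weight
}


def weight_gigabytes(params: int, bits_per_weight: float) -> float:
    """Return the approximate size of the weights in gigabytes (10^9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


for name, bits in BITS_PER_WEIGHT.items():
    print(f"{name}: about {weight_gigabytes(PARAMS, bits):.2f} GB")
```

The estimate covers weights only. A real run also needs memory for the context cache and the rest of the system, so the practical requirement is higher.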

Analysis: The Good, The Bad, and the "What-Could-Be"

Processing Power: The M2 shines when it comes to processing text. At every precision level it handles Llama 2 7B prompts at roughly 180 to 201 tokens per second, so reading the text you feed it is rarely the slow part.

Generation Time: The generation speeds tell a different story. At 6.72 to 21.91 tokens per second, they are roughly 8 to 30 times slower than the processing speeds. The M2 struggles most at F16, where it manages only 6.72 tokens per second.

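Quantization Effects: As we move to lower-precision formats (Q8_0 and Q4_0), generation speeds improve substantially: from 6.72 tokens per second at F16 to 12.21 at Q8_0 and 21.91 at Q4_0, more than a threefold gain. Fewer bits per weight means fewer bytes to read from memory for each token, which speeds up generation, but it comes at the cost of potentially lower accuracy. Processing speed, by contrast, drops slightly, from 201.34 to 179.57 tokens per second.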
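If you want to check the ratios yourself, the short sketch below computes them directly from the table values. The dictionary layout and function names are just illustrative choices.

```python
# Tokens/second values copied from the benchmark table in this article.
results = {
    "F16": {"processing": 201.34, "generation": 6.72},
    "Q8_0": {"processing": 181.40, "generation": 12.21},
    "Q4_0": {"processing": 179.57, "generation": 21.91},
}


def generation_speedup(fmt: str, baseline: str = "F16") -> float:
    """How many times faster generation is in fmt than in the baseline format."""
    return results[fmt]["generation"] / results[baseline]["generation"]


def processing_to_generation_ratio(fmt: str) -> float:
    """How many times faster prompt processing is than generation for fmt."""
    return results[fmt]["processing"] / results[fmt]["generation"]


for fmt in results:
    print(
        f"{fmt}: generation {generation_speedup(fmt):.2f}x vs F16, "
        f"processing is {processing_to_generation_ratio(fmt):.1f}x faster than generation"
    )
```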

Overall: The M2 is a capable device for working with LLMs, but its performance when generating text with the Llama 2 7B model leaves room for improvement.

Limitations and Potential Solutions

The M2's limitations when it comes to LLM generation speeds are primarily related to the architecture of the chip.

Memory Bandwidth: The M2's memory bandwidth (100 GB/s on the base chip) is a key limiting factor. To generate each token, the chip has to read essentially all of the model's weights from memory, so generation speed is capped by how quickly those bytes can be moved rather than by raw compute.
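A simple way to see the bandwidth limit is to estimate an upper bound on generation speed: bandwidth divided by the size of the weights. The sketch below does that under loud assumptions: 100 GB/s for the base M2, roughly 6.74 billion parameters for Llama 2 7B, one full pass over the weights per generated token, and the bits-per-weight figures of llama.cpp's block formats. It ignores the context cache and every other overhead, so it is an idealized ceiling, not a prediction.

```python
# Idealized bandwidth-bound ceiling on generation speed.
BANDWIDTH_GB_S = 100.0  # assumption: published figure for the base M2
PARAMS = 6.74e9         # assumption: approximate Llama 2 7B parameter count

BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}  # assumed block layouts
MEASURED_GENERATION = {"F16": 6.72, "Q8_0": 12.21, "Q4_0": 21.91}  # from the table


def bandwidth_bound_tokens_per_second(
    bits_per_weight: float,
    params: float = PARAMS,
    bandwidth_gb_s: float = BANDWIDTH_GB_S,
) -> float:
    """Ceiling on tokens/s if every token requires reading all weights once."""
    weight_gb = params * bits_per_weight / 8 / 1e9
    return bandwidth_gb_s / weight_gb


for fmt, bits in BITS_PER_WEIGHT.items():
    bound = bandwidth_bound_tokens_per_second(bits)
    measured = MEASURED_GENERATION[fmt]
    print(
        f"{fmt}: ceiling {bound:.1f} tok/s, measured {measured:.2f} tok/s "
        f"({measured / bound:.0%} of ceiling)"
    )
```

Under these assumptions the measured numbers sit a little below the ceiling in every format, which is what you would expect if generation is mostly waiting on memory.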

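GPU Cores: The M2 has up to 10 GPU cores. That is enough for the strong prompt-processing numbers above, but these are general-purpose graphics cores rather than hardware built specifically for AI workloads, and extra compute does little for generation while memory bandwidth is the bottleneck.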

Potential Solutions

- Software Optimization: Optimizing the software used to run LLMs can significantly enhance performance. Using optimized libraries and techniques can make a big difference in how efficiently your LLM runs.

- Future Chip Architectures: Apple could develop future chip architectures that are specifically designed for AI tasks, utilizing dedicated AI accelerators that have higher memory bandwidth and specialized cores for AI processing.

How to Maximize M2 Performance for LLMs

Even with the limitations, you can maximize the M2's potential for working with LLMs.

Optimization Tips

- Quantization: Consider experimenting with different quantization levels to find the sweet spot between speed and accuracy for your specific needs.

- Memory Management: Keep an eye on your memory usage to avoid bottlenecks. Techniques like memory pooling and efficient caching can help.

- Efficient Model Loading: Use techniques like memory mapping (mmap), which lets the operating system page model weights in from disk on demand, to cut the time it takes to load your model into memory.
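When you experiment with the tips above, it helps to measure tokens per second the same way every time. Here is a minimal timing sketch. `fake_generate` is a hypothetical stand-in for whatever streaming generator your LLM runtime exposes; swap in the real one to get real numbers.

```python
import time
from typing import Callable, Iterable, Iterator


def tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Run generate() once and return tokens emitted per wall-clock second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_generate(n_tokens: int = 50, delay_s: float = 0.001) -> Iterator[str]:
    """Hypothetical stand-in for a real model's streaming token generator."""
    for i in range(n_tokens):
        time.sleep(delay_s)  # simulate the time spent producing one token
        yield f"tok{i}"


print(f"{tokens_per_second(fake_generate):.1f} tokens/s")
```

Run the same prompt and token count for each quantization level so the numbers are comparable, and repeat the measurement a few times, since the first run often includes model loading.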

Choosing the Right LLM

- Model Size: For the M2, it's generally advisable to use smaller models. Larger models can strain the device’s memory and slow generation further.

- Model Architecture: Different LLM architectures have varying levels of efficiency. Research models that are known to be efficient for your specific tasks.

FAQ

What are quantization and memory bandwidth?

Quantization is a technique that reduces the amount of data required to represent a number. Think of it like using smaller boxes to store your belongings – you can fit more things, but each box holds less.

Memory bandwidth is the speed at which data can be transferred between the processor and memory. It's like a highway – more lanes mean more traffic can flow, and faster speeds mean data moves quickly.
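To make quantization less abstract, here is a simplified sketch of symmetric 8-bit block quantization: one shared scale per block of weights, with each weight stored as a small integer. It is written in the spirit of 8-bit block formats such as Q8_0 but is not llama.cpp's actual implementation, and the sample weights are made up for illustration.

```python
def quantize_block(values: list[float]) -> tuple[float, list[int]]:
    """Symmetric 8-bit quantization: one scale per block, ints in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quants = [round(v / scale) for v in values]
    return scale, quants


def dequantize_block(scale: float, quants: list[int]) -> list[float]:
    """Recover approximate floating-point values from the stored integers."""
    return [q * scale for q in quants]


# Made-up example weights, purely for illustration.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, 0.0, -0.88, 0.45]

scale, quants = quantize_block(weights)
restored = dequantize_block(scale, quants)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("stored integers:", quants)
print(f"max round-trip error: {max_error:.5f}")
```

Each weight now needs 8 bits instead of 16, plus a small share of the block's scale. The round-trip error is the accuracy cost, and it grows as you drop to fewer bits per weight.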

Is the M2 better than the M1 for LLMs?

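The M2 offers some improvements over the M1, including higher memory bandwidth (100 GB/s versus 68.25 GB/s) and up to 10 GPU cores instead of up to 8, but its performance for LLMs is still limited by the same fundamental factors. Both chips face challenges with memory bandwidth and specialized AI hardware.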

Will Apple ever release an M-series chip specifically for AI?

It’s hard to say for sure, but given Apple's commitment to AI and their recent focus on machine learning, it's certainly a possibility! They might release a future chip with dedicated AI accelerators that are even more optimized for AI tasks.

Keywords

Apple M2, LLMs, Llama 2, AI, Quantization, Memory Bandwidth, GPU Cores, Performance, Limitations, Optimization, Token Speed, Generation, Processing, Deep Learning.