What Are the Limitations of Apple M1 Ultra for AI Tasks?

Introduction

The Apple M1 Ultra chip has been a game-changer for many things, from video editing to gaming. But what about its performance for AI tasks, specifically for running large language models (LLMs)? That's the question we aim to answer in this article. We'll dive into the capabilities of the M1 Ultra, examining its strengths and limitations when it comes to processing and generating text with popular LLMs like Llama 2. Think of it like a "speed test" for your favorite AI models, but this time, we're looking at the performance on a powerful Apple silicon chip.

Let's explore how the M1 Ultra handles LLM inference, and where the available benchmark data shows it falling short.

Apple M1 Ultra: A Quick Look

The Apple M1 Ultra chip is a beast. The configuration benchmarked here has 48 GPU cores (a 64-core variant also exists) and 800 GB/s of memory bandwidth, which gives it plenty of parallel processing power and makes it a strong contender for AI tasks. But is it strong enough to handle the demanding world of large language models? Buckle up, we're about to delve into the specifics.

Analyzing the Performance of M1 Ultra with LLMs

To give you a clear picture of the M1 Ultra's AI prowess, we'll be focusing on Llama 2, a popular open-source LLM. Think of Llama 2 as the brain behind many AI-powered applications, allowing them to understand and generate human-like text. We'll analyze how well the M1 Ultra handles the Llama 2 7B model at three precision levels: F16, Q8_0, and Q4_0.

Understanding the Metrics: Tokens Per Second

Before we dive into the numbers, let's understand what "tokens per second" means. A token is a small chunk of text, often a word or a piece of a word, and tokens per second measures how many of those chunks a device can read or write each second. Think of it this way: Imagine a machine that can read and write words at a super-fast pace. The more tokens it can handle per second, the faster it can process and generate text.
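To make the metric concrete, here is a minimal sketch of how a tokens-per-second figure is computed from a token count and a wall-clock time. The function name and the example numbers are hypothetical and are not taken from the benchmark.

```python
def tokens_per_second(token_count: int, elapsed_seconds: float) -> float:
    """Throughput = tokens handled / wall-clock seconds."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return token_count / elapsed_seconds


# Hypothetical run: 512 tokens handled in 8 seconds.
rate = tokens_per_second(512, 8.0)
print(f"{rate:.1f} tokens/second")
```

The same formula applies to both columns in the benchmark table; only what is being counted changes (prompt tokens read versus output tokens written).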

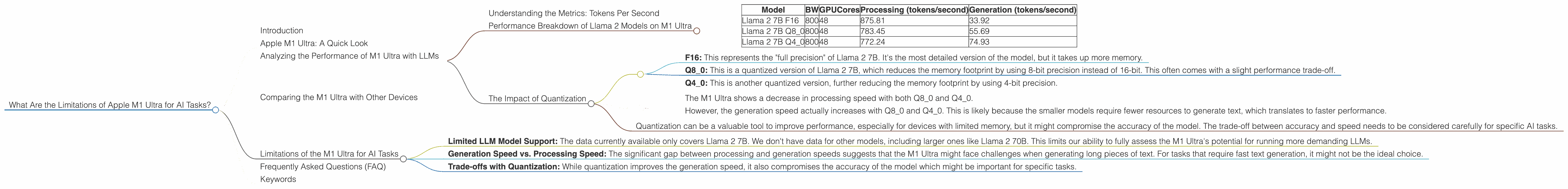

Performance Breakdown of Llama 2 Models on M1 Ultra

Note: We only have data for the Llama 2 7B (7 billion parameter) model. No data is available for other Llama 2 sizes, such as 13B or 70B.

| Model | Bandwidth (GB/s) | GPU cores | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|---|---|
| Llama 2 7B F16 | 800 | 48 | 875.81 | 33.92 |
| Llama 2 7B Q8_0 | 800 | 48 | 783.45 | 55.69 |
| Llama 2 7B Q4_0 | 800 | 48 | 772.24 | 74.93 |

What does this table tell us?

- The M1 Ultra performs remarkably well in processing text for the Llama 2 7B model, reaching between roughly 770 and 880 tokens per second depending on the precision level.

- When it comes to text generation, the M1 Ultra manages roughly 34 to 75 tokens per second, which is about 10 to 26 times slower than its processing speed. Some gap is expected, because a prompt can be processed many tokens at once while output is produced one token at a time, but it does mean that long outputs take far longer than long prompts.

Why is this important?

- The difference between processing and generation speed tells you where the waiting time goes. The M1 Ultra can read even a long prompt in a second or two, but the time to produce a response grows with every token it has to write, so output length dominates the total time for most requests.
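As a rough illustration of that gap, the sketch below turns the table's throughput numbers into time estimates for a single request. The prompt and output lengths are hypothetical, and the estimate assumes throughput stays constant regardless of context length, which real runs will not match exactly.

```python
# Measured throughput from the table above (tokens/second).
M1_ULTRA_LLAMA2_7B = {
    "F16": {"processing": 875.81, "generation": 33.92},
    "Q8_0": {"processing": 783.45, "generation": 55.69},
    "Q4_0": {"processing": 772.24, "generation": 74.93},
}


def estimate_seconds(variant: str, prompt_tokens: int, output_tokens: int):
    """Return (prompt_time, output_time) in seconds for one request."""
    rates = M1_ULTRA_LLAMA2_7B[variant]
    prompt_time = prompt_tokens / rates["processing"]
    output_time = output_tokens / rates["generation"]
    return prompt_time, output_time


# Hypothetical request: a 1000-token prompt and a 500-token answer.
for variant in M1_ULTRA_LLAMA2_7B:
    prompt_time, output_time = estimate_seconds(variant, 1000, 500)
    print(f"{variant}: prompt {prompt_time:.1f} s, output {output_time:.1f} s")
```

In every variant the output phase takes several times longer than the prompt phase, even though the output is half as many tokens.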

The Impact of Quantization

Quantization is a technique used to reduce the size of LLMs, making them faster and more efficient. Think of it as compressing a large file to make it smaller and faster to download. By quantizing models, you can make them “lighter” and run them on devices with less memory.

- F16: This is the 16-bit floating-point (half-precision) version of Llama 2 7B and serves as the unquantized baseline here. It's the most detailed version of the model in this comparison, but it takes up the most memory.

- Q8_0: This is a quantized version of Llama 2 7B, which roughly halves the memory footprint by using 8-bit precision instead of 16-bit. This often comes with a slight accuracy trade-off.

- Q4_0: This is another quantized version, further reducing the memory footprint by using 4-bit precision.
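To show what "using fewer bits" means in practice, here is a simplified sketch of block quantization: each block of weights is stored as small integers plus one shared scale factor. This is an illustration of the general idea only, not the exact storage format behind the Q8_0 and Q4_0 names, and the sample weights are made up.

```python
def quantize_block(values, bits=8):
    """Symmetric absmax quantization of one block of floats to signed ints."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8-bit, 7 for 4-bit
    absmax = max(abs(v) for v in values)
    scale = absmax / qmax if absmax > 0 else 1.0
    quants = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the integers and the shared scale."""
    return [scale * q for q in quants]


def max_error(values, bits):
    """Largest absolute difference after a quantize/dequantize round trip."""
    scale, quants = quantize_block(values, bits)
    restored = dequantize_block(scale, quants)
    return max(abs(a - b) for a, b in zip(values, restored))


# Made-up weights standing in for one block of a model layer.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.44, 0.02]
for bits in (8, 4):
    print(f"{bits}-bit: max reconstruction error = {max_error(weights, bits):.4f}")
```

The 4-bit round trip loses more detail than the 8-bit one, which is the accuracy cost that comes with the smaller memory footprint.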

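What does the data tell us?

- The M1 Ultra shows a decrease in processing speed of roughly 11 to 12 percent with both Q8_0 and Q4_0 compared with F16.

- However, the generation speed actually increases with Q8_0 and Q4_0, to about 1.6 and 2.2 times the F16 speed respectively. This is likely because generating each token requires reading the model's weights from memory, so a smaller model means less data to move per token, which translates to faster performance.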
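One way to sanity-check the memory explanation is a back-of-the-envelope ceiling: if every weight must be read once per generated token, generation cannot exceed memory bandwidth divided by model size. The sketch below assumes exactly 7 billion weights, the nominal 16, 8, and 4 bits per weight, and that the table's bandwidth figure is in GB/s; it ignores any extra data stored alongside the weights, so treat the results as rough upper bounds rather than predictions.

```python
BANDWIDTH_GB_S = 800  # bandwidth column from the table, assumed to be GB/s
PARAMS = 7e9          # assumption: exactly 7 billion weights
NOMINAL_BITS = {"F16": 16, "Q8_0": 8, "Q4_0": 4}
MEASURED_GEN = {"F16": 33.92, "Q8_0": 55.69, "Q4_0": 74.93}  # from the table


def model_size_gb(bits: int) -> float:
    """Approximate weight storage in GB at the given bits per weight."""
    return PARAMS * bits / 8 / 1e9


def generation_ceiling(bits: int) -> float:
    """Upper bound on tokens/second if each token reads all weights once."""
    return BANDWIDTH_GB_S / model_size_gb(bits)


for name, bits in NOMINAL_BITS.items():
    ceiling = generation_ceiling(bits)
    share = MEASURED_GEN[name] / ceiling
    print(f"{name}: ~{model_size_gb(bits):.1f} GB, ceiling {ceiling:.0f} tok/s, "
          f"measured {MEASURED_GEN[name]} tok/s ({share:.0%} of ceiling)")
```

Under these assumptions all three measured generation speeds sit below their ceilings, and the ceiling rises as the model shrinks, which is consistent with quantized variants generating faster.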

Why is this significant?

- Quantization can be a valuable tool to improve performance, especially for devices with limited memory, but it might compromise the accuracy of the model. The trade-off between accuracy and speed needs to be considered carefully for specific AI tasks.

Comparing the M1 Ultra with Other Devices

Note: We only have the data for the M1 Ultra. No other devices are available for comparison.

Limitations of the M1 Ultra for AI Tasks

Based on the available data, we can identify some limitations of the M1 Ultra for AI tasks:

Limited Model Coverage: The data currently available only covers Llama 2 7B. We don't have data for other models, including larger ones like Llama 2 70B. This is a gap in the data rather than a proven limit of the chip, but it means we cannot say how the M1 Ultra copes with more demanding LLMs.

Generation Speed vs. Processing Speed: Generation runs at roughly 34 to 75 tokens per second, against roughly 770 to 880 tokens per second for processing. For tasks that produce long pieces of text, output speed will be the bottleneck, and workloads that need very fast generation might be better served elsewhere.

Trade-offs with Quantization: While quantization improves the generation speed, it can also reduce the accuracy of the model, which might matter for specific tasks. The data here measures speed only, so the size of that accuracy cost is not shown.

Frequently Asked Questions (FAQ)

Q: What is the difference between processing and generation in LLMs?

A: Processing refers to the LLM reading and analyzing the input text (the prompt). This is like reading a book and making sense of its content. Generation is the LLM creating new text, one token at a time, based on that understanding. This is like writing a story or composing a poem.

Q: What are the limitations of LLMs?

A: LLMs are powerful tools, but they also have limitations. They can be biased, generate incorrect information, and struggle to understand nuances in human language. It is important to use LLMs responsibly and critically evaluate their output.

Q: What are some other devices that can run LLMs?

A: Several other devices are capable of running LLMs, including GPUs from NVIDIA and AMD, as well as Google's TPUs. Each device has its own strengths and weaknesses, and the optimal choice will depend on the specific application and budget.

Keywords

Apple M1 Ultra, AI, LLM, Llama 2, Performance, Tokens per second, Processing, Generation, Quantization, F16, Q8_0, Q4_0, Limitations, GPU, Bandwidth, GPU cores, Inference, Text generation, Text processing.