# What Are the Limitations of Apple M1 Pro for AI Tasks?

## Introduction: Unleashing the Power of Apple's M1 Pro for AI

The Apple M1 Pro chip is a powerful piece of hardware that has revolutionized the performance of Apple's laptops. It boasts impressive speed and efficiency, making it a popular choice for a wide range of tasks, including AI development. But while it excels in many areas, it has its limitations when it comes to handling complex AI tasks, specifically for large language models (LLMs).

This article delves into the performance of Apple's M1 Pro in the context of AI, focusing on its capabilities with LLMs like Llama 2. We will discuss the factors that influence the performance of LLMs on the M1 Pro, particularly the impact of quantization levels and the trade-off between processing and generation speed. Get ready to dive into the world of LLMs and discover how the Apple M1 Pro stacks up in this exciting domain!

## Apple M1 Pro Token Speed Generation: A Deep Dive

The M1 Pro is a powerful chip, but its performance for AI tasks, especially with LLMs, can be impacted by several factors. These factors include:

- Quantization Levels: Quantization reduces the size of a model by storing its parameters in lower-precision data types (for example, Q8_0 or Q4_0 instead of F16). A smaller model needs less memory and typically generates tokens faster, but accuracy can decrease.

- GPU Cores: The number of GPU cores on a device directly affects the speed at which it can process information, including AI model inference. The Apple M1 Pro comes with 14 or 16 GPU cores depending on the configuration.

- Model Size: The size of the model significantly affects how fast it runs. Larger models require more memory and computation and generally run slower.
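To make the link between quantization and model size concrete, here is a minimal sketch that estimates the weight footprint of a 7B-parameter model in each format. It assumes the labels Q8_0 and Q4_0 refer to the llama.cpp block formats (32 weights per block plus one 16-bit scale); the article does not say which tool produced its numbers, so treat that as an assumption. The parameter count of 7e9 is nominal, and the estimate covers the weights only.

```python
# Assumed llama.cpp-style block layout (not stated in the article):
# each quantized block stores 32 weights plus one 16-bit scale.
BLOCK_WEIGHTS = 32
SCALE_BITS = 16


def bits_per_weight(weight_bits: int, blocked: bool = True) -> float:
    """Effective bits per weight, including the per-block scale if blocked."""
    if not blocked:
        return float(weight_bits)
    return (BLOCK_WEIGHTS * weight_bits + SCALE_BITS) / BLOCK_WEIGHTS


FORMATS = {
    "F16": bits_per_weight(16, blocked=False),  # plain 16-bit floats
    "Q8_0": bits_per_weight(8),                 # 8-bit weights + scale
    "Q4_0": bits_per_weight(4),                 # 4-bit weights + scale
}


def weight_size_gb(n_params: float, fmt: str) -> float:
    """Approximate size of the weights alone, in GB (10^9 bytes)."""
    return n_params * FORMATS[fmt] / 8 / 1e9


if __name__ == "__main__":
    for fmt in FORMATS:
        print(f"{fmt}: {weight_size_gb(7e9, fmt):.2f} GB")
```

Under these assumptions the weights shrink from roughly 14 GB at F16 to about 7.4 GB at Q8_0 and about 3.9 GB at Q4_0. Real model files also carry metadata and may keep some tensors at higher precision, so actual sizes will differ somewhat.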

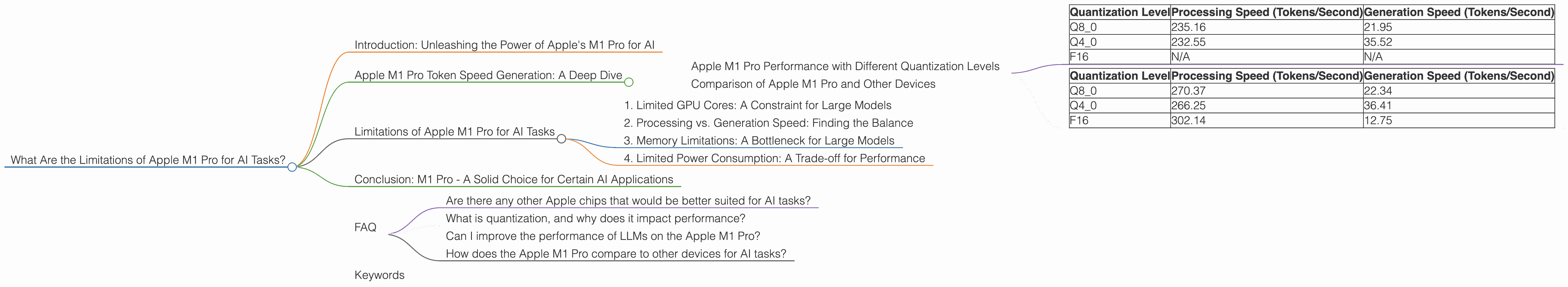

### Apple M1 Pro Performance with Different Quantization Levels

To understand the limitations of the Apple M1 Pro for AI tasks, let's look at the performance of the Llama 2 7B model with different quantization levels.

The Apple M1 Pro with 14 GPU Cores:

| Quantization Level | Processing Speed (Tokens/Second) | Generation Speed (Tokens/Second) |
|---|---|---|
| Q8_0 | 235.16 | 21.95 |
| Q4_0 | 232.55 | 35.52 |
| F16 | N/A | N/A |

The Apple M1 Pro with 16 GPU Cores:

| Quantization Level | Processing Speed (Tokens/Second) | Generation Speed (Tokens/Second) |
|---|---|---|
| Q8_0 | 270.37 | 22.34 |
| Q4_0 | 266.25 | 36.41 |
| F16 | 302.14 | 12.75 |

Key Takeaways:

- Quantization barely changes prompt processing speed. On the 16-core chip, F16 is actually the fastest at processing (302.14 tokens/second versus 270.37 for Q8_0 and 266.25 for Q4_0), so smaller data types do not automatically mean faster processing.

- Generation speed is where quantization pays off. Q4_0 generates about 1.6x faster than Q8_0 on both M1 Pro configurations, and both are well ahead of F16 (12.75 tokens/second on the 16-core chip). This is consistent with generation being limited by how much model data must be read for each new token.

- The M1 Pro with 16 GPU cores outperforms the 14 GPU core version at every measured quantization level, but the gain is uneven: processing is roughly 15% faster, while generation improves by only about 2%.
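The comparisons between configurations and quantization levels can be checked directly from the tables. The following sketch copies the published numbers and computes the ratios; the helper names are made up for illustration.

```python
# Tokens/second for Llama 2 7B, copied from the tables above.
# Each entry is (processing, generation); None marks the N/A row.
SPEEDS = {
    14: {"Q8_0": (235.16, 21.95), "Q4_0": (232.55, 35.52), "F16": None},
    16: {"Q8_0": (270.37, 22.34), "Q4_0": (266.25, 36.41), "F16": (302.14, 12.75)},
}


def core_scaling(fmt):
    """Ratio of 16-core to 14-core speed as (processing, generation)."""
    p14, g14 = SPEEDS[14][fmt]
    p16, g16 = SPEEDS[16][fmt]
    return p16 / p14, g16 / g14


def generation_gain(cores, fast_fmt, slow_fmt):
    """How many times faster fast_fmt generates than slow_fmt."""
    return SPEEDS[cores][fast_fmt][1] / SPEEDS[cores][slow_fmt][1]


if __name__ == "__main__":
    for fmt in ("Q8_0", "Q4_0"):
        proc, gen = core_scaling(fmt)
        print(f"{fmt}: processing x{proc:.2f}, generation x{gen:.2f} (16 vs 14 cores)")
    for cores in (14, 16):
        gain = generation_gain(cores, "Q4_0", "Q8_0")
        print(f"{cores} cores: Q4_0 generates x{gain:.2f} faster than Q8_0")
```

The two extra GPU cores raise processing speed by about 15% but generation speed by only about 2%, while moving from Q8_0 to Q4_0 raises generation speed by roughly 60% on either configuration.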

### Comparison of Apple M1 Pro and Other Devices

While the M1 Pro provides decent speeds with Llama 2 7B, it falls short compared to dedicated GPUs often used in AI development. For example, the NVIDIA GeForce RTX 3090, a powerful GPU designed for high-performance computing, can achieve significantly faster processing and generation speeds for LLMs, especially at lower quantization levels.

## Limitations of Apple M1 Pro for AI Tasks

While the Apple M1 Pro boasts impressive performance for many tasks, it faces limitations when it comes to handling complex AI models, especially for LLMs. Let’s look at some of the key limitations:

### 1. Limited GPU Cores: A Constraint for Large Models

The Apple M1 Pro, with its 14 or 16 GPU cores, performs well with smaller LLMs, like the 7B Llama 2 model. But its performance can be hampered when working with larger models (e.g., Llama 2 13B or 70B), because every token requires more data and more computation, and the same cores have to get through all of it.

Imagine you have a group of people trying to assemble a large jigsaw puzzle. If you have a small team, it takes longer to complete the puzzle. Similarly, with fewer GPU cores, the M1 Pro can struggle to process large LLMs efficiently.

### 2. Processing vs. Generation Speed: Finding the Balance

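As the data above shows, the format that processes prompts fastest is not the one that generates text fastest. On the 16-core M1 Pro, F16 has the highest processing speed (302.14 tokens/second) but the lowest generation speed (12.75 tokens/second), while Q4_0 processes slightly slower (266.25 tokens/second) and generates almost three times faster (36.41 tokens/second). This trade-off between processing and generation speed is a common issue when optimizing for LLMs.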

Think of it like this: You can quickly read through a book (processing), but it takes longer to write your own book (generation). The M1 Pro reads a prompt quickly in every format, but it generates text far more slowly, especially when using less compressed model representations such as F16.
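To see how the two speeds combine in practice, the sketch below estimates the total time for one request: time to process the prompt plus time to generate the reply. The speeds come from the 16-core table; the 512-token prompt and 128-token reply are a hypothetical workload chosen only for illustration, and the model ignores fixed overheads such as model loading.

```python
# (processing, generation) tokens/second for Llama 2 7B on the 16-core
# M1 Pro, copied from the table above.
SPEEDS_16_CORE = {
    "F16": (302.14, 12.75),
    "Q8_0": (270.37, 22.34),
    "Q4_0": (266.25, 36.41),
}


def response_time_s(fmt, prompt_tokens, new_tokens):
    """Seconds to process the prompt plus seconds to generate the reply."""
    processing_tps, generation_tps = SPEEDS_16_CORE[fmt]
    return prompt_tokens / processing_tps + new_tokens / generation_tps


if __name__ == "__main__":
    # Hypothetical workload: a 512-token prompt and a 128-token reply.
    for fmt in SPEEDS_16_CORE:
        total = response_time_s(fmt, prompt_tokens=512, new_tokens=128)
        print(f"{fmt}: {total:.1f} s")
```

For this workload, generation accounts for most of the total time in every format, so Q4_0 finishes first and F16 last even though F16 processes the prompt fastest. A workload with very long prompts and very short replies would narrow that gap.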

### 3. Memory Limitations: A Bottleneck for Large Models

The Apple M1 Pro features a significant amount of memory (up to 32GB), which can handle medium-sized LLMs relatively well. However, as AI models continue to grow in size, memory becomes a limiting factor. Larger models demand more memory resources, and the M1 Pro, while powerful, might struggle to accommodate these demands.
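A rough fit check shows where the 32GB ceiling starts to bite. This is a sketch under stated assumptions: the bits-per-weight values assume llama.cpp-style Q8_0 and Q4_0 formats, the parameter counts are nominal, and `usable_fraction` is a hypothetical allowance for the operating system, other applications, and the model's working memory rather than a measured figure.

```python
# Assumed effective bits per weight (llama.cpp-style block formats).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Nominal parameter counts for the Llama 2 family.
MODEL_PARAMS = {"7B": 7e9, "13B": 13e9, "70B": 70e9}


def weights_gb(n_params, fmt):
    """Approximate size of the weights alone, in GB (10^9 bytes)."""
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


def fits(n_params, fmt, memory_gb=32.0, usable_fraction=0.75):
    """True if the weights fit in the assumed usable share of memory."""
    return weights_gb(n_params, fmt) <= memory_gb * usable_fraction


if __name__ == "__main__":
    for name, n_params in MODEL_PARAMS.items():
        for fmt in BITS_PER_WEIGHT:
            size = weights_gb(n_params, fmt)
            verdict = "fits" if fits(n_params, fmt) else "does not fit"
            print(f"Llama 2 {name} {fmt}: {size:.1f} GB, {verdict}")
```

Under these assumptions, the 7B model fits in every format, the 13B model fits only when quantized, and no 70B variant fits: even at Q4_0 its weights alone come to about 39 GB.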

### 4. Limited Power Budget: A Trade-off for Performance

The M1 Pro is known for its energy efficiency, which is a boon for battery life. But that efficiency goes hand in hand with a limited power budget: as a laptop chip, it cannot draw the power that a desktop GPU can. This means it might not keep up on computationally intensive tasks like larger LLMs or extensive AI model training, where available power is a major driver of performance.

## Conclusion: M1 Pro - A Solid Choice for Certain AI Applications

The Apple M1 Pro offers a compelling balance of performance and efficiency, making it a great choice for various AI applications. It is a powerful chip capable of handling smaller LLMs and tasks like image recognition or natural language processing with reasonable speed and efficiency.

However, for tackling more demanding AI tasks like working with the latest, massive LLMs or conducting extensive AI training, the M1 Pro might struggle. In these cases, a dedicated GPU or a cloud-based solution might be a better fit.

## FAQ

### Are there any other Apple chips that would be better suited for AI tasks?

Yes, the Apple M1 Max and M1 Ultra chips offer more GPU cores and memory, making them better suited for handling larger LLMs and more demanding AI tasks.

### What is quantization, and why does it impact performance?

Quantization is a technique used to reduce the size of AI models by representing their parameters with lower-precision data types. This reduces memory use and, in the results above, speeds up token generation, but it can potentially affect the accuracy of the model.

### Can I improve the performance of LLMs on the Apple M1 Pro?

There are ways to improve LLM performance on the M1 Pro, including choosing the quantization level carefully (Q4_0 gave the fastest generation speeds in the results above) and utilizing techniques like model parallelization. However, the M1 Pro might still struggle with very large models.

### How does the Apple M1 Pro compare to other devices for AI tasks?

The Apple M1 Pro is a capable chip for handling smaller LLMs and general AI tasks. However, dedicated GPUs like the NVIDIA GeForce RTX 3090 significantly outperform the M1 Pro in handling larger LLMs and more complex AI workloads.

## Keywords

Apple M1 Pro, AI, LLM, Llama 2, quantization, GPU cores, processing speed, generation speed, limitations, performance, AI tasks, memory, power consumption, cloud-based, GPU, RTX 3090, M1 Max, M1 Ultra.