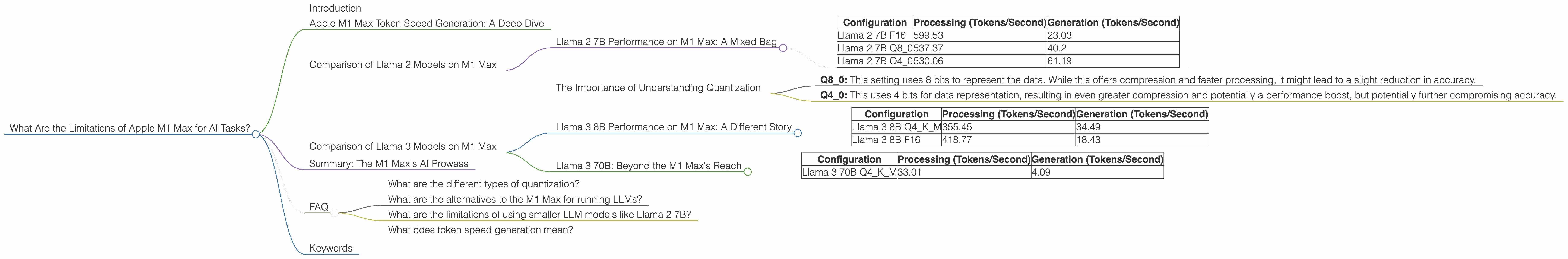

What Are the Limitations of Apple M1 Max for AI Tasks?

Introduction

The Apple M1 Max chip, with its impressive performance and power efficiency, has been a game-changer for many tasks, especially creative work such as video editing. But can it handle the demanding world of AI, specifically large language models (LLMs)? This article explores the limitations of the M1 Max when it comes to running AI tasks, focusing on its capabilities with popular LLMs like Llama 2 and Llama 3.

Imagine a world where your computer could understand and respond to your requests in a way that feels eerily human. LLMs are making this a reality, and their performance depends heavily on the hardware they run on. The M1 Max, while a powerhouse, has its own quirks and limitations when it comes to AI tasks.

Apple M1 Max Token Generation Speed: A Deep Dive

To illustrate the limitations of the M1 Max, consider a key metric: token speed, measured in tokens per second. Tokens are the building blocks of text, often a whole word or part of a word. Benchmarks report two rates: processing speed, how quickly the model reads the prompt, and generation speed, how quickly it produces new tokens for the response. The higher both rates are, the faster the LLM responds to your requests.

We'll look at the M1 Max's performance with different LLMs and configurations, using data from real-world benchmarks. We'll also analyze quantization, the process of compressing a model's weights to lower precision for better performance, and its impact on the M1 Max's capabilities.

Comparison of Llama 2 Models on M1 Max

For the sake of clarity, we'll focus on one specific M1 Max configuration:

- GPU Cores: 32

- Memory bandwidth (BW): 400 GB/s

Llama 2 7B Performance on M1 Max: A Mixed Bag

Let's start with Llama 2, a popular open-source LLM. The table below shows processing and generation speeds for different Llama 2 7B configurations on the M1 Max:

| Configuration | Processing (Tokens/Second) | Generation (Tokens/Second) |
|---|---|---|
| Llama 2 7B F16 | 599.53 | 23.03 |
| Llama 2 7B Q8_0 | 537.37 | 40.2 |
| Llama 2 7B Q4_0 | 530.06 | 61.19 |

Key takeaways:

- F16: F16 stores the weights as unquantized 16-bit (half-precision) floats. It has the fastest processing of the three configurations (about 600 tokens/s) but the slowest generation (about 23 tokens/s). In practice, the model reads your prompt quickly but writes its response slowly.

- Q8_0: Quantizing to 8 bits lowers processing speed by about 10% compared to F16 but raises generation speed by about 75%.

- Q4_0: Moving to 4-bit Q4_0 quantization raises generation speed further, to about 2.7 times the F16 rate, while processing speed drops only slightly more.
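To put numbers on these trade-offs, the short Python sketch below divides each configuration's speed by the F16 speed for the same metric. The figures are copied from the Llama 2 7B table; the helper name `relative_to` is only illustrative.

```python
# Llama 2 7B on M1 Max (32 GPU cores), tokens/second, copied from the table above.
results = {
    "F16": {"processing": 599.53, "generation": 23.03},
    "Q8_0": {"processing": 537.37, "generation": 40.2},
    "Q4_0": {"processing": 530.06, "generation": 61.19},
}


def relative_to(baseline: str, metric: str) -> dict[str, float]:
    """Ratio of each configuration's speed to the baseline's speed for one metric."""
    base = results[baseline][metric]
    return {name: round(r[metric] / base, 2) for name, r in results.items()}


print(relative_to("F16", "processing"))  # {'F16': 1.0, 'Q8_0': 0.9, 'Q4_0': 0.88}
print(relative_to("F16", "generation"))  # {'F16': 1.0, 'Q8_0': 1.75, 'Q4_0': 2.66}
```

Relative to F16, Q8_0 and Q4_0 keep roughly 90% and 88% of the processing speed while generating about 1.75 and 2.66 times faster.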

The Importance of Understanding Quantization

Think of quantization like compressing an image: you trade some quality (precision) for a smaller file. The same idea applies to LLMs. Quantization stores the model's weights with fewer bits, which reduces memory usage and, because less data has to be read from memory for every generated token, usually speeds up generation.

- Q8_0: Stores the weights as 8-bit integers, plus a shared scale for each small block of weights. This roughly halves the size of an F16 model, usually with only a slight loss of accuracy.

- Q4_0: Uses 4-bit integers, shrinking the model to roughly a quarter of its F16 size. The extra compression speeds up generation further but may cost more accuracy.
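To make the idea behind Q8_0 and Q4_0 more concrete, here is a simplified sketch of block-wise 4-bit quantization in Python. It is not llama.cpp's exact code. It assumes symmetric rounding with one scale per block of 32 weights (llama.cpp's Q4_0 and Q8_0 formats also group weights in blocks of 32 with one 16-bit scale), and the function names are hypothetical.

```python
import math

BLOCK_SIZE = 32  # weights per block (assumption mirroring llama.cpp's Q4_0/Q8_0 block size)


def quantize_block_4bit(block: list[float]) -> tuple[float, list[int]]:
    """Simplified symmetric 4-bit quantization: one float scale plus integer codes in [-8, 7]."""
    amax = max(abs(x) for x in block)
    scale = amax / 7 if amax > 0 else 1.0  # map the largest magnitude onto code +/-7
    codes = [max(-8, min(7, round(x / scale))) for x in block]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Approximate reconstruction of the original weights from the codes."""
    return [q * scale for q in codes]


def bits_per_weight(code_bits: int, block_size: int = BLOCK_SIZE, scale_bits: int = 16) -> float:
    """Average storage per weight when every block also stores one 16-bit scale."""
    return code_bits + scale_bits / block_size


weights = [math.sin(i) for i in range(BLOCK_SIZE)]  # stand-in for real model weights
scale, codes = quantize_block_4bit(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(f"scale={scale:.4f}, max rounding error={max_error:.4f}")
print(bits_per_weight(4), bits_per_weight(8))  # 4.5 8.5
```

The rounding error per weight is at most half a scale step, which is where the accuracy loss comes from. The per-block scale is also why a "4-bit" block format costs about 4.5 bits per weight in practice, and an 8-bit one about 8.5.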

Overall, the M1 Max handles Llama 2 7B fairly well. Generation reaches about 61 tokens/s with Q4_0 but only about 23 tokens/s at F16, which is likely well below what high-end dedicated AI hardware achieves. Remember that these are tokens, not words or sentences: a token is often only part of a word, so a long answer can still take noticeably long to appear, especially at F16.
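To see what these rates mean for a single request, a rough latency model adds the time to read the prompt to the time to generate the reply. This is a simplified Python sketch: it assumes both rates stay constant, ignores model loading, and the 1,000-token prompt and 500-token answer are hypothetical sizes.

```python
# Llama 2 7B on M1 Max: (processing, generation) in tokens/second, from the table above.
LLAMA2_7B = {
    "F16": (599.53, 23.03),
    "Q8_0": (537.37, 40.2),
    "Q4_0": (530.06, 61.19),
}


def response_time(prompt_tokens: int, output_tokens: int,
                  processing_tps: float, generation_tps: float) -> float:
    """Rough latency in seconds: read the prompt, then generate the reply token by token.

    Ignores model loading, sampling overhead, and any slowdown as the context grows.
    """
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Hypothetical workload: a 1,000-token prompt and a 500-token answer.
for name, (pp, tg) in LLAMA2_7B.items():
    seconds = response_time(1000, 500, pp, tg)
    print(f"{name}: {seconds:.1f} s")
```

Under these assumptions the F16 run takes a little over 23 seconds and Q4_0 about 10, and in every configuration generation, not prompt processing, accounts for most of the wait.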

Comparison of Llama 3 Models on M1 Max

Now let's move on to Llama 3, the newest generation of this open-source LLM. Llama 3 is known for its improved performance and ability to generate even more coherent and informative text.

Llama 3 8B Performance on M1 Max: A Different Story

The M1 Max can handle Llama 3 8B in both F16 and Q4_K_M quantized configurations.

| Configuration | Processing (Tokens/Second) | Generation (Tokens/Second) |
|---|---|---|
| Llama 3 8B Q4_K_M | 355.45 | 34.49 |
| Llama 3 8B F16 | 418.77 | 18.43 |

Key takeaways:

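- Q4_K_M: Q4_K_M is one of llama.cpp's "k-quant" formats, which store most weights at 4 bits with per-block scales and keep some tensors at higher precision. It is a popular choice for quantized models in general, not something specific to Llama 3. On the M1 Max it delivers about 355 tokens/s of processing and 34 tokens/s of generation: usable, but likely behind dedicated AI hardware.

- F16: The F16 configuration processes prompts faster than Q4_K_M (about 419 vs. 355 tokens/s) but generates at roughly half the speed (about 18 vs. 34 tokens/s), the same pattern seen with Llama 2. Generation is largely limited by memory bandwidth, because each new token requires reading essentially all of the model's weights, and F16 weights are more than three times larger than Q4_K_M weights. Prompt processing handles many tokens per pass over the weights, so it is limited more by compute, where F16 avoids the extra work of dequantizing weights.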
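A back-of-the-envelope estimate shows why the F16 model generates more slowly. If every generated token requires reading essentially all of the weights from memory, generation speed cannot exceed memory bandwidth divided by model size. The Python sketch below assumes about 8.0 billion parameters for Llama 3 8B and an average of roughly 4.85 bits per weight for Q4_K_M; both are approximations, and the estimate ignores the KV cache and compute time.

```python
# Rough upper bound on generation speed for a memory-bandwidth-bound workload:
# each new token is assumed to stream (roughly) all weights from memory once.
BANDWIDTH_GB_S = 400.0  # M1 Max advertised memory bandwidth


def weight_size_gb(params_billion: float, bits_per_weight: float) -> float:
    """Size of the weights in GB (1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def generation_ceiling(params_billion: float, bits_per_weight: float,
                       bandwidth_gb_s: float = BANDWIDTH_GB_S) -> float:
    """Tokens/second if reading the weights were the only cost."""
    return bandwidth_gb_s / weight_size_gb(params_billion, bits_per_weight)


# Assumptions: ~8.0B parameters for Llama 3 8B; Q4_K_M averages ~4.85 bits per weight.
for name, bpw, measured in [("F16", 16, 18.43), ("Q4_K_M", 4.85, 34.49)]:
    ceiling = generation_ceiling(8.0, bpw)
    size = weight_size_gb(8.0, bpw)
    print(f"{name}: ~{size:.1f} GB, ceiling ~{ceiling:.0f} tok/s, measured {measured} tok/s")
```

Under these assumptions F16 generation is capped near 25 tokens/s and Q4_K_M near 80. Both measured rates sit below their ceilings. The much lower F16 ceiling is the main reason F16 generation trails Q4_K_M, although Q4_K_M stays well short of its ceiling, so bandwidth is not the only cost.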

Llama 3 70B: Beyond the M1 Max's Reach

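The M1 Max's limitations become even clearer with the much larger Llama 3 70B model. Only the Q4_K_M configuration was benchmarked (F16 weights for a 70B model need about 140 GB, far beyond the M1 Max's maximum of 64 GB of unified memory), and the results paint a less-than-optimistic picture.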

| Configuration | Processing (Tokens/Second) | Generation (Tokens/Second) |
|---|---|---|
| Llama 3 70B Q4_K_M | 33.01 | 4.09 |

Key takeaways:

- The M1 Max struggles: Even with Q4_K_M quantization, Llama 3 70B processes prompts at about 33 tokens/s and generates about 4 tokens/s, roughly 11 and 8 times slower than Llama 3 8B Q4_K_M.

- Limited Capabilities: At about 4 tokens/s, a 500-token answer takes roughly two minutes to generate, which makes interactive use frustrating. The Q4_K_M weights alone also take up more than 40 GB, so the model fits only on 64 GB M1 Max machines; on 32 GB configurations it cannot be loaded fully into memory.
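A quick memory check shows why the 70B model is only practical in quantized form on this chip. The Python sketch assumes about 70.6 billion parameters for Llama 3 70B, roughly 4.85 bits per weight for Q4_K_M, and a hypothetical 8 GB of headroom for the operating system, KV cache, and other apps; M1 Max machines were sold with 32 GB or 64 GB of unified memory. The function names are illustrative.

```python
def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits(params_billion: float, bits_per_weight: float, memory_gb: float,
         headroom_gb: float = 8.0) -> bool:
    """Rough check that the weights plus a headroom allowance fit in unified memory.

    headroom_gb is a hypothetical allowance (OS, KV cache, apps), not a measured figure.
    """
    return weights_gb(params_billion, bits_per_weight) + headroom_gb <= memory_gb


# Assumptions: ~70.6B parameters for Llama 3 70B; ~4.85 bits/weight for Q4_K_M.
for label, bpw in [("Q4_K_M", 4.85), ("F16", 16)]:
    for memory_gb in (32, 64):
        size = weights_gb(70.6, bpw)
        print(f"{label} (~{size:.0f} GB) in {memory_gb} GB: {fits(70.6, bpw, memory_gb)}")
```

Under these assumptions the Q4_K_M weights (about 43 GB) fit only in the 64 GB configuration, and F16 (about 141 GB) fits in neither. Dividing the 400 GB/s bandwidth by roughly 43 GB also caps generation near 9 tokens/s, so the measured 4.09 tokens/s is in the same range.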

Summary: The M1 Max's AI Prowess

The M1 Max is a powerful chip with its own strengths and weaknesses when it comes to AI tasks. While it can handle smaller models like Llama 2 7B with decent performance, its capabilities are limited with larger models, especially Llama 3 70B.

The M1 Max may not be the ideal choice for running these larger LLMs, especially if you require fast responses and smooth performance. It's worth noting that the M1 Max is a general-purpose chip, not specifically designed for AI workloads like dedicated AI hardware.

FAQ

What are the different types of quantization?

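Quantization is a technique for compressing model weights to reduce memory footprint and often improve inference speed. The formats in this article come from llama.cpp: Q8_0 and Q4_0 store each block of 32 weights as 8-bit or 4-bit integers plus one shared scale, while Q4_K_M is a "k-quant" that keeps most weights at 4 bits and some tensors at higher precision. None of these formats is specific to Llama 3; the same ones are applied to Llama 2 and many other models.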

What are the alternatives to the M1 Max for running LLMs?

For AI tasks, high-end GPUs from NVIDIA or AMD, or AI accelerators like Google TPUs, are generally better suited to running large LLMs. These chips typically offer more memory bandwidth and compute throughput than general-purpose chips like the M1 Max, which can translate into significant performance gains.

What are the limitations of using smaller LLM models like Llama 2 7B?

Smaller models, while faster and more efficient, might have limitations in terms of accuracy, knowledge base, and overall capabilities compared to large LLMs like Llama 3 70B.

What does token generation speed mean?

Token generation speed is the rate, in tokens per second, at which a chip can produce new language tokens, the building blocks of text. Benchmarks often report it alongside processing speed, the rate at which the prompt is read. The higher these numbers, the faster the LLM can process your request and generate its response.

Keywords

Apple M1 Max, AI, LLM, Llama 2, Llama 3, Performance, Limitations, Quantization, Token Generation Speed, GPU Cores, Memory Bandwidth, Inference, Processing, Generation, F16, Q8_0, Q4_0, Q4_K_M, Dedicated AI Hardware.