What Are the Limitations of Apple M1 for AI Tasks?

Introduction

The Apple M1 is a capable general-purpose chip that can also run artificial intelligence (AI) workloads. While the M1 handles tasks like video editing and gaming well, its capacity for large language model (LLM) inference falls short of dedicated AI accelerators. This article looks at the limitations of the Apple M1 for AI tasks, focusing on its measured performance with several LLMs.

Performance of Apple M1 with Different LLM Models

The M1 chip features an integrated GPU that can accelerate certain AI tasks. However, because it has less memory and less compute than dedicated AI accelerators such as NVIDIA GPUs, the M1 struggles with larger and more complex models.

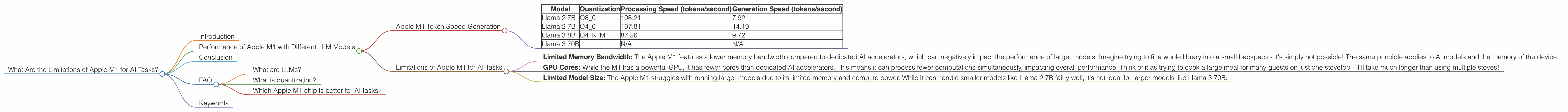

Apple M1 Token Speed Generation

To illustrate this, we'll analyze the token generation speeds of different LLMs on the Apple M1, broken down by quantization level and model size. For clarity, quantization is a technique that reduces the size of a model by storing its weights (the numbers that make up the model) at lower precision.

Lower-precision formats such as Q8 (8-bit) and Q4 (4-bit) generally give faster inference, at the cost of slightly reduced accuracy. The fewer bits per weight, the smaller and faster the model, and the larger the accuracy loss.
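To make the idea concrete, here is a minimal sketch of block-wise quantization in plain Python. It is a simplified illustration, not the exact storage format used by any particular runtime: the function names, the sample weights, and the choice of one shared scale per block are assumptions made for this example.

```python
# Simplified block-wise quantization sketch (illustrative only).
# Each block of weights shares one floating-point scale; the weights
# themselves are stored as small signed integers.

def quantize_block(weights, bits=8):
    """Map a block of floats to signed integers plus one shared scale."""
    qmax = 2 ** (bits - 1) - 1            # 127 for 8-bit, 7 for 4-bit
    amax = max(abs(w) for w in weights)   # largest magnitude in the block
    scale = amax / qmax if amax > 0 else 1.0
    quants = [round(w / scale) for w in weights]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in quants]


def max_error(weights, bits):
    """Largest absolute difference after a quantize/dequantize round trip."""
    scale, quants = quantize_block(weights, bits)
    restored = dequantize_block(scale, quants)
    return max(abs(a - b) for a, b in zip(weights, restored))


# Hypothetical sample weights, chosen only for demonstration.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]

for bits in (8, 4):
    print(f"{bits}-bit: max round-trip error {max_error(weights, bits):.4f}")
```

Running the sketch shows the trade-off described above: the 4-bit version stores each weight in half the space of the 8-bit version, but its round-trip error is noticeably larger.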

The following table lists the processing and token generation speeds for different LLMs on the Apple M1:

| Model | Quantization | Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|---|
| Llama 2 7B | Q8_0 | 108.21 | 7.92 |
| Llama 2 7B | Q4_0 | 107.81 | 14.19 |
| Llama 3 8B | Q4_K_M | 87.26 | 9.72 |
| Llama 3 70B | N/A | N/A | N/A |

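Note: No results are available for Llama 3 70B, which reflects how poorly the Apple M1 copes with models of that size. At roughly 4 bits per weight, 70 billion parameters need about 35 GB for the weights alone, more than the 16 GB maximum of the base M1.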
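To see what these speeds mean in practice, the short sketch below turns the table's figures into an estimated response time. The prompt and output lengths are hypothetical values picked for illustration, and the estimate assumes the two phases simply add up, which ignores model loading and other overhead.

```python
# Tokens-per-second figures copied from the table above:
# (processing speed, generation speed) on the Apple M1.
results = {
    "Llama 2 7B Q8_0": (108.21, 7.92),
    "Llama 2 7B Q4_0": (107.81, 14.19),
    "Llama 3 8B": (87.26, 9.72),
}


def response_time(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Seconds to read the prompt plus seconds to generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


PROMPT_TOKENS = 512   # hypothetical prompt length
OUTPUT_TOKENS = 256   # hypothetical reply length

for name, (processing_tps, generation_tps) in results.items():
    seconds = response_time(PROMPT_TOKENS, OUTPUT_TOKENS,
                            processing_tps, generation_tps)
    print(f"{name}: about {seconds:.1f} s")
```

Under these assumptions, most of the waiting time comes from generation rather than from processing the prompt, which is why the generation column matters most for interactive use.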

Comparison of Apple M1 and Other Devices

A comparison with dedicated AI devices would give a broader perspective. However, the data available here covers only the Apple M1, so no cross-device comparison is included.

Limitations of Apple M1 for AI Tasks

The data reveals some key limitations of the Apple M1 for AI tasks:

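- Limited Memory Bandwidth: The Apple M1 has lower memory bandwidth than dedicated AI accelerators, which hurts larger models in particular. Generating each token requires reading essentially all of the model's weights from memory, so generation speed is capped by how fast those weights can be streamed. This is why the 4-bit Llama 2 7B generates tokens almost twice as fast as the 8-bit version in the table (14.19 vs. 7.92 tokens/second). Imagine emptying a large water tank through a narrow hose: however strong the pump, the hose sets the pace.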

- GPU Cores: While the M1 has a powerful GPU, it has fewer cores than dedicated AI accelerators. This means it can process fewer computations simultaneously, impacting overall performance. Think of it as trying to cook a large meal for many guests on just one stovetop - it'll take much longer than using multiple stoves!

- Limited Model Size: The Apple M1 struggles with running larger models due to its limited memory and compute power. While it can handle smaller models like Llama 2 7B fairly well, it's not ideal for larger models like Llama 3 70B.
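The memory and bandwidth limits above can be checked with some back-of-the-envelope arithmetic. The sketch below is a rough estimate under stated assumptions, not a benchmark: the 68.25 GB/s bandwidth is the commonly cited figure for the base M1, 16 GB is its largest memory configuration, the bits-per-weight values (8.5 and 4.5) approximate 8-bit and 4-bit block formats including their per-block scales, and parameter counts are nominal. It also ignores the extra memory needed for the context cache and activations.

```python
GB = 1e9

# Assumptions, not figures from the table:
M1_BANDWIDTH = 68.25 * GB   # commonly cited base M1 memory bandwidth (bytes/s)
M1_MAX_MEMORY = 16 * GB     # largest base M1 memory configuration (bytes)


def weight_bytes(params, bits_per_weight):
    """Approximate size of the model weights in bytes."""
    return params * bits_per_weight / 8


def generation_ceiling(bandwidth_bytes_per_s, model_bytes):
    """Upper bound on tokens/second if every token reads all weights once."""
    return bandwidth_bytes_per_s / model_bytes


# (label, nominal parameter count, approximate bits per weight)
models = [
    ("Llama 2 7B, 8-bit", 7e9, 8.5),
    ("Llama 2 7B, 4-bit", 7e9, 4.5),
    ("Llama 3 70B, 4-bit", 70e9, 4.5),
]

for name, params, bpw in models:
    size = weight_bytes(params, bpw)
    fits = "fits" if size < M1_MAX_MEMORY else "does not fit"
    ceiling = generation_ceiling(M1_BANDWIDTH, size)
    print(f"{name}: {size / GB:.1f} GB of weights ({fits} in 16 GB), "
          f"ceiling about {ceiling:.1f} tokens/s")
```

Under these assumptions, the measured generation speeds for Llama 2 7B sit below the estimated bandwidth ceiling, and a 4-bit 70B model needs more than twice the memory the base M1 can offer.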

Conclusion

While the Apple M1 chip is a powerful processor for many tasks, including AI, its capabilities for LLM inference are limited by its memory bandwidth, GPU cores, and overall compute power. This means the M1 may not be the best choice for running large and complex AI models, especially when compared to dedicated AI accelerators.

However, the Apple M1 can still be used for experimenting with and running smaller LLM models, especially when utilizing quantization techniques to reduce model size and increase speed.

FAQ

What are LLMs?

LLMs (Large Language Models) are AI models trained on massive datasets of text and code. They can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. Think of them as the digital equivalent of having a very knowledgeable friend with a vast library at their disposal.

What is quantization?

Quantization is a technique used to reduce the size of a model without losing too much accuracy. Imagine you're trying to describe the color of a flower. You could use a very precise description with many shades and details, or you could use a simpler description with just a few words like "red," "yellow," or "blue." Quantization does something similar with the numbers in a model, simplifying them and making them smaller.

Which Apple M1 chip is better for AI tasks?

The Apple M1 Pro and M1 Max chips offer more memory bandwidth and more GPU cores than the base M1, which can improve performance with certain AI models. However, they are still not ideal for running massive models like Llama 3 70B.

Keywords

LLM, Apple M1, AI, Token Speed, Llama 2, Llama 3, Quantization, GPU, Memory Bandwidth, AI Tasks, Inference, Performance, Limitations