Should I Use Llama3 8B or Llama3 70B on NVIDIA RTX 5000 Ada 32GB? Benchmark Analysis

Introduction

The world of large language models (LLMs) is advancing quickly, and running them locally on your own device is becoming a practical option. Two popular choices are the Llama3 8B and Llama3 70B models, both known for their impressive capabilities. But with so many options available, how do you decide which model is right for you, especially given the limits of your hardware?

This article examines the performance of Llama3 8B and Llama3 70B on the NVIDIA RTX 5000 Ada (32GB), using benchmark data to help you make an informed decision. We compare their strengths and weaknesses, look at how they suit different use cases, and offer practical recommendations based on the available data.

NVIDIA RTX 5000 Ada (32GB): A Powerhouse for Local LLMs

The NVIDIA RTX 5000 Ada Generation is a high-performance workstation graphics card built on the Ada Lovelace architecture, designed for demanding workloads including machine learning and AI. Its 32GB of GDDR6 memory largely determines which models can run entirely on the GPU, and it lets you experiment with capable models locally without relying on cloud-based services.

Performance Analysis: Token Generation and Processing

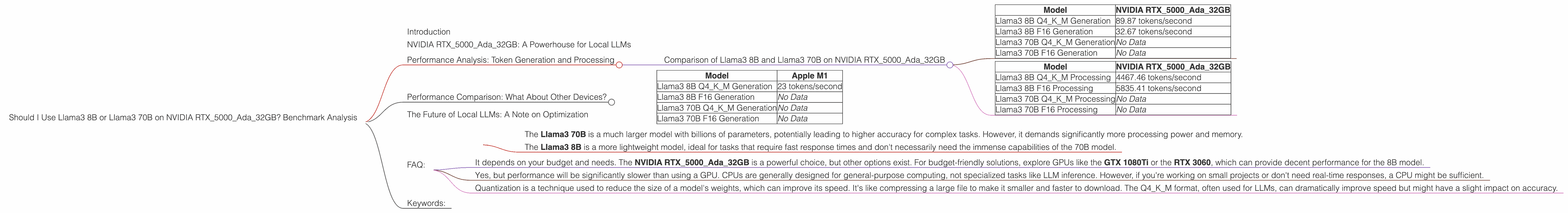

Comparison of Llama3 8B and Llama3 70B on the NVIDIA RTX 5000 Ada (32GB)

Let's break down the performance of Llama3 8B and Llama3 70B on the RTX 5000 Ada, focusing on token generation and token processing speeds.

Token Generation:

| Model and format | RTX 5000 Ada 32GB (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M | 89.87 |
| Llama3 8B F16 | 32.67 |
| Llama3 70B Q4_K_M | No data |
| Llama3 70B F16 | No data |

Token Processing:

| Model and format | RTX 5000 Ada 32GB (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M | 4467.46 |
| Llama3 8B F16 | 5835.41 |
| Llama3 70B Q4_K_M | No data |
| Llama3 70B F16 | No data |

Key Takeaways:

Because the benchmark contains no Llama3 70B results for the RTX 5000 Ada, this data cannot show how the two models compare directly on this card. What it does show is that Llama3 8B runs quickly here in both formats, generating roughly 33 to 90 tokens/second and processing prompts at about 4,500 to 5,800 tokens/second.

Quantization plays a crucial role in performance. Quantized to Q4_K_M (roughly 4 to 5 bits per weight on average), Llama3 8B generates tokens about 2.75 times faster than in F16 (16 bits per weight): 89.87 vs. 32.67 tokens/second. The picture reverses for prompt processing, where F16 is faster (5835.41 vs. 4467.46 tokens/second).
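The two ratios can be computed directly from the tables. A common explanation, consistent with these numbers but not proven by them, is that generation is limited mainly by memory bandwidth (every weight is read once per generated token, so smaller weights help), while prompt processing handles many tokens at once and is limited more by compute, where F16 avoids the cost of dequantizing weights. The dictionary and helper names below are illustrative, not part of any benchmarking tool.

```python
# Llama3 8B throughput on the RTX 5000 Ada (32GB), in tokens/second,
# copied from the token generation and token processing tables.
BENCH = {
    "Q4_K_M": {"generation": 89.87, "processing": 4467.46},
    "F16": {"generation": 32.67, "processing": 5835.41},
}

def speedup(metric: str, fast: str, slow: str) -> float:
    """Return how many times faster format `fast` is than format `slow` for one metric."""
    return BENCH[fast][metric] / BENCH[slow][metric]

gen_ratio = speedup("generation", "Q4_K_M", "F16")   # Q4_K_M wins on generation
proc_ratio = speedup("processing", "F16", "Q4_K_M")  # F16 wins on prompt processing
print(f"Q4_K_M generation is {gen_ratio:.2f}x faster than F16")    # 2.75x
print(f"F16 prompt processing is {proc_ratio:.2f}x faster than Q4_K_M")  # 1.31x
```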

The 8B model's speed is impressive, but the 70B model offers greater potential for complex tasks. The missing 70B results most likely reflect memory rather than a lack of optimization: even at Q4_K_M the 70B weights take more than 40GB, and at F16 about 140GB, both beyond the card's 32GB. Running it on this card would mean offloading part of the model to much slower system RAM or splitting it across multiple GPUs.
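A back-of-the-envelope check makes the memory limit concrete: the weights need roughly parameters × bits per weight ÷ 8 bytes. This sketch assumes about 8.03 billion and 70.6 billion parameters for the two models and an average of about 4.85 bits per weight for Q4_K_M; these are approximations, not figures from the benchmark, and the names are illustrative.

```python
# Rough weight-memory estimate: parameters x bits per weight / 8 bytes.
# Assumptions (not from the benchmark): ~8.03B and ~70.6B parameters,
# ~4.85 average bits per weight for Q4_K_M, 16 bits for F16.
# KV cache and runtime buffers are ignored, so real usage is higher.
VRAM_GB = 32

PARAMS = {"Llama3 8B": 8.03e9, "Llama3 70B": 70.6e9}
BITS_PER_WEIGHT = {"Q4_K_M": 4.85, "F16": 16.0}

def weight_gb(params: float, bits: float) -> float:
    """Approximate size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits / 8 / 1e9

for model, n in PARAMS.items():
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weight_gb(n, bits)
        verdict = "fits" if size < VRAM_GB else "does not fit"
        print(f"{model} {fmt}: ~{size:.1f} GB of weights, {verdict} in {VRAM_GB} GB")
```

Under these assumptions the weights come to roughly 4.9 and 16.1 GB for the 8B model and 42.8 and 141.2 GB for the 70B model, which is consistent with the 70B rows being empty.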

Quantization Explained:

Think of quantization as a way of compressing the model's weights, like converting a high-resolution photo into a smaller file. The Q4_K_M format uses fewer bits to represent each weight, so the model takes up less memory and less data has to be read for each generated token, which usually makes generation faster. However, this compression can come with a slight reduction in accuracy.
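To make the idea concrete, here is a toy 4-bit block quantizer. It is a simplified sketch, not llama.cpp's actual Q4_K_M algorithm, which groups blocks into larger super-blocks with their own quantized scales and minimums; the block size of 32 and the symmetric integer range [-7, 7] are assumptions chosen for illustration.

```python
import random

def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Symmetric 4-bit quantization of one block: one float scale plus ints in [-7, 7]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    q = [max(-7, min(7, round(w / scale))) for w in weights]
    return scale, q

def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Map the 4-bit integers back to approximate float weights."""
    return [scale * v for v in q]

random.seed(0)
block = [random.gauss(0.0, 0.02) for _ in range(32)]  # 32 weights (assumed block size)
scale, q = quantize_block(block)
restored = dequantize_block(scale, q)
max_err = max(abs(a - b) for a, b in zip(block, restored))

# Storage: 32 weights x 16 bits in F16, vs 32 x 4 bits plus one 16-bit scale.
f16_bits = 32 * 16
q4_bits = 32 * 4 + 16
print(f"bits per weight: F16 = {f16_bits / 32:.1f}, 4-bit block = {q4_bits / 32:.1f}")
print(f"max reconstruction error: {max_err:.5f} (scale = {scale:.5f})")
```

Storing one 16-bit scale per 32 weights brings the cost to 4.5 bits per weight, and every restored weight lies within half a scale step of the original; that rounding error is where the small accuracy loss comes from.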

Practical Considerations:

For latency-sensitive tasks: If you need fast response times, Llama3 8B is the clear choice on the RTX 5000 Ada. Its fast token generation and prompt processing make it well suited to real-time chatbots and interactive text generation.
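To translate throughput into what a user actually waits for, a rough estimate adds the time to process the prompt to the time to generate the reply. This sketch uses the Llama3 8B figures from the tables; the 1,000-token prompt and 300-token reply are hypothetical, and it ignores model loading, sampling overhead, and any slowdown at longer context lengths, since the benchmark conditions are not stated.

```python
# Rough response-time estimate for one chatbot turn on the RTX 5000 Ada (32GB),
# using the Llama3 8B throughput figures from the tables.
# The prompt and reply lengths used below are hypothetical examples.
THROUGHPUT = {  # tokens per second: (prompt processing, generation)
    "Q4_K_M": (4467.46, 89.87),
    "F16": (5835.41, 32.67),
}

def response_seconds(fmt: str, prompt_tokens: int, reply_tokens: int) -> float:
    """Prompt processing time plus generation time, ignoring all other overheads."""
    pp, tg = THROUGHPUT[fmt]
    return prompt_tokens / pp + reply_tokens / tg

for fmt in THROUGHPUT:
    t = response_seconds(fmt, prompt_tokens=1000, reply_tokens=300)
    print(f"{fmt}: ~{t:.1f} s for a 1000-token prompt and a 300-token reply")
```

With these assumptions Q4_K_M answers in about 3.6 seconds versus about 9.4 seconds for F16: generation time dominates a typical chat reply, so F16's faster prompt processing does not make up the difference.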

For demanding tasks: Llama3 70B may be better suited to complex tasks that require a deeper understanding of language, such as summarization, translation, or code generation. Keep in mind that there is no benchmark data for it on this card and that its Q4_K_M and F16 versions do not fit in 32GB of VRAM, so expect to offload part of the model to system memory or use additional GPUs.

Performance Comparison: What About Other Devices?

While we're focused on the NVIDIA RTX 5000 Ada, it's worth noting that other devices offer different performance characteristics. For instance, consider the popular Apple M1 chip:

Apple M1 Token Generation Speed:

| Model and format | Apple M1 (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M | 23 |
| Llama3 8B F16 | No data |
| Llama3 70B Q4_K_M | No data |
| Llama3 70B F16 | No data |

At 23 tokens/second for Llama3 8B Q4_K_M, the Apple M1 generates text roughly four times more slowly than the RTX 5000 Ada (89.87 tokens/second). This emphasizes the importance of choosing the right hardware for your LLM workload.

The Future of Local LLMs: A Note on Optimization

The landscape of local LLMs is evolving rapidly, as researchers and developers keep improving performance and making these models more accessible. More benchmark data for larger models like Llama3 70B will likely become available on various devices, including the RTX 5000 Ada.

FAQ:

Q: What are the key differences between Llama3 8B and Llama3 70B?

Llama3 70B has about 70 billion parameters, compared with about 8 billion for Llama3 8B, which can lead to higher accuracy on complex tasks. However, it demands significantly more processing power and memory.

The Llama3 8B is a more lightweight model, ideal for tasks that require fast response times and don't necessarily need the immense capabilities of the 70B model.

Q: What is the best device for running Llama3 models locally?

- It depends on your budget and needs. The NVIDIA RTX 5000 Ada (32GB) is a powerful choice, but other options exist. For budget-friendly setups, consider GPUs such as the GTX 1080 Ti or the RTX 3060, which have enough memory to run a quantized 8B model with decent performance.

Q: Can I run Llama3 models on my CPU?

- Yes, but performance will be significantly slower than using a GPU. CPUs are generally designed for general-purpose computing, not specialized tasks like LLM inference. However, if you're working on small projects or don't need real-time responses, a CPU might be sufficient.

Q: What is quantization, and how does it affect performance?

- Quantization is a technique that reduces the size of a model's weights by storing them with fewer bits, which can improve speed. It's like compressing a large file so it is smaller and faster to download. The Q4_K_M format, widely used for running LLMs locally, can substantially speed up token generation but may slightly reduce accuracy.
