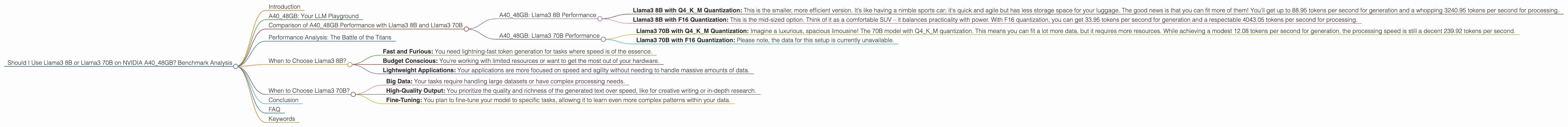

Should I Use Llama3 8B or Llama3 70B on NVIDIA A40 48GB? Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is abuzz with excitement, and rightfully so! These models can generate human-like text, translate languages, write creative content, and answer questions in detail. But choosing the right LLM can be a bit like picking the right tool for the job. Today, we're diving deep into the performance of the Llama3 8B and Llama3 70B models on the NVIDIA A40_48GB, a powerhouse GPU designed for high-performance computing. We'll analyze token generation speed and prompt processing speed to help you make the best decision for your LLM needs!

A40_48GB: Your LLM Playground

The A40_48GB is a beast of a GPU, built for demanding workloads such as running large language models. But even with such a powerful machine, the choice between Llama3 8B and Llama3 70B comes down to a balance between model size, speed, and memory consumption.

Comparison of A40_48GB Performance with Llama3 8B and Llama3 70B

Let's get down to the nitty-gritty! We'll compare Llama3 8B and Llama3 70B on the A40_48GB using two key metrics, token generation speed and prompt processing speed, at different quantization levels.

A40_48GB: Llama3 8B Performance

Llama3 8B with Q4_K_M Quantization: This is the smallest, most efficient configuration. It's like a nimble sports car: quick and agile, but with less room for luggage. The good news is that it is small enough to fit several instances on one GPU! You'll get 88.95 tokens per second for generation and a whopping 3240.95 tokens per second for processing.

Llama3 8B with F16: This is the mid-sized option, with weights stored as 16-bit floats rather than quantized. Think of it as a comfortable SUV that balances practicality with power. With F16, you get 33.95 tokens per second for generation and 4043.05 tokens per second for processing, the fastest processing figure in this comparison.

A40_48GB: Llama3 70B Performance

Llama3 70B with Q4_K_M Quantization: Imagine a luxurious, spacious limousine! The 70B model has far more parameters, which gives it more capacity but also means it needs more resources. Generation runs at a modest 12.08 tokens per second, and processing at 239.92 tokens per second.

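Llama3 70B with F16: Please note, the data for this setup is currently unavailable. At 16 bits per weight, the weights of a 70B model alone need roughly 140 GB, far more than the 48 GB on this card, so this configuration is unlikely to fit on a single A40.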
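To make the figures above easier to compare, here is a minimal Python sketch that stores them in a dictionary and computes how many times faster each 8B configuration is than the 70B model. The numbers are the ones quoted in this article; the names `BENCHMARKS` and `speedup` are only illustrative.

```python
# Throughput figures quoted in this article for the A40_48GB, in tokens per second.
# Each entry maps (model, format) to (generation speed, processing speed).
BENCHMARKS = {
    ("Llama3 8B", "Q4_K_M"): (88.95, 3240.95),
    ("Llama3 8B", "F16"): (33.95, 4043.05),
    ("Llama3 70B", "Q4_K_M"): (12.08, 239.92),
}

METRIC_INDEX = {"generation": 0, "processing": 1}


def speedup(fast, slow, metric):
    """Return how many times faster `fast` is than `slow` on the given metric."""
    i = METRIC_INDEX[metric]
    return BENCHMARKS[fast][i] / BENCHMARKS[slow][i]


# Compare every 8B configuration against the 70B baseline.
baseline = ("Llama3 70B", "Q4_K_M")
for config in BENCHMARKS:
    if config == baseline:
        continue
    for metric in METRIC_INDEX:
        ratio = speedup(config, baseline, metric)
        print(f"{config[0]} {config[1]} vs 70B Q4_K_M, {metric}: {ratio:.1f}x")
```

Running it prints one ratio per configuration and metric, which is a quick way to see the size of the gap before reading the analysis.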

Performance Analysis: The Battle of the Titans

Token Generation Speed: While Llama3 70B delivers the most powerful text output, Llama3 8B takes the lead in generation speed with both Q4_K_M (88.95 tokens per second) and F16 (33.95 tokens per second), compared with 12.08 for the 70B model. That makes the 8B model roughly 7x and nearly 3x faster, respectively, although it has a smaller capacity.

Prompt Processing Speed: Both Llama3 8B configurations have a significant advantage here as well: 3240.95 and 4043.05 tokens per second, versus 239.92 for Llama3 70B with Q4_K_M. The smaller model is therefore faster both at reading the prompt and at generating the reply, which makes it a good choice for tasks with long inputs, such as summarizing documents.
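The two metrics can be combined into a rough response-time estimate: the time to process the prompt plus the time to generate the reply. The sketch below assumes a single request with no batching and ignores model loading and other overhead; the workload of 1000 prompt tokens and 200 output tokens is a made-up example, and `estimate_latency_seconds` is an illustrative name.

```python
def estimate_latency_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Rough end-to-end time: process the prompt, then generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Hypothetical workload, chosen only for illustration.
PROMPT_TOKENS = 1000
OUTPUT_TOKENS = 200

# (processing tokens/s, generation tokens/s) as quoted in this article.
SPEEDS = {
    "Llama3 8B Q4_K_M": (3240.95, 88.95),
    "Llama3 8B F16": (4043.05, 33.95),
    "Llama3 70B Q4_K_M": (239.92, 12.08),
}

for name, (processing_tps, generation_tps) in SPEEDS.items():
    seconds = estimate_latency_seconds(
        PROMPT_TOKENS, OUTPUT_TOKENS, processing_tps, generation_tps
    )
    print(f"{name}: about {seconds:.1f} s")
```

For most chat-style workloads the generation term dominates, so generation speed is usually the number to watch first.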

Quantization: Q4_K_M makes Llama3 8B about 2.6x faster at generation than F16 (88.95 vs. 33.95 tokens per second), while F16 is about 25% faster at processing (4043.05 vs. 3240.95 tokens per second). This is all about finding the right balance between precision and speed. Imagine it like choosing the level of detail in a video game: Q4_K_M gives up some detail for faster generation and a smaller memory footprint, while F16 keeps the full detail but generates tokens much more slowly.
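The memory side of this trade-off can be estimated with simple arithmetic: weight size is roughly the parameter count times the bits per weight, divided by 8. The sketch below uses nominal parameter counts of 8 and 70 billion and an approximate average of 4.85 bits per weight for Q4_K_M; both are assumptions for illustration rather than figures from the benchmark, and the 4 GB reserve for context and activations is a guess.

```python
GPU_MEMORY_GB = 48  # the A40_48GB discussed in this article

# Assumptions for illustration, not measured values:
PARAMS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}  # nominal parameter counts
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}  # Q4_K_M is an approximate average


def weight_size_gb(model, fmt):
    """Approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return PARAMS[model] * BITS_PER_WEIGHT[fmt] / 8 / 1e9


def fits_on_gpu(model, fmt, reserve_gb=4.0):
    """Check whether the weights plus an assumed reserve fit in GPU memory."""
    return weight_size_gb(model, fmt) + reserve_gb <= GPU_MEMORY_GB


for model in PARAMS:
    for fmt in BITS_PER_WEIGHT:
        size = weight_size_gb(model, fmt)
        verdict = "fits" if fits_on_gpu(model, fmt) else "does not fit"
        print(f"{model} {fmt}: ~{size:.1f} GB, {verdict} in {GPU_MEMORY_GB} GB")
```

Under these assumptions, Llama3 70B in F16 is the only combination that does not fit on a single card, which would be consistent with the missing benchmark result for that setup.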

When to Choose Llama3 8B?

Here’s when the smaller Llama3 8B model is the champion:

- Fast and Furious: You need lightning-fast token generation for tasks where speed is of the essence.

- Budget Conscious: You're working with limited resources or want to get the most out of your hardware.

- Lightweight Applications: Your applications are more focused on speed and agility without needing to handle massive amounts of data.

When to Choose Llama3 70B?

The Llama3 70B model is a better fit when:

- Complex Tasks: Your tasks have complex processing needs that benefit from the larger model's extra capacity.

- High-Quality Output: You prioritize the quality and richness of the generated text over speed, like for creative writing or in-depth research.

- Fine-Tuning: You plan to fine-tune your model to specific tasks, allowing it to learn even more complex patterns within your data.

Conclusion

The choice between Llama3 8B and Llama3 70B on the A40_48GB depends on your specific needs. The 8B model offers a powerful blend of speed and efficiency, generating tokens roughly 3x to 7x faster, while the 70B model trades that speed for more capacity and higher-quality text. Consider your application's requirements for speed, memory, and output quality to make the right call.

FAQ

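Q: Can I run both Llama3 8B and Llama3 70B on the same A40_48GB? A: It depends on the quantization and on how many instances of each model you want to run simultaneously. Llama3 70B with Q4_K_M already takes up most of the 48 GB, so adding even a quantized 8B model leaves very little memory for context. Several 8B instances on their own fit comfortably.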

Q: Why is Llama3 70B slower for token generation than Llama3 8B on A40_48GB? A: This primarily comes down to the size of the model. A 70B model has almost nine times as many parameters, and its weights have to be read from GPU memory for every generated token, making it inherently slower at generating text.
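A back-of-the-envelope way to see this: if every weight must be read once per generated token, generation speed cannot exceed memory bandwidth divided by the size of the weights. The sketch below assumes an A40 memory bandwidth of 696 GB/s (taken from NVIDIA's published specification, so verify it for your card) and approximate weight sizes; neither comes from the benchmark itself, and real throughput will be lower than this ceiling.

```python
# Assumed from NVIDIA's published A40 specification; verify for your hardware.
A40_BANDWIDTH_GB_S = 696.0


def max_generation_tps(weight_size_gb, bandwidth_gb_s=A40_BANDWIDTH_GB_S):
    """Upper bound on tokens/s if all weights are read once per token."""
    return bandwidth_gb_s / weight_size_gb


# name -> (approximate weight size in GB [assumed], measured tokens/s from this article)
CONFIGS = {
    "Llama3 8B Q4_K_M": (4.85, 88.95),
    "Llama3 8B F16": (16.0, 33.95),
    "Llama3 70B Q4_K_M": (42.4, 12.08),
}

for name, (size_gb, measured_tps) in CONFIGS.items():
    bound = max_generation_tps(size_gb)
    print(f"{name}: ceiling ~{bound:.0f} tokens/s, measured {measured_tps} tokens/s")
```

Under these assumptions the measured figures sit below their ceilings in the same order, which suggests that model size, rather than raw compute, explains most of the gap in generation speed.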

Q: What is quantization, and how does it affect performance? A: Quantization is like reducing the number of colors in an image. It decreases the precision of the model's weights, reducing its memory footprint and increasing its speed. Think of it as trading some detail for faster results.
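As a toy illustration of that trade-off, the sketch below rounds a handful of made-up weights to 4-bit integer codes with a single shared scale, then converts them back and measures the error. This is plain symmetric round-to-nearest quantization, chosen for simplicity; it is not the scheme Q4_K_M actually uses, which is more elaborate.

```python
def quantize_4bit(weights):
    """Symmetric round-to-nearest quantization to integer codes in [-7, 7]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-7, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Map integer codes back to approximate floating-point weights."""
    return [c * scale for c in codes]


# Made-up example weights, for illustration only.
weights = [0.12, -0.53, 0.98, -0.07, 0.31]
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)

# The worst-case error is about half of one quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print("codes:", codes)
print("restored:", [round(r, 3) for r in restored])
print("max error:", round(max_error, 4), "step size:", round(scale, 4))
```

Each weight now needs only 4 bits plus a share of the scale instead of 16 bits, at the cost of the small rounding error printed at the end.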

Q: Can I use a cheaper GPU with Llama3 8B or Llama3 70B? A: It depends on the GPU you're considering! While the A40_48GB handles the configurations benchmarked here, smaller GPUs like the NVIDIA GeForce RTX 3090 can also be a good option, especially for Llama3 8B. To find out which GPUs are compatible, check out the official llama.cpp documentation.

Q: What about other LLMs, like GPT-3? A: The world of LLMs is vast! While we’ve focused on comparing Llama3 8B and Llama3 70B on the A40_48GB, you can find similar benchmark data for other models online.

Keywords

Llama3, LLM, A40_48GB, NVIDIA, GPU, Benchmark, Performance, Token Speed, Quantization, Q4_K_M, F16, Generation, Processing, Comparison, LLM Inference, Natural Language Processing, Speed, Efficiency, Memory, Data Handling, Application Requirements, GPT-3, GeForce RTX 3090, llama.cpp, Open-source, OpenAI, AI, Deep Learning, Machine Learning