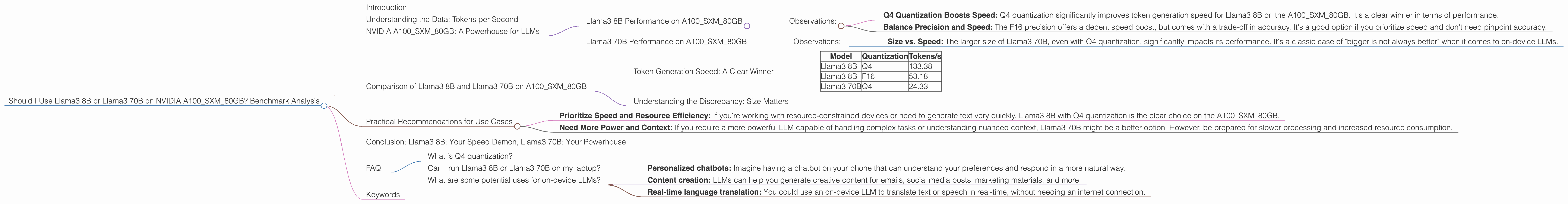

Should I Use Llama3 8B or Llama3 70B on NVIDIA A100 SXM 80GB? Benchmark Analysis

Introduction

The world of large language models (LLMs) is exploding, with new models and capabilities emerging every day. While cloud-based LLMs like ChatGPT are incredibly powerful, they often come with limitations like privacy concerns and the need for constant internet access. That's where on-device LLMs come in – they offer the flexibility and power to run these models locally, without relying on the cloud.

In this article, we'll dive deep into the performance of two popular on-device LLMs, Llama3 8B and Llama3 70B, running on the NVIDIA A100 SXM 80GB GPU. We'll compare their token generation speeds, analyze their strengths and weaknesses, and help you decide which model best fits your needs.

But first, let's look at what we're comparing. Llama3 8B and Llama3 70B are both powerful language models, but they differ significantly in size and complexity. Llama3 8B, with roughly 8 billion parameters, is the smaller of the two ("smaller" is relative here; it's still a massive model), while Llama3 70B, with roughly 70 billion parameters, is much larger and more capable (though still not as large as GPT-3). Imagine comparing a bicycle to a motorcycle: both can get you places, but the motorcycle has more power and can handle tougher terrain.

Understanding the Data: Tokens per Second

Before we jump into the nitty-gritty, let's define what we mean by "token generation speed." Think of a language model as a sentence-building machine. It works with tokens, which are small pieces of text such as whole words, parts of words, or punctuation marks, and it produces its output one token at a time. Token generation speed tells us how quickly the model can produce new tokens, which directly determines how fast it can generate text.

We'll be using tokens per second (tokens/s) as our primary metric. The higher the tokens per second, the faster the model can generate text.
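As a minimal sketch of how this metric is computed, the snippet below divides the number of generated tokens by the elapsed wall-clock time. The `fake_generate` function is a hypothetical stand-in for a real model call (it is not the tool that produced the numbers in this article); swap in your own inference call to measure an actual model.

```python
import time


def tokens_per_second(num_tokens: int, elapsed_seconds: float) -> float:
    """Return throughput as generated tokens divided by elapsed seconds."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return num_tokens / elapsed_seconds


def measure(generate, num_tokens: int) -> float:
    """Time one call to `generate` and return its throughput in tokens/s."""
    start = time.perf_counter()
    tokens = generate(num_tokens)
    elapsed = time.perf_counter() - start
    return tokens_per_second(len(tokens), elapsed)


def fake_generate(num_tokens: int) -> list[str]:
    """Hypothetical stand-in for a model: sleeps briefly, returns dummy tokens."""
    time.sleep(0.01)
    return ["tok"] * num_tokens


if __name__ == "__main__":
    print(f"{measure(fake_generate, 50):.1f} tokens/s (dummy workload)")
```

In practice you would average over several runs and report prompt processing separately from generation, since the two phases behave differently.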

NVIDIA A100 SXM 80GB: A Powerhouse for LLMs

The NVIDIA A100 SXM 80GB is a data-center GPU designed for high-performance computing workloads such as AI inference. Its 80 GB of memory and high processing power make it well suited to running large and complex LLMs.

Llama3 8B Performance on A100 SXM 80GB

Let's start with the smaller Llama3 8B model. Here's how it performs on the A100 SXM 80GB:

- Llama3_8B_Q4_K_M_Generation: 133.38 tokens/s - This is the performance with Q4 quantization, a technique that shrinks the model while largely preserving accuracy (more on that later!). Think of it like compressing a photo to save space without losing too much detail.

- Llama3_8B_F16_Generation: 53.18 tokens/s - Here, the model keeps its weights in 16-bit floating point (F16), which is more precise than Q4 but, in this benchmark, about 2.5 times slower. It's like using the full-resolution photo: it looks sharper, but it's larger and takes longer to load.

Observations:

- Q4 Quantization Boosts Speed: Q4 quantization raises token generation speed for Llama3 8B on the A100 SXM 80GB from 53.18 to 133.38 tokens/s, roughly a 2.5x improvement. It's the clear winner in terms of performance.

- Balance Precision and Speed: F16 keeps the weights at higher precision, but that comes with a trade-off in speed. It's a good option if you prioritize output fidelity and can accept lower throughput.

Llama3 70B Performance on A100 SXM 80GB

Now, let's move on to the larger Llama3 70B model. Here's what we saw:

- Llama3_70B_Q4_K_M_Generation: 24.33 tokens/s - Even with Q4 quantization, the larger Llama3 70B model is significantly slower than Llama3 8B on the A100 SXM 80GB.

Observations:

- Size vs. Speed: The larger size of Llama3 70B, even with Q4 quantization, significantly impacts its performance. It's a classic case of "bigger is not always better" when it comes to on-device LLMs.

Comparison of Llama3 8B and Llama3 70B on A100 SXM 80GB

Token Generation Speed: A Clear Winner

Let's put these numbers into a table for easy comparison:

| Model | Quantization | Tokens/s |
|---|---|---|
| Llama3 8B | Q4 | 133.38 |
| Llama3 8B | F16 | 53.18 |
| Llama3 70B | Q4 | 24.33 |

Based on the available data, Llama3 8B with Q4 quantization is the clear winner in terms of token generation speed on the A100 SXM 80GB. At 133.38 tokens/s versus 24.33 tokens/s, it's about 5.5 times faster than Llama3 70B. This is a significant difference that could be crucial for real-time applications or tasks requiring fast responses.
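To make the ratios explicit, here is a small sketch that recomputes the speedups from the three measurements in the table. The dictionary layout and the `speedup` helper are just illustrative choices; only the tokens/s figures come from the benchmark.

```python
# Generation throughput in tokens/s, copied from the comparison table.
results = {
    ("Llama3 8B", "Q4"): 133.38,
    ("Llama3 8B", "F16"): 53.18,
    ("Llama3 70B", "Q4"): 24.33,
}


def speedup(fast: tuple[str, str], slow: tuple[str, str]) -> float:
    """Return how many times faster `fast` generates tokens than `slow`."""
    return results[fast] / results[slow]


if __name__ == "__main__":
    baseline = ("Llama3 70B", "Q4")
    for config in results:
        model, quant = config
        print(f"{model} {quant}: {speedup(config, baseline):.2f}x vs 70B Q4")
    q4_vs_f16 = speedup(("Llama3 8B", "Q4"), ("Llama3 8B", "F16"))
    print(f"Llama3 8B Q4 vs F16: {q4_vs_f16:.2f}x")
```

The 8B Q4 configuration comes out at roughly 5.5x the 70B Q4 throughput, and roughly 2.5x the 8B F16 throughput.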

Understanding the Discrepancy: Size Matters

The difference in performance between Llama3 8B and Llama3 70B is largely due to their size. Llama3 70B has roughly nine times as many parameters (about 70 billion versus 8 billion), so every generated token requires far more weights to be read and far more computation to be done. Think of it like trying to solve a complex puzzle versus a simple one: the larger puzzle takes much longer.

Practical Recommendations for Use Cases

The best LLM for your needs depends heavily on your use case, but here are some general guidelines based on our benchmark analysis:

- Prioritize Speed and Resource Efficiency: If you're working under tight resource constraints or need to generate text very quickly, Llama3 8B with Q4 quantization is the clear choice on the A100 SXM 80GB.

- Need More Power and Context: If you require a more powerful LLM capable of handling complex tasks or understanding nuanced context, Llama3 70B might be a better option. However, be prepared for slower processing and increased resource consumption.
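To put these guidelines in concrete terms, the sketch below estimates how long each configuration would take to generate a response of a given length at the measured rates. The 500-token response length is a hypothetical example, and the estimate covers generation only; it ignores prompt processing and any other overhead.

```python
# Measured generation rates in tokens/s from this article's benchmark.
RATES = {
    "Llama3 8B Q4": 133.38,
    "Llama3 8B F16": 53.18,
    "Llama3 70B Q4": 24.33,
}


def generation_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Estimate generation time, ignoring prompt processing and overhead."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


if __name__ == "__main__":
    response_tokens = 500  # hypothetical response length, chosen for illustration
    for name, rate in RATES.items():
        seconds = generation_seconds(response_tokens, rate)
        print(f"{name}: about {seconds:.1f} s for {response_tokens} tokens")
```

For a response of that length, the gap is a few seconds versus roughly twenty, which matters far more for interactive use than for batch jobs.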

Conclusion: Llama3 8B: Your Speed Demon, Llama3 70B: Your Powerhouse

The choice between Llama3 8B and Llama3 70B on the A100 SXM 80GB ultimately boils down to your specific needs. Llama3 8B is a nimble speed demon, while Llama3 70B is a slower but more capable powerhouse.

By understanding their strengths and weaknesses, you can make an informed choice that aligns with your requirements for on-device LLM performance and accuracy.

FAQ

What is Q4 quantization?

Quantization is a process that reduces the size of a model by representing its parameters with fewer bits. It aims to reduce memory usage and computation, leading to faster inference. Q4 quantization uses only 4 bits to represent each parameter, significantly reducing the overall model size compared to traditional floating-point representations.
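As a rough back-of-the-envelope sketch, the memory needed for the weights alone is the parameter count times the bits per parameter, divided by eight to get bytes. The figures below assume nominal parameter counts of 8 and 70 billion and exactly 4 or 16 bits per parameter; real quantized files are somewhat larger because they store extra metadata such as scaling factors, and inference needs additional memory beyond the weights.

```python
def weight_gigabytes(num_params: float, bits_per_param: float) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


# Nominal parameter counts, assumed from the model names.
MODELS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}
# Nominal bit widths; actual quantized formats add some overhead.
FORMATS = {"F16": 16, "Q4": 4}

if __name__ == "__main__":
    for model, params in MODELS.items():
        for fmt, bits in FORMATS.items():
            size = weight_gigabytes(params, bits)
            print(f"{model} {fmt}: about {size:.0f} GB of weights")
```

Under these assumptions, Llama3 8B drops from about 16 GB at F16 to about 4 GB at Q4, and Llama3 70B comes to about 35 GB at Q4, compared with about 140 GB at F16, which is more than a single 80 GB card holds.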

Can I run Llama3 8B or Llama3 70B on my laptop?

You might be able to run these models on a powerful laptop with a dedicated GPU, but performance will be significantly lower than what we saw on the A100 SXM 80GB. You'll need a GPU with enough memory (VRAM) to hold the model weights and handle the computations, which makes the quantized Llama3 8B a far more realistic candidate than Llama3 70B.

What are some potential uses for on-device LLMs?

On-device LLMs can be used for a wide range of applications, including:

- Personalized chatbots: Imagine having a chatbot on your phone that can understand your preferences and respond in a more natural way.

- Content creation: LLMs can help you generate creative content for emails, social media posts, marketing materials, and more.

- Real-time language translation: You could use an on-device LLM to translate text or speech in real-time, without needing an internet connection.
