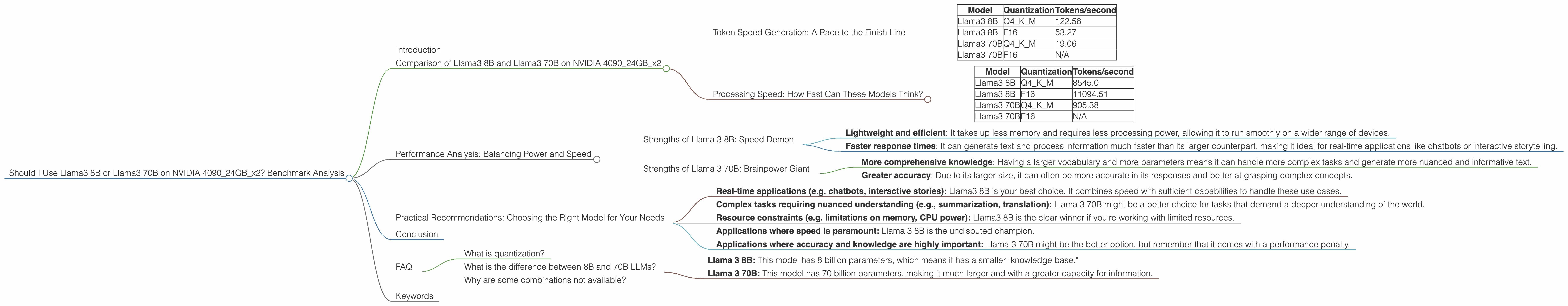

Should I Use Llama 3 8B or Llama 3 70B on NVIDIA RTX 4090 24GB x2? Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is evolving rapidly, with new models and optimizations appearing all the time. One of the biggest challenges for developers is choosing the right model for their needs and making sure it runs efficiently on their hardware. This article examines the performance of Llama 3 8B and Llama 3 70B on a setup with two NVIDIA RTX 4090 24GB cards (48 GB of VRAM in total), to help you decide which model is best for your use case.

Think of an LLM as a giant library. The size of the model (8B or 70B parameters) is like the number of books on the shelves: a bigger library holds more information, but consulting it for every answer takes more work. The dual-4090 setup is the super-fast librarian who helps you retrieve that information quickly.

We'll compare the models by throughput in tokens per second, both for generating text and for processing the input prompt. We'll also look at how performance changes with different quantization levels; quantization is a technique that reduces the memory footprint of an LLM while trying to preserve as much of its accuracy as possible. Buckle up, it's going to be a wild ride through the exciting world of AI!

Comparison of Llama 3 8B and Llama 3 70B on NVIDIA RTX 4090 24GB x2

Token Generation Speed: A Race to the Finish Line

Let's start with the most obvious benchmark: token generation speed. This is how many tokens (words or pieces of words) the model produces per second while writing its response. Both models run on the same dual RTX 4090 setup. The results are summarized in the table below:

| Model | Quantization | Generation speed (tokens/s) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 122.56 |
| Llama 3 8B | F16 | 53.27 |
| Llama 3 70B | Q4_K_M | 19.06 |
| Llama 3 70B | F16 | N/A |

Key Takeaways:

- Llama 3 8B is significantly faster than Llama 3 70B: at Q4_K_M it generates about 6.4x as many tokens per second (122.56 vs 19.06). This is expected, since the 70B model has almost nine times as many parameters and every generated token requires reading all of its weights.

- Quantization boosts generation speed: for Llama 3 8B, Q4_K_M is about 2.3x faster than F16 (122.56 vs 53.27 tokens/s). Token generation is largely limited by memory bandwidth, so storing each weight in roughly 4 to 5 bits instead of 16 means far less data to move for every token. Think of quantization as a clever way to "compress" the model to make it more efficient.

- Llama 3 70B at F16: no result is listed. At 16 bits per weight, 70 billion parameters need roughly 140 GB for the weights alone, far more than the 48 GB of combined VRAM on two 4090s, so this configuration cannot run entirely on these GPUs.
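To make the generation table concrete, here is a minimal Python sketch that computes the speed ratios between configurations and estimates how long a reply would take. It assumes the generation rate stays constant for the whole reply and ignores prompt processing; the 500-token reply length and the helper name `seconds_to_generate` are illustrative choices, not part of the benchmark.

```python
# Token-generation throughput from the benchmark table (tokens/second).
# Llama 3 70B at F16 has no measurement, so it is left out.
GEN_TPS = {
    ("Llama 3 8B", "Q4_K_M"): 122.56,
    ("Llama 3 8B", "F16"): 53.27,
    ("Llama 3 70B", "Q4_K_M"): 19.06,
}


def seconds_to_generate(n_tokens: int, tokens_per_second: float) -> float:
    """Time to emit n_tokens at a steady generation rate (prompt time not included)."""
    return n_tokens / tokens_per_second


if __name__ == "__main__":
    # Model-size gap at the same quantization level.
    size_ratio = GEN_TPS[("Llama 3 8B", "Q4_K_M")] / GEN_TPS[("Llama 3 70B", "Q4_K_M")]
    # Quantization gap for the same model.
    quant_ratio = GEN_TPS[("Llama 3 8B", "Q4_K_M")] / GEN_TPS[("Llama 3 8B", "F16")]
    print(f"8B vs 70B (Q4_K_M): {size_ratio:.1f}x")
    print(f"Q4_K_M vs F16 (8B): {quant_ratio:.1f}x")

    reply_len = 500  # illustrative reply length, not from the benchmark
    for (model, quant), tps in GEN_TPS.items():
        print(f"{model} {quant}: {seconds_to_generate(reply_len, tps):.1f} s for {reply_len} tokens")
```

With these numbers, a 500-token answer takes roughly 4 seconds on Llama 3 8B Q4_K_M and about 26 seconds on Llama 3 70B Q4_K_M.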

Prompt Processing Speed: How Fast Can These Models Read?

In addition to generation speed, it's important to consider how quickly these models process the input prompt (often called prefill) before the first output token appears. This is also measured in tokens per second. Here's what we found:

| Model | Quantization | Prompt processing speed (tokens/s) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 8545.0 |
| Llama 3 8B | F16 | 11094.51 |
| Llama 3 70B | Q4_K_M | 905.38 |
| Llama 3 70B | F16 | N/A |

Key Takeaways:

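- The performance gap is even more pronounced: prompt processing is much faster than generation for both models, but the 8B model processes prompts about 9.4x faster than the 70B model (8545.0 vs 905.38 tokens/s), compared with a 6.4x gap in generation.

- F16 beats Q4_K_M for prompt processing: for Llama 3 8B, F16 reaches 11094.51 tokens/s versus 8545.0 for Q4_K_M, about 30% faster. Note that F16 is the unquantized 16-bit baseline rather than a quantization level. A likely explanation is that prompt processing handles many tokens at once and is limited more by compute than by memory bandwidth, so the extra work of unpacking 4-bit weights outweighs the memory savings. The best format depends on the workload as well as on the model and hardware.

- Llama 3 70B for processing: although roughly an order of magnitude slower than 8B, the 70B model still reads about 905 tokens per second, so a 2,000-token prompt takes a little over two seconds.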
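The same arithmetic applies to prompt processing. This sketch, assuming a hypothetical 2,000-token prompt and a constant processing rate, estimates how long each measured configuration spends reading the prompt before it can start generating; the function name `seconds_to_process` is illustrative.

```python
# Prompt-processing throughput from the benchmark table (tokens/second).
# Llama 3 70B at F16 has no measurement, so it is left out.
PROMPT_TPS = {
    ("Llama 3 8B", "Q4_K_M"): 8545.0,
    ("Llama 3 8B", "F16"): 11094.51,
    ("Llama 3 70B", "Q4_K_M"): 905.38,
}


def seconds_to_process(prompt_tokens: int, tokens_per_second: float) -> float:
    """Approximate time spent reading the prompt before the first output token."""
    return prompt_tokens / tokens_per_second


if __name__ == "__main__":
    prompt_len = 2000  # illustrative prompt length, not from the benchmark
    for (model, quant), tps in PROMPT_TPS.items():
        print(f"{model} {quant}: {seconds_to_process(prompt_len, tps):.2f} s")
```

Under these assumptions the 8B model reads the prompt in under a quarter of a second, while the 70B model needs roughly 2.2 seconds.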

Performance Analysis: Balancing Power and Speed

Strengths of Llama 3 8B: Speed Demon

Llama 3 8B is a clear winner in the speed department. It shines in scenarios that require quick text generation or processing tasks. Here's why it's a great choice:

- Lightweight and efficient: it needs far less memory (about 16 GB for its weights even at F16, so it fits on a single 24 GB card) and less compute, allowing it to run smoothly on a wider range of hardware.

- Faster response times: It can generate text and process information much faster than its larger counterpart, making it ideal for real-time applications like chatbots or interactive storytelling.

Think of Llama 3 8B as a nimble sprinter. It might not have the same strength as a heavyweight, but it's incredibly quick and agile, perfect for sprints and quick bursts of energy.

Strengths of Llama 3 70B: Brainpower Giant

Despite its slower speed, Llama 3 70B packs a punch in terms of its knowledge and understanding. Here's why it's a good choice:

- More comprehensive knowledge: with nearly nine times as many parameters (both models share the same tokenizer and vocabulary), it can handle more complex tasks and generate more nuanced and informative text.

- Greater accuracy: Due to its larger size, it can often be more accurate in its responses and better at grasping complex concepts.

Think of Llama 3 70B as a marathon runner. It might take a bit longer to get going, but it has incredible endurance and can handle long, demanding tasks with ease.

Practical Recommendations: Choosing the Right Model for Your Needs

- Real-time applications (e.g., chatbots, interactive stories): Llama 3 8B is your best choice. It combines speed with enough capability to handle these use cases.

- Complex tasks requiring nuanced understanding (e.g., summarization, translation): Llama 3 70B might be a better choice for tasks that demand a deeper understanding of the world.

- Resource constraints (e.g., limited GPU memory or compute): Llama 3 8B is the clear winner if you're working with limited resources.

- Applications where speed is paramount: Llama 3 8B is the undisputed champion.

- Applications where accuracy and knowledge are highly important: Llama 3 70B might be the better option, but remember that it comes with a performance penalty.
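One practical way to apply these recommendations is to check each measured configuration against a latency budget. This sketch combines both benchmark tables into a rough end-to-end estimate (prompt time plus generation time). The `Config` class, the `fits_budget` helper, and the example workload (1,000-token context, 200-token reply, 5-second budget) are all hypothetical, and the estimate ignores model loading, sampling overhead, and concurrent requests.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Config:
    model: str
    quant: str
    prompt_tps: float  # prompt-processing speed, tokens/second
    gen_tps: float     # token-generation speed, tokens/second


# Measured configurations from the two tables (Llama 3 70B F16 has no data).
CONFIGS = [
    Config("Llama 3 8B", "Q4_K_M", 8545.0, 122.56),
    Config("Llama 3 8B", "F16", 11094.51, 53.27),
    Config("Llama 3 70B", "Q4_K_M", 905.38, 19.06),
]


def estimated_latency(cfg: Config, prompt_tokens: int, output_tokens: int) -> float:
    """Rough end-to-end time: read the prompt, then generate the reply."""
    return prompt_tokens / cfg.prompt_tps + output_tokens / cfg.gen_tps


def fits_budget(prompt_tokens: int, output_tokens: int, budget_s: float) -> list[Config]:
    """Return the measured configurations expected to finish within budget_s seconds."""
    return [
        cfg for cfg in CONFIGS
        if estimated_latency(cfg, prompt_tokens, output_tokens) <= budget_s
    ]


if __name__ == "__main__":
    # Hypothetical chatbot turn: 1,000-token context, 200-token reply, 5-second budget.
    for cfg in CONFIGS:
        print(f"{cfg.model} {cfg.quant}: {estimated_latency(cfg, 1000, 200):.1f} s")
    print("Within budget:", [(c.model, c.quant) for c in fits_budget(1000, 200, 5.0)])
```

For that workload both Llama 3 8B configurations come in under 5 seconds (about 1.7 s and 3.8 s), while Llama 3 70B Q4_K_M needs about 11.6 s, which matches the advice to prefer the 8B model for real-time use.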

Conclusion

The choice between Llama 3 8B and Llama 3 70B is ultimately a trade-off between power and speed. Llama 3 8B is a fast and efficient option, perfect for applications that demand quick responses. Llama 3 70B is a powerful but slower choice, ideal for complex tasks that require a deeper understanding of the world.

By understanding the strengths and weaknesses of each model and the nature of your application, you can make an informed decision that will help you achieve your objectives.

FAQ

What is quantization?

Quantization is a technique that reduces the memory footprint of an LLM while preserving most of its accuracy. Think of it like a compression algorithm that shrinks a large file without losing too much information.

For example, you might compress a photo to save space on your phone. Quantization works similarly: it reduces the number of bits used to store each of the model's weights, for example from 16 bits in F16 to roughly 4 to 5 bits in Q4_K_M, making the model far more compact.
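A back-of-the-envelope way to see why quantization matters on this setup is to multiply the parameter count by the bits stored per weight. This sketch assumes nominal parameter counts (8 and 70 billion) and an average of about 4.85 bits per weight for Q4_K_M, which is an approximation rather than an exact specification. It counts weights only, so the KV cache and runtime buffers need additional memory on top.

```python
# Nominal parameter counts (the "8B" and "70B" in the model names).
PARAMS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}

# Approximate bits stored per weight. F16 is exactly 16; the Q4_K_M value is an
# assumed average (the format mixes block types and scale factors).
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}

TOTAL_VRAM_GB = 2 * 24  # two RTX 4090 cards with 24 GB each


def weight_gb(model: str, quant: str) -> float:
    """Size of the weights alone in gigabytes (1 GB = 1e9 bytes)."""
    return PARAMS[model] * BITS_PER_WEIGHT[quant] / 8 / 1e9


if __name__ == "__main__":
    for model in PARAMS:
        for quant in BITS_PER_WEIGHT:
            size = weight_gb(model, quant)
            verdict = "weights fit" if size < TOTAL_VRAM_GB else "weights do not fit"
            print(f"{model} {quant}: ~{size:.0f} GB, {verdict} in {TOTAL_VRAM_GB} GB")
```

This gives about 140 GB for Llama 3 70B at F16, far beyond the 48 GB on two 4090s and consistent with that configuration having no result in the benchmark tables, while Llama 3 70B at Q4_K_M comes to roughly 42 GB.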

What is the difference between 8B and 70B LLMs?

The number before the "B" is the number of parameters in the model, in billions. Parameters are the learned weights that encode what an LLM "knows." In this case, we have:

- Llama 3 8B: This model has 8 billion parameters, which means it has a smaller "knowledge base."

- Llama 3 70B: This model has 70 billion parameters, making it much larger and with a greater capacity for information.

Why are some combinations not available?

Some configurations may not fit in the available GPU memory or may not be supported, and some benchmarks were not yet available when this article was written.
