Should I Use Llama3 8B or Llama3 70B on NVIDIA 3090 24GB x2? Benchmark Analysis

Introduction

Large language models (LLMs) have opened up exciting possibilities for natural language processing tasks like text generation, translation, and question answering. But choosing the right LLM for your needs can be overwhelming, especially once you factor in the hardware you actually have. Today we're staging a showdown between two popular LLMs, Llama 3 8B and Llama 3 70B, running on a dual NVIDIA RTX 3090 24GB setup. We'll compare their generation and prompt-processing speeds to help you decide which model is the right fit for your project.

Understanding the Players: Llama 3 8B vs. Llama 3 70B

Imagine a world where you can have a conversation with a computer that understands your needs and responds in a way that feels natural. That's the promise of LLMs, and Llama 3 is a powerful player in this space.

But "Llama 3" isn't just one model; it's a family. The "8B" in Llama 3 8B refers to the model's size - 8 billion parameters. This smaller model is known for its speed and efficiency, making it a great choice for resource-constrained devices. The "70B" in Llama 3 70B means 70 billion parameters - a much larger model that boasts enhanced capabilities, but comes with a higher computational cost.

The Battlefield: NVIDIA 3090 24GB x2

We're deploying these LLMs on a pair of NVIDIA RTX 3090 GPUs with 24 GB of VRAM each, 48 GB in total. These graphics powerhouses excel at parallel processing, which is essential for the huge matrix computations LLMs perform. Memory matters just as much: the model's weights have to fit in VRAM, and a 70B model is too large for a single 24 GB card even at 4-bit quantization, so it must be split across both GPUs.
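Whether a model fits on this rig comes down mostly to the size of its weights, which you can estimate as parameters × bits per weight ÷ 8 bytes. The sketch below applies that rule of thumb. The nominal parameter counts (8e9 and 70e9) and the figure of about 4.85 bits per weight for Q4_K_M are assumptions for illustration, and the estimate ignores the KV cache, activations and runtime overhead, so real memory use will be somewhat higher.

```python
# Rough weight-memory estimate: parameters x bits per weight / 8 bytes.
# Assumptions (not from the benchmark): nominal parameter counts, ~4.85
# bits/weight for Q4_K_M, 16 bits/weight for F16. KV cache, activations and
# runtime overhead are ignored, so actual usage is higher than this.

GPU_MEMORY_GB = 24.0  # one RTX 3090
NUM_GPUS = 2

BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}  # Q4_K_M value is approximate
PARAMS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}


def weight_size_gb(params: float, bits_per_weight: float) -> float:
    """Size of the weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def placement(size_gb: float) -> str:
    """Classify where weights of this size could live on a 2 x 24 GB rig."""
    if size_gb <= GPU_MEMORY_GB:
        return "fits on one GPU"
    if size_gb <= GPU_MEMORY_GB * NUM_GPUS:
        return "needs both GPUs"
    return "does not fit in VRAM"


for model, params in PARAMS.items():
    for quant, bpw in BITS_PER_WEIGHT.items():
        size = weight_size_gb(params, bpw)
        print(f"{model:12} {quant:7} ~{size:6.1f} GB  {placement(size)}")
```

Under these assumptions the 8B model fits on one card in either format, the 70B model at Q4_K_M needs both cards, and the 70B model at F16 (about 140 GB of weights) does not fit at all.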

Performance Analysis: Speed vs. Size

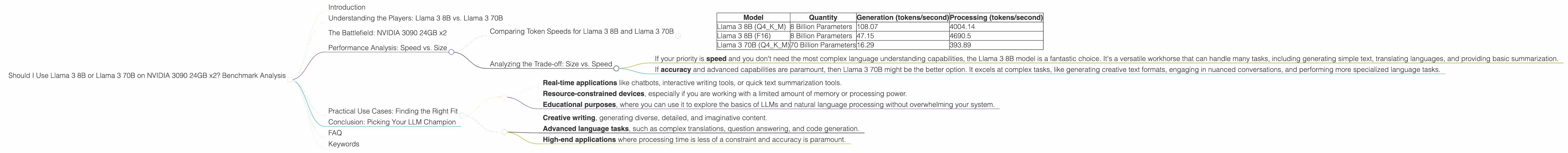

Comparing Token Speeds for Llama 3 8B and Llama 3 70B

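Let's start with the core performance metric: how many tokens per second each model can handle. Tokens are the words or word fragments that LLMs read and write; think of them as the LEGO blocks from which sentences and paragraphs are built. The table reports two rates. Generation is how fast the model produces new output tokens, which it must do one after another. Processing (prompt processing) is how fast it reads the input prompt, which can be done many tokens at a time and is therefore much faster.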

| Model | Quantization | Parameters | Generation (tokens/second) | Processing (tokens/second) |
|---|---|---|---|---|
| Llama 3 8B | Q4_K_M | 8 billion | 108.07 | 4004.14 |
| Llama 3 8B | F16 | 8 billion | 47.15 | 4690.5 |
| Llama 3 70B | Q4_K_M | 70 billion | 16.29 | 393.89 |

The results are striking! The smaller Llama 3 8B is much faster, and precisely because it has fewer parameters: every token requires far less computation and far less data moved through memory. At the same Q4_K_M quantization, the 8B model generates text about 6.6 times faster than the 70B model (108.07 vs. 16.29 tokens/second) and processes prompts about 10 times faster (4004.14 vs. 393.89 tokens/second).
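If you want to compare other pairs of configurations, the speed ratios are simple to compute from the benchmark table. The short script below hard-codes the table's numbers; the dictionary layout and the `speedup` helper are illustrative choices, not part of any benchmarking tool.

```python
# Benchmark figures from the table (tokens per second).
results = {
    ("8B", "Q4_K_M"): {"generation": 108.07, "processing": 4004.14},
    ("8B", "F16"): {"generation": 47.15, "processing": 4690.5},
    ("70B", "Q4_K_M"): {"generation": 16.29, "processing": 393.89},
}


def speedup(a, b, metric):
    """How many times faster configuration a is than configuration b on one metric."""
    return results[a][metric] / results[b][metric]


for metric in ("generation", "processing"):
    big_vs_small = speedup(("8B", "Q4_K_M"), ("70B", "Q4_K_M"), metric)
    quant_vs_f16 = speedup(("8B", "Q4_K_M"), ("8B", "F16"), metric)
    print(f"{metric:10}  8B vs 70B (Q4_K_M): {big_vs_small:5.2f}x   "
          f"8B Q4_K_M vs F16: {quant_vs_f16:4.2f}x")
```

A ratio below 1 means the first configuration is slower: for the 8B model, Q4_K_M generates about 2.3 times faster than F16 but processes prompts somewhat slower.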

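Quantization matters too. Quantization stores each weight with fewer bits, a bit like compressing a video: the file gets smaller and streams faster, but some detail might be lost. "F16" keeps the weights as 16-bit floating-point numbers, which is effectively the unquantized baseline, while "Q4_K_M" is a 4-bit format from llama.cpp that averages roughly 4 to 5 bits per weight. For the 8B model, Q4_K_M makes generation about 2.3 times faster (108.07 vs. 47.15 tokens/second), most likely because producing each token means reading every weight from memory, and smaller weights mean less data to move. Prompt processing behaves differently: F16 is slightly faster there (4690.5 vs. 4004.14 tokens/second), plausibly because reading a whole prompt at once is limited more by raw computation than by memory traffic, and 4-bit weights must be unpacked before use. The 70B model appears only at Q4_K_M because at F16 its weights alone would need roughly 140 GB, far more than the 48 GB the two cards provide. The price of heavier quantization is a possible loss of output quality.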
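Why would smaller weights speed up generation? A common rule of thumb is that single-stream generation is memory-bandwidth bound: each new token requires reading roughly all the weights once, so the ceiling is about bandwidth ÷ weight size. The sketch below checks the measured numbers against that ceiling. It assumes the RTX 3090's memory bandwidth of about 936 GB/s, assumes the layers are split across the two cards and run one after another (so only one card's bandwidth is in use at a time), and reuses the approximate weight sizes from nominal parameter counts; KV-cache reads and kernel overheads are ignored. The benchmark itself does not state how the model was split.

```python
# Back-of-envelope ceiling for memory-bound generation:
#   max tokens/s ~= memory bandwidth / bytes of weights read per token.
# Assumptions: ~936 GB/s per RTX 3090; layers split across cards and executed
# sequentially, so the effective bandwidth is that of one card; ~4.85 bits per
# weight for Q4_K_M; KV-cache traffic and overheads ignored.

BANDWIDTH_GB_S = 936.0


def weight_size_gb(params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in decimal gigabytes."""
    return params * bits_per_weight / 8 / 1e9


def generation_ceiling(size_gb: float, bandwidth_gb_s: float = BANDWIDTH_GB_S) -> float:
    """Upper bound on tokens/second if every token reads all weights once."""
    return bandwidth_gb_s / size_gb


# (parameters, bits per weight, measured generation tokens/s from the table)
measured = {
    ("8B", "Q4_K_M"): (8e9, 4.85, 108.07),
    ("8B", "F16"): (8e9, 16.0, 47.15),
    ("70B", "Q4_K_M"): (70e9, 4.85, 16.29),
}

for (model, quant), (params, bpw, tok_s) in measured.items():
    ceiling = generation_ceiling(weight_size_gb(params, bpw))
    print(f"{model:4} {quant:7} ceiling ~{ceiling:4.0f} tok/s, "
          f"measured {tok_s} ({tok_s / ceiling:.0%} of ceiling)")
```

Each measured generation speed lands below its estimated ceiling, which is consistent with the idea that shrinking the weights is what makes Q4_K_M generate faster. Prompt processing does not follow this rule, because it reuses each weight across many prompt tokens at once.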

Analyzing the Trade-off: Size vs. Speed

Here's the million-dollar question: when should you go for the big, powerful model (Llama 3 70B), and when should you stick with the smaller, quicker one (Llama 3 8B)?

- If your priority is speed and you don't need the most complex language understanding capabilities, the Llama 3 8B model is a fantastic choice. It's a versatile workhorse that can handle many tasks, including generating simple text, translating languages, and providing basic summarization.

- If accuracy and advanced capabilities are paramount, then Llama 3 70B might be the better option. It excels at complex tasks, like generating creative text formats, engaging in nuanced conversations, and performing more specialized language tasks.

Remember, this is a balance. If you need to generate text quickly on a budget, the 8B model is the clear winner. If you need a model that can handle complex tasks and generate creative text, the 70B model is worth considering, but be prepared to wait longer: in this benchmark it generated about 16 tokens per second, versus about 108 for the 8B model at the same quantization.
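To see what those rates mean for a user, you can estimate the time for one chat turn as prompt tokens ÷ processing speed plus output tokens ÷ generation speed. The sketch below uses the table's measured speeds; the 2,000-token prompt and 500-token reply are hypothetical sizes, and real speeds also drift as the context grows, so treat the results as rough.

```python
# Estimate the latency of one chat turn: ingest the prompt, then generate the reply.
# Speeds are the measured tokens/second from the benchmark table.
# The prompt and reply sizes below are hypothetical example values.

SPEEDS = {
    # name: (processing tokens/s, generation tokens/s)
    "Llama 3 8B Q4_K_M": (4004.14, 108.07),
    "Llama 3 8B F16": (4690.5, 47.15),
    "Llama 3 70B Q4_K_M": (393.89, 16.29),
}


def turn_latency_s(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Seconds to read the prompt plus seconds to generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


PROMPT_TOKENS = 2000  # hypothetical
OUTPUT_TOKENS = 500   # hypothetical

for name, (pp, tg) in SPEEDS.items():
    seconds = turn_latency_s(PROMPT_TOKENS, OUTPUT_TOKENS, pp, tg)
    print(f"{name:20} ~{seconds:5.1f} s")
```

With these sizes the 8B model at Q4_K_M answers in roughly 5 seconds, while the 70B model takes over 35 seconds, almost all of it spent generating the reply.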

Practical Use Cases: Finding the Right Fit

Llama 3 8B is a great choice for:

- Real-time applications like chatbots, interactive writing tools, or quick text summarization tools.

- Resource-constrained devices, especially if you are working with a limited amount of memory or processing power.

- Educational purposes, where you can use it to explore the basics of LLMs and natural language processing without overwhelming your system.

Llama 3 70B shines in:

- Creative writing, generating diverse, detailed, and imaginative content.

- Advanced language tasks, such as complex translations, question answering, and code generation.

- High-end applications where processing time is less of a constraint and accuracy is paramount.

Conclusion: Picking Your LLM Champion

Choosing the right LLM is like picking the right tool for the job. Do you need a sleek, nimble pocket knife, or a powerful, multi-functional Swiss Army knife?

The smaller Llama 3 8B model is your pocket knife – it's nimble, quick, and perfect for everyday tasks. The larger Llama 3 70B is the Swiss Army knife – it's powerful, versatile, and ideal for complex projects.

Remember to consider your needs and resource constraints before making your final decision. Whether you're a seasoned developer or a curious novice, understanding the capabilities of these models is crucial to harnessing the transformative power of LLMs.

FAQ

Q: What is "quantization" and how does it impact LLM performance?

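A: Quantization stores each of the model's parameters with fewer bits. F16 uses 16 bits per weight, while a 4-bit format such as Q4_K_M rounds each weight to one of a small set of values and uses roughly 4 to 5 bits on average. Imagine a massive library where every book is a parameter: quantization is like abridging each book. The library takes up far less space and you can get through it faster, but you might miss some details. In practice, quantization cuts memory use and speeds up token generation, at the cost of some accuracy.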
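To make the idea concrete, here is a toy version of 4-bit block quantization: a block of weights is stored as small signed integers plus one shared scale factor, and multiplied back out when needed. This is a simplified illustration written for this article; real formats such as llama.cpp's Q4_K_M are more elaborate, and the weight values below are made up.

```python
# Toy symmetric 4-bit block quantization, for illustration only.
# Real formats (e.g. llama.cpp's Q4_K_M) are more elaborate; the core idea of
# "small integers plus a per-block scale" is what this sketch demonstrates.


def quantize_block(weights, bits=4):
    """Map floats to signed integers in [-2**(bits-1), 2**(bits-1) - 1] with one shared scale."""
    qmax = 2 ** (bits - 1) - 1   # 7 for 4 bits
    qmin = -(2 ** (bits - 1))    # -8 for 4 bits
    amax = max(abs(w) for w in weights)
    scale = amax / qmax if amax > 0 else 1.0  # avoid dividing by zero for an all-zero block
    q = [max(qmin, min(qmax, round(w / scale))) for w in weights]
    return q, scale


def dequantize_block(q, scale):
    """Recover approximate floats from the stored integers and the scale."""
    return [v * scale for v in q]


weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.0, 0.45]  # made-up values
q, scale = quantize_block(weights)
restored = dequantize_block(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q)
print(f"scale={scale:.4f}, max error={max_error:.4f}")
```

Each weight now needs only 4 bits plus a share of the block's scale, and because values are rounded to the nearest step, no weight is off by more than half a step. That rounding error is the "lost detail" in the library analogy.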

Q: Can I run Llama 3 on a regular laptop or desktop?

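A: Yes, you can! The 8B model runs on fairly modest hardware: at 4-bit quantization its weights take up only around 5 GB. Llama 3 70B is far more demanding: even at 4-bit quantization its weights need roughly 40 GB or more, which exceeds the memory of a single 24 GB consumer GPU. That is why this benchmark uses two RTX 3090s; on a typical laptop or desktop the 70B model would need a lot of system memory and would run much more slowly.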

Q: Can I use these models for commercial applications?

A: It depends on the model's licensing terms. Make sure to check the specific license details for each LLM you use.

Q: Are there other LLMs available besides Llama 3?

A: Yes, there are many other LLMs out there, like GPT-3, BERT, and BLOOM. Each model differs in its capabilities, training data, and licensing. It's a constantly evolving and exciting field!

Q: How do I choose the right LLM for my needs?

A: Start by defining your goals. Do you need the fastest model, the most accurate model, or a balanced combination? Then, research the available models and see which aligns with your requirements.

Keywords

Llama 3, LLM, 8B, 70B, NVIDIA 3090 24GB x2, GPU, token speed, quantization, performance, benchmark analysis, use cases, natural language model, AI, machine learning, deep learning.