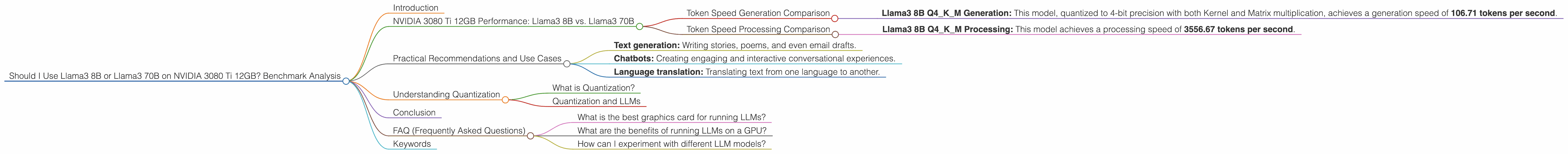

Should I Use Llama3 8B or Llama3 70B on NVIDIA 3080 Ti 12GB? Benchmark Analysis

Introduction

The world of large language models (LLMs) is buzzing with excitement, and for good reason! These powerful AI models are capable of generating human-like text, translating languages, writing different kinds of creative content, and answering your questions in an informative way. But with so many LLMs available, how do you decide which one is right for you, especially when running them on a specific device?

In this article, we'll dive into the comparison of Llama3 8B and Llama3 70B running on the popular NVIDIA 3080 Ti 12GB graphics card. We'll analyze their performance using benchmark data, discuss their strengths and weaknesses, and provide practical recommendations for different use cases. So, buckle up, geeks, it's time to explore the fascinating world of LLMs!

NVIDIA 3080 Ti 12GB Performance: Llama3 8B vs. Llama3 70B

Token Generation Speed Comparison

Let's cut to the chase: the NVIDIA 3080 Ti 12GB can handle Llama3 8B quite well, but there's no data available for Llama3 70B on this specific device.

- Llama3 8B Q4KM Generation: This model, quantized with the 4-bit Q4KM scheme (written Q4_K_M in llama.cpp, where K marks the "K-quant" family and M its medium variant), achieves a generation speed of 106.71 tokens per second.

What does this mean for you? Llama3 8B can churn out text at a pretty good clip on the 3080 Ti 12GB: over 100 tokens per second, far faster than anyone reads. We can't say the same for Llama3 70B. The bigger model's performance is a mystery on this particular GPU.

Token Processing Speed Comparison

- Llama3 8B Q4KM Processing: This model achieves a prompt processing speed of 3556.67 tokens per second.

What does this mean for you? Llama3 8B is pretty darn fast at processing text on the 3080 Ti 12GB. Processing here means reading your prompt: the model evaluates every input token before it produces its first output token. Input tokens can be handled in parallel, which is why this figure is so much higher than the generation speed. While we don't have data for Llama3 70B on the same device, a larger model does more computation per token, so expect it to process text more slowly.
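To turn the two benchmark figures into something more tangible, here is a minimal sketch that estimates how long a single response might take. It assumes both rates stay constant regardless of prompt length, which is a simplification (real throughput tends to drop as the context grows). The function name and the example token counts are made up for illustration.

```python
# Benchmark figures quoted in this article (Llama3 8B Q4KM on the 3080 Ti 12GB).
GENERATION_TPS = 106.71   # tokens per second when producing output
PROCESSING_TPS = 3556.67  # tokens per second when reading the prompt


def response_time_seconds(prompt_tokens, output_tokens,
                          processing_tps=PROCESSING_TPS,
                          generation_tps=GENERATION_TPS):
    """Estimate total time: read the whole prompt first, then generate.

    Assumption: both rates are constant, which ignores slowdowns
    at long context lengths.
    """
    prompt_time = prompt_tokens / processing_tps
    generation_time = output_tokens / generation_tps
    return prompt_time + generation_time


# Hypothetical request: a 1000-token prompt and a 300-token answer.
total = response_time_seconds(prompt_tokens=1000, output_tokens=300)
print(f"Estimated response time: {total:.2f} s")
```

For this hypothetical request the estimate comes out at roughly 3 seconds, almost all of it spent on generation rather than on reading the prompt.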

Practical Recommendations and Use Cases

So, what does this all mean? If you are using an NVIDIA 3080 Ti 12GB, you can confidently run Llama3 8B and enjoy decent performance, particularly for text processing. You can use it for:

- Text generation: Writing stories, poems, and even email drafts.

- Chatbots: Creating engaging and interactive conversational experiences.

- Language translation: Translating text from one language to another.

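However, we would suggest caution when choosing Llama3 70B on the 3080 Ti 12GB. There is no benchmark data for it, and there is a likely reason: even at 4 bits per parameter, 70 billion parameters take roughly 35 GB, about three times the card's 12 GB of memory. The model would have to run partly from system RAM, which costs a lot of speed. Before committing to it, you might want to consider alternative devices or explore smaller model options.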
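A quick way to sanity-check whether a model is likely to fit on a card is to estimate the size of its weights from the parameter count and the bits used per parameter. The sketch below is a rough estimate under stated assumptions: nominal parameter counts of 8 and 70 billion, exactly 4 bits per weight (real 4-bit files are somewhat larger because they also store scaling data), and a guessed 1.5 GB of headroom for context and runtime buffers. The function names are hypothetical.

```python
def weight_size_gb(n_params, bits_per_weight):
    """Approximate size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits_in_vram(n_params, bits_per_weight, vram_gb, overhead_gb=1.5):
    """True if weights plus an assumed overhead fit in GPU memory.

    overhead_gb is a rough guess for context and buffers, not a measured value.
    """
    return weight_size_gb(n_params, bits_per_weight) + overhead_gb <= vram_gb


VRAM_GB = 12.0  # NVIDIA 3080 Ti 12GB

# Nominal parameter counts (assumption: exact counts differ slightly).
for name, n_params in [("Llama3 8B", 8e9), ("Llama3 70B", 70e9)]:
    size = weight_size_gb(n_params, 4.0)
    ok = fits_in_vram(n_params, 4.0, VRAM_GB)
    print(f"{name}: ~{size:.1f} GB of weights at 4 bits, fits in 12 GB: {ok}")
```

Under these assumptions the 8B model needs about 4 GB and fits comfortably, while the 70B model needs about 35 GB and does not.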

Understanding Quantization

What is Quantization?

Imagine you have a photo with millions of colors, but you need to compress it to reduce its size. You might use a technique called quantization where you reduce the number of colors used in the image. While the quality might be slightly reduced, you save storage space.

Quantization and LLMs

The same principle applies to LLMs. When you quantize an LLM, you reduce the number of bits used to represent the model's parameters, resulting in a smaller model size.

In our case, Llama3 8B Q4KM means that the model is quantized to roughly 4 bits per parameter. Four bits can encode only 16 distinct values, so this is like using a 16-color palette instead of millions of colors for our image. It reduces the model's size and memory requirements, at the cost of a slight loss in output quality.
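To make the idea concrete, here is a toy sketch of 4-bit quantization: it maps a handful of floating-point values onto 16 integer levels and back again. This is not the Q4KM algorithm itself, which is more elaborate (it works on small blocks of weights with their own scaling data). The function names and sample weights are invented for illustration.

```python
def quantize_4bit(values):
    """Map floats to integer codes 0..15 using one shared scale and offset."""
    lo, hi = min(values), max(values)
    # 16 levels means 15 steps between the smallest and largest value.
    # Fall back to 1.0 if all values are equal, to avoid dividing by zero.
    scale = (hi - lo) / 15 or 1.0
    codes = [round((v - lo) / scale) for v in values]
    return codes, scale, lo


def dequantize_4bit(codes, scale, lo):
    """Recover approximate floats from the 4-bit codes."""
    return [lo + c * scale for c in codes]


# Made-up example weights.
weights = [-0.62, -0.10, 0.03, 0.27, 0.55, 0.91]
codes, scale, lo = quantize_4bit(weights)
restored = dequantize_4bit(codes, scale, lo)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("4-bit codes:", codes)
print("restored:   ", [round(r, 3) for r in restored])
print(f"largest rounding error: {max_error:.4f}")
```

Each weight is now stored as a number from 0 to 15, and the restored values are close to the originals but not identical. That small rounding error is the quality cost of quantization.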

Conclusion

Choosing the right LLM for your device is crucial. On the NVIDIA 3080 Ti 12GB, Llama3 8B performs well, at about 107 tokens per second for generation and about 3,557 for processing, but we don't have data for Llama3 70B's performance on this device. The takeaway is to consider model size and device capabilities, memory in particular, together.

Remember, smaller models might be quicker and more efficient, while larger models offer more potential for complex tasks. The key is to find the right balance for your needs!

FAQ (Frequently Asked Questions)

What is the best graphics card for running LLMs?

The 'best' graphics card depends on the size of the LLM you want to run. For larger models like Llama3 70B, you need far more GPU memory: even a 24 GB card like the NVIDIA RTX 4090 cannot hold a 4-bit 70B model entirely, so expect to split it across multiple GPUs or offload part of it to system RAM. For smaller models like Llama3 8B, a card like the 3080 Ti 12GB could be sufficient.

What are the benefits of running LLMs on a GPU?

GPUs are highly optimized for parallel processing, which makes them ideal for the large number of calculations required by LLMs. This allows for faster token generation and processing than running the same model on a CPU.

How can I experiment with different LLM models?

You can find pre-trained LLMs on platforms like Hugging Face (https://huggingface.co/). Additionally, tools like llama.cpp (https://github.com/ggerganov/llama.cpp) offer convenient ways to run these models locally.

Keywords

Llama3, 8B, 70B, NVIDIA 3080 Ti 12GB, GPU, performance, benchmark, token speed, generation, processing, quantization, LLM, text generation, chatbot, language translation, Hugging Face, llama.cpp