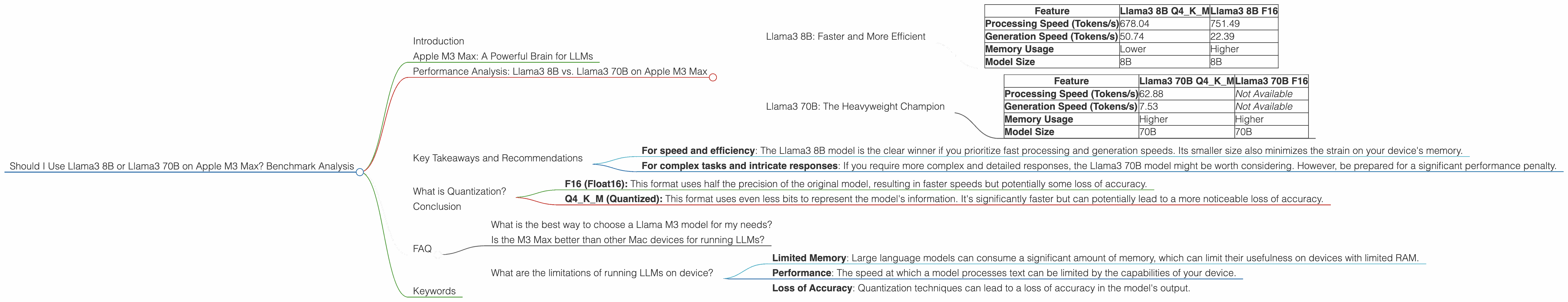

Should I Use Llama3 8B or Llama3 70B on Apple M3 Max? Benchmark Analysis

Introduction

Large Language Models (LLMs) are evolving rapidly, with new models emerging constantly. Among the most notable are the Llama models developed by Meta AI, which are widely used for text generation, translation, and question answering. Running these models locally, however, means working within your device's performance and memory limits. This article compares two Llama 3 models, Llama3 8B and Llama3 70B, on the Apple M3 Max chip. We look at their strengths and weaknesses and offer practical recommendations based on benchmark data to help you choose the model that best suits your needs.

Apple M3 Max: A Powerful Brain for LLMs

The Apple M3 Max chip is a beast of a processor. Designed for demanding tasks like video editing, 3D rendering, and yes, even running large language models like Llama, the M3 Max offers powerful performance and efficiency. It pairs its CPU cores with an integrated GPU, and both share a single pool of unified memory, so large model weights do not have to be copied into separate video memory. That makes it well suited to running large AI models.

Performance Analysis: Llama3 8B vs. Llama3 70B on Apple M3 Max

Let's dive into the numbers and compare the two Llama 3 models on the M3 Max. Our primary metric is tokens per second, reported as two figures: processing speed (how fast the model reads the input text) and generation speed (how fast it produces new text).
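
To make the metric concrete, here is a minimal sketch of how a tokens-per-second figure can be computed. The `measure` helper and the stand-in workload are hypothetical and only illustrate the arithmetic; they are not the harness that produced the numbers in this article.

```python
import time

def tokens_per_second(token_count: int, elapsed_seconds: float) -> float:
    """Throughput: tokens handled divided by wall-clock seconds."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return token_count / elapsed_seconds

def measure(run, token_count: int) -> float:
    """Time a callable that handles `token_count` tokens; return tokens/s."""
    start = time.perf_counter()
    run()
    elapsed = time.perf_counter() - start
    return tokens_per_second(token_count, elapsed)

# Stand-in workload (hypothetical): a real run would call the model here.
rate = measure(lambda: time.sleep(0.05), token_count=100)
print(f"{rate:.0f} tokens/s")
```

A real benchmark would time prompt processing and generation separately, since the two figures differ widely.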

Llama3 8B: Faster and More Efficient

| Metric | Llama3 8B Q4KM | Llama3 8B F16 |
|---|---|---|
| Processing Speed (tokens/s) | 678.04 | 751.49 |
| Generation Speed (tokens/s) | 50.74 | 22.39 |
| Memory Usage | Lower | Higher |
| Model Size (parameters) | 8B | 8B |

The Llama3 8B model, with its smaller size, shines in terms of speed and efficiency.

- Processing Speed: It processes text at a rate of 678.04 tokens/second in Q4KM format and 751.49 tokens/second in F16 format. This means it can tackle a lot of text quickly.

- Generation Speed: While it's not as fast as the Llama 7B model in generation, it still generates 50.74 tokens/second in Q4KM format and 22.39 tokens/second in F16 format. The quantized format is therefore more than twice as fast at generation, and both speeds are usable for generating responses and content.

- Memory Usage: Llama3 8B uses less memory than the 70B model, making it a better choice for devices with limited RAM.

Llama3 70B: The Heavyweight Champion

| Metric | Llama3 70B Q4KM | Llama3 70B F16 |
|---|---|---|
| Processing Speed (tokens/s) | 62.88 | Not Available |
| Generation Speed (tokens/s) | 7.53 | Not Available |
| Memory Usage | Higher | Higher |
| Model Size (parameters) | 70B | 70B |

The Llama3 70B model is the powerhouse with its massive size, but it runs far slower on the M3 Max.

- Processing Speed: The 70B model processes text at a rate of 62.88 tokens/second in Q4KM format. That is about 10.8 times slower than the 8B model in the same format (678.04 tokens/second). No F16 processing data is available for this combination.

- Generation Speed: The 70B model generates text at a rate of 7.53 tokens/second in Q4KM format, about 6.7 times slower than the 8B model (50.74 tokens/second). Again, there is no data for the F16 format.

- Memory Usage: The 70B model demands a significant amount of memory. This might make it unsuitable for devices with limited RAM.
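
The memory demand can be estimated with simple arithmetic: parameter count times bits per weight, divided by 8 to get bytes. The sketch below assumes 16 bits per weight for F16 and an average of about 4.85 bits per weight for Q4KM. The Q4KM value is an approximation chosen for illustration and does not come from the benchmark data. The function name `weight_memory_gb` is likewise hypothetical.

```python
# Assumed average bits per weight. 16 is exact for F16; 4.85 for Q4KM is an
# approximation used for illustration, not a figure from the benchmark.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4KM": 4.85}

def weight_memory_gb(params_billions: float, fmt: str) -> float:
    """Approximate size of the weights alone, in GB (10^9 bytes)."""
    bits = BITS_PER_WEIGHT[fmt]
    # billions of parameters * bytes per weight = gigabytes
    return params_billions * bits / 8

for params in (8, 70):
    for fmt in ("Q4KM", "F16"):
        size = weight_memory_gb(params, fmt)
        print(f"Llama3 {params}B {fmt}: ~{size:.1f} GB of weights")
```

Under these assumptions the 70B weights in F16 come to about 140 GB, more than the 128 GB of unified memory available in the largest M3 Max configuration. That may be why no F16 figures exist for the 70B model, although the source does not state the reason. Actual memory use is higher than the weights alone, because the context cache and runtime buffers also need space.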

Key Takeaways and Recommendations

The choice between Llama3 8B and Llama3 70B on the Apple M3 Max depends on your specific needs.

- For speed and efficiency: The Llama3 8B model is the clear winner if you prioritize fast processing and generation speeds. Its smaller size also minimizes the strain on your device's memory.

- For complex tasks and intricate responses: If you require more complex and detailed responses, the Llama3 70B model might be worth considering. However, be prepared for a significant performance penalty: in Q4KM format it generates 7.53 tokens/second, versus 50.74 tokens/second for the 8B model.
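
To see what the trade-off means for a single request, the benchmark figures from the tables above can be turned into a rough response-time estimate. This is a sketch under simplifying assumptions: both speeds stay constant regardless of prompt length, and model load time is ignored. The function name `response_seconds` and the example token counts are hypothetical.

```python
# Benchmark figures from the tables above, in tokens/second.
SPEEDS = {
    "Llama3 8B Q4KM": {"processing": 678.04, "generation": 50.74},
    "Llama3 8B F16": {"processing": 751.49, "generation": 22.39},
    "Llama3 70B Q4KM": {"processing": 62.88, "generation": 7.53},
}

def response_seconds(model: str, prompt_tokens: int, output_tokens: int) -> float:
    """Estimate time to read the prompt plus time to generate the reply."""
    speed = SPEEDS[model]
    return (prompt_tokens / speed["processing"]
            + output_tokens / speed["generation"])

# Example request: a 500-token prompt and a 300-token answer.
for model in SPEEDS:
    seconds = response_seconds(model, prompt_tokens=500, output_tokens=300)
    print(f"{model}: ~{seconds:.1f} s")
```

For this example request the estimate is under 7 seconds for Llama3 8B Q4KM and close to 48 seconds for Llama3 70B Q4KM, with generation accounting for most of the time in both cases.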

What is Quantization?

Quantization is like simplifying a complex language model into a more compact and efficient version. Imagine you have a large dictionary with thousands of words, each with a detailed definition. Quantization is like replacing those detailed definitions with smaller, simpler descriptions. In practice, it means storing each of the model's weights with fewer bits. The model may not be as accurate or nuanced, but it uses less memory and often runs faster, making it ideal for devices with limited resources.

Common Quantization Formats:

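- F16 (Float16): This format stores each weight as a 16-bit floating-point number, half the width of a 32-bit float. It keeps more precision than Q4KM but needs more memory. In the 8B benchmark above it is slightly faster at processing but less than half as fast at generation.

- Q4KM (Quantized): This format (written Q4_K_M in llama.cpp) uses far fewer bits per weight, roughly 4 instead of 16. It needs much less memory and generated text more than twice as fast as F16 in the 8B benchmark above, but it can lead to a more noticeable loss of accuracy.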
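
The idea behind quantization can be illustrated with a toy example. The sketch below rounds a small block of weights to 4-bit signed integers that share one scale factor, then converts them back to show the rounding error. It is a simplified symmetric scheme for illustration only, not the actual Q4KM algorithm, and the function names and sample weights are made up.

```python
def quantize_block(weights, bits=4):
    """Map floats to signed integers that share one scale (symmetric scheme)."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4 bits
    largest = max(abs(w) for w in weights)
    scale = largest / qmax if largest > 0 else 1.0
    # Round each weight to the nearest step and clamp to the integer range.
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale

def dequantize_block(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]

weights = [0.12, -0.70, 0.33, 0.04, -0.21, 0.64]
codes, scale = quantize_block(weights)
restored = dequantize_block(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("integer codes:", codes)
print("restored:", [round(r, 2) for r in restored])
print(f"largest round-trip error: {max_error:.3f}")
```

Each weight now needs only 4 bits plus a share of the block's scale factor, and the round-trip error is bounded by half a quantization step. That bound is the accuracy cost described above.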

Conclusion

Choosing the right Llama 3 model for your Apple M3 Max depends on your specific needs and priorities. The Llama3 8B model offers a great balance of speed, efficiency, and accuracy, making it an excellent choice for general-purpose tasks. The Llama3 70B model provides more power and complexity but comes with a significant performance trade-off. By understanding the strengths and weaknesses of each model, you can make an informed decision and unlock the power of LLMs on your M3 Max device.

FAQ

What is the best way to choose a Llama 3 model for my needs?

The best way to choose a Llama 3 model is to consider your specific needs and priorities. If you prioritize speed and efficiency, the Llama3 8B model is a great choice. If you need more complex and detailed responses, the Llama3 70B model might be worth considering.

Is the M3 Max better than other Mac chips for running LLMs?

The M3 Max is one of the most powerful Mac chips on the market, making it a great choice for running large language models. However, other chips like the M2 Pro and M2 Max also deliver impressive performance.

What are the limitations of running LLMs on device?

Running LLMs on device comes with limitations, including:

- Limited Memory: Large language models can consume a significant amount of memory, which can limit their usefulness on devices with limited RAM.

- Performance: The speed at which a model processes and generates text is limited by the capabilities of your device. Even on the M3 Max, Llama3 70B generates only 7.53 tokens/second in Q4KM format.

- Loss of Accuracy: Quantization techniques can lead to a loss of accuracy in the model's output.
