Should I Use Llama3 8B or Llama3 70B on Apple M1 Max? Benchmark Analysis

Introduction

The world of large language models (LLMs) is buzzing with excitement, and for good reason! These powerful AI systems are making waves in various fields, from generating creative content to translating languages and even writing code. But with so many LLMs and devices out there, choosing the right combination can be a daunting task. Today, we're diving deep into the exciting world of Apple's M1 Max chip and two popular LLMs: Llama 3 8B and Llama 3 70B.

Imagine you're trying to build a chatbot that can engage in witty banter or a code generator that can write lines of Python code. You're excited to bring these ideas to life, but you're also a little overwhelmed by the sheer number of options. Fear not, dear reader! This article will guide you through the intricate details of these models, comparing their performance on the M1 Max to help you make the best choice for your project. We'll analyze their strengths and weaknesses, and ultimately, help you decide which LLM is the best fit for your unique needs.

Think of this article as your guide to running LLMs on the M1 Max: a journey filled with insights, benchmarks, and hopefully, a little bit of fun!

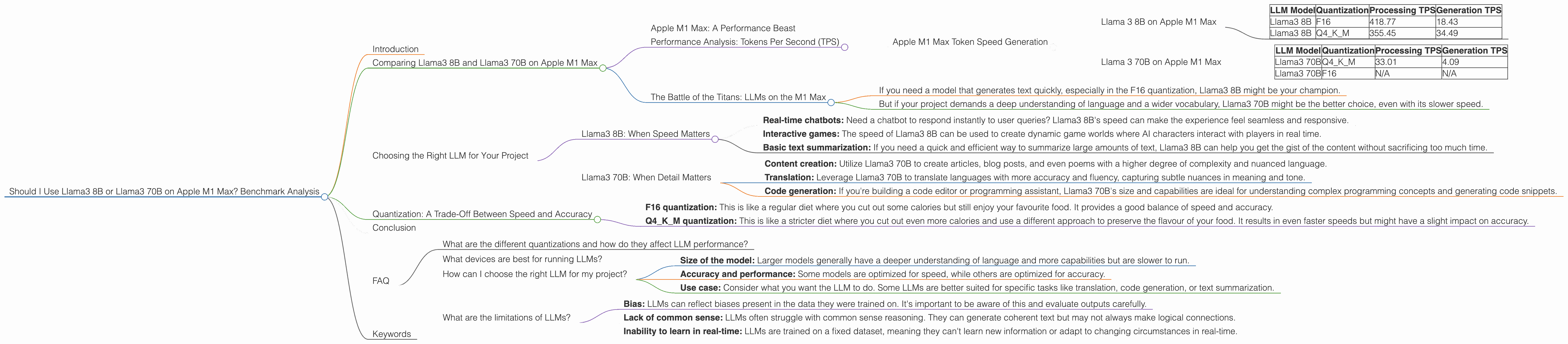

Comparing Llama3 8B and Llama3 70B on Apple M1 Max

Apple M1 Max: A Performance Beast

The Apple M1 Max is a powerful chip designed for professionals and enthusiasts alike. It offers up to 32 GPU cores and 400 GB/s of memory bandwidth, making it a formidable contender for tackling complex tasks like running LLMs.

Performance Analysis: Tokens Per Second (TPS)

Apple M1 Max Token Generation Speed

Let's get down to the nitty-gritty. We'll compare Llama3 8B and Llama3 70B on the M1 Max by the tokens per second (TPS) they achieve in two phases: processing TPS (how fast the model reads your prompt) and generation TPS (how fast it produces new tokens).

Higher TPS means a faster model, enabling quicker generation of text, which can be crucial for interactive applications. Think of it like this: imagine you're playing a video game where the speed of your character depends on how fast your computer can process information. A higher TPS means your character zips around the screen, while a lower TPS leads to sluggish movements and lag.
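To make TPS more tangible, here is a minimal sketch that turns throughput into a rough response time. The function name and the workload (a 500-token prompt and a 200-token reply) are hypothetical, chosen only for illustration; the throughput figures are the Q4KM rows of the tables that follow. It ignores model load time and any other overhead.

```python
def estimate_latency_s(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Rough end-to-end time: read the prompt, then generate the reply."""
    prompt_time = prompt_tokens / processing_tps
    generation_time = output_tokens / generation_tps
    return prompt_time + generation_time


# Hypothetical workload: 500-token prompt, 200-token reply.
# Throughput values are the Q4KM benchmark figures from this article.
latency_8b = estimate_latency_s(500, 200, processing_tps=355.45, generation_tps=34.49)
latency_70b = estimate_latency_s(500, 200, processing_tps=33.01, generation_tps=4.09)

print(f"Llama3 8B Q4KM:  about {latency_8b:.0f} s")
print(f"Llama3 70B Q4KM: about {latency_70b:.0f} s")
```

Under these assumptions the 8B model answers in roughly 7 seconds and the 70B model in roughly 64 seconds, with most of the time spent in generation rather than prompt processing.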

Llama 3 8B on Apple M1 Max

| LLM Model | Quantization | Processing TPS | Generation TPS |
|---|---|---|---|
| Llama3 8B | F16 | 418.77 | 18.43 |
| Llama3 8B | Q4KM | 355.45 | 34.49 |

Llama 3 70B on Apple M1 Max

| LLM Model | Quantization | Processing TPS | Generation TPS |
|---|---|---|---|
| Llama3 70B | Q4KM | 33.01 | 4.09 |
| Llama3 70B | F16 | N/A | N/A |

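Important Note: There is no performance data available for Llama3 70B in F16 on the Apple M1 Max. That is not surprising: at 16 bits per weight, a 70-billion-parameter model needs roughly 140 GB for its weights alone, far more than the 64 GB of unified memory the M1 Max tops out at.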
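A quick back-of-the-envelope check shows how much memory each configuration needs for its weights. This is a sketch under stated assumptions: the parameter counts are the nominal 8 and 70 billion, and the 4.85 bits per weight used for Q4KM is an assumed average, not a figure from the benchmark. The estimate also leaves out the KV cache and everything else that shares memory with the model.

```python
M1_MAX_MAX_MEMORY_GB = 64  # largest unified-memory configuration of the M1 Max


def weight_memory_gb(n_params_billion, bits_per_weight):
    """Approximate size of the weights alone, in GB (10^9 bytes)."""
    return n_params_billion * bits_per_weight / 8


configs = [
    # (label, nominal parameters in billions, bits per weight)
    ("Llama3 8B F16", 8, 16),
    ("Llama3 8B Q4KM", 8, 4.85),    # 4.85 bits/weight is an assumption
    ("Llama3 70B Q4KM", 70, 4.85),  # 4.85 bits/weight is an assumption
    ("Llama3 70B F16", 70, 16),
]

for label, params_b, bits in configs:
    size = weight_memory_gb(params_b, bits)
    verdict = "fits" if size <= M1_MAX_MAX_MEMORY_GB else "does not fit"
    print(f"{label}: ~{size:.1f} GB of weights, {verdict} in {M1_MAX_MAX_MEMORY_GB} GB")
```

Only the F16 build of the 70B model exceeds the memory ceiling, which matches the missing row in the table.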

The Battle of the Titans: LLMs on the M1 Max

Llama3 8B takes the crown for speed when compared to Llama3 70B, churning out tokens significantly faster in both processing and generation. In Q4KM, the only quantization measured for both models, Llama3 8B achieves about 8 times the Generation TPS of Llama3 70B (34.49 vs. 4.09) and nearly 11 times the Processing TPS (355.45 vs. 33.01).
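The size of the gap can be computed directly from the two Q4KM rows, the only quantization benchmarked for both models. The short sketch below does that arithmetic; the dictionary layout is just one convenient way to hold the numbers.

```python
# Q4KM results from the benchmark tables in this article (tokens per second).
q4km = {
    "Llama3 8B": {"processing": 355.45, "generation": 34.49},
    "Llama3 70B": {"processing": 33.01, "generation": 4.09},
}

ratios = {
    phase: q4km["Llama3 8B"][phase] / q4km["Llama3 70B"][phase]
    for phase in ("processing", "generation")
}

for phase, ratio in ratios.items():
    print(f"{phase}: Llama3 8B is {ratio:.1f}x faster than Llama3 70B")
```

This works out to roughly 10.8x for prompt processing and 8.4x for generation.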

However, this speed comes at a cost. Llama3 8B has far fewer parameters than Llama3 70B, which generally means less stored knowledge, weaker reasoning, and a less intricate understanding of language. (Both models share the same tokenizer and vocabulary, so the gap is one of capability, not vocabulary size.) Think of it like comparing a compact car to a luxury sedan. The compact car is nimble and zippy, but the sedan boasts more space and features.

So, what's the best choice for you? It all depends on your specific needs.

- If you need a model that generates text quickly, Llama3 8B might be your champion, especially in the Q4KM quantization, which nearly doubles its generation speed compared to F16.

- But if your project demands a deeper understanding of language and stronger reasoning, Llama3 70B might be the better choice, even with its slower speed.

Choosing the Right LLM for Your Project

Now that you have data on Llama 3 8B and Llama 3 70B performance, let's dive into the best use cases for each model on the M1 Max:

Llama3 8B: When Speed Matters

Llama3 8B is a great choice for projects where speed is paramount, especially when it comes to text generation. Think about applications like:

- Real-time chatbots: Need a chatbot to respond instantly to user queries? Llama3 8B's speed can make the experience feel seamless and responsive.

- Interactive games: The speed of Llama3 8B can be used to create dynamic game worlds where AI characters interact with players in real time.

- Basic text summarization: If you need a quick and efficient way to summarize large amounts of text, Llama3 8B can help you get the gist of the content without sacrificing too much time.

Llama3 70B: When Detail Matters

Llama3 70B is a powerful model that excels in understanding complex questions and generating creative content. Here are some ideal use cases:

- Content creation: Utilize Llama3 70B to create articles, blog posts, and even poems with a higher degree of complexity and nuanced language.

- Translation: Leverage Llama3 70B to translate languages with more accuracy and fluency, capturing subtle nuances in meaning and tone.

- Code generation: If you're building a code editor or programming assistant, Llama3 70B's size and capabilities are ideal for understanding complex programming concepts and generating code snippets.

Quantization: A Trade-Off Between Speed and Accuracy

Quantization is like a diet for LLMs. It reduces the size of the model by using smaller numbers to represent its weights, making it more efficient and faster to run on devices like the M1 Max.

- F16: Strictly speaking, this is the baseline rather than a diet. Weights are stored as 16-bit floating-point numbers, so accuracy is essentially preserved, but the model stays large and generates tokens more slowly.

- Q4KM quantization: This is the strict diet, where you cut out a lot of calories and use a clever approach to preserve the flavour of your food. Weights are squeezed down to roughly 4 to 5 bits each, which makes the model much smaller and faster at generating text, but might have a slight impact on accuracy.
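To show the basic idea of storing weights with fewer bits, here is a deliberately simplified sketch of 4-bit quantization for one small block of weights. It is not the Q4KM scheme itself, which is more elaborate; the function names and the weight values are made up for illustration.

```python
def quantize_block(weights, bits=4):
    """Symmetric quantization of one block: small integers plus a single scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4 bits
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each weight to the nearest integer step and clamp to the valid range.
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize_block(quantized, scale):
    """Recover approximate weights from the integers and the block scale."""
    return [q * scale for q in quantized]


weights = [0.12, -0.53, 0.98, -0.07, 0.33, -0.91, 0.05, 0.61]  # made-up values
quantized, scale = quantize_block(weights)
restored = dequantize_block(quantized, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("integers:", quantized)
print(f"scale: {scale:.3f}, largest rounding error: {max_error:.3f}")
```

Each weight now costs 4 bits plus a share of the block's scale, and the rounding error stays within half a quantization step. That small, bounded error is the "slight impact on accuracy" traded for a smaller, faster model.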

In the case of Llama3 8B, F16 has the edge in prompt processing (418.77 vs. 355.45 TPS), while Q4KM is almost twice as fast at generation (34.49 vs. 18.43 TPS), at the potential cost of some accuracy.
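One rough way to see why a smaller model file tends to generate tokens faster is a memory-bandwidth estimate. A common rule of thumb, used here as an assumption rather than a measured fact, is that producing each token requires reading all the weights from memory once, so bandwidth divided by model size gives a ceiling on generation TPS. The weight sizes below assume nominal parameter counts and an average of 4.85 bits per weight for Q4KM.

```python
MEMORY_BANDWIDTH_GB_S = 400  # M1 Max memory bandwidth quoted in this article


def generation_ceiling_tps(weights_gb, bandwidth_gb_s=MEMORY_BANDWIDTH_GB_S):
    """Upper bound on tokens/s if every token requires reading all weights once."""
    return bandwidth_gb_s / weights_gb


rows = [
    # (label, assumed weight size in GB, measured generation TPS from this article)
    ("Llama3 8B F16", 8 * 16 / 8, 18.43),
    ("Llama3 8B Q4KM", 8 * 4.85 / 8, 34.49),
    ("Llama3 70B Q4KM", 70 * 4.85 / 8, 4.09),
]

for label, weights_gb, measured in rows:
    ceiling = generation_ceiling_tps(weights_gb)
    print(f"{label}: ceiling ~{ceiling:.0f} TPS, measured {measured} TPS")
```

The measured numbers sit below these ceilings, as expected for a simple upper bound, but the ordering matches: the fewer bytes that have to be read per token, the faster generation gets.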

Conclusion

Choosing the right LLM for your project on the M1 Max is a decision that depends on your specific needs. If speed is your priority, Llama3 8B is the way to go. But if you need a model that can generate nuanced and creative content, Llama3 70B is the better option. Remember, both LLMs are capable and powerful, and the key is to select the one that best aligns with your goals.

FAQ

What are the different quantizations and how do they affect LLM performance?

Quantization is like a diet for LLMs: it helps them slim down and run faster on devices like the M1 Max. Think of it as reducing the precision of the numbers used to represent the model's weights. F16 stores each weight as a 16-bit floating-point number and serves as the high-accuracy baseline, but the resulting model is large. Q4KM goes much further, cutting each weight to roughly 4 to 5 bits, which shrinks the model and speeds up generation while potentially impacting accuracy a little. Ultimately, you need to balance the trade-offs between speed, memory, and accuracy based on your specific needs.

What devices are best for running LLMs?

The best device for running LLMs depends on the size of the model and the desired performance. The model's weights have to fit in memory, so memory capacity decides which models you can run at all, while memory bandwidth and GPU power largely decide how fast they run. Devices like the Apple M1 Max, with up to 64 GB of unified memory and 400 GB/s of bandwidth, are well-suited for larger models. Newer generation devices often have an edge in terms of performance and efficiency. Ultimately, the best device for you will depend on the specific LLM you're using and the nature of your project.

How can I choose the right LLM for my project?

The choice of LLM depends on your project's specific requirements. Consider factors like:

- Size of the model: Larger models generally have a deeper understanding of language and more capabilities but are slower to run.

- Accuracy and performance: Some models are optimized for speed, while others are optimized for accuracy.

- Use case: Consider what you want the LLM to do. Some LLMs are better suited for specific tasks like translation, code generation, or text summarization.
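These factors can be combined into a simple selection rule. The sketch below picks the largest benchmarked configuration that still meets a minimum generation speed. The function name, the speed thresholds, and the tie-breaking rule (prefer the faster quantization among equally sized models) are illustrative assumptions; the TPS figures come from the tables in this article.

```python
CONFIGS = [
    # (model, quantization, nominal parameters in billions, generation TPS)
    ("Llama3 8B", "F16", 8, 18.43),
    ("Llama3 8B", "Q4KM", 8, 34.49),
    ("Llama3 70B", "Q4KM", 70, 4.09),
]


def pick_config(min_generation_tps):
    """Return the largest configuration that meets the speed floor, or None."""
    candidates = [c for c in CONFIGS if c[3] >= min_generation_tps]
    if not candidates:
        return None
    # Prefer more parameters; among equal sizes, prefer higher generation speed.
    return max(candidates, key=lambda c: (c[2], c[3]))


print(pick_config(3))   # a slow, batch-style job tolerates the 70B model
print(pick_config(10))  # an interactive chatbot needs the 8B model
print(pick_config(50))  # nothing benchmarked here is this fast
```

If accuracy matters more than speed within one model size, the tie-breaker could be flipped to prefer F16 instead.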

What are the limitations of LLMs?

Despite their amazing capabilities, LLMs have some limitations:

- Bias: LLMs can reflect biases present in the data they were trained on. It's important to be aware of this and evaluate outputs carefully.

- Lack of common sense: LLMs often struggle with common sense reasoning. They can generate coherent text but may not always make logical connections.

- Inability to learn in real-time: LLMs are trained on a fixed dataset, meaning they can't learn new information or adapt to changing circumstances in real-time.

Keywords

LLMs, Llama3, Llama3 8B, Llama3 70B, Apple M1 Max, performance, benchmarks, quantization, F16, Q4KM, tokens per second, TPS, processing, generation, speed, accuracy, use cases, applications, chatbots, games, code generation, translation, content creation, limitations, bias, real-time, common sense, AI.