Should I Use Llama3 8B or Llama3 70B on Apple M1? Benchmark Analysis

Introduction

The world of large language models (LLMs) is evolving rapidly, with new models and techniques emerging constantly. One popular choice for running LLMs locally is the Apple M1 chip, whose integrated GPU and unified memory (shared by the CPU and GPU) make it efficient for this kind of workload. But when it comes to choosing between model sizes, such as the 8B and 70B versions of Llama 3, the decision involves trade-offs between speed, memory use, and output quality.

This article explores the performance characteristics of Llama 3 8B and Llama 3 70B on the Apple M1 chip. We'll dive into benchmark data, analyzing their strengths and weaknesses to help you choose the right model for your needs.

Apple M1 Token Speed Generation: Llama 3 8B vs. Llama 3 70B

How do the two model sizes perform on this chip? The key metric is token speed: how many tokens per second the model can process (read) or generate (write).

*Unfortunately, our sources do not include benchmark data for Llama 3 70B on the Apple M1, so we cannot compare the two models directly and can only report results for Llama 3 8B.*

Here's what we know about Llama 3 8B on Apple M1.

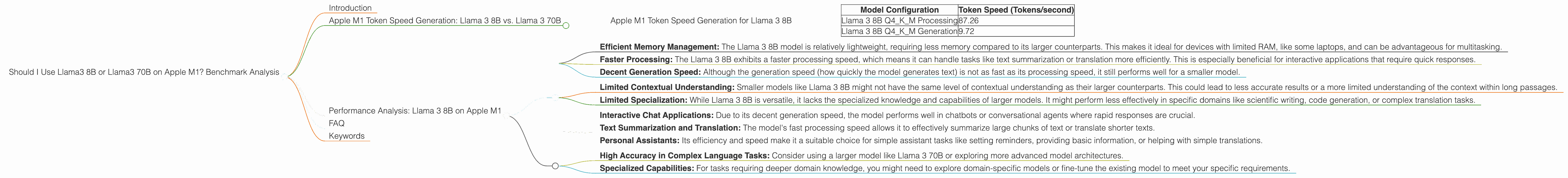

Apple M1 Token Speed Generation for Llama 3 8B

The benchmark reports two token speeds for Llama 3 8B with Q4_K_M quantization: prompt processing (reading the input) and generation (producing new tokens).

| Model configuration | Token speed (tokens/second) |
|---|---|
| Llama 3 8B Q4_K_M, prompt processing | 87.26 |
| Llama 3 8B Q4_K_M, generation | 9.72 |

Remember, these numbers represent the average token speeds under specific configurations. The actual performance might vary depending on the specific task, model configuration (quantization level), and your system's resources.
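The two speeds combine into a rough end-to-end estimate for a single request. The sketch below assumes a hypothetical 500-token prompt and 200-token reply, and that both phases run at the measured average rates; it ignores model loading time and any slowdown as the context grows.

```python
# Measured Llama 3 8B Q4_K_M speeds on Apple M1, from the table above (tokens/second).
PROMPT_TPS = 87.26
GEN_TPS = 9.72


def estimate_latency(prompt_tokens: int, output_tokens: int,
                     prompt_tps: float = PROMPT_TPS,
                     gen_tps: float = GEN_TPS) -> float:
    """Rough time in seconds: ingest the prompt, then generate the reply.

    Assumes both phases run at their average measured rate.
    """
    return prompt_tokens / prompt_tps + output_tokens / gen_tps


if __name__ == "__main__":
    # Hypothetical workload: 500-token prompt, 200-token reply.
    print(f"{estimate_latency(500, 200):.1f} s")  # about 26.3 s
```

Even though the prompt is longer than the reply, generation accounts for roughly 20.6 of the 26.3 seconds, which is why generation speed dominates how responsive the model feels.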

Performance Analysis: Llama 3 8B on Apple M1

The Llama 3 8B model on the Apple M1 demonstrates a good balance between performance and resource consumption.

Here's a breakdown of its strengths and weaknesses.

Strengths:

- Small Memory Footprint: The Llama 3 8B model is relatively lightweight, requiring far less memory than its larger counterparts. At 4-bit quantization it fits within the unified memory of a base M1 Mac (up to 16 GB) while leaving room for other applications, which helps with multitasking.

- Fast Prompt Processing: At about 87 tokens/second, the model reads input roughly nine times faster than it generates output, so input-heavy tasks such as summarizing or translating a document spend relatively little time ingesting the text. This helps interactive applications respond promptly even to long prompts.

- Usable Generation Speed: Generation is much slower than prompt processing, at about 9.7 tokens/second, but that is still fast enough for responses to stream at an interactive pace.
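The gap between processing and generation speed has a common back-of-the-envelope explanation: prompt tokens are processed in parallel batches, while generation produces one token at a time and each step reads essentially all of the model's weights from memory. Memory bandwidth divided by weight size then gives a rough ceiling on generation speed. The sketch below assumes a base M1 bandwidth of about 68.25 GB/s and a Q4_K_M weight file of roughly 4.9 GB; neither figure comes from the benchmark.

```python
# Assumed figures, not part of the benchmark: base M1 memory bandwidth
# (about 68.25 GB/s) and a Llama 3 8B Q4_K_M weight file of roughly 4.9 GB.
M1_BANDWIDTH_GB_S = 68.25
LLAMA3_8B_Q4KM_GB = 4.9
MEASURED_GEN_TPS = 9.72  # generation speed from the benchmark table


def generation_ceiling_tps(bandwidth_gb_s: float, weights_gb: float) -> float:
    """Upper bound on tokens/second if each generated token must read every
    weight from memory once, so memory bandwidth is the bottleneck."""
    return bandwidth_gb_s / weights_gb


ceiling = generation_ceiling_tps(M1_BANDWIDTH_GB_S, LLAMA3_8B_Q4KM_GB)
print(f"ceiling ~{ceiling:.1f} tok/s, measured {MEASURED_GEN_TPS} tok/s "
      f"({MEASURED_GEN_TPS / ceiling:.0%} of the ceiling)")
```

Under these assumptions the ceiling is about 14 tokens/second, and the measured 9.72 sits below it, as expected. Because the ceiling is inversely proportional to weight size, a 70B model with roughly nine times the weights would have roughly a ninth of the ceiling at the same memory bandwidth.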

Weaknesses:

- Weaker Reasoning over Long Passages: Smaller models like Llama 3 8B typically track information across long passages less reliably than their larger counterparts, even with the same context window. This can lead to less accurate answers on tasks that depend on details spread through a long text.

- Limited Specialization: While Llama 3 8B is versatile, it has less capacity for specialized knowledge than larger models. It may perform less effectively in specific domains like scientific writing, code generation, or complex translation tasks.

Practical Recommendations & Use Cases:

The Llama 3 8B on Apple M1 is a great choice for:

- Interactive Chat Applications: At roughly 10 tokens/second, responses stream quickly enough for chatbots or conversational agents where responsiveness matters.

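- Text Summarization and Translation: Fast prompt processing means long inputs are ingested quickly. Summaries are short relative to their source, so the slower generation step matters less; translating long texts, whose output is as long as the input, will take proportionally longer.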

- Personal Assistants: Its efficiency and speed make it a suitable choice for simple assistant tasks like setting reminders, providing basic information, or helping with simple translations.

However, if you require:

- High Accuracy in Complex Language Tasks: Consider using a larger model like Llama 3 70B or exploring more advanced model architectures.

- Specialized Capabilities: For tasks requiring deeper domain knowledge, you might need to explore domain-specific models or fine-tune the existing model to meet your specific requirements.

FAQ

Below are some frequently asked questions about running LLMs on devices like the Apple M1:

Q: What are the benefits of running LLMs on a device like the Apple M1?

A: Running LLMs locally on your device offers privacy (your data never leaves the machine), no network round-trip latency, and offline functionality.

Q: What is quantization, and how does it impact performance?

A: Quantization is a technique used to reduce the size of a model by representing its weights with fewer bits. This can significantly improve performance by reducing the amount of memory required and increasing processing speed. However, it can also result in a slight loss of accuracy.
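To make the idea concrete, here is a toy sketch of symmetric 4-bit block quantization: each block of weights is stored as small integers plus one scale factor. It is a simplified illustration, not the actual Q4_K_M format (a llama.cpp/GGUF scheme that is considerably more elaborate); the block size of 32 and the random toy weights are assumptions chosen for illustration.

```python
import random


def quantize_block(weights, bits=4):
    """Symmetric per-block quantization: one float scale plus a small
    signed integer code per weight."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit codes in [-8, 7]
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each weight to the nearest level and clamp to the signed range.
    codes = [max(-qmax - 1, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate float weights from the integer codes."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    random.seed(0)
    block = [random.gauss(0.0, 0.02) for _ in range(32)]  # toy weights
    codes, scale = quantize_block(block)
    restored = dequantize_block(codes, scale)
    max_err = max(abs(a - b) for a, b in zip(block, restored))
    # 32 weights at 16 bits = 512 bits; 32 codes at 4 bits plus a scale
    # stored as 16 bits = 144 bits, i.e. 4.5 bits per weight.
    print(f"max abs error {max_err:.5f} (bound: scale / 2 = {scale / 2:.5f})")
```

The rounding error per weight is at most half the scale, which is where the slight accuracy loss comes from, while storage in this toy layout drops from 16 to 4.5 bits per weight.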

Q: What factors influence the performance of LLMs?

A: The performance of an LLM is affected by various factors such as model size, architecture, quantization level, the device's processing power (CPU and GPU), its memory capacity and bandwidth, and the specific task being performed.

Q: Can I run larger LLMs on the Apple M1?

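A: It depends mainly on memory. The base M1 tops out at 16 GB of unified memory, while even a 4-bit quantization of Llama 3 70B needs roughly 40 GB for its weights alone, so it cannot be held in memory on those machines. M1 Max (up to 64 GB) and M1 Ultra (up to 128 GB) configurations can hold a 4-bit 70B model, but it will generate text considerably more slowly than Llama 3 8B on the same machine.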
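A rough way to check whether a model fits is to multiply its parameter count by the bits stored per weight. The sketch below assumes Q4_K_M averages about 4.8 bits per weight, and its 75% "usable memory" fraction is a hypothetical rule of thumb for leaving room for macOS, other apps, and the KV cache; adjust both for your own setup.

```python
def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes): params x bits / 8."""
    return params_billion * bits_per_weight / 8


def fits_in_memory(params_billion: float, bits_per_weight: float,
                   memory_gb: float, usable_fraction: float = 0.75) -> bool:
    """Hypothetical rule of thumb: only part of unified memory is treated as
    available for weights, leaving headroom for the OS, apps, and KV cache."""
    return weights_gb(params_billion, bits_per_weight) <= memory_gb * usable_fraction


# Assumption: Q4_K_M averages roughly 4.8 bits per weight.
BITS_Q4 = 4.8

for name, params in [("Llama 3 8B", 8), ("Llama 3 70B", 70)]:
    for memory_gb in (16, 64):  # maximum memory of a base M1 and of an M1 Max
        size = weights_gb(params, BITS_Q4)
        verdict = "fits" if fits_in_memory(params, BITS_Q4, memory_gb) else "does not fit"
        print(f"{name}: ~{size:.0f} GB of weights, {memory_gb} GB machine -> {verdict}")
```

Under these assumptions, the 8B model needs about 5 GB and fits on any M1 configuration tested here, while the 70B model needs about 42 GB and fits only on the 64 GB machine.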

Q: How do I choose the right LLM for my needs?

A: Consider the complexity of your tasks, the required accuracy, your available resources, and your need for speed.

Keywords

Llama 3, Llama 3 8B, Llama 3 70B, Apple M1, Token Speed, LLM, Large Language Model, Quantization, Performance, Benchmark, GPU, Processing, Generation, Local LLMs, Device Inference, NLP.