Should I Use Llama3 8B or Llama2 7B on Apple M2 Ultra? Benchmark Analysis

Introduction

Running large language models (LLMs) on your own device opens up a range of possibilities: generating creative text, translating languages, summarizing documents, and more, all under your own control. This article compares the performance of two popular LLMs, Llama3 8B and Llama2 7B, on the Apple M2 Ultra, a chip known for its computational power. We'll go through the benchmark numbers, weigh each model's strengths and weaknesses, and help you choose the right model for your needs.

Apple M2 Ultra: A Performance Powerhouse

The Apple M2 Ultra packs a punch, with 76 GPU cores in its top configuration and 800 GB/s of memory bandwidth. That bandwidth matters for LLMs because model weights have to be streamed from memory for every token generated. But how does this translate to LLM performance? Let's dive into the numbers.

Performance Comparison of Llama3 8B and Llama2 7B on M2 Ultra

We'll compare Llama3 8B and Llama2 7B in three configurations: F16 (half-precision floating point), Q8_0 (8-bit quantization), and Q4_0 (4-bit quantization). Quantization shrinks the model and speeds up token generation, at the cost of some potential accuracy. Note that the results include no Q8_0 figure for Llama3 8B, so direct comparisons between the two models are limited to F16 and Q4_0.

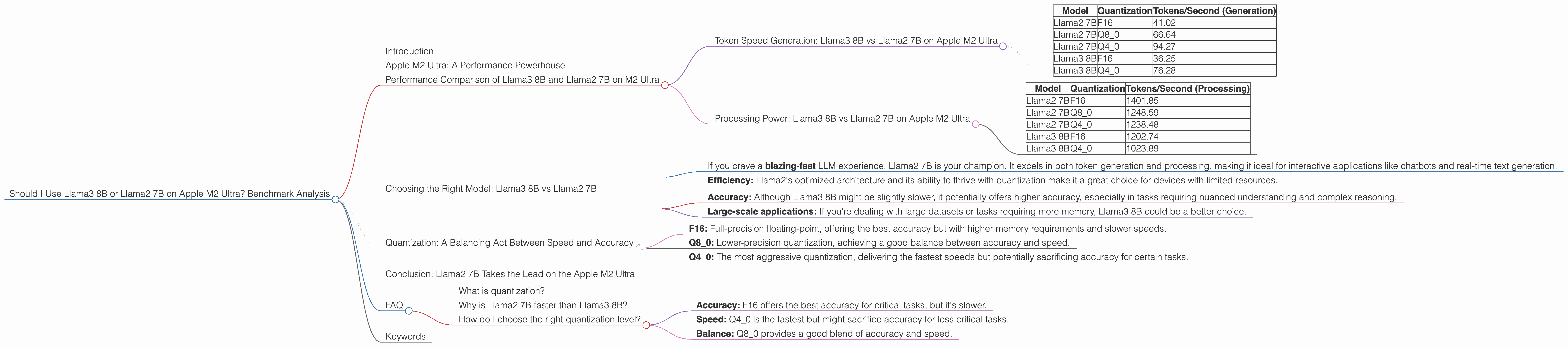

Token Speed Generation: Llama3 8B vs Llama2 7B on Apple M2 Ultra

| Model | Quantization | Tokens/Second (Generation) |
|---|---|---|
| Llama2 7B | F16 | 41.02 |
| Llama2 7B | Q8_0 | 66.64 |
| Llama2 7B | Q4_0 | 94.27 |
| Llama3 8B | F16 | 36.25 |
| Llama3 8B | Q4_0 | 76.28 |

Key Takeaways:

- Model comparison: Llama2 7B generates tokens faster than Llama3 8B at both quantization levels where the two can be compared (F16 and Q4_0). A likely contributor is simply size: Llama3 8B has more parameters and a larger vocabulary, so there is more data to read for every generated token.

- Q4_0: Both models roughly double their generation speed with Q4_0 compared to F16 (about 2.3x for Llama2 7B and 2.1x for Llama3 8B). This highlights the benefits of quantization for generation speed.

- Llama2's Speed: Llama2 7B generates tokens about 24% faster than Llama3 8B in the Q4_0 configuration (94.27 vs 76.28 tokens/second) and about 13% faster at F16 (41.02 vs 36.25). This translates to quicker responses and potentially a more responsive conversational experience.
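
The comparisons in this section can be recomputed directly from the table. Here is a minimal Python sketch; the dictionary layout and the helper name `pct_faster` are illustrative choices, and the numbers are copied from the generation table.

```python
# Generation speeds (tokens/second) copied from the table above.
generation_tps = {
    ("Llama2 7B", "F16"): 41.02,
    ("Llama2 7B", "Q8_0"): 66.64,
    ("Llama2 7B", "Q4_0"): 94.27,
    ("Llama3 8B", "F16"): 36.25,
    ("Llama3 8B", "Q4_0"): 76.28,
}


def pct_faster(a: float, b: float) -> float:
    """Return how much faster a is than b, in percent."""
    return (a / b - 1.0) * 100.0


# Llama2 7B vs Llama3 8B at each level both models were benchmarked at.
for quant in ("F16", "Q4_0"):
    gap = pct_faster(generation_tps[("Llama2 7B", quant)],
                     generation_tps[("Llama3 8B", quant)])
    print(f"{quant}: Llama2 7B is {gap:.1f}% faster")

# Speedup from quantizing F16 down to Q4_0, per model.
for model in ("Llama2 7B", "Llama3 8B"):
    speedup = generation_tps[(model, "Q4_0")] / generation_tps[(model, "F16")]
    print(f"{model}: Q4_0 is {speedup:.2f}x F16")
```

Running it gives gaps of roughly 13% at F16 and 24% at Q4_0, and Q4_0 speedups of roughly 2.3x and 2.1x.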

Processing Power: Llama3 8B vs Llama2 7B on Apple M2 Ultra

| Model | Quantization | Tokens/Second (Processing) |
|---|---|---|
| Llama2 7B | F16 | 1401.85 |
| Llama2 7B | Q8_0 | 1248.59 |
| Llama2 7B | Q4_0 | 1238.48 |
| Llama3 8B | F16 | 1202.74 |
| Llama3 8B | Q4_0 | 1023.89 |

Key Takeaways:

- Llama2's Edge: Llama2 7B processes prompt tokens faster than Llama3 8B at both comparable quantization levels: about 17% faster at F16 (1401.85 vs 1202.74 tokens/second) and about 21% faster at Q4_0 (1238.48 vs 1023.89). This means Llama2 gets through long prompts sooner.

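- Quantization and Performance: Unlike generation, prompt processing does not speed up with quantization here. F16 is the fastest configuration for both models, and for Llama2 7B the Q8_0 and Q4_0 results are nearly identical (1248.59 vs 1238.48 tokens/second, under 1% apart). A plausible explanation is that prompt processing is limited by compute rather than memory bandwidth, so smaller weights help less and the extra work of dequantizing them can cost a little speed.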
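
To see how processing and generation speeds combine in practice, a rough end-to-end latency can be estimated as prompt tokens divided by processing speed plus output tokens divided by generation speed. The sketch below assumes this simple additive model (it ignores model load time and other overhead), and the 1,000-token prompt and 200-token reply are hypothetical workload sizes, not part of the benchmark.

```python
# (processing tokens/s, generation tokens/s) copied from the two tables.
speeds = {
    ("Llama2 7B", "F16"): (1401.85, 41.02),
    ("Llama2 7B", "Q8_0"): (1248.59, 66.64),
    ("Llama2 7B", "Q4_0"): (1238.48, 94.27),
    ("Llama3 8B", "F16"): (1202.74, 36.25),
    ("Llama3 8B", "Q4_0"): (1023.89, 76.28),
}


def estimate_latency(prompt_tokens: int, output_tokens: int,
                     processing_tps: float, generation_tps: float) -> float:
    """Estimated seconds to read the prompt and then generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Hypothetical workload: 1,000-token prompt, 200-token reply.
for (model, quant), (pp, tg) in speeds.items():
    seconds = estimate_latency(1000, 200, pp, tg)
    print(f"{model} {quant}: {seconds:.2f} s")
```

With these assumed sizes, generation time dominates the total, so Q4_0 finishes sooner overall for both models despite its slower prompt processing.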

Choosing the Right Model: Llama3 8B vs Llama2 7B

So, which model should you choose? It depends on your priorities.

Llama2 7B: Your Go-To for Speed and Efficiency

- If you crave a blazing-fast LLM experience, Llama2 7B is your champion. It excels in both token generation and processing, making it ideal for interactive applications like chatbots and real-time text generation.

- Efficiency: Llama2 7B is the smaller model and gains the most from quantization in these benchmarks (about 2.3x faster generation at Q4_0 than at F16), which makes it a good fit when memory is tight.

Llama3 8B: A Powerful Contender for Accuracy and Complex Tasks

- Accuracy: Although Llama3 8B is slower in these benchmarks, it potentially offers higher accuracy, especially in tasks requiring nuanced understanding and complex reasoning. The benchmarks here measure speed only, so output quality has to be evaluated separately.

- Longer inputs: Llama3 8B supports a context window of 8,192 tokens, twice Llama2 7B's 4,096, so it could be a better choice if you're working with long documents or conversations.

Quantization: A Balancing Act Between Speed and Accuracy

Quantization is like compressing your LLM model, making it smaller and faster, but potentially sacrificing some accuracy in the process. Think of it as trading some quality for speed, like watching a movie in a lower resolution to get a faster download.

- F16: Half-precision (16-bit) floating point, the highest-precision option tested here, offering the best accuracy but with higher memory requirements and slower generation.

- Q8_0: 8-bit quantization, achieving a good balance between accuracy and speed.

- Q4_0: 4-bit quantization, the most aggressive level tested, delivering the fastest generation but potentially sacrificing accuracy for certain tasks.
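
The memory side of this trade-off can be estimated from bits per weight. The sketch below assumes the block layout commonly used for the formats named Q8_0 and Q4_0 in ggml/llama.cpp (32 weights per block plus one 16-bit scale, giving 8.5 and 4.5 bits per weight). This article does not state which runtime produced the benchmarks, so treat that as an assumption. The parameter counts are approximate, and the estimate ignores the KV cache and any tensors kept at higher precision.

```python
# Assumed bits per weight, including one 16-bit scale per block of 32 weights.
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": (32 * 8 + 16) / 32,  # 8.5
    "Q4_0": (32 * 4 + 16) / 32,  # 4.5
}

# Approximate parameter counts in billions (assumptions).
PARAMS_BILLIONS = {"Llama2 7B": 6.74, "Llama3 8B": 8.03}


def weight_size_gb(params_billions: float, quant: str) -> float:
    """Approximate size of the weights in gigabytes (10^9 bytes)."""
    return params_billions * BITS_PER_WEIGHT[quant] / 8.0


for model, params in PARAMS_BILLIONS.items():
    for quant in BITS_PER_WEIGHT:
        print(f"{model} {quant}: ~{weight_size_gb(params, quant):.1f} GB")
```

Under these assumptions, Llama2 7B shrinks from roughly 13.5 GB at F16 to about 3.8 GB at Q4_0.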

Conclusion: Llama2 7B Takes the Lead on the Apple M2 Ultra

Based on the benchmark results, Llama2 7B is the faster model on the Apple M2 Ultra, leading in both token generation and prompt processing at every quantization level where the two models were compared. That makes it a good fit for speed-sensitive applications such as chatbots and real-time text generation. However, Llama3 8B remains a strong contender for tasks where output quality matters more than raw speed. Remember, the right model depends on your specific needs and priorities.

FAQ

What is quantization?

Quantization is a technique used to make LLMs smaller and faster by representing their weights with fewer bits. Picture it like measuring with a ruler that has fewer markings: you get a less precise measurement, but it's quicker to read and takes less space to write down.
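
Here is a minimal sketch of the idea, assuming simple symmetric round-to-nearest quantization with one shared scale for a list of values. This is a simplification for illustration, not the exact Q8_0 or Q4_0 format, and the function names and sample weights are hypothetical.

```python
def quantize(values: list[float], bits: int) -> tuple[list[int], float]:
    """Map floats to signed integers of the given bit width plus one scale."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    return [round(v / scale) for v in values], scale


def dequantize(codes: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


def max_error(values: list[float], bits: int) -> float:
    """Largest absolute difference after a quantize/dequantize round trip."""
    codes, scale = quantize(values, bits)
    restored = dequantize(codes, scale)
    return max(abs(a - b) for a, b in zip(values, restored))


weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.44, 0.02]  # made-up values
for bits in (8, 4):
    print(f"{bits}-bit max round-trip error: {max_error(weights, bits):.4f}")
```

The 4-bit round trip loses noticeably more precision than the 8-bit one, which is the accuracy cost that the speed and size gains are traded against.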

Why is Llama2 7B faster than Llama3 8B?

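Llama3 8B is the larger model: it has more parameters and a much larger vocabulary than Llama2 7B, so each generated token requires reading and computing over more data. That alone could account for much of the speed gap, although how well the software is optimized for each model may also play a role.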
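
One rough way to reason about this: during generation, each new token typically requires reading all of the model's weights from memory, so memory bandwidth divided by model size gives an upper bound on tokens per second. The sketch below assumes approximate parameter counts (about 6.74 billion for Llama2 7B and 8.03 billion for Llama3 8B) and 2 bytes per weight at F16. These figures are assumptions, not values from the benchmark.

```python
BANDWIDTH_GB_S = 800.0  # M2 Ultra memory bandwidth quoted in this article

# Approximate parameter counts in billions (assumptions).
PARAMS_BILLIONS = {"Llama2 7B": 6.74, "Llama3 8B": 8.03}

# Measured F16 generation speeds from the benchmark table.
MEASURED_F16_TPS = {"Llama2 7B": 41.02, "Llama3 8B": 36.25}


def bandwidth_bound_tps(params_billions: float, bytes_per_weight: float) -> float:
    """Upper bound on tokens/s if every token reads all weights once."""
    model_gb = params_billions * bytes_per_weight
    return BANDWIDTH_GB_S / model_gb


for model, params in PARAMS_BILLIONS.items():
    bound = bandwidth_bound_tps(params, 2.0)
    measured = MEASURED_F16_TPS[model]
    print(f"{model}: bound ~{bound:.0f} tok/s, measured {measured} tok/s")
```

Under these assumptions the larger model has a lower ceiling, which points in the same direction as the measured gap.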

How do I choose the right quantization level?

It depends on your priorities:

- Accuracy: F16 offers the best accuracy for critical tasks, but it's slower.

- Speed: Q4_0 is the fastest at generating tokens but may sacrifice some accuracy, so it suits less critical tasks.

- Balance: Q8_0 provides a good blend of accuracy and speed.

Keywords

LLM, Llama2, Llama3, Apple M2 Ultra, GPU, Token Speed, Quantization, F16, Q8_0, Q4_0, Benchmark, Performance, Inference, Conversational AI, Natural Language Processing, Text Generation, Chatbot, AI, Machine Learning, Deep Learning.