Should I Use Llama3 8B or Llama2 7B on Apple M1? Benchmark Analysis

Introduction

The world of large language models (LLMs) is evolving rapidly, with new models and improvements appearing all the time. Two popular contenders for on-device deployment are Llama 2 and Llama 3, which offer impressive capabilities for tasks like text generation, translation, and code completion. With so many options, choosing the right model can be a challenge, and the most reliable way to decide is to look at how each one runs on your own hardware. This article explores the performance of Llama 3 8B and Llama 2 7B on the Apple M1 chip to help you decide which model suits your use case.

The Apple M1: A Powerhouse for LLMs

The Apple M1 chip has become a popular choice for developers and researchers running LLMs locally, offering a compelling blend of performance and energy efficiency. It delivers strong performance per watt compared with many desktop-class x86 chips, which makes it a good fit for local AI projects. The M1's integrated GPU and unified memory architecture, in which the CPU and GPU share a single pool of memory, make it well suited to running these computationally demanding models at usable speeds.

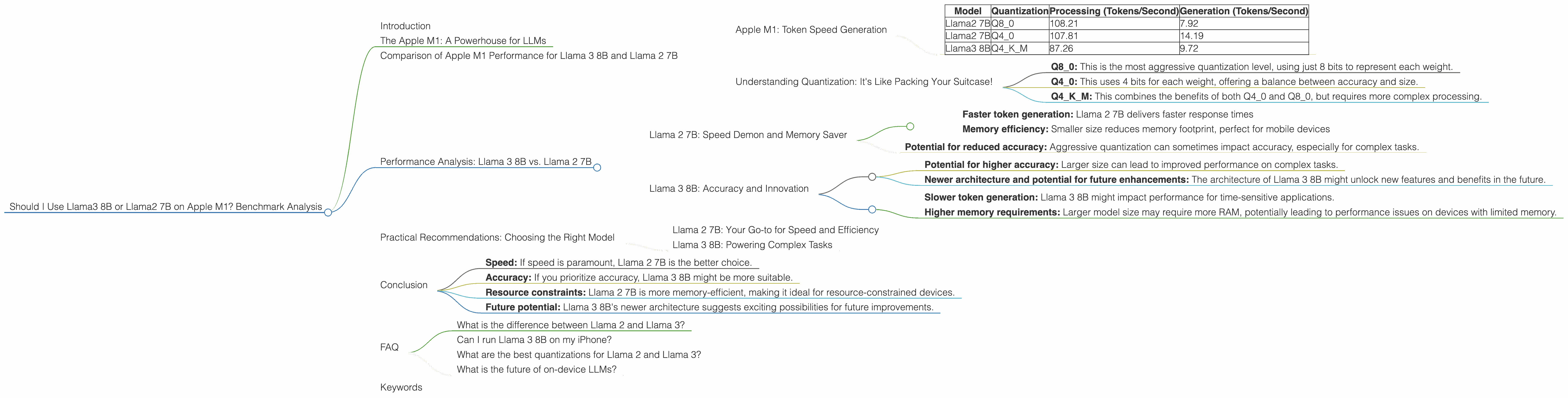

Comparison of Apple M1 Performance for Llama 3 8B and Llama 2 7B

Apple M1: Token Generation Speed

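The key metric for comparing LLM performance is token speed: how many tokens the model can handle per second. The table below reports two figures. Processing is the rate at which the model reads the input prompt, and generation is the rate at which it produces new output tokens. Higher token speeds translate to faster response times and a smoother user experience. Let's dive into the numbers: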

Table 1: Token Speed (Tokens/Second) on Apple M1

| Model | Quantization | Processing (Tokens/Second) | Generation (Tokens/Second) |
|---|---|---|---|
| Llama2 7B | Q8_0 | 108.21 | 7.92 |
| Llama2 7B | Q4_0 | 107.81 | 14.19 |
| Llama3 8B | Q4_K_M | 87.26 | 9.72 |

Observations:

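- Llama 2 7B has the faster prompt processing at both quantization levels (about 108 tokens/second versus 87.26 for Llama 3 8B). For generation the picture is mixed: Llama 2 7B at Q4_0 is the fastest (14.19), but Llama 3 8B at Q4_K_M (9.72) is faster than Llama 2 7B at Q8_0 (7.92).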

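- Quantization levels play a significant role: moving Llama 2 7B from Q8_0 to Q4_0 raises generation speed by about 79% (7.92 to 14.19 tokens/second) while leaving processing speed almost unchanged. Note that the two models were tested at different 4-bit quantizations (Q4_0 versus Q4_K_M), so the comparison between them is not strictly like for like.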
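To make the table more tangible, here is a minimal sketch that turns the two throughput figures into an estimated response time. The 512-token prompt and 128-token reply are illustrative assumptions, not part of the benchmark, and the function names are hypothetical. The model is deliberately simple: time to read the prompt plus time to generate the reply, ignoring model load time and other overhead.

```python
# Throughput figures copied from Table 1 (tokens/second on Apple M1).
BENCHMARKS = {
    ("Llama2 7B", "Q8_0"): {"processing": 108.21, "generation": 7.92},
    ("Llama2 7B", "Q4_0"): {"processing": 107.81, "generation": 14.19},
    ("Llama3 8B", "Q4_K_M"): {"processing": 87.26, "generation": 9.72},
}


def estimated_latency_seconds(prompt_tokens, output_tokens,
                              processing_tps, generation_tps):
    """Time to read the prompt plus time to generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


def latency_table(prompt_tokens=512, output_tokens=128):
    """Estimated latency per (model, quantization) for an assumed workload."""
    return {
        key: estimated_latency_seconds(
            prompt_tokens, output_tokens,
            speeds["processing"], speeds["generation"],
        )
        for key, speeds in BENCHMARKS.items()
    }


if __name__ == "__main__":
    # Print the configurations from fastest to slowest for this workload.
    ranked = sorted(latency_table().items(), key=lambda item: item[1])
    for (model, quant), seconds in ranked:
        print(f"{model:10s} {quant:7s} {seconds:6.1f} s")
```

For this assumed workload the generation term dominates, so the ranking follows the generation column rather than the processing column. A workload with a very long prompt and a short reply would weight the processing column more heavily.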

Understanding Quantization: It's Like Packing Your Suitcase!

Quantization is a technique that reduces the size of a model by representing its weights with fewer bits. Imagine packing your suitcase for a trip: if you pack only the essentials (Q4), the bag is smaller and easier to carry. Quantization works similarly: fewer bits per weight means less data to move through memory for every generated token, which generally speeds up generation at some cost in accuracy.

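- Q8_0: This is the least aggressive quantization level in this comparison, using 8 bits to represent each weight. It stays closest to the original model but produces the largest files and the slowest generation.

- Q4_0: This uses 4 bits for each weight, roughly halving the size relative to Q8_0 at the cost of some accuracy.

- Q4_K_M: This is a 4-bit "k-quant" variant in the llama.cpp naming scheme. It mixes precision levels, keeping some tensors at higher precision than others, which generally preserves accuracy better than Q4_0 at a slightly larger size but requires more complex processing.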
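The idea behind block quantization can be sketched in a few lines. This is a simplified illustration, not the exact on-disk format of any of the schemes above: it assumes symmetric rounding with one shared scale per block, and all function names are hypothetical. The `bits_per_weight` helper assumes blocks of 32 weights sharing one 16-bit scale, which is why 8-bit and 4-bit storage come out at 8.5 and 4.5 bits per weight rather than exactly 8 and 4.

```python
def quantize_block(weights, bits):
    """Symmetric quantization of one block: a float scale plus small integers."""
    qmax = 2 ** (bits - 1) - 1              # 127 for 8 bits, 7 for 4 bits
    amax = max(abs(w) for w in weights)
    scale = amax / qmax if amax > 0 else 1.0
    # Round each weight to the nearest integer step and clamp to the range.
    quants = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate weights from the stored scale and integers."""
    return [scale * q for q in quants]


def max_abs_error(weights, bits):
    """Largest round-trip error for a block at the given bit width."""
    scale, quants = quantize_block(weights, bits)
    restored = dequantize_block(scale, quants)
    return max(abs(a - b) for a, b in zip(weights, restored))


def bits_per_weight(bits, block_size=32, scale_bits=16):
    """Storage per weight, counting one scale shared by the whole block."""
    return (block_size * bits + scale_bits) / block_size


if __name__ == "__main__":
    # Arbitrary example weights chosen for illustration only.
    block = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]
    for bits in (8, 4):
        print(f"{bits}-bit: {bits_per_weight(bits)} bits/weight, "
              f"max error {max_abs_error(block, bits):.4f}")
```

Running it shows the trade-off described above: the 4-bit version stores about half as many bits per weight, and its round-trip error is larger because each weight can only land on one of a handful of levels.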

Performance Analysis: Llama 3 8B vs. Llama 2 7B

Llama 2 7B: Speed Demon and Memory Saver

Llama 2 7B emerges as the speed champion in this comparison: it has the fastest prompt processing at both quantization levels and, at Q4_0, the fastest generation on the Apple M1. This makes it a good fit for applications that require quick responses and a seamless user experience. Additionally, because it has fewer parameters, Llama 2 7B needs less memory than Llama 3 8B at a comparable quantization, which helps on devices with limited RAM, including mobile deployments.

Strengths:

- Faster token generation: At Q4_0, Llama 2 7B delivers the fastest response times in this benchmark.

- Memory efficiency: Fewer parameters mean a smaller memory footprint at a given quantization, which suits mobile devices.

Weaknesses:

- Potential for reduced accuracy: Aggressive quantization can sometimes impact accuracy, especially for complex tasks.

Llama 3 8B: Accuracy and Innovation

While Llama 3 8B is slower than Llama 2 7B at Q4_0, it has more parameters, which can translate to higher accuracy and more capacity for complex tasks. That may show up as a better grasp of the input and more nuanced output, including longer and more creative text, although this benchmark measures speed only and does not evaluate output quality. Llama 3 8B is also the newer model generation, with changes such as a larger tokenizer vocabulary and grouped-query attention, so tooling and optimizations for it may continue to improve.

Strengths:

- Potential for higher accuracy: Larger size can lead to improved performance on complex tasks.

- Newer architecture and potential for future enhancements: The architecture of Llama 3 8B might unlock new features and benefits in the future.

Weaknesses:

- Slower token generation: At 9.72 tokens/second, Llama 3 8B trails Llama 2 7B at Q4_0 (14.19), which may matter for time-sensitive applications.

- Higher memory requirements: Larger model size may require more RAM, potentially leading to performance issues on devices with limited memory.
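Memory needs depend on the quantization as well as the parameter count, and a rough estimate is easy to sketch. The figures below are assumptions for illustration rather than measurements from this benchmark: approximate parameter counts of 6.74 billion and 8.03 billion, and approximate storage costs of 8.5, 4.5, and roughly 4.9 bits per weight for Q8_0, Q4_0, and Q4_K_M. The estimate covers the weights only; real usage adds the context cache and runtime overhead.

```python
GIB = 1024 ** 3  # bytes in one gibibyte

# Approximate parameter counts (assumed for illustration).
PARAMS = {"Llama2 7B": 6.74e9, "Llama3 8B": 8.03e9}

# Approximate storage cost per weight; the Q4_K_M value is a rough
# average, since that scheme mixes several precisions.
BITS_PER_WEIGHT = {"Q8_0": 8.5, "Q4_0": 4.5, "Q4_K_M": 4.9}


def weight_memory_gib(n_params, bits_per_weight):
    """Memory needed for the weights alone, in GiB."""
    return n_params * bits_per_weight / 8 / GIB


if __name__ == "__main__":
    # The three configurations that appear in Table 1.
    for model, quant in [("Llama2 7B", "Q8_0"),
                         ("Llama2 7B", "Q4_0"),
                         ("Llama3 8B", "Q4_K_M")]:
        size = weight_memory_gib(PARAMS[model], BITS_PER_WEIGHT[quant])
        print(f"{model:10s} {quant:7s} ~{size:.1f} GiB")
```

Under these assumptions, Llama 2 7B at Q8_0 takes more memory than Llama 3 8B at Q4_K_M, so the choice of quantization can outweigh the difference in parameter count.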

Practical Recommendations: Choosing the Right Model

Llama 2 7B: Your Go-to for Speed and Efficiency

For applications that prioritize speed and efficiency, especially on devices with limited resources, Llama 2 7B at Q4_0 is the clear winner in this benchmark. Its fast token speeds and smaller size make it well suited to mobile deployments, chatbots that need quick response times, and other latency-sensitive tasks.

Llama 3 8B: Powering Complex Tasks

If you need to run complex tasks that require high accuracy and the potential for future enhancements, Llama 3 8B is a viable option. Its larger size may require more powerful hardware and come with a performance penalty, but it offers a compelling alternative for tasks like generating detailed summaries, creative writing, or complex code completion.

Conclusion

The choice between Llama 3 8B and Llama 2 7B on the Apple M1 depends on your specific needs. Consider the following factors:

- Speed: If speed is paramount, Llama 2 7B at Q4_0 is the better choice.

- Accuracy: If you prioritize accuracy, Llama 3 8B might be more suitable.

- Resource constraints: Llama 2 7B is more memory-efficient, making it ideal for resource-constrained devices.

- Future potential: Llama 3 8B's newer architecture suggests exciting possibilities for future improvements.

FAQ

What is the difference between Llama 2 and Llama 3?

Llama 2 and Llama 3 are both large language models developed by Meta, but they have key differences. Llama 2 is the older, more established generation, while Llama 3 is the newer one, with a slightly larger small model (8B versus 7B parameters) and a larger tokenizer vocabulary. For the two models compared here, the choice depends primarily on your priorities: speed or accuracy.

Can I run Llama 3 8B on my iPhone?

Running Llama 3 8B on an iPhone is not recommended due to the model's large size and memory requirements. It may lead to slow performance and potentially crash the device.

What are the best quantizations for Llama 2 and Llama 3?

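Among the configurations benchmarked here on the Apple M1, Q4_0 gave Llama 2 7B its fastest generation speed. Q4_K_M was the only quantization tested for Llama 3 8B, and it is a common choice for balancing size and quality.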

What is the future of on-device LLMs?

On-device LLMs are rapidly evolving, with researchers and developers continuously exploring new architectures, optimizations, and techniques. The future looks bright for these models, with the potential for smaller, faster and even more accurate models in the coming years.

Keywords

large language model, LLM, Llama 2, Llama 3, Apple M1, token speed, processing, generation, quantization, Q8_0, Q4_0, Q4_K_M, performance, accuracy, memory, resource constraints, future, on-device LLMs, mobile deployment.