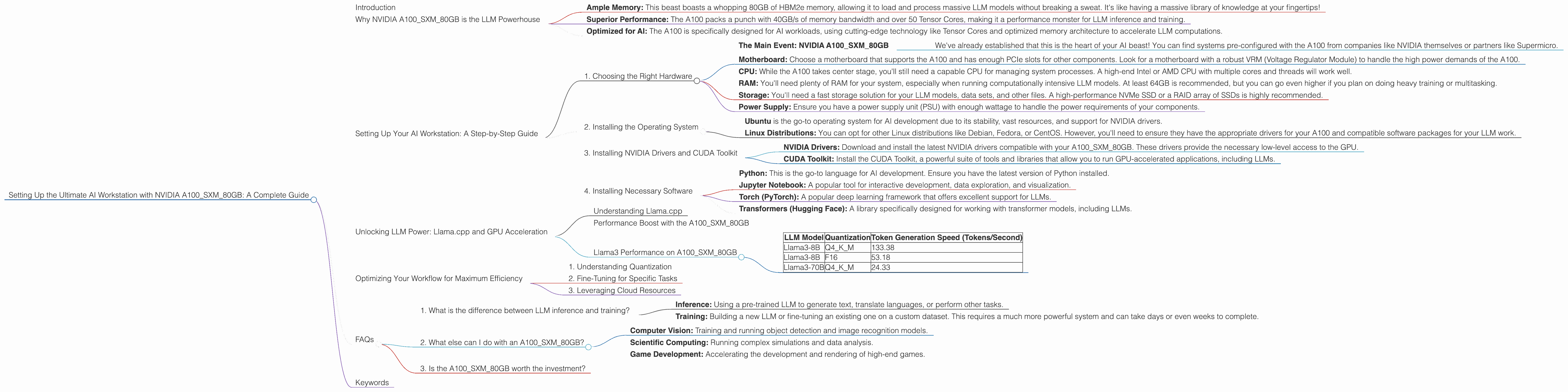

Setting Up the Ultimate AI Workstation with NVIDIA A100 SXM 80GB: A Complete Guide

Introduction

The world of artificial intelligence (AI) is exploding, and at the heart of this revolution are Large Language Models (LLMs). LLMs are powerful AI systems that can understand and generate human-like text, making them ideal for a range of applications from chatbots to content creation and even code generation.

But running these massive LLMs on your average laptop or desktop computer is like trying to fit a giraffe in a hamster cage. They need serious processing power and, above all, large amounts of fast memory, and that's where the NVIDIA A100 SXM 80GB comes in.

This guide will take you on a journey through the world of LLMs and the A100 SXM 80GB, helping you understand the key benefits of this powerhouse GPU and how to set up the ultimate AI workstation for your LLM adventures.

Why the NVIDIA A100 SXM 80GB Is the LLM Powerhouse

Imagine you're building a skyscraper. You need a strong foundation, and for LLMs, the A100 SXM 80GB is the perfect foundation. Here's why:

- Ample Memory: This beast boasts a whopping 80GB of HBM2e memory, enough to hold an 8B-parameter model in 16-bit precision (about 16GB of weights) with room to spare, or a 70B model quantized to around 4 bits. It's like having a massive library of knowledge at your fingertips!

- Superior Performance: The A100 SXM 80GB delivers roughly 2 TB/s (2,039 GB/s) of memory bandwidth and 432 third-generation Tensor Cores, making it a performance monster for LLM inference and training.

- Optimized for AI: The A100 is designed specifically for AI workloads. Its Tensor Cores accelerate the mixed-precision math (TF32, BF16, FP16, and INT8) at the core of LLM computations.
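To put the memory figure in perspective, here is a back-of-the-envelope sketch of how much space model weights need. It assumes weights dominate memory use (KV cache, activations, and framework overhead are ignored), and the 4.5 bits per weight for a 4-bit quantized model is an assumed average rather than a measured value.

```python
# Back-of-the-envelope estimate of GPU memory needed for model weights alone.
# Assumption: KV cache, activations and framework overhead are ignored,
# so real usage will be somewhat higher than these figures.

GPU_MEMORY_GB = 80  # A100 SXM 80GB


def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes)."""
    # (params_billion * 1e9 params) * (bits / 8 bytes) / 1e9 bytes per GB
    return params_billion * bits_per_weight / 8


def fits_on_gpu(params_billion: float, bits_per_weight: float,
                budget_gb: float = GPU_MEMORY_GB) -> bool:
    return weight_memory_gb(params_billion, bits_per_weight) <= budget_gb


# 4.5 bits per weight is an assumed average for a 4-bit quantized model,
# since quantized formats also store per-block scale factors.
for label, params, bits in [("8B FP16", 8, 16), ("70B FP16", 70, 16), ("70B 4-bit", 70, 4.5)]:
    size = weight_memory_gb(params, bits)
    print(f"{label:>9}: {size:6.1f} GB, fits={fits_on_gpu(params, bits)}")
```

This simple arithmetic shows why a 70B model needs quantization to fit on one 80GB card, while an 8B model fits comfortably even at full 16-bit precision.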

Setting Up Your AI Workstation: A Step-by-Step Guide

Here's a rundown of the essential steps to set up your AI workstation with the A100 SXM 80GB:

1. Choosing the Right Hardware

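- The Main Event: NVIDIA A100 SXM 80GB

- We've already established that this is the heart of your AI beast! Keep in mind that the SXM version is not a plug-in PCIe card: it mounts on an NVIDIA HGX baseboard, so in practice you buy it as part of a complete system, such as NVIDIA's own DGX A100 or HGX A100 servers from partners like Supermicro. If you want to build a tower-style workstation yourself, the A100 80GB PCIe variant is the version that fits a standard slot.

- Motherboard: For a self-built PCIe system, choose a motherboard with enough PCIe 4.0 x16 slots for the A100 and your other components, plus a robust VRM (Voltage Regulator Module) so the high-end CPU gets clean, stable power. On SXM systems, the vendor's server board and GPU baseboard handle this for you.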

- CPU: While the A100 takes center stage, you'll still need a capable CPU for managing system processes. A high-end Intel or AMD CPU with multiple cores and threads will work well.

- RAM: You'll need plenty of system RAM, because model weights and datasets typically pass through it on their way to the GPU. Treat 64GB as a minimum; matching or exceeding the GPU's 80GB is a safer target, and you can go even higher if you plan on doing heavy training or multitasking.

- Storage: You'll need a fast storage solution for your LLM models, data sets, and other files. A high-performance NVMe SSD or a RAID array of SSDs is highly recommended.

- Power Supply: Ensure your power supply unit (PSU) has enough wattage, with headroom, for all of your components. The A100 SXM 80GB alone is rated for a maximum TDP of 400 W.
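A simple way to size the PSU is to add up each component's maximum power draw and then add headroom. The sketch below does exactly that; only the A100's 400 W figure comes from NVIDIA's rating, while the CPU wattage, the allowance for other parts, and the 30% headroom are hypothetical placeholders to replace with your own components' specs.

```python
import math


def recommended_psu_watts(component_watts: dict, headroom: float = 1.3, step: int = 50) -> int:
    """Sum component power draw, add headroom, and round up to the next `step` watts."""
    total = sum(component_watts.values())
    return math.ceil(total * headroom / step) * step


components = {
    "A100 SXM 80GB": 400,       # NVIDIA's rated maximum TDP
    "CPU": 280,                 # hypothetical high-end CPU
    "RAM, storage, fans": 150,  # hypothetical allowance for everything else
}
print(recommended_psu_watts(components), "W")
```

With these placeholder numbers the total is 830 W, which the sketch pads and rounds up to a 1,100 W unit.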

2. Installing the Operating System

- Ubuntu: The go-to operating system for AI development, thanks to its stability, vast community resources, and strong support for NVIDIA drivers.

- Other Linux Distributions: You can opt for distributions like Debian, Fedora, or CentOS. However, you'll need to make sure NVIDIA drivers for your A100 and compatible software packages for your LLM work are available for your chosen release.

3. Installing NVIDIA Drivers and CUDA Toolkit

- NVIDIA Drivers: Download and install the latest NVIDIA driver that supports your A100 SXM 80GB. The driver provides the low-level access to the GPU that everything else depends on.

- CUDA Toolkit: Install the CUDA Toolkit, NVIDIA's suite of compilers, libraries, and tools for building and running GPU-accelerated applications, including LLM inference engines. Choose a toolkit version that your installed driver supports.
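Once the driver is installed, `nvidia-smi` (which ships with the driver) is the quickest way to confirm the GPU is visible. The sketch below runs it with the standard `--query-gpu` and `--format=csv,noheader,nounits` options and parses the result; the helper names `parse_gpu_csv` and `query_gpus` are hypothetical.

```python
from __future__ import annotations

import shutil
import subprocess

# Standard nvidia-smi query fields: GPU name, total memory (MiB with nounits), driver version
QUERY_FIELDS = "name,memory.total,driver_version"


def parse_gpu_csv(text: str) -> list[dict]:
    """Parse the output of nvidia-smi --query-gpu=... --format=csv,noheader,nounits."""
    gpus = []
    for line in text.strip().splitlines():
        name, memory_mib, driver = (field.strip() for field in line.split(","))
        gpus.append({"name": name, "memory_mib": int(memory_mib), "driver": driver})
    return gpus


def query_gpus() -> list[dict]:
    """Run nvidia-smi if it is on PATH; return an empty list if it is not."""
    if shutil.which("nvidia-smi") is None:
        return []
    result = subprocess.run(
        ["nvidia-smi", f"--query-gpu={QUERY_FIELDS}", "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    )
    return parse_gpu_csv(result.stdout)


if __name__ == "__main__":
    gpus = query_gpus()
    if not gpus:
        print("nvidia-smi not found: check the driver installation")
    for gpu in gpus:
        print(f"{gpu['name']}: {gpu['memory_mib'] / 1024:.0f} GiB, driver {gpu['driver']}")
```

On a working A100 80GB system, the reported name should contain "A100" and the memory should come out to roughly 80 GiB. An empty result means `nvidia-smi` is not on your PATH, which usually points to an incomplete driver installation.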

4. Installing Necessary Software

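- Python: This is the go-to language for AI development. Install a recent Python 3 release that your frameworks officially support; PyTorch and Transformers do not always support the newest Python version right away.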

- Jupyter Notebook: A popular tool for interactive development, data exploration, and visualization.

- Torch (PyTorch): A popular deep learning framework that offers excellent support for LLMs.

- Transformers (Hugging Face): A library specifically designed for working with transformer models, including LLMs.
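Before moving on, it's worth checking that everything in this list is importable and that PyTorch can see the GPU. A minimal sketch using only the standard library plus PyTorch's own `torch.cuda` functions; the package-to-module mapping in `REQUIRED` is an assumption you may need to adjust for your setup.

```python
import importlib.util
import sys

# Tools from the list above, mapped to their import names.
# Import names can differ from pip package names
# (for example, Jupyter Notebook imports as "notebook").
REQUIRED = {"PyTorch": "torch", "Transformers": "transformers", "Jupyter Notebook": "notebook"}


def missing_packages(required: dict = REQUIRED) -> list:
    """Return the labels of packages that cannot be found in this environment."""
    return [label for label, module in required.items()
            if importlib.util.find_spec(module) is None]


def cuda_status() -> str:
    """Report whether PyTorch is installed and can see a CUDA GPU."""
    if importlib.util.find_spec("torch") is None:
        return "PyTorch not installed"
    import torch  # imported lazily so the script runs without PyTorch
    if not torch.cuda.is_available():
        return "PyTorch installed, but no CUDA GPU visible"
    return f"CUDA OK: {torch.cuda.get_device_name(0)}"


if __name__ == "__main__":
    print("Python", sys.version.split()[0])
    missing = missing_packages()
    print("Missing:", ", ".join(missing) if missing else "none")
    print(cuda_status())
```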

Unlocking LLM Power: Llama.cpp and GPU Acceleration

Let's dive into the exciting world of LLMs and how the A100 SXM 80GB brings them to life.

Understanding Llama.cpp

Think of Llama.cpp as a universal LLM runtime. It's an open-source C/C++ inference engine that runs many different LLM families locally, once their weights have been converted to its GGUF file format. It supports CPUs as well as GPUs, including NVIDIA GPUs through its CUDA backend, which opens up a world of possibilities for experimenting with LLMs directly on your own system.
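As a rough illustration of how Llama.cpp is typically launched with GPU offloading, the sketch below assembles a command line for its `llama-cli` tool (older builds named this binary `main`). The flags shown (`-m`, `-p`, `-n`, `-c`, `-ngl`) are standard llama.cpp options, but the model filename is hypothetical, and Llama.cpp must be built with CUDA support for `-ngl` to move layers onto the A100.

```python
import shlex


def llama_cli_command(model_path: str, prompt: str, n_predict: int = 256,
                      ctx_size: int = 4096, n_gpu_layers: int = 99,
                      binary: str = "llama-cli") -> list:
    """Build an argument list for llama.cpp's command-line tool."""
    return [
        binary,
        "-m", model_path,           # path to a GGUF model file
        "-p", prompt,               # prompt text
        "-n", str(n_predict),       # number of tokens to generate
        "-c", str(ctx_size),        # context window size in tokens
        "-ngl", str(n_gpu_layers),  # layers to offload to the GPU (99 = effectively all)
    ]


# Hypothetical model file name; substitute the GGUF file you downloaded or converted.
cmd = llama_cli_command("models/llama3-8b-instruct.Q4_K_M.gguf",
                        "Explain what HBM2e memory is in one sentence.")
print(shlex.join(cmd))
# To run it (requires a CUDA-enabled llama.cpp build on your PATH):
# subprocess.run(cmd, check=True)
```

Setting `-ngl` higher than the model's layer count simply offloads every layer, which is what you want on an 80GB card for any model that fits.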

Performance Boost with the A100 SXM 80GB

Here's where the A100 SXM 80GB really shines. When Llama.cpp offloads a model's layers to the GPU, the A100's high memory bandwidth and raw compute dramatically speed up token generation, fast enough to interact with LLMs in real time.

Llama3 Performance on A100SXM80GB

Let's look at some real-world numbers to illustrate the power of this pairing:

| LLM Model | Quantization | Generation Speed (tokens/s) |
|---|---|---|
| Llama3-8B | Q4_K_M | 133.38 |
| Llama3-8B | F16 | 53.18 |
| Llama3-70B | Q4_K_M | 24.33 |

Explanation:

- Llama3-8B: The 8-billion parameter Llama3 model is a popular and versatile choice.

- Q4_K_M: The quantization level used for the model. Q4_K_M is one of Llama.cpp's 4-bit "k-quant" formats (the "M" marks the medium variant), which balances accuracy and memory efficiency.

- F16: 16-bit floating-point weights, i.e., the unquantized model in this comparison. F16 is more precise than Q4_K_M but needs more than three times as much memory.

- Token Generation Speed: The number of new tokens the model produces per second while generating output. Higher numbers indicate faster performance.
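To translate the table into something more tangible, the sketch below converts each measured speed into the time needed to generate a reply. The 500-token reply length is an arbitrary assumption, and prompt processing time is not included.

```python
# Generation speeds from the table above (tokens per second).
SPEEDS_TOK_PER_S = {
    ("Llama3-8B", "Q4_K_M"): 133.38,
    ("Llama3-8B", "F16"): 53.18,
    ("Llama3-70B", "Q4_K_M"): 24.33,
}

REPLY_TOKENS = 500  # assumed reply length


def generation_seconds(n_tokens: int, tokens_per_second: float) -> float:
    """Time spent generating n_tokens (prompt processing not included)."""
    return n_tokens / tokens_per_second


for (model, quant), speed in SPEEDS_TOK_PER_S.items():
    print(f"{model} {quant}: {generation_seconds(REPLY_TOKENS, speed):.1f} s "
          f"for {REPLY_TOKENS} tokens")
```

At these speeds, a 500-token answer takes roughly 4 seconds with Llama3-8B Q4_K_M, about 9 seconds with Llama3-8B F16, and about 21 seconds with Llama3-70B Q4_K_M.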

Key Takeaways:

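- The A100 SXM 80GB delivers interactive token generation speeds for Llama3, even for the large 70B model, which fits in its 80GB of memory at Q4_K_M (in F16, the 70B weights alone would need about 140GB).

- Quantization (Q4_K_M) makes Llama3-8B about 2.5× faster than F16 (133.38 vs. 53.18 tokens/s). Token generation is largely limited by memory bandwidth, since the GPU must read the model's weights for every new token, so smaller weights translate directly into faster output.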
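The bandwidth argument can be sketched numerically. Assuming generation is purely memory-bandwidth bound, the ceiling on single-stream speed is the GPU's bandwidth divided by the bytes of weights read per token. The 2,039 GB/s figure is NVIDIA's published peak for the A100 SXM 80GB; the weight sizes (8B parameters at 16 bits, and at an assumed average of about 4.8 bits per weight for Q4_K_M) are approximations.

```python
# Upper bound on single-stream generation speed if decoding is purely
# memory-bandwidth bound: every new token streams all weights from GPU memory once.
PEAK_BANDWIDTH_GB_S = 2039  # A100 SXM 80GB published peak memory bandwidth


def max_tokens_per_second(weights_gb: float,
                          bandwidth_gb_s: float = PEAK_BANDWIDTH_GB_S) -> float:
    return bandwidth_gb_s / weights_gb


# Approximate weight sizes for an 8B-parameter model (assumptions):
# 16 bits per weight for F16, ~4.8 bits per weight on average for Q4_K_M.
WEIGHTS_GB = {"F16": 8 * 16 / 8, "Q4_K_M": 8 * 4.8 / 8}

for quant, size_gb in WEIGHTS_GB.items():
    print(f"Llama3-8B {quant}: {size_gb:.1f} GB of weights, "
          f"ceiling ~{max_tokens_per_second(size_gb):.0f} tokens/s")
```

This gives ceilings of about 127 tokens/s for F16 and about 425 tokens/s for Q4_K_M. The measured speeds sit below these ceilings because real kernels never reach peak bandwidth and also spend time on dequantization and other overheads; the useful takeaway is that shrinking the weights raises the ceiling proportionally.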

Optimizing Your Workflow for Maximum Efficiency

Now that you have a beast of a system, here are some tips to get the most out of it for your LLM work:

1. Understanding Quantization

Quantization is like shrinking your model's wardrobe for improved efficiency. It stores the weights (numbers) in your LLM at lower precision, for example 4 bits instead of 16, which reduces memory usage and can significantly boost performance, usually at the cost of a small loss in accuracy.

Think of it like this: Imagine you're packing for a trip. If you pack everything in bulky suitcases, you'll have trouble fitting it all in and moving around. But, by using packing cubes to compress your clothes, you save space and can easily navigate.

Quantization does the same for your LLM, allowing you to run larger models with the same amount of memory.
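Here's a toy version of the idea: split the weights into small blocks, store one floating-point scale per block, and round each weight to a small integer. This is a simplified symmetric 4-bit scheme written for illustration; it is not the actual Q4_K_M algorithm, which uses a more elaborate block structure.

```python
# Simplified block-wise symmetric 4-bit quantization (illustrative only;
# NOT the exact Q4_K_M algorithm used by Llama.cpp).


def quantize_block(weights: list) -> tuple:
    """Map floats to integers in [-7, 7] with one shared scale per block."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    q = [max(-7, min(7, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale: float, q: list) -> list:
    return [scale * v for v in q]


def quantize(weights: list, block_size: int = 32) -> list:
    return [quantize_block(weights[i:i + block_size])
            for i in range(0, len(weights), block_size)]


weights = [0.12, -0.53, 0.88, 0.01, -0.27, 0.64, -0.91, 0.33]
blocks = quantize(weights, block_size=4)
restored = [w for scale, q in blocks for w in dequantize_block(scale, q)]
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(f"max reconstruction error: {max_err:.3f}")
```

Each weight now needs only 4 bits plus a share of its block's scale, and the rounding error per weight is at most half the block's scale, which is why careful quantization can preserve most of a model's accuracy.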

2. Fine-Tuning for Specific Tasks

Fine-tuning is like training your LLM to become an expert in a specific area. You take an existing LLM and train it on a dataset related to your desired task.

For example, if you want to create a chatbot that specializes in answering questions about the history of the United States, you would fine-tune an LLM on a dataset containing relevant information from history books, documents, and other sources.
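Fine-tuning data is commonly stored as JSON Lines, one training example per line. The sketch below converts question/answer pairs for the U.S.-history example into chat-style records; the `messages`/`role`/`content` layout is a widely used convention, but the exact schema your training tool expects may differ, and the examples and output filename are hypothetical.

```python
import json

# Hypothetical question/answer pairs for a U.S.-history assistant.
examples = [
    {"question": "In what year was the Declaration of Independence adopted?",
     "answer": "It was adopted on July 4, 1776."},
    {"question": "Who was the first President of the United States?",
     "answer": "George Washington."},
]


def to_chat_record(example: dict) -> dict:
    """Convert one Q&A pair into a chat-style training record."""
    return {"messages": [
        {"role": "user", "content": example["question"]},
        {"role": "assistant", "content": example["answer"]},
    ]}


def write_jsonl(records, path: str) -> None:
    """Write one JSON object per line (JSON Lines format)."""
    with open(path, "w", encoding="utf-8") as f:
        for record in records:
            f.write(json.dumps(record, ensure_ascii=False) + "\n")


write_jsonl((to_chat_record(e) for e in examples), "us_history_train.jsonl")
```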

3. Leveraging Cloud Resources

If you need even more processing power for challenging tasks like training massive LLMs, cloud services offer a great alternative. Platforms like Google Cloud, Amazon Web Services (AWS), and Microsoft Azure provide access to A100 GPUs and other powerful hardware.

FAQs

1. What is the difference between LLM inference and training?

- Inference: Using a pre-trained LLM to generate text, translate languages, or perform other tasks.

- Training: Building a new LLM or fine-tuning an existing one on a custom dataset. This requires a much more powerful system and can take days or even weeks to complete.
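A quick memory estimate shows why training is so much more demanding. A widely used rule of thumb for mixed-precision training with the Adam optimizer is about 16 bytes per parameter (2 for 16-bit weights, 2 for gradients, and 12 for FP32 master weights plus Adam's two moment estimates), before counting activations. The sketch below applies that rule alongside the 2 bytes per parameter needed to hold FP16 weights for inference.

```python
# Rough memory comparison: FP16 inference weights vs. full mixed-precision
# Adam fine-tuning (weights + gradients + optimizer state; activations excluded).


def inference_weights_gb(params_billion: float, bytes_per_param: float = 2) -> float:
    """FP16/BF16 weights only: 2 bytes per parameter."""
    return params_billion * bytes_per_param


def full_finetune_gb(params_billion: float, bytes_per_param: float = 16) -> float:
    """Rule of thumb: ~16 bytes per parameter for mixed-precision Adam."""
    return params_billion * bytes_per_param


for size in (8, 70):
    print(f"{size}B: inference ~{inference_weights_gb(size):.0f} GB, "
          f"full fine-tune ~{full_finetune_gb(size):.0f} GB")
```

By this estimate, fully fine-tuning even the 8B model needs roughly 128GB, more than a single 80GB A100 holds, which is why training large models often involves multiple GPUs or memory-saving techniques.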

2. What else can I do with an A100 SXM 80GB?

The A100 is ideal for tasks like:

- Computer Vision: Training and running object detection and image recognition models.

- Scientific Computing: Running complex simulations and data analysis.

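- Game Development: Training and running the machine-learning models used in games and game tooling. Note that the A100 is a data center compute accelerator without display outputs, so it is not designed for rendering games.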

3. Is the A100 SXM 80GB worth the investment?

The A100 SXM 80GB is a substantial investment, but if you're serious about AI development and want the ultimate performance, it can be a game-changer.

Consider the frequency and complexity of your LLM workloads and how much the A100 can accelerate your projects.
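One way to weigh the investment is a simple break-even estimate against renting a comparable cloud GPU. Every figure in the sketch (purchase cost, hourly cloud rate, and local running cost) is a hypothetical placeholder, and the model ignores depreciation, maintenance, and resale value.

```python
import math


def break_even_hours(purchase_cost: float, cloud_rate_per_hour: float,
                     local_running_cost_per_hour: float = 0.0) -> float:
    """GPU-hours of use after which owning becomes cheaper than renting."""
    saving_per_hour = cloud_rate_per_hour - local_running_cost_per_hour
    if saving_per_hour <= 0:
        return math.inf  # renting is never more expensive per hour
    return purchase_cost / saving_per_hour


# All figures below are hypothetical placeholders; substitute real quotes.
hours = break_even_hours(purchase_cost=20_000, cloud_rate_per_hour=2.0,
                         local_running_cost_per_hour=0.2)
print(f"break-even after ~{hours:,.0f} GPU-hours "
      f"(~{hours / (8 * 250):.1f} years at 8 h/day, 250 days/year)")
```

With these placeholder numbers, owning the hardware pays off after roughly 11,000 GPU-hours; if you expect far fewer hours of heavy use, renting may be the better deal.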

Keywords

A100 SXM 80GB, AI Workstation, LLM, Large Language Model, Llama.cpp, GPU, NVIDIA, Token Generation Speed, Quantization, Inference, Training, Fine-tuning, Cloud Resources, Computer Vision, Scientific Computing, Game Development.