Running LLMs on an NVIDIA RTX A6000 48GB: Token Generation Speed Benchmark

Introduction

The world of large language models (LLMs) is evolving rapidly, with new models and use cases emerging daily. These models are becoming increasingly powerful, capable of generating human-like text, translating languages, writing different kinds of creative content, and answering your questions in an informative way.

However, running these powerful LLMs on your computer can be demanding. A crucial aspect of using LLMs locally is understanding the hardware requirements and how different devices affect their performance.

This article benchmarks the token generation speed of Llama 3 8B and Llama 3 70B running on an NVIDIA RTX A6000 48GB GPU, as a reference point for developers and enthusiasts looking to set up their own local LLM environment. Let's dive in!

The NVIDIA RTX A6000 48GB Beast

The RTX A6000 is a high-end GPU designed for professionals in fields like artificial intelligence, machine learning, and scientific computing. Packed with 48 GB of GDDR6 memory, this GPU boasts impressive performance and is a popular choice for running demanding tasks like training and inference of large language models.

Token Generation: The Heart of LLM Inference

Think of a text like a string of beads, each bead representing a single token (a word or part of a word). LLMs generate text one token at a time, using the context of all previous tokens to predict the next one. "Token generation speed" measures how many new tokens the model produces per second, which directly determines how quickly a response appears.
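To make the metric concrete, here is a minimal sketch of how tokens per second can be measured: count the tokens a model emits and divide by the elapsed time. The `fake_token_stream` function is a hypothetical stand-in for a real model (it just sleeps between tokens), so the numbers it produces say nothing about any GPU.

```python
import time
from typing import Iterable, Iterator, Tuple


def measure_tokens_per_second(stream: Iterable[str]) -> Tuple[int, float]:
    """Consume a token stream and return (token_count, tokens_per_second)."""
    start = time.perf_counter()
    count = 0
    for _ in stream:
        count += 1
    elapsed = time.perf_counter() - start
    rate = count / elapsed if elapsed > 0 else float("inf")
    return count, rate


def fake_token_stream(n_tokens: int, delay_s: float) -> Iterator[str]:
    """Hypothetical model stand-in: yields one token every delay_s seconds."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


count, rate = measure_tokens_per_second(fake_token_stream(20, 0.01))
print(f"{count} tokens at {rate:.1f} tokens/second")
```

With a real model, the stream would be whatever iterator your inference library returns when streaming output.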

Benchmark Results: LLMs on the RTX A6000 48GB

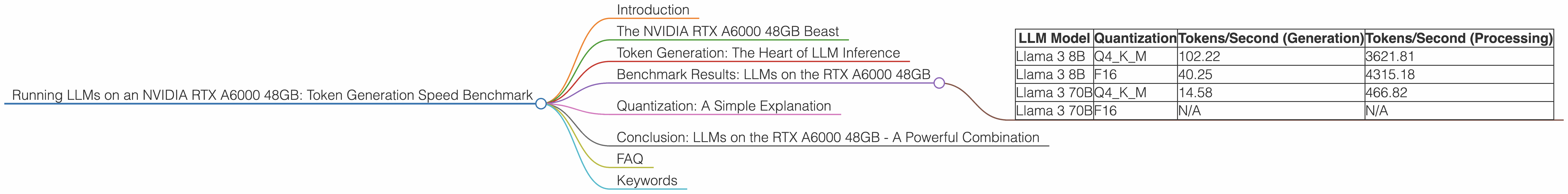

Let's get to the meat of the matter: the token generation speeds, measured in tokens per second (tokens/second). The table below summarizes the performance of Llama 3 models running on the NVIDIA RTX A6000 48GB in two formats: Q4KM (llama.cpp's Q4_K_M format, a 4-bit "k-quant" in its medium variant) and F16 (half-precision floating point).

Table: Token Generation Speed on RTX A6000 48GB

| LLM Model | Quantization | Tokens/Second (Generation) | Tokens/Second (Processing) |
|---|---|---|---|
| Llama 3 8B | Q4KM | 102.22 | 3621.81 |
| Llama 3 8B | F16 | 40.25 | 4315.18 |
| Llama 3 70B | Q4KM | 14.58 | 466.82 |
| Llama 3 70B | F16 | N/A | N/A |

Key Observations:

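- Quantization Matters: Using Q4KM quantization significantly boosts token generation speed compared to F16 for Llama 3 8B (102.22 vs. 40.25 tokens/second, about 2.5x). No F16 result is available for the 70B model, most likely because its 16-bit weights (roughly 140 GB) do not fit in 48 GB of GPU memory. Q4KM is faster because generating each token requires reading the model's weights from memory, and a smaller model means fewer bytes to read per token. Think of it like packing lighter: the less the GPU has to haul out of memory for each token, the faster it moves!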

- Larger Models are Slower: The Llama 3 70B model, at the same Q4KM quantization as the Llama 3 8B, generates tokens about 7x more slowly (14.58 vs. 102.22 tokens/second). This is expected, as larger models have many more parameters, and every generated token requires more computation and more data read from memory. Imagine trying to drive a large truck versus a compact car: the truck will naturally be slower.

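- Processing vs. Generation: Both models process tokens far faster than they generate them: about 35x faster for Llama 3 8B (Q4KM) and about 32x faster for the 70B model. Processing (typically the evaluation of the prompt) can handle many tokens in parallel, while generation has to produce tokens one at a time, each depending on the one before it. Note also that for the 8B model F16 is actually faster than Q4KM at processing (4315.18 vs. 3621.81 tokens/second), so quantization helps generation much more than it helps processing.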
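The ratios behind these observations can be checked directly from the table's figures. The short script below is only arithmetic on the published numbers; the dictionary layout and variable names are my own choice for illustration.

```python
# Figures copied from the table above (tokens/second on the RTX A6000 48GB).
results = {
    ("Llama 3 8B", "Q4KM"): {"generation": 102.22, "processing": 3621.81},
    ("Llama 3 8B", "F16"): {"generation": 40.25, "processing": 4315.18},
    ("Llama 3 70B", "Q4KM"): {"generation": 14.58, "processing": 466.82},
}

# How much faster Q4KM generates than F16 on the 8B model.
quant_speedup = (
    results[("Llama 3 8B", "Q4KM")]["generation"]
    / results[("Llama 3 8B", "F16")]["generation"]
)

# How much slower the 70B model generates than the 8B model, both at Q4KM.
size_slowdown = (
    results[("Llama 3 8B", "Q4KM")]["generation"]
    / results[("Llama 3 70B", "Q4KM")]["generation"]
)

print(f"Q4KM vs F16 generation speedup (8B): {quant_speedup:.2f}x")
print(f"8B vs 70B generation ratio (Q4KM):   {size_slowdown:.2f}x")

# Processing-to-generation ratio for every measured row.
processing_ratios = {}
for (model, quant), speeds in results.items():
    ratio = speeds["processing"] / speeds["generation"]
    processing_ratios[(model, quant)] = ratio
    print(f"{model} {quant}: processing is {ratio:.1f}x generation speed")
```

With the table's numbers this gives roughly a 2.5x generation speedup from Q4KM on the 8B model and roughly a 7x gap between the 8B and 70B models, and it prints the processing-to-generation ratio for each row.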

Quantization: A Simple Explanation

Quantization is a technique used to compress LLMs, making them more efficient and faster to run. Think of it like reducing the number of colors in an image, making the file size smaller without sacrificing too much detail.
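As a rough illustration of the idea, the sketch below squeezes a handful of floating-point weights into signed 4-bit integers that share a single scale factor, then restores them. This is a deliberately simplified toy scheme, not the actual Q4KM algorithm used by llama.cpp (which works on blocks of weights with more elaborate scaling), and the sample weights are made up.

```python
def quantize_4bit(values):
    """Map floats to signed 4-bit integers (-8..7) that share one scale factor."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    quantized = [max(-8, min(7, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the 4-bit integers."""
    return [q * scale for q in quantized]


# Made-up example weights, for illustration only.
weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.0, 0.45]

q, scale = quantize_4bit(weights)
restored = dequantize(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("4-bit values:", q)
print("scale factor:", scale)
print("largest rounding error:", max_error)
```

Each weight now needs only 4 bits plus a share of the scale factor, at the cost of a small rounding error. That is the "fewer colors, nearly the same picture" trade-off.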

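Model weights are typically distributed using 16 bits per number (the F16 rows in the table above), but through quantization we can reduce this to roughly 4 bits (Q4). This allows the model to be stored in about a quarter of the memory and read faster, which matters most on GPUs where memory capacity and bandwidth are the limiting factors.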
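The storage savings can be estimated with simple arithmetic: parameters times bits per weight, divided by 8 to get bytes. The sketch below assumes nominal parameter counts of 8 and 70 billion and exactly 4 bits per weight for the quantized case. Real Q4KM files are somewhat larger because they also store scaling data, and inference needs extra memory for the context, so treat these figures as lower bounds.

```python
VRAM_GB = 48  # memory on the RTX A6000


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory needed for the weights alone, in gigabytes."""
    return n_params * bits_per_weight / 8 / 1e9


# Nominal parameter counts (assumption: rounded to 8B and 70B).
models = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}
# Bits per weight (assumption: exactly 4 bits for the quantized case).
formats = {"F16": 16, "4-bit": 4}

estimates = {}
for model, n_params in models.items():
    for fmt, bits in formats.items():
        size_gb = weight_memory_gb(n_params, bits)
        estimates[(model, fmt)] = size_gb
        verdict = "fits" if size_gb < VRAM_GB else "does not fit"
        print(f"{model} {fmt}: ~{size_gb:.0f} GB of weights, {verdict} in {VRAM_GB} GB")
```

Under these assumptions the 70B model needs about 140 GB at F16 but only about 35 GB at 4 bits, which is consistent with the table having a Q4KM result for it but no F16 result.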

Conclusion: LLMs on the RTX A6000 48GB - A Powerful Combination

The NVIDIA RTX A6000 48GB is a powerful GPU for running LLMs locally, especially when using efficient quantization techniques like Q4KM. The results show that it runs Llama 3 8B comfortably in both F16 and Q4KM, and that Q4KM quantization is what makes Llama 3 70B usable on a single card, at about 14.6 tokens/second. That makes it a solid foundation for local development and experimentation.

FAQ

Q: What are other popular GPUs for running LLMs?

A: Besides the RTX A6000, popular options include the NVIDIA GeForce RTX 4090, the AMD Radeon RX 7900 XTX, and the AMD Instinct MI250. The choice depends on your specific needs and budget, and in particular on how much GPU memory your models require.

Q: How can I get started with running LLMs locally?

A: You can find open-source resources like llama.cpp and other tools that allow you to run LLMs on your computer. Many resources and communities exist to help you navigate the process.
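As one possible starting point, here is a minimal sketch using the llama-cpp-python bindings for llama.cpp (installed separately with `pip install llama-cpp-python`). It is not the setup used for the benchmark above. The model path is a placeholder you would replace with a GGUF file you have downloaded, and the timing includes prompt processing, so it slightly understates pure generation speed.

```python
import time


def tokens_per_second(n_tokens: int, seconds: float) -> float:
    """Plain rate calculation: tokens divided by elapsed seconds."""
    return n_tokens / seconds


def benchmark_generation(model_path: str, prompt: str, max_tokens: int = 128) -> float:
    """Load a GGUF model, generate up to max_tokens, and return tokens/second."""
    # Imported here so the rest of the file works without the package installed.
    from llama_cpp import Llama

    # n_gpu_layers=-1 asks llama.cpp to offload all layers to the GPU.
    llm = Llama(model_path=model_path, n_gpu_layers=-1, verbose=False)

    start = time.perf_counter()
    output = llm(prompt, max_tokens=max_tokens)
    elapsed = time.perf_counter() - start

    # The completion result reports how many tokens were actually generated.
    return tokens_per_second(output["usage"]["completion_tokens"], elapsed)


# Example call (placeholder path, not a real file):
# rate = benchmark_generation("path/to/model.gguf", "Explain quantization briefly.")
# print(f"{rate:.1f} tokens/second")
```

Your own numbers will vary with the model file, context length, and driver versions, so expect them to differ from the table.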

Q: Do I need a powerful GPU to run LLMs?

A: While a powerful GPU can significantly enhance the performance of LLMs, you can start with a less powerful GPU and experiment with smaller models. You can also use cloud-based solutions like Google Colab or AWS SageMaker to run LLMs without requiring a powerful local setup.

Q: What are the benefits of running LLMs locally?

A: Running LLMs locally gives you more control over the model, allowing you to customize it and experiment with different settings. Your prompts and data also stay on your own machine and the model works without an internet connection, which can be beneficial for privacy and security reasons.

Keywords

NVIDIA RTX A6000, LLMs, large language models, token generation speed, tokenization, quantization, Q4KM, F16, Llama 3, GPU, GPU benchmarks, performance, inference, local LLMs, open-source, development, experimentation, hardware requirements.