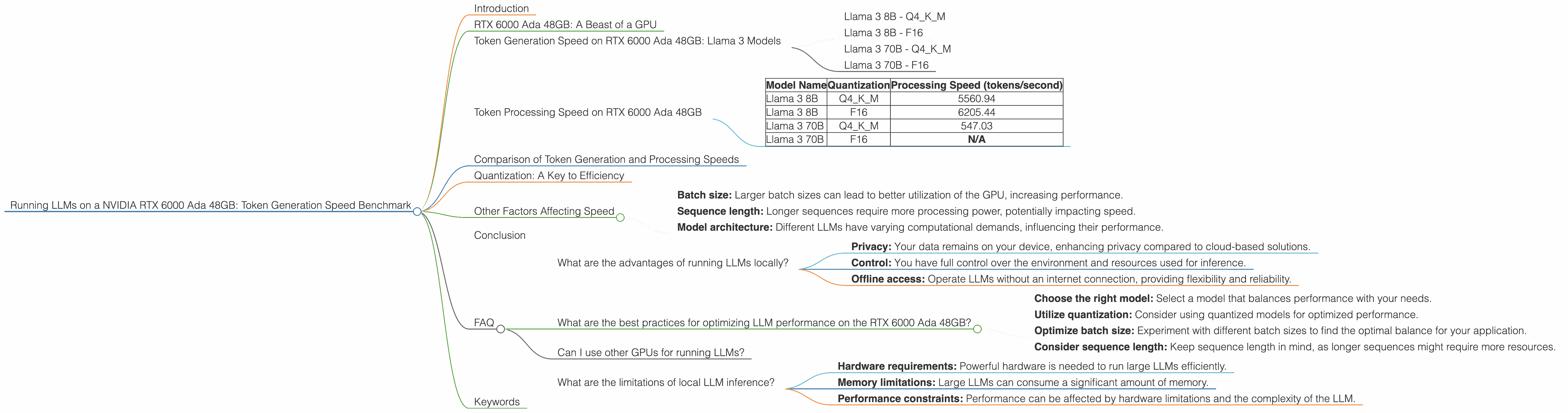

Running LLMs on an NVIDIA RTX 6000 Ada 48GB: Token Generation Speed Benchmark

Introduction

Ever wondered how fast your NVIDIA RTX 6000 Ada 48GB can churn out tokens with different Large Language Models (LLMs)? We're diving into local LLM inference on this workstation GPU to see how it handles token generation. This benchmark measures Llama 3 in two sizes (8B and 70B) and two weight formats (4-bit Q4_K_M quantization and unquantized F16) on the RTX 6000 Ada 48GB.

Imagine running powerful AI models on your own machine, without relying on cloud services. That's the promise of local LLM inference, and with 48GB of memory the RTX 6000 Ada is a strong candidate for the task. We'll examine how different LLMs perform on it, focusing on token generation speed: how quickly a model produces its output, which largely determines how responsive it feels.

RTX 6000 Ada 48GB: A Beast of a GPU

The RTX 6000 Ada 48GB is a professional workstation GPU designed for demanding tasks like AI research, machine learning, and high-performance computing. It pairs 48GB of GDDR6 memory with 18,176 CUDA cores and roughly 960 GB/s of memory bandwidth. But how does this translate to real-world LLM performance? Let's find out!

Token Generation Speed on RTX 6000 Ada 48GB: Llama 3 Models

Llama 3 8B - Q4_K_M

The Llama 3 8B model with Q4_K_M quantization (a 4-bit scheme that averages a little under 5 bits per weight) is the smallest and fastest configuration tested. On the RTX 6000 Ada 48GB, it generates 130.99 tokens per second for a single request. This serves as the baseline for the other configurations below.

Llama 3 8B - F16

With unquantized 16-bit (F16) weights, the Llama 3 8B model generates 51.97 tokens per second, about 2.5 times slower than the Q4_K_M version. The main reason is memory traffic: generating each token requires reading essentially all of the model's weights from GPU memory, and the F16 weights (about 16GB) are more than three times larger than the Q4_K_M ones. Quantization shrinks the amount of data the GPU must move per token, so generation gets faster.
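
A useful way to see why smaller weights generate faster: in single-request generation, each new token requires reading essentially all of the weights from GPU memory, so tokens per second cannot exceed memory bandwidth divided by weight size. The sketch below assumes a peak bandwidth of 960 GB/s (the published RTX 6000 Ada figure), about 8.03 billion parameters for Llama 3 8B, and roughly 4.85 bits per weight for Q4_K_M. All three are approximations, and the helper names are our own.

```python
# Rough "roofline" for single-request token generation: every new token streams
# (approximately) all weights once, so tokens/s <= bandwidth / weight bytes.
PEAK_BANDWIDTH_GBPS = 960.0  # assumed: published RTX 6000 Ada memory bandwidth
PARAMS_8B = 8.03e9           # assumed: approximate Llama 3 8B parameter count


def weight_gb(params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def max_tokens_per_s(params: float, bits_per_weight: float,
                     bandwidth_gbps: float = PEAK_BANDWIDTH_GBPS) -> float:
    """Upper bound on tokens/s if generation were purely memory-bound."""
    return bandwidth_gbps / weight_gb(params, bits_per_weight)


# (assumed bits per weight, measured tokens/s from the benchmark)
measured = {"F16": (16.0, 51.97), "Q4_K_M": (4.85, 130.99)}

for name, (bpw, tok_s) in measured.items():
    ceiling = max_tokens_per_s(PARAMS_8B, bpw)
    print(f"{name}: ~{weight_gb(PARAMS_8B, bpw):.1f} GB of weights, "
          f"ceiling ~{ceiling:.0f} tok/s, measured {tok_s} tok/s "
          f"({tok_s / ceiling:.0%} of ceiling)")
```

Under these assumptions the ceilings come out near 60 tokens/s for F16 and near 200 tokens/s for Q4_K_M. The F16 run sits close to its bandwidth limit, while Q4_K_M leaves more headroom, perhaps because dequantization and other per-token work start to matter. Treat this as a rough model, not a prediction.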

Llama 3 70B - Q4_K_M

The Llama 3 70B model with Q4_K_M quantization generates 18.36 tokens per second on the RTX 6000 Ada 48GB, about seven times slower than the 8B model in the same format. This makes sense: with almost nine times as many parameters, the 70B model has far more weight data to read for every token it generates.
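
If token generation is dominated by reading the weights, the slowdown from 8B to 70B should roughly track the growth in parameter count, since both models use the same Q4_K_M format. A quick comparison, assuming approximate parameter counts of 8.03B and 70.6B:

```python
# Assumed approximate parameter counts for the two Llama 3 sizes
PARAMS = {"8B": 8.03e9, "70B": 70.6e9}
# Measured Q4_K_M token generation speeds from the benchmark (tokens/s)
MEASURED_TOK_S = {"8B": 130.99, "70B": 18.36}

# Same quantization, so weight bytes per token scale with parameter count
param_ratio = PARAMS["70B"] / PARAMS["8B"]
# How many times slower 70B generates than 8B
speed_ratio = MEASURED_TOK_S["8B"] / MEASURED_TOK_S["70B"]

print(f"70B has {param_ratio:.1f}x the parameters and generates {speed_ratio:.1f}x slower")
```

The two ratios land at roughly 8.8 and 7.1: the same order, which is consistent with generation being dominated by weight reads. The 70B model does slightly better than proportional, possibly because fixed per-token overheads weigh more heavily on the small model; this sketch cannot confirm that.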

Llama 3 70B - F16

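Unfortunately, we do not have data for the Llama 3 70B model with 16-bit precision (F16) on the RTX 6000 Ada 48GB, so we can't compare its performance with the quantized version. This is expected: at 2 bytes per parameter, the 70B weights alone take about 140GB, far more than the card's 48GB, so the F16 model cannot fit on a single RTX 6000 Ada.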
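
Whether a configuration fits can be checked with simple arithmetic: weight size is roughly parameters × bits per weight ÷ 8. This sketch assumes approximate parameter counts, about 4.85 bits per weight for Q4_K_M, and a hypothetical 2GB reserve for the KV cache and runtime buffers. It uses decimal gigabytes for simplicity.

```python
VRAM_GB = 48.0  # RTX 6000 Ada memory capacity (decimal GB for simplicity)


def weights_gb(params: float, bits_per_weight: float) -> float:
    """Approximate weight size in GB: parameters x bits per weight / 8 bits per byte."""
    return params * bits_per_weight / 8 / 1e9


def weights_fit(params: float, bits_per_weight: float,
                vram_gb: float = VRAM_GB, reserve_gb: float = 2.0) -> bool:
    """True if the weights fit while leaving reserve_gb free (the reserve is a hypothetical default)."""
    return weights_gb(params, bits_per_weight) + reserve_gb <= vram_gb


# Assumed approximate parameter counts and bits per weight
configs = {
    "Llama 3 8B F16": (8.03e9, 16.0),
    "Llama 3 70B Q4_K_M": (70.6e9, 4.85),
    "Llama 3 70B F16": (70.6e9, 16.0),
}
for name, (params, bpw) in configs.items():
    print(f"{name}: ~{weights_gb(params, bpw):.0f} GB, fits: {weights_fit(params, bpw)}")
```

Only the F16 70B configuration fails, which matches the missing data point. Actual memory use also depends on context length and on the inference runtime.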

Token Processing Speed on RTX 6000 Ada 48GB

The table below summarizes the token processing speed (also called prompt processing speed: how fast each model reads in the input prompt) of the Llama 3 models on the RTX 6000 Ada 48GB:

| Model Name | Quantization | Processing Speed (tokens/second) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 5560.94 |
| Llama 3 8B | F16 | 6205.44 |
| Llama 3 70B | Q4_K_M | 547.03 |
| Llama 3 70B | F16 | N/A |

Comparison of Token Generation and Processing Speeds

Let's break down the difference between the two numbers. Token processing (often called prompt processing) is the model reading your input: all prompt tokens are known up front, so the GPU can push them through the network together in large, parallel batches. Token generation is the model writing its answer: each new token depends on the previous ones, so the model runs one forward pass per token, strictly in sequence. It's like the difference between reading a page (you take in many words at once) and writing one (one word at a time).

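The RTX 6000 Ada 48GB processes tokens far faster than it generates them: 5560.94 versus 130.99 tokens per second for Llama 3 8B Q4_K_M, more than 40 times faster. This is expected. Processing many tokens in parallel keeps the GPU's compute units busy, whereas generation must read all of the model's weights from memory to produce a single token, so it is limited mainly by memory bandwidth.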
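
To see how the two speeds combine for a single request, a rough latency estimate adds the time to process the prompt to the time to generate the answer one token at a time. This sketch uses the measured Llama 3 8B Q4_K_M figures and assumes both speeds stay constant regardless of prompt length, which real runs will not exactly do; the function name is hypothetical.

```python
# Measured Llama 3 8B Q4_K_M speeds on the RTX 6000 Ada 48GB (tokens/s)
PROMPT_TOK_S = 5560.94  # token (prompt) processing
GEN_TOK_S = 130.99      # token generation


def estimate_latency_s(prompt_tokens: int, output_tokens: int,
                       prompt_tok_s: float = PROMPT_TOK_S,
                       gen_tok_s: float = GEN_TOK_S) -> float:
    """Prompt processing time (parallel) plus generation time (one token per step).

    Assumes both speeds are constant, ignoring their dependence on context length.
    """
    return prompt_tokens / prompt_tok_s + output_tokens / gen_tok_s


# Example: a 2,000-token prompt followed by a 500-token answer
latency = estimate_latency_s(2000, 500)
print(f"estimated latency: {latency:.2f} s")
```

For this example the estimate is about 4.2 seconds, of which under 0.4 seconds is prompt processing, so for chat-style use the generation speed dominates how long the user waits.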

Quantization: A Key to Efficiency

Quantization is like a diet for LLMs. It shrinks the model by storing its weights with fewer bits, for example about 4 bits per weight instead of 16. A smaller model needs less memory and, because token generation is limited by how fast weights can be read, usually generates tokens faster. Think of it like reducing a high-resolution image to a smaller size for faster loading: you lose some detail, but gain speed in return.
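
To make the idea concrete, here is a minimal sketch of symmetric 4-bit block quantization: each block of weights shares one scale, and every weight is stored as an integer from -8 to 7. This is a simplified illustration, not the Q4_K_M format used in the benchmark, which uses a more elaborate block layout and keeps some tensors at higher precision.

```python
def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Symmetric 4-bit quantization: one shared scale, integers clamped to [-8, 7]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0  # largest magnitude maps to +/-7
    q = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Reconstruct approximate weights from the 4-bit integers and the scale."""
    return [scale * v for v in q]


block = [0.12, -0.53, 0.07, 0.91, -0.33, 0.0, 0.45, -0.88]
scale, q = quantize_block(block)
restored = dequantize_block(scale, q)
max_err = max(abs(a - b) for a, b in zip(block, restored))
print(f"scale={scale:.4f} q={q} max_err={max_err:.4f}")
```

Rounding keeps the reconstruction error within half the scale: that is the detail lost in exchange for storing each weight in 4 bits instead of 16.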

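As the benchmarks show, Llama 3 8B with Q4_K_M quantization generated tokens about 2.5 times faster than the F16 version (130.99 vs 51.97 tokens per second). The gain is not universal, though: for token processing the F16 8B model was slightly faster (6205.44 vs 5560.94 tokens per second), plausibly because quantized weights must be unpacked during computation. For interactive use on the RTX 6000 Ada 48GB, where generation speed matters most, quantization is still the clear winner.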

Other Factors Affecting Speed

While these benchmarks paint a picture of token generation speed on the RTX 6000 Ada 48GB, several other factors can affect performance. These include:

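- Batch size: Serving several requests at once (a larger batch) uses the GPU more fully and raises total throughput, although each individual request may not get faster.

- Sequence length: Longer prompts and outputs grow the key-value (KV) cache and the attention work done for each new token, which uses more memory and can reduce speed.

- Model architecture: Different LLMs have different sizes and layer designs, and therefore different compute and memory demands.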
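
Sequence length and batch size both show up in the key-value (KV) cache that the model keeps for every position it has seen, and that cache competes with the weights for the card's 48GB. This sketch estimates its F16 size; the layer and head counts are assumed from the published Llama 3 configurations (grouped-query attention with 8 KV heads of dimension 128), and the function name is our own.

```python
def kv_cache_bytes(seq_len: int, n_layers: int, n_kv_heads: int, head_dim: int,
                   bytes_per_elem: int = 2, batch: int = 1) -> int:
    """Keys and values (factor 2) for every layer, KV head, position, and sequence."""
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_elem * batch


# Assumed Llama 3 shapes (grouped-query attention: 8 KV heads, head_dim 128)
LLAMA3_8B = dict(n_layers=32, n_kv_heads=8, head_dim=128)
LLAMA3_70B = dict(n_layers=80, n_kv_heads=8, head_dim=128)

for name, cfg in [("8B", LLAMA3_8B), ("70B", LLAMA3_70B)]:
    gib = kv_cache_bytes(8192, **cfg) / 2**30  # 2 bytes per element = F16
    print(f"Llama 3 {name}: {gib:.2f} GiB of F16 KV cache for one 8,192-token sequence")
```

Under these assumptions an 8,192-token context costs about 1 GiB for the 8B model and 2.5 GiB for the 70B model per sequence; doubling the context length or the batch size doubles it.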

Conclusion

The NVIDIA RTX 6000 Ada 48GB proves to be a powerful platform for local LLM inference, particularly with quantized models. In these benchmarks, Llama 3 8B generated 51.97 to 130.99 tokens per second depending on the format, and the quantized 70B model still reached 18.36 tokens per second.

Quantization offers significant advantages in speed and memory efficiency. For users working with large LLMs it can be essential: at 4-bit, a 70B model fits in this GPU's 48GB, while at F16 it does not.

FAQ

What are the advantages of running LLMs locally?

Running LLMs locally offers several advantages, including:

- Privacy: Your data remains on your device, enhancing privacy compared to cloud-based solutions.

- Control: You have full control over the environment and resources used for inference.

- Offline access: Operate LLMs without an internet connection, providing flexibility and reliability.

What are the best practices for optimizing LLM performance on the RTX 6000 Ada 48GB?

Here are some tips:

- Choose the right model: Pick the smallest model that meets your quality needs; in these benchmarks the 8B model generated several times faster than the 70B one.

- Utilize quantization: Consider using quantized models for optimized performance.

- Optimize batch size: Experiment with different batch sizes to find the optimal balance for your application.

- Consider sequence length: Keep sequence length in mind, as longer sequences might require more resources.
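
Tuning batch size or sequence length means measuring rather than guessing. Below is a minimal, runtime-agnostic timing harness; `generate` is a hypothetical stand-in for whatever call your inference library exposes, assumed to return the number of tokens it produced.

```python
import statistics
import time
from typing import Callable


def tokens_per_second(generate: Callable[[int], int], n_tokens: int,
                      warmup: int = 1, repeats: int = 3) -> float:
    """Median tokens/s over `repeats` timed calls of generate(n_tokens).

    `generate` is a hypothetical callable that produces up to n_tokens tokens
    and returns how many it actually produced.
    """
    for _ in range(warmup):
        generate(n_tokens)  # untimed: absorbs one-time setup costs
    rates = []
    for _ in range(repeats):
        start = time.perf_counter()
        produced = generate(n_tokens)
        elapsed = max(time.perf_counter() - start, 1e-9)  # guard against a zero interval
        rates.append(produced / elapsed)
    return statistics.median(rates)


# Usage with a dummy "model" that takes about 10 ms per call
def fake_generate(n: int) -> int:
    time.sleep(0.01)
    return n


print(f"{tokens_per_second(fake_generate, 64):.0f} tokens/s")
```

Warm-up runs absorb one-time costs such as memory allocation, and taking the median of several runs reduces noise. Measure token processing and token generation separately, since on this GPU they differ by more than an order of magnitude.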

Can I use other GPUs for running LLMs?

Yes, you can use other GPUs for running LLMs, including consumer-grade GPUs like the NVIDIA GeForce RTX 40 series. Performance depends mainly on the GPU's memory capacity and bandwidth and on the model's size and quantization.

What are the limitations of local LLM inference?

Local LLM inference can have limitations, including:

- Hardware requirements: Powerful hardware is needed to run large LLMs efficiently.

- Memory limitations: Large LLMs can consume a significant amount of memory.

- Performance constraints: Performance can be affected by hardware limitations and the complexity of the LLM.

Keywords

LLMs, NVIDIA RTX 6000 Ada 48GB, token generation, Llama 3, quantization, GPU, inference, performance, benchmarks, local LLM, AI, deep learning.