Running LLMs on an NVIDIA RTX 5000 Ada 32GB: Token Generation Speed Benchmark

Introduction

The world of large language models (LLMs) is exploding, offering exciting possibilities for everything from creative text generation to complex code completion. But these powerful models demand considerable computational resources. For developers and researchers exploring local LLM deployment, choosing the right hardware is crucial for optimal performance.

This article focuses on the NVIDIA RTX 5000 Ada 32GB, a high-end workstation graphics card designed for demanding workloads like machine learning and AI. We'll analyze how quickly it generates tokens, the building blocks of text, with popular LLMs like Llama 3. This information is useful for developers looking to optimize their LLM setups for speed and efficiency.

Understanding Token Generation Speed

Imagine writing a book by assembling it from small pieces of text. Each piece is a "token," typically a short word or a fragment of a longer word rather than a single letter, and the faster you can put these tokens together into sentences, the faster your text is generated. This is essentially what happens with LLMs.

Token generation speed measures how many tokens per second a model produces on a given GPU, which directly affects how responsive the model feels.
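As a rough illustration of how a tokens-per-second figure can be measured, the sketch below times a token stream and divides the token count by the elapsed time. The names `measure_tokens_per_second` and `fake_token_stream` are hypothetical, and the stand-in stream only sleeps to simulate work. A real benchmark would time an actual model, and this is not necessarily how the figures in this article were collected.

```python
import time

def measure_tokens_per_second(generate_tokens, num_tokens):
    """Time a token stream and return tokens produced per second."""
    start = time.perf_counter()
    produced = 0
    for _ in generate_tokens(num_tokens):
        produced += 1
    elapsed = time.perf_counter() - start
    return produced / elapsed

def fake_token_stream(num_tokens, delay_seconds=0.001):
    """Hypothetical stand-in for a model: yields one dummy token per delay."""
    for token_id in range(num_tokens):
        time.sleep(delay_seconds)  # simulated per-token work
        yield token_id

speed = measure_tokens_per_second(fake_token_stream, 50)
print(f"Measured speed: {speed:.1f} tokens per second")
```

Swapping `fake_token_stream` for a function that streams tokens from a real model would give a comparable measurement, as long as the timer covers only the generation loop.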

Benchmarking the NVIDIA RTX 5000 Ada 32GB

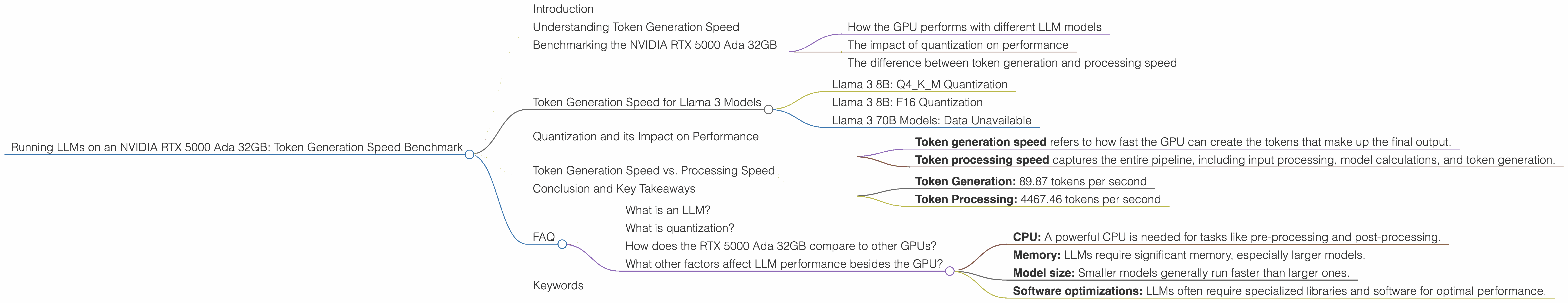

We've gathered data on the token generation speed of the NVIDIA RTX 5000 Ada 32GB for Llama 3 models. We'll analyze these results to understand:

- How the GPU performs with different LLM models

- The impact of quantization on performance

- The difference between token generation and processing speed

Token Generation Speed for Llama 3 Models

Llama 3 8B: Q4_K_M Quantization

The NVIDIA RTX 5000 Ada 32GB achieves 89.87 tokens per second with the Llama 3 8B model using Q4_K_M quantization. That is far faster than most people can read, so generated text appears almost instantly in interactive use.

Think of it like this: If you were typing a book, this GPU would be like having a lightning-fast typist hammering away, churning out words at an incredible pace.

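Llama 3 8B: F16 Precision

F16 is not a quantization level but the 16-bit floating point format, where the weights are stored at half precision without being quantized. In this format, the token generation speed drops to 32.67 tokens per second for the Llama 3 8B model. While still fast, this is a drop of roughly 64% compared to Q4_K_M.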
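To make the gap between the two figures concrete, here is a small worked calculation using the generation speeds reported above. The 500-token response length is a hypothetical example, and `seconds_to_generate` is just an illustrative helper that assumes a steady generation speed.

```python
# Generation speeds reported in this article for Llama 3 8B (tokens per second).
Q4_TPS = 89.87   # 4-bit quantized model
F16_TPS = 32.67  # 16-bit floating point model

def seconds_to_generate(num_tokens: int, tokens_per_second: float) -> float:
    """Time needed to emit num_tokens at a steady generation speed."""
    return num_tokens / tokens_per_second

speedup = Q4_TPS / F16_TPS
response_tokens = 500  # hypothetical response length

print(f"4-bit is {speedup:.2f}x faster than F16")
print(f"4-bit: {seconds_to_generate(response_tokens, Q4_TPS):.1f} s")
print(f"F16:   {seconds_to_generate(response_tokens, F16_TPS):.1f} s")
```

For a 500-token answer this works out to about 5.6 seconds with the 4-bit model versus about 15.3 seconds with F16, a ratio of roughly 2.75.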

Llama 3 70B Models: Data Unavailable

Unfortunately, we don't have data available for the Llama 3 70B model on this GPU. The most likely reason is memory: at 16 bits per weight, 70 billion parameters need about 140 GB for the weights alone, and even a 4-bit quantized version needs roughly 40 GB. Both exceed the card's 32 GB of VRAM.
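A back-of-the-envelope estimate shows why the 70B model is a poor fit for a 32 GB card. The sketch below multiplies the parameter count by the bits per weight. It uses nominal parameter counts, assumes an average of about 4.5 bits per weight for 4-bit formats (real formats vary), and ignores the extra memory needed for the context cache and activations, so actual requirements are higher.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9

VRAM_GB = 32  # RTX 5000 Ada 32GB

# Nominal parameter counts; the 4-bit bits-per-weight value is an assumed average.
models = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}
formats = {"F16": 16.0, "4-bit (assumed ~4.5 bits/weight)": 4.5}

for model_name, params in models.items():
    for format_name, bits in formats.items():
        size = weight_memory_gb(params, bits)
        verdict = "fits" if size < VRAM_GB else "does not fit"
        print(f"{model_name} {format_name}: ~{size:.1f} GB, {verdict} in {VRAM_GB} GB")
```

Under these assumptions both 8B variants fit comfortably, while the 70B model exceeds the card's memory in either format.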

Quantization and its Impact on Performance

Quantization is a technique used to reduce the size of LLMs by using fewer bits to represent each weight. While this can lead to some loss of accuracy, it reduces memory use and usually speeds up token generation, because less data has to be read from GPU memory for every token.

Q4_K_M quantization, which stores weights at roughly four to five bits each on average, is the faster of the two configurations measured on the RTX 5000 Ada 32GB: 89.87 tokens per second versus 32.67 with F16.
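The basic idea of storing values with fewer bits can be shown with a toy example. The sketch below applies simple symmetric 4-bit quantization with a single shared scale to a handful of made-up weights. This is deliberately simplified and is not the scheme used by the 4-bit format benchmarked here, which is more elaborate. The function names and sample values are hypothetical.

```python
def quantize_4bit(values):
    """Map floats to integer codes in [-7, 7] using one shared scale factor."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-7, min(7, round(v / scale))) for v in values]
    return codes, scale

def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]

weights = [0.12, -0.53, 0.98, -0.05, 0.44]  # made-up example weights
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:   ", codes)
print("restored:", [round(r, 3) for r in restored])
print(f"largest rounding error: {max_error:.3f}")
```

Each weight now needs only a small integer code plus a share of the scale factor, and the rounding error is the accuracy cost that quantization trades for the smaller size.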

Token Generation Speed vs. Processing Speed

It's important to distinguish between token generation speed and token processing speed.

- Token generation speed refers to how fast the GPU produces new output tokens. Tokens are generated one at a time, each depending on the ones before it.

- Token processing speed (often called prompt processing) refers to how fast the GPU reads the input prompt before generation starts. Prompt tokens are all known in advance, so they can be processed in parallel.

The RTX 5000 Ada 32GB exhibits significantly higher processing speed compared to its token generation speed. For example, with the Llama 3 8B model using Q4_K_M:

- Token Generation: 89.87 tokens per second

- Token Processing: 4467.46 tokens per second

This roughly 50x gap is expected rather than a flaw in the card. Processing a prompt in parallel keeps the GPU's compute units busy, while generating one token at a time is typically limited by how fast the model weights can be read from memory. For long responses, generation speed is therefore the figure that dominates how long you wait.
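The two figures can be combined into a rough response-time estimate. The sketch below assumes that the processing figure is the rate at which prompt tokens are read, that both speeds stay constant regardless of prompt length, and that there is no other overhead. The prompt and response lengths are hypothetical.

```python
# Speeds reported in this article for Llama 3 8B with 4-bit quantization.
PROMPT_TPS = 4467.46      # prompt (input) tokens processed per second
GENERATION_TPS = 89.87    # output tokens generated per second

def estimate_latency_seconds(prompt_tokens: int, output_tokens: int):
    """Return (prompt_time, generation_time, total_time) in seconds."""
    prompt_time = prompt_tokens / PROMPT_TPS
    generation_time = output_tokens / GENERATION_TPS
    return prompt_time, generation_time, prompt_time + generation_time

# Hypothetical request: a 1000-token prompt and a 200-token answer.
prompt_time, generation_time, total_time = estimate_latency_seconds(1000, 200)
print(f"prompt:     {prompt_time:.2f} s")
print(f"generation: {generation_time:.2f} s")
print(f"total:      {total_time:.2f} s")
```

Even though the prompt is five times longer than the answer in this example, reading it takes only about 0.2 seconds, while generating the answer takes about 2.2 seconds.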

Conclusion and Key Takeaways

The NVIDIA RTX 5000 Ada 32GB proves to be a capable hardware solution for running smaller LLMs like Llama 3 8B. It reaches 89.87 tokens per second with Q4_K_M quantization and 32.67 with F16. Prompt processing is far faster than generation, so output generation is the stage that determines response time, and quantization is the most direct lever for speeding it up on this card.

FAQ

What is an LLM?

An LLM, or large language model, is a type of artificial intelligence that excels at understanding and generating human-like text. Think of it like a super-powered version of your phone's auto-correct feature, capable of writing stories, translating languages, and even creating code.

What is quantization?

Quantization, in the context of LLMs, is like shrinking a model down to a smaller size by storing its weights with fewer bits. The model then needs less memory and usually generates tokens faster, at the cost of a small loss in accuracy.

Example: Imagine you have a detailed map of a city, but you only need the main roads. Quantization is like taking that detailed map and simplifying it, keeping only the essential details, making it easier to navigate.

How does the RTX 5000 Ada 32GB compare to other GPUs?

The RTX 5000 Ada 32GB is a powerful GPU designed for demanding workloads. Its performance relative to other GPUs will depend on factors like the LLM model, quantization level, and specific use case.

It's like comparing cars: Each car has its strengths and weaknesses. You wouldn't use a sports car for moving furniture, just as you wouldn't use a truck for racing.

What other factors affect LLM performance besides the GPU?

Several factors contribute to LLM performance, including:

- CPU: A powerful CPU is needed for tasks like pre-processing and post-processing.

- Memory: LLMs require significant memory, especially larger models.

- Model size: Smaller models generally run faster than larger ones.

- Software optimizations: LLMs often require specialized libraries and software for optimal performance.

Keywords

Large language models, LLMs, NVIDIA RTX 5000 Ada 32GB, GPU, token generation, token processing, quantization, Llama 3, performance benchmarking, speed, efficiency, AI, machine learning, computational resources.