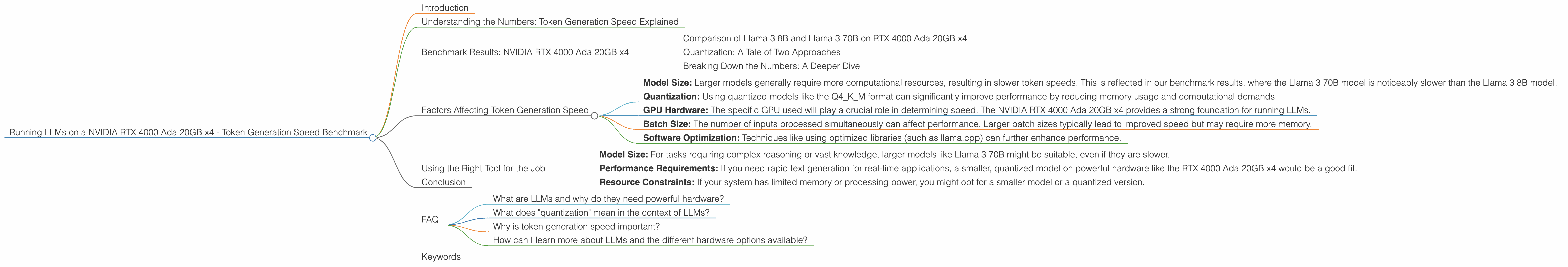

Running LLMs on an NVIDIA RTX 4000 Ada 20GB x4: Token Generation Speed Benchmark

Introduction

Running large language models (LLMs) locally on your own hardware can be a powerful and cost-effective way to unlock their potential. The NVIDIA RTX 4000 Ada 20GB x4, a setup of four Ada Lovelace cards with 80 GB of VRAM in total, is a popular choice for this purpose. In this article, we'll delve into the token generation speed of several LLMs on this specific GPU setup, providing insights into the performance you can expect.

This article is specifically for developers and geeks who are interested in exploring and running LLMs locally. We'll break down the benchmark numbers into digestible pieces, offering an understanding of the factors influencing speed, and the benefits of different model configurations. Buckle up, it's going to be a wild ride!

Understanding the Numbers: Token Generation Speed Explained

Before we dive into the benchmark results, let's define a critical term: token generation speed. In the context of LLMs, a token is a unit of text, typically representing a word, punctuation mark, or even a part of a word. Token generation speed, measured in tokens per second (tokens/s), tells us how quickly a model produces output tokens. The benchmarks below also report processing speed, which is how quickly the model reads the input text before it starts generating.

Imagine a language model like a super-powered translator: it receives text in, processes it, and produces new text out. The token generation speed measures how efficiently the model performs this translation. The higher the tokens per second, the faster the model can process and generate text.
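To make the metric concrete, here is a minimal sketch of how tokens per second is computed from a token count and a wall-clock duration. The function name and the sample numbers are hypothetical and are not taken from the benchmark.

```python
def tokens_per_second(num_tokens: int, elapsed_seconds: float) -> float:
    """Return throughput in tokens/s for a run that took elapsed_seconds."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return num_tokens / elapsed_seconds


# Hypothetical run: 100 tokens generated in 4 seconds.
rate = tokens_per_second(100, 4.0)
print(f"{rate:.2f} tokens/s")
```

The same formula applies to both generation and processing speed; only the tokens being counted (output versus input) differ.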

Benchmark Results: NVIDIA RTX 4000 Ada 20GB x4

We'll focus specifically on the performance of the NVIDIA RTX 4000 Ada 20GB x4 GPU setup. For this analysis, the benchmark numbers derived from llama.cpp and GPU Benchmarks on LLM Inference are presented in the table below. Let's break down the results:

| Model | Token Generation Speed (tokens/s) | Prompt Processing Speed (tokens/s) | Precision / Quantization |
|---|---|---|---|
| Llama 3 8B Q4_K_M | 56.14 | 3369.24 | Quantized (4-bit) |
| Llama 3 8B F16 | 20.58 | 4366.64 | Float16 |
| Llama 3 70B Q4_K_M | 7.33 | 306.44 | Quantized (4-bit) |
| Llama 3 70B F16 | N/A | N/A | Float16 |

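Note: No benchmark was available for the Llama 3 70B model at F16 precision. F16 is an unquantized 16-bit format, so at 2 bytes per parameter the 70B weights alone come to roughly 140 GB. That exceeds the 80 GB of combined VRAM on four 20 GB cards, which likely explains the missing result.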
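A rough weight-size estimate helps explain which configurations can run on this setup at all. The sketch below assumes nominal parameter counts of 8 and 70 billion and about 4.8 bits per weight for the 4-bit format. That last figure is an approximation chosen for illustration, not a number from the benchmark. It counts weights only and ignores the KV cache and other runtime overhead.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in decimal gigabytes."""
    return num_params * bits_per_weight / 8 / 1e9


TOTAL_VRAM_GB = 4 * 20   # four 20 GB cards
Q4_BITS = 4.8            # assumption: approximate bits per weight for the 4-bit format
F16_BITS = 16.0

# Nominal parameter counts (assumption: 8e9 and 70e9).
CONFIGS = {
    "Llama 3 8B Q4_K_M": (8e9, Q4_BITS),
    "Llama 3 8B F16": (8e9, F16_BITS),
    "Llama 3 70B Q4_K_M": (70e9, Q4_BITS),
    "Llama 3 70B F16": (70e9, F16_BITS),
}


def fits_in_vram(num_params: float, bits_per_weight: float,
                 vram_gb: float = TOTAL_VRAM_GB) -> bool:
    """True if the weights alone are smaller than the available VRAM."""
    return weight_memory_gb(num_params, bits_per_weight) < vram_gb


for name, (params, bits) in CONFIGS.items():
    size = weight_memory_gb(params, bits)
    verdict = "fits" if fits_in_vram(params, bits) else "does not fit"
    print(f"{name}: ~{size:.1f} GB of weights, {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions, only the 70B model at F16 exceeds the combined VRAM, which is consistent with it being the one configuration without a result.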

Comparison of Llama 3 8B and Llama 3 70B on RTX 4000 Ada 20GB x4

Looking at the table, we see a significant difference in performance between the Llama 3 8B and Llama 3 70B models. At the same 4-bit quantization, the 8B model generates 56.14 tokens/s against 7.33 tokens/s for the 70B model, roughly 7.7x faster. This is expected: the smaller model needs less computation per token and, just as importantly, has far fewer weights to read from GPU memory for every token it generates.

Quantization: A Tale of Two Approaches

The table also highlights the impact of quantization on performance. Quantization is a technique used to reduce the memory footprint of LLMs by storing their weights at lower precision. Think of it like a digital diet for your model. With 4-bit quantization (Q4_K_M), the model size is significantly reduced, which leads to faster token generation than the F16 (Float16) configuration. Processing speed does not follow the same pattern: for the 8B model, F16 processes input faster than Q4_K_M (4366.64 vs. 3369.24 tokens/s).

The Llama 3 8B model with Q4_K_M quantization achieves a substantial 2.7x boost in token generation speed over its F16 counterpart (56.14 vs. 20.58 tokens/s). This is a prime example of how quantization can be a game-changer for running large models on limited hardware.
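The ratios behind this comparison can be reproduced directly from the table. The snippet below is a small sketch using only the published 8B numbers; the dictionary layout and variable names are illustrative choices.

```python
# Llama 3 8B results from the benchmark table (tokens/s).
BENCH_8B = {
    "Q4_K_M": {"generation": 56.14, "processing": 3369.24},
    "F16": {"generation": 20.58, "processing": 4366.64},
}

q4 = BENCH_8B["Q4_K_M"]
f16 = BENCH_8B["F16"]

# Ratio > 1 means the quantized model is faster; < 1 means it is slower.
generation_ratio = q4["generation"] / f16["generation"]
processing_ratio = q4["processing"] / f16["processing"]

print(f"Generation: Q4_K_M is {generation_ratio:.2f}x the F16 speed")
print(f"Processing: Q4_K_M is {processing_ratio:.2f}x the F16 speed")
```

The generation ratio comes out at about 2.7, while the processing ratio is below 1, so the quantized model is faster at producing output but slower at reading input in this benchmark.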

Breaking Down the Numbers: A Deeper Dive

Let's explore the token generation and processing speeds in more detail:

Token Generation Speed: This metric reflects how quickly the model produces output. The Llama 3 8B Q4_K_M model shines in this area, generating a whopping 56.14 tokens per second. That is fast enough to make it a good fit for interactive applications like chatbots or text summarization.

Processing Speed: This metric indicates the speed at which the model processes input text (the prompt) before it starts generating. The Llama 3 8B Q4_K_M model also performs well here, with a processing speed of 3369.24 tokens per second, although the F16 version of the same model is faster on this metric at 4366.64 tokens per second. Either way, the 8B model can handle substantial amounts of text input efficiently.
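The two metrics can be combined into a rough end-to-end latency estimate: time to read the prompt plus time to generate the reply. This is a simplified sketch that assumes both speeds stay constant for the whole request and ignores model loading, sampling overhead, and any slowdown at long context lengths. The function name and the request sizes are hypothetical.

```python
def estimated_latency_s(prompt_tokens: int, output_tokens: int,
                        processing_tps: float, generation_tps: float) -> float:
    """Estimate request latency in seconds from the two benchmark speeds."""
    if processing_tps <= 0 or generation_tps <= 0:
        raise ValueError("speeds must be positive")
    prompt_time = prompt_tokens / processing_tps
    generation_time = output_tokens / generation_tps
    return prompt_time + generation_time


# Hypothetical request: 1000 prompt tokens, 200 output tokens,
# using the Llama 3 8B Q4_K_M speeds from the table.
latency = estimated_latency_s(1000, 200, processing_tps=3369.24, generation_tps=56.14)
print(f"Estimated latency: {latency:.2f} s")
```

For typical chat-sized requests the generation term dominates, which is why token generation speed is usually the number to watch.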

Factors Affecting Token Generation Speed

Several factors can influence the token generation speed of LLMs:

- Model Size: Larger models generally require more computational resources, resulting in slower token speeds. This is reflected in our benchmark results, where the Llama 3 70B model is noticeably slower than the Llama 3 8B model.

- Quantization: Using quantized models like the Q4_K_M format can significantly improve token generation speed by reducing memory usage, as the 8B results above show.

- GPU Hardware: The specific GPU used will play a crucial role in determining speed. The NVIDIA RTX 4000 Ada 20GB x4 provides a strong foundation for running LLMs.

- Batch Size: The number of inputs processed simultaneously can affect performance. Larger batch sizes typically lead to improved speed but may require more memory.

- Software Optimization: Techniques like using optimized libraries (such as llama.cpp) can further enhance performance.

Using the Right Tool for the Job

With a diverse range of LLMs and hardware options available, choosing the right combination for your needs is paramount. Here are some factors to consider when making a decision:

- Model Size: For tasks requiring complex reasoning or vast knowledge, larger models like Llama 3 70B might be suitable, even if they are slower.

- Performance Requirements: If you need rapid text generation for real-time applications, a smaller, quantized model on powerful hardware like the RTX 4000 Ada 20GB x4 would be a good fit.

- Resource Constraints: If your system has limited memory or processing power, you might opt for a smaller model or a quantized version.
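As one way to turn these considerations into a decision, the sketch below filters the benchmarked configurations by a minimum generation speed and then prefers the largest model that still meets it. The selection policy, the type, and the function name are hypothetical and only illustrate the trade-off; the speeds are the ones from the table.

```python
from typing import NamedTuple, Optional


class Config(NamedTuple):
    name: str
    params_billions: int
    generation_tps: float


# Configurations with a published result in the benchmark table.
CONFIGS = [
    Config("Llama 3 8B Q4_K_M", 8, 56.14),
    Config("Llama 3 8B F16", 8, 20.58),
    Config("Llama 3 70B Q4_K_M", 70, 7.33),
]


def pick_config(min_generation_tps: float) -> Optional[Config]:
    """Pick the largest model meeting the speed floor; break ties by speed."""
    candidates = [c for c in CONFIGS if c.generation_tps >= min_generation_tps]
    if not candidates:
        return None
    return max(candidates, key=lambda c: (c.params_billions, c.generation_tps))


for floor in (5.0, 10.0, 100.0):
    choice = pick_config(floor)
    label = choice.name if choice else "no benchmarked configuration"
    print(f">= {floor} tokens/s: {label}")
```

A different policy, for example preferring F16 over a quantized model of the same size when both are fast enough, would only require changing the sort key.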

Conclusion

Running LLMs locally on the NVIDIA RTX 4000 Ada 20GB x4 can be a powerful option for developers and geeks looking to explore their capabilities. We've seen how different model sizes and quantization schemes can significantly influence token generation speeds, and how the RTX 4000 Ada 20GB x4 provides a strong foundation for running these models. Remember, choosing the right model for your specific application is crucial to achieving optimal performance.

FAQ

What are LLMs and why do they need powerful hardware?

LLMs, or large language models, are incredibly complex AI systems designed to understand and generate human-like text. They are trained on massive datasets, making them capable of performing diverse language tasks. This complexity requires significant computational power, which is why powerful GPUs like the RTX 4000 Ada 20GB x4 are needed to run these models efficiently.

What does "quantization" mean in the context of LLMs?

Quantization is a technique used to reduce the size of LLM models, making them more efficient and less demanding on hardware resources. It's kind of like compressing a large file to make it easier to download and use. By converting the model's parameters to smaller data types, we can significantly reduce memory requirements and increase performance.

Why is token generation speed important?

Token generation speed is crucial for the real-world application of LLMs. In tasks like chatbots, summarization, or translation, the faster a model can generate text, the more responsive and user-friendly the experience will be.

How can I learn more about LLMs and the different hardware options available?

There are many resources available online for diving deeper into LLMs and their hardware requirements. You can start by exploring websites and forums dedicated to AI, machine learning, and large language models. You can also find excellent courses and tutorials offered by platforms like Coursera, Udacity, and edX.

Keywords

LLMs, large language models, NVIDIA RTX 4000 Ada 20GB x4, token generation speed, quantization, performance, inference, 8-bit, 4-bit, F16, GPU, llama.cpp, GPU Benchmarks on LLM Inference, model size, processing speed, hardware, memory, resources.