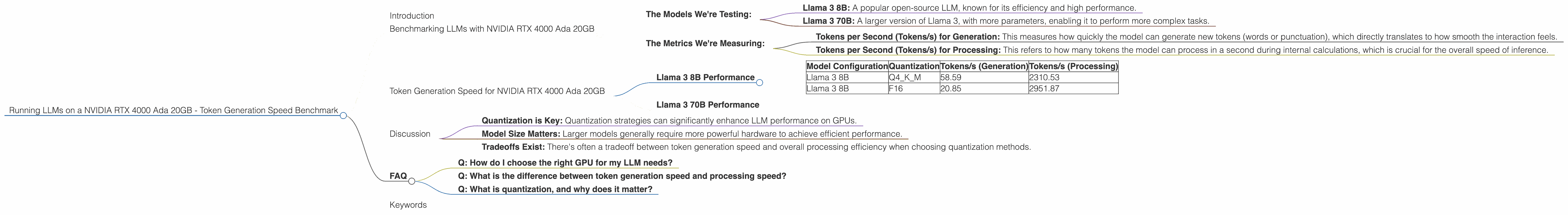

Running LLMs on an NVIDIA RTX 4000 Ada 20GB: Token Generation Speed Benchmark

Introduction

The world of large language models (LLMs) is buzzing. These AI-powered models can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. You might have interacted with LLMs already on platforms like ChatGPT, Bard, and others. But what if you want to run these powerful models on your own computer?

This article looks at how several popular LLMs perform on one specific GPU, the NVIDIA RTX 4000 Ada 20GB. We'll focus on token generation speed – how quickly the model produces output tokens (roughly, pieces of words), which directly affects how smooth the interaction feels.

Benchmarking LLMs with NVIDIA RTX 4000 Ada 20GB

Our focus is the NVIDIA RTX 4000 Ada 20GB, a professional workstation card with 20 GB of VRAM. We'll assess the performance of Llama 3 models at different sizes and with different quantization methods. Quantization shrinks a model by storing its weights with fewer bits, which makes it cheaper to store and often faster to run.

The Models We're Testing:

- Llama 3 8B: Meta's 8-billion-parameter open-weight model, popular for its strong performance relative to its size.

- Llama 3 70B: The 70-billion-parameter version of Llama 3, which can handle more complex tasks but needs far more memory.

The Metrics We're Measuring:

- Tokens per Second (Tokens/s) for Generation: How many new tokens the model produces per second. Tokens are the units a model reads and writes: whole words, pieces of words, or punctuation. This rate determines how quickly the response appears and how smooth the interaction feels.

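- Tokens per Second (Tokens/s) for Processing: How many input tokens the model ingests per second while reading the prompt (often called prompt processing or prefill), before it produces the first output token. This rate determines how long you wait before the response starts, which matters most for long prompts.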

Token Generation Speed for NVIDIA RTX 4000 Ada 20GB

Llama 3 8B Performance

The table below shows the benchmark results for Llama 3 8B:

| Model | Quantization | Tokens/s (Generation) | Tokens/s (Processing) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 58.59 | 2310.53 |
| Llama 3 8B | F16 | 20.85 | 2951.87 |

Observations:

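Quantization Impact: Quantization has a large effect on performance. Q4_K_M, a mostly 4-bit "k-quant" format from llama.cpp (the "M" marks the medium variant), generates tokens about 2.8× faster than F16 (16-bit half-precision floating point): 58.59 vs. 20.85 tokens/s. F16, on the other hand, processes prompts about 28% faster (2951.87 vs. 2310.53 tokens/s). A likely explanation is that token generation is limited mainly by memory bandwidth, so smaller 4-bit weights can be read faster, while prompt processing is limited mainly by compute, where F16 avoids the extra work of dequantizing weights. The choice of quantization is therefore a trade-off between generation speed and prompt processing speed.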
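One way to see why 4-bit weights speed up generation: if producing each token requires reading every weight from VRAM once, generation speed cannot exceed memory bandwidth divided by the size of the weights. The sketch below is a back-of-envelope check under stated assumptions, none of which come from the benchmark itself: about 360 GB/s of memory bandwidth (NVIDIA's published figure for this card), about 8.03 billion parameters for Llama 3 8B, and roughly 4.9 bits per weight on average for Q4_K_M.

```python
BANDWIDTH_GB_PER_S = 360.0  # assumed: NVIDIA's published bandwidth for the RTX 4000 Ada
PARAMS = 8.03e9             # assumed: approximate Llama 3 8B parameter count


def weight_size_gb(params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def max_tokens_per_s(bandwidth_gb_s: float, size_gb: float) -> float:
    """Ceiling on generation speed if each token streams all weights from VRAM once."""
    return bandwidth_gb_s / size_gb


# (format, assumed average bits per weight, measured generation tok/s from the table)
CONFIGS = [("Q4_K_M", 4.9, 58.59), ("F16", 16.0, 20.85)]

for name, bits, measured in CONFIGS:
    size = weight_size_gb(PARAMS, bits)
    ceiling = max_tokens_per_s(BANDWIDTH_GB_PER_S, size)
    print(f"{name}: ~{size:.1f} GB of weights, ceiling ~{ceiling:.0f} tok/s, measured {measured} tok/s")
```

Under these assumptions the ceiling is about 73 tok/s for Q4_K_M and about 22 tok/s for F16. The measured F16 speed sits close to its ceiling, which is consistent with generation being bandwidth-bound; Q4_K_M lands further below its ceiling, plausibly because dequantization adds some compute.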

Token Generation Speed: At about 59 tokens/s with Q4_K_M, the RTX 4000 Ada 20GB generates Llama 3 8B output far faster than most people read, so interaction with a model of this size feels smooth. Even F16, at about 21 tokens/s, still outpaces typical reading speed.
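To see how the two metrics combine in practice, here is a rough estimate of end-to-end response time using the two measured rates in the table above. The prompt and reply lengths (1,000 and 500 tokens) are hypothetical values chosen only for illustration, and the estimate ignores sampling overhead and any other latency.

```python
# Measured Llama 3 8B rates on the RTX 4000 Ada 20GB:
# (prompt processing tok/s, generation tok/s)
RATES = {
    "Q4_K_M": (2310.53, 58.59),
    "F16": (2951.87, 20.85),
}


def response_time_s(prompt_tokens: int, output_tokens: int,
                    pp_rate: float, tg_rate: float) -> float:
    """Seconds to ingest the prompt plus seconds to generate the reply."""
    return prompt_tokens / pp_rate + output_tokens / tg_rate


# Hypothetical workload: a 1,000-token prompt and a 500-token reply.
for quant, (pp, tg) in RATES.items():
    t = response_time_s(prompt_tokens=1000, output_tokens=500, pp_rate=pp, tg_rate=tg)
    print(f"{quant}: ~{t:.1f} s for a 1,000-token prompt and a 500-token reply")
```

This prints about 9.0 s for Q4_K_M and about 24.3 s for F16. For a chat-style workload like this, generation time dominates, so F16's faster prompt processing saves only about 0.1 s while its slower generation costs about 15 s.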

Llama 3 70B Performance

Unfortunately, we have no benchmark data for Llama 3 70B on the RTX 4000 Ada 20GB. The most likely reason is memory: a 70-billion-parameter model's weights do not fit in 20 GB of VRAM even with 4-bit quantization, so the model cannot run entirely on this GPU.

To provide some context: It's like trying to fit a large elephant into a small car – it's simply not going to work! Larger models require considerable processing power and memory, often pushing even powerful desktop GPUs to their limits.
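A rough calculation shows how far the 70B model exceeds this card's memory. The sketch estimates only the memory needed for the weights (parameters × bits per weight ÷ 8) and assumes about 8.03 billion and 70.6 billion parameters for the two Llama 3 models and roughly 4.9 bits per weight for Q4_K_M; the KV cache and runtime buffers need additional memory on top of this.

```python
VRAM_GB = 20.0  # memory on the RTX 4000 Ada 20GB


def weight_size_gb(params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


# Assumed parameter counts and average bits per weight (not from the benchmark).
MODELS = [("Llama 3 8B", 8.03e9), ("Llama 3 70B", 70.6e9)]
QUANTS = [("Q4_K_M", 4.9), ("F16", 16.0)]

for model, params in MODELS:
    for quant, bits in QUANTS:
        size = weight_size_gb(params, bits)
        # Weights only: a model that "fits" here still needs headroom for the KV cache.
        verdict = "fits" if size < VRAM_GB else "does not fit"
        print(f"{model} {quant}: ~{size:.0f} GB of weights -> {verdict} in {VRAM_GB:.0f} GB")
```

With these assumptions, Llama 3 70B needs about 43 GB even at Q4_K_M and about 141 GB at F16, while Llama 3 8B at F16 (about 16 GB) fits with only a few gigabytes to spare. Runtimes such as llama.cpp can keep some layers in system RAM instead of VRAM, but generation then becomes much slower.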

Discussion

These benchmarks offer a glimpse into the potential of the NVIDIA RTX 4000 Ada 20GB for running LLMs. Although it is a mid-range workstation GPU, it handles smaller LLMs like Llama 3 8B well, especially with the right quantization. For larger models such as Llama 3 70B, its 20 GB of VRAM becomes the limiting factor, and you need hardware with more memory.

Key Takeaways:

- Quantization is Key: On this card, Q4_K_M nearly tripled Llama 3 8B's generation speed compared with F16.

- Model Size Matters: Larger models generally require more powerful hardware to achieve efficient performance.

- Trade-offs Exist: Q4_K_M generates faster, while F16 processes prompts faster, so the best choice depends on whether your workload is dominated by long prompts or long outputs.

FAQ

Q: How do I choose the right GPU for my LLM needs?

A: The best GPU for you depends on several factors, including the size of the LLM you want to run, the performance you need, and your budget. For smaller models such as Llama 3 8B, a mid-range GPU like the RTX 4000 Ada 20GB can work well. If you plan to run 70B-parameter or larger models, you'll likely need a GPU with much more VRAM, multiple GPUs, or a specialized AI accelerator.

Q: What is the difference between token generation speed and processing speed?

A: Token generation speed is how quickly the LLM produces output text; it's the speed at which you see the response appear. Processing speed is how quickly the model reads the input prompt before it generates the first output token. Because the whole prompt can be processed in parallel, this rate is usually much higher than the generation rate. Both matter: processing speed sets the wait before the response begins, and generation speed sets how fast it streams out.

Q: What is quantization, and why does it matter?

A: Quantization reduces the size of a neural network by storing its weights with fewer bits, for example 4-bit integers instead of 16-bit floating-point numbers. This shrinks the memory footprint and can speed up inference, making it possible to run larger models on less powerful hardware, usually at the cost of a small loss in accuracy.
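To make the idea concrete, here is a minimal sketch of block-wise 4-bit quantization. It is a simplified illustration, not the actual Q4_K_M format (which uses super-blocks, quantized scales and minimums, and keeps some tensors at higher precision): each block of 32 weights (an assumed block size) is stored as 4-bit integers plus one shared scale factor.

```python
import math
from typing import List, Tuple

BLOCK_SIZE = 32   # assumed block size, for illustration only
SCALE_BITS = 16   # assume each block's scale is stored as a 16-bit float


def quantize_block(weights: List[float]) -> Tuple[float, List[int]]:
    """Map floats to signed 4-bit integers in [-8, 7] that share one scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    # Clamp to the 4-bit range; with this scale, values stay within [-7, 7].
    q = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale: float, q: List[int]) -> List[float]:
    """Recover approximate floats from the 4-bit integers and the block scale."""
    return [scale * v for v in q]


def quantize(weights: List[float]) -> List[Tuple[float, List[int]]]:
    """Split the weights into blocks and quantize each block independently."""
    return [quantize_block(weights[i:i + BLOCK_SIZE])
            for i in range(0, len(weights), BLOCK_SIZE)]


# Toy "weights": 64 small values, i.e. two blocks of 32.
weights = [0.05 * math.sin(0.37 * i) for i in range(64)]
blocks = quantize(weights)
restored = [x for scale, q in blocks for x in dequantize_block(scale, q)]

max_err = max(abs(a - b) for a, b in zip(weights, restored))
bits_per_weight = (BLOCK_SIZE * 4 + SCALE_BITS) / BLOCK_SIZE

print(f"blocks: {len(blocks)}, bits per weight: {bits_per_weight}, max abs error: {max_err:.5f}")
```

Assuming the scale is stored as a 16-bit float, each weight costs 4.5 bits instead of 16, roughly a 3.6× reduction, at the price of a rounding error of at most half the block's scale per weight.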

Keywords

LLM, Large Language Model, Token Generation Speed, NVIDIA RTX 4000 Ada 20GB, GPU Benchmark, Llama 3, Quantization, Q4_K_M, F16, Model Inference, AI, Machine Learning, NLP, Natural Language Processing, Deep Learning, Tokens per Second, Performance, Efficiency.