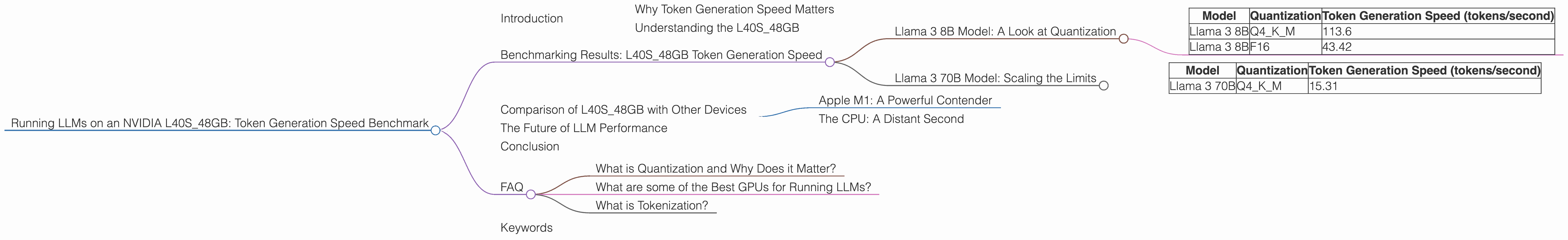

Running LLMs on an NVIDIA L40S 48GB: Token Generation Speed Benchmark

Introduction

Large Language Models (LLMs) are revolutionizing how we interact with computers. These powerful AI systems can generate creative text, translate languages, and answer questions informatively. But running LLMs locally is resource-intensive and requires powerful hardware to handle their complex computations.

This article dives deep into the performance of LLMs on an NVIDIA L40S_48GB GPU, a popular choice for developers and researchers working with these models. We'll benchmark token generation speed for different models from the Llama family to see how this GPU handles the demands of running these AI giants.

Why Token Generation Speed Matters

Token generation is the core of how LLMs function. These models break text down into individual units called tokens and produce their output one token at a time. The faster a GPU can generate tokens, the sooner the LLM finishes its response, whether it's writing creative text or answering a question.

Understanding the L40S_48GB

The NVIDIA L40S_48GB is a powerhouse of a GPU designed for demanding workloads like machine learning and AI. It offers 48GB of GDDR6 memory, enough to hold large models, especially quantized ones, entirely on the card. Its powerful architecture and substantial memory make it an excellent choice for running LLMs locally.

Benchmarking Results: L40S_48GB Token Generation Speed

Here's a breakdown of our benchmark results, measuring tokens per second (tokens/second) for different Llama models on the L40S_48GB.

Llama 3 8B Model: A Look at Quantization

Let's start with the Llama 3 8B (8 billion parameters) model, a popular choice due to its balance of performance and size. We tested it with two quantization schemes:

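- Q4_K_M: Quantization is a technique that reduces the size of a model while preserving most of its accuracy. Q4_K_M is a 4-bit scheme from the llama.cpp family of "K-quants": weights are stored in roughly 4 bits each, and the M marks the "medium" variant, which keeps some tensors at higher precision. Think of it like reducing the number of colors in a picture: the file gets smaller, at the cost of some visual detail.

- F16: This is a 16-bit floating point format that stores the weights without quantization. It preserves more accuracy than Q4_K_M, but it takes more than three times the memory and is slower at generating tokens.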

| Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 113.6 |
| Llama 3 8B | F16 | 43.42 |
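
A rough back-of-the-envelope estimate shows why the two formats differ so much in memory footprint. The sketch below is illustrative only: it assumes nominal parameter counts (8 billion and 70 billion), 16 bits per weight for F16, and an average of about 4.85 bits per weight for Q4_K_M (an approximate figure for this kind of 4-bit scheme, not something measured in this benchmark). The function name is ours, and the estimate counts weights only, ignoring the KV cache and runtime overhead.

```python
def weight_size_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in GB (1 GB = 10**9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


# Assumed values, not measurements from this benchmark.
F16_BITS = 16.0
Q4_K_M_BITS = 4.85  # approximate average for Q4_K_M; varies by model
GPU_MEMORY_GB = 48.0

for name, params in [("Llama 3 8B", 8e9), ("Llama 3 70B", 70e9)]:
    for fmt, bits in [("F16", F16_BITS), ("Q4_K_M", Q4_K_M_BITS)]:
        size = weight_size_gb(params, bits)
        fits = "fits" if size < GPU_MEMORY_GB else "does not fit"
        print(f"{name} {fmt}: ~{size:.1f} GB of weights, {fits} in {GPU_MEMORY_GB:.0f} GB")
```

Under these assumptions the 8B model needs about 16 GB at F16 and about 5 GB at Q4_K_M, while the 70B model needs about 140 GB at F16 and about 42 GB at Q4_K_M, so only the quantized 70B model would fit on a single 48GB card.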

Key Takeaways:

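- Quantization Advantages: The L40S_48GB shows a clear speed advantage with Q4_K_M, delivering about 2.6 times the token generation speed of the F16 format (113.6 vs. 43.42 tokens/second). This is directly related to the lower memory footprint of the quantized model: with fewer bytes to read from memory for each generated token, the GPU spends less time waiting on memory.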

- Processing Speed: The quantized model also shines in prompt processing speed, that is, how quickly it reads the input before generating, with an impressive result of 5908.52 tokens/second.
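
To put the two rates in perspective, here is a minimal sketch that estimates the latency of a single request. It assumes that "processing speed" refers to reading the input prompt, that both rates stay constant regardless of context length, and that the request sizes (1,000 prompt tokens, 200 output tokens) are purely hypothetical. The function name is ours.

```python
def request_latency_seconds(prompt_tokens: int, output_tokens: int,
                            processing_tps: float, generation_tps: float) -> float:
    """Estimated time to read a prompt and then generate a reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Rates reported in this article for Llama 3 8B Q4_K_M on the L40S_48GB.
PROCESSING_TPS = 5908.52
GENERATION_TPS = 113.6

# Hypothetical request: 1,000 prompt tokens in, 200 tokens out.
latency = request_latency_seconds(1000, 200, PROCESSING_TPS, GENERATION_TPS)
generation_share = (200 / GENERATION_TPS) / latency
print(f"Estimated latency: {latency:.2f} s ({generation_share:.0%} spent generating)")

# Speedup of Q4_K_M over F16 for generation, from the table above.
print(f"Q4_K_M vs F16 generation speedup: {113.6 / 43.42:.2f}x")
```

For this hypothetical request the estimate comes to a little under two seconds, with roughly nine tenths of that time spent generating, which is why token generation speed is the number that matters most for interactive use.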

Llama 3 70B Model: Scaling the Limits

Next, we explore the larger Llama 3 70B (70 billion parameters) model, a heavyweight champion of LLMs. We tested it with only the Q4_K_M quantization scheme.

| Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama 3 70B | Q4_K_M | 15.31 |

Key Takeaways:

- Size Matters: The larger Llama 3 70B model runs significantly slower than the 8B model, even with quantization: 15.31 tokens/second versus 113.6, or roughly 7.4 times slower. This is due to its larger size and increased computational demands. A rule of thumb is that the larger the model, the slower the processing.

- Processing Speed: The 70B model's prompt processing speed is still respectable at 649.08 tokens/second.
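
The "larger is slower" rule of thumb can be made a bit more concrete with a simple memory-bandwidth estimate. The sketch below is a rough model, not part of the benchmark: it assumes that generating one token requires reading every weight once, that the L40S offers 864 GB/s of memory bandwidth (the figure in NVIDIA's published specifications), and that Q4_K_M averages about 4.85 bits per weight. All names are ours.

```python
# Assumptions, not measurements from this benchmark:
MEMORY_BANDWIDTH_GB_PER_S = 864.0  # L40S memory bandwidth from NVIDIA's published specs
Q4_K_M_BITS = 4.85                 # approximate average bits per weight
F16_BITS = 16.0


def bandwidth_bound_tps(num_params: float, bits_per_weight: float,
                        bandwidth_gb_per_s: float = MEMORY_BANDWIDTH_GB_PER_S) -> float:
    """Upper bound on tokens/second if every weight is read once per token."""
    weight_gb = num_params * bits_per_weight / 8 / 1e9
    return bandwidth_gb_per_s / weight_gb


# (label, nominal parameter count, bits per weight, measured tokens/second from the tables)
RESULTS = [
    ("Llama 3 8B F16", 8e9, F16_BITS, 43.42),
    ("Llama 3 8B Q4_K_M", 8e9, Q4_K_M_BITS, 113.6),
    ("Llama 3 70B Q4_K_M", 70e9, Q4_K_M_BITS, 15.31),
]

for label, params, bits, measured in RESULTS:
    bound = bandwidth_bound_tps(params, bits)
    print(f"{label}: bound ~{bound:.1f} tok/s, "
          f"measured {measured} ({measured / bound:.0%} of bound)")
```

Under these assumptions the measured rates land at roughly 60% to 80% of the simple bound, which is consistent with token generation being limited mainly by how fast the weights can be read from memory.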

Comparison of L40S_48GB with Other Devices

The NVIDIA L40S_48GB stands out as a strong performer for running LLMs locally. Here is how it compares with other common options.

Apple M1: A Powerful Contender

Apple's M1 chip, while impressive in its own right, falls short of the L40S_48GB in terms of raw performance for LLMs. When running Llama 3 8B models, the L40S_48GB delivers significantly faster token generation than the M1.

The CPU: A Distant Second

While CPUs are adequate for basic tasks, they fall far behind GPUs in terms of LLM performance. The L40S_48GB outperforms CPUs by leaps and bounds, making it the preferred choice for anyone serious about running LLMs locally.

The Future of LLM Performance

The field of LLM performance is constantly evolving, with new breakthroughs and advancements happening all the time. Ongoing research into hardware and software optimization promises to further enhance the speed and efficiency of running LLMs, making them even more accessible for developers and researchers.

Conclusion

The NVIDIA L40S_48GB is a powerful GPU that excels at running LLMs locally. Its substantial memory and impressive architecture allow it to handle the complex computations required by these AI giants. The benchmark results clearly demonstrate the L40S_48GB's ability to deliver fast token generation speeds across various model sizes, making it a top contender for developers and researchers working with LLMs.

FAQ

What is Quantization and Why Does it Matter?

Quantization is a technique used to reduce the size of large language models, allowing them to run faster and more efficiently on hardware with limited memory. It involves representing the model's weights and activations using fewer bits, which results in a smaller model size.

Think of it like converting a high-resolution image to a lower-resolution image. You lose some detail, but the image is smaller and takes up less space. The same principle applies to LLMs. Quantization leads to smaller model sizes and faster performance, but it might come at the cost of some accuracy.
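
To make the trade-off tangible, here is a toy example of 4-bit quantization. It is a deliberately simplified symmetric scheme (one scale per block of weights), not the actual Q4_K_M format, and the weight values are made up for illustration.

```python
def quantize_block(values, bits=4):
    """Symmetric quantization of one block: a single float scale plus small integers."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit codes in the range [-7, 7]
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate float values from the scale and integer codes."""
    return [scale * c for c in codes]


# Made-up weights for illustration only.
weights = [0.12, -0.53, 0.31, 0.07, -0.26, 0.44, -0.09, 0.02]
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:   ", codes)
print("restored:", [round(r, 3) for r in restored])
print(f"largest rounding error: {max_error:.4f} (at most scale/2 = {scale / 2:.4f})")
```

Each weight now needs only 4 bits plus a share of the block's scale, instead of 16 bits, and the price is a small rounding error per weight. Real schemes refine this idea to keep that error low.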

What are some of the Best GPUs for Running LLMs?

The NVIDIA L40S_48GB is a top-tier choice for running LLMs locally, thanks to its impressive memory and powerful architecture. However, other popular GPUs like the NVIDIA A100 and H100 are also capable of handling these demanding tasks. The best choice depends on your specific needs and budget.

What is Tokenization?

Tokenization is the process of breaking down text into smaller units called tokens. These tokens represent individual words, punctuation marks, or even parts of words. LLMs rely on tokenization to process text efficiently, as it allows them to break down complex language into manageable chunks.
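
As a rough illustration, the toy tokenizer below splits text into words and punctuation marks with a regular expression. This is only a sketch of the idea: real LLM tokenizers, including the ones used by Llama models, rely on a subword vocabulary learned from data, so their token counts will differ from this example.

```python
import re

# Words (runs of letters, digits, underscores) or single punctuation characters.
TOKEN_PATTERN = re.compile(r"\w+|[^\w\s]")


def toy_tokenize(text: str) -> list[str]:
    """Split text into word and punctuation tokens (illustrative only)."""
    return TOKEN_PATTERN.findall(text)


tokens = toy_tokenize("LLMs aren't magic!")
print(tokens)
print(f"{len(tokens)} tokens")
```

Benchmarks like the one in this article count tokens rather than words, so a figure such as 113.6 tokens/second translates to somewhat fewer words per second.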

Keywords

Large Language Models, LLMs, NVIDIA L40S_48GB, GPU, Token Generation, Benchmark, Llama, Llama 3, Quantization, F16, Q4_K_M, Memory, Performance, Speed, Processing Speed, Apple M1, CPU, AI, Machine Learning.