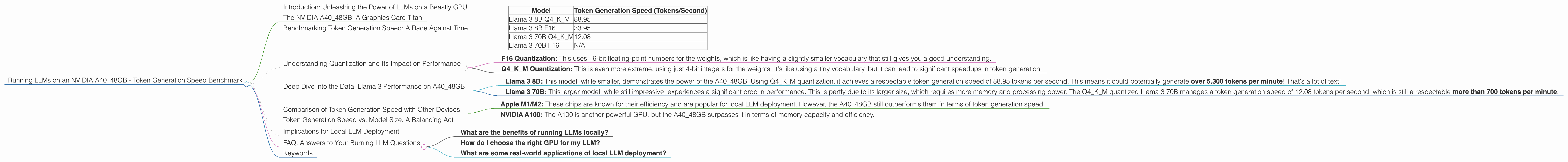

Running LLMs on an NVIDIA A40 48GB: Token Generation Speed Benchmark

Introduction: Unleashing the Power of LLMs on a Beastly GPU

Imagine a world where you can run cutting-edge large language models (LLMs) on your own machine, without relying on cloud services. It's no longer a distant dream! Today, we're diving into the world of local LLM deployment, specifically exploring the performance of the NVIDIA A40 48GB GPU.

This article is your guide to understanding how this powerful hardware handles token generation for popular LLMs like Llama 3. We'll break down the numbers, explore different quantization techniques, and discuss the implications for your projects. So buckle up, and let's get this data party started!

The NVIDIA A40 48GB: A Graphics Card Titan

The NVIDIA A40 48GB is a data-center GPU designed for demanding workloads like AI training and inference. Its 48GB of GDDR6 memory lets it hold large models, while its 10,752 CUDA cores supply plenty of processing power. We're using this beast to see how it handles the task of generating tokens, the building blocks of text.

Imagine tokens as words in the language of your chosen AI. Each token represents a part of the text – a word, punctuation mark, or even a sub-word. The faster your GPU can generate tokens, the quicker your LLM can process information and provide responses.
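To make "tokens per second" concrete, here is a minimal sketch of how such a figure can be measured: count the tokens a generator yields and divide by the elapsed wall-clock time. The `fake_generate` function is a hypothetical stand-in for a real model, and this is not necessarily how the numbers in this article were collected.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Time one generation run and return generated tokens per second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())  # consume the stream, counting tokens
    elapsed = time.perf_counter() - start
    return count / elapsed


def fake_generate(n_tokens: int = 50, delay_s: float = 0.002):
    """Hypothetical stand-in for a model: yields one token every delay_s seconds."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


rate = tokens_per_second(fake_generate)
print(f"{rate:.1f} tokens/second")
```

With a real model you would swap `fake_generate` for a function that streams tokens from your inference library, and average over several runs.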

Benchmarking Token Generation Speed: A Race Against Time

We're focusing on the speed at which the A40 48GB GPU can generate tokens for different LLMs. These numbers are crucial for understanding the practical implications of running these models locally.

Here's the breakdown of the models and their respective token generation speeds:

| Model | Token Generation Speed (tokens/second) |
|---|---|
| Llama 3 8B Q4_K_M | 88.95 |
| Llama 3 8B F16 | 33.95 |
| Llama 3 70B Q4_K_M | 12.08 |
| Llama 3 70B F16 | N/A |

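Note: We couldn't find data for Llama 3 70B F16, which is a bit of a bummer! It's also not surprising, since the F16 weights of a 70B model are far larger than the card's 48GB of memory.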
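A quick back-of-the-envelope check helps explain the N/A row. The sketch below estimates how much memory the weights alone need at a given precision. The nominal parameter counts (8 and 70 billion) and the average of 4.85 bits per weight for Q4_K_M are assumptions for illustration, and the estimate ignores the KV cache and activations.

```python
def weight_memory_gb(n_params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes).

    Ignores KV cache, activations, and runtime overhead.
    """
    return n_params_billion * bits_per_weight / 8


VRAM_GB = 48         # A40 memory capacity
Q4_K_M_BITS = 4.85   # assumed average bits per weight; varies by model

for name, params_b, bits in [
    ("Llama 3 8B Q4_K_M", 8, Q4_K_M_BITS),
    ("Llama 3 8B F16", 8, 16),
    ("Llama 3 70B Q4_K_M", 70, Q4_K_M_BITS),
    ("Llama 3 70B F16", 70, 16),
]:
    size = weight_memory_gb(params_b, bits)
    verdict = "fits" if size < VRAM_GB else "does not fit"
    print(f"{name}: ~{size:.1f} GB of weights, {verdict} in {VRAM_GB} GB")
```

Under these assumptions, only the 70B F16 configuration exceeds the card's memory, at roughly 140 GB of weights.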

Understanding Quantization and Its Impact on Performance

Quantization is like giving your AI a bit of a diet. It reduces the size of the model by representing its weights (the numbers that determine its behavior) using fewer bits. Think of it like using a simpler vocabulary to describe a complicated concept.

- F16: Strictly speaking, this isn't quantization at all. The weights are stored as 16-bit floating-point numbers, so this is the full-vocabulary baseline in our comparison.

- Q4_K_M: This is far more aggressive, storing the weights at roughly 4 to 5 bits each (a llama.cpp format that quantizes most weights to 4 bits in blocks and keeps a few tensors at higher precision). It's like using a tiny vocabulary, but it can lead to significant speedups in token generation.

The table above shows that with Q4_K_M quantization, Llama 3 8B generated about 2.6 times as many tokens per second as the F16 version! This speed boost typically comes at a slight cost to accuracy, so remember, you're balancing performance against precision.
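Working the ratio out from the table's numbers is a one-liner. This is just the arithmetic, using the two Llama 3 8B figures reported above:

```python
# Llama 3 8B token generation speeds from the table, in tokens/second
speeds = {"Q4_K_M": 88.95, "F16": 33.95}

speedup = speeds["Q4_K_M"] / speeds["F16"]
print(f"Q4_K_M generates {speedup:.2f}x as many tokens per second as F16")
```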

Deep Dive into the Data: Llama 3 Performance on the A40 48GB

Let's break down the data and see what it tells us. It's time to get geeky!

Llama 3 8B: This model, while smaller, demonstrates the power of the A40 48GB. Using Q4_K_M quantization, it achieves a respectable token generation speed of 88.95 tokens per second. This means it could potentially generate over 5,300 tokens per minute! That's a lot of text!

Llama 3 70B: This larger model, while still impressive, experiences a significant drop in performance. This is largely due to its size: every generated token requires reading all of the model's weights from memory, and there are far more of them. The Q4_K_M quantized Llama 3 70B manages a token generation speed of 12.08 tokens per second, which still works out to more than 700 tokens per minute.
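To translate these rates into something you would feel at the keyboard, the sketch below converts each measured speed into tokens per minute and into the time needed for one response. The 500-token response length is a hypothetical example, and the estimate covers generation only, not the time spent processing the prompt.

```python
def seconds_for(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a steady generation rate."""
    return n_tokens / tokens_per_second


RESPONSE_TOKENS = 500  # hypothetical response length

for name, tps in [
    ("Llama 3 8B Q4_K_M", 88.95),
    ("Llama 3 8B F16", 33.95),
    ("Llama 3 70B Q4_K_M", 12.08),
]:
    per_minute = tps * 60
    wait = seconds_for(RESPONSE_TOKENS, tps)
    print(f"{name}: {per_minute:.0f} tokens/minute, "
          f"{wait:.1f} s for {RESPONSE_TOKENS} tokens")
```

At these rates, the 8B Q4_K_M model finishes a 500-token answer in under 6 seconds, while the 70B model needs a bit over 40.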

Comparison of Token Generation Speed with Other Devices

While we're focusing on the NVIDIA A40 48GB, it's helpful to put it in context with other devices. Some other popular choices for LLM inference include:

- Apple M1/M2: These chips are known for their efficiency and are popular for local LLM deployment. However, this benchmark doesn't include numbers for them, so we can't quantify how the A40 48GB compares.

- NVIDIA A100: The A100 is another powerful data-center GPU. The A40 48GB offers more memory than the 40GB A100 (though less than the 80GB variant), while the A100's HBM2/HBM2e memory has considerably higher bandwidth, which generally favors it for token generation.

Token Generation Speed vs. Model Size: A Balancing Act

The size of your model heavily influences its token generation speed. Larger models, while more capable, require more processing power and memory, leading to slower performance. In our data, moving from Llama 3 8B to 70B at the same Q4_K_M quantization drops the speed from 88.95 to 12.08 tokens per second. It's a delicate balance between the power and complexity of your model and the speed and efficiency of your hardware.
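One common rule of thumb for why size matters so much: if generating each token requires reading every weight once, memory bandwidth sets a ceiling on tokens per second. The sketch below compares that rough ceiling with the measured speeds. It assumes the A40's published memory bandwidth of 696 GB/s, nominal parameter counts, and an average of 4.85 bits per weight for Q4_K_M. Treat it as an estimate, not a description of how the benchmark was run.

```python
A40_BANDWIDTH_GBPS = 696  # GB/s, NVIDIA's published A40 memory bandwidth
Q4_K_M_BITS = 4.85        # assumed average bits per weight


def bandwidth_ceiling_tps(n_params_billion: float, bits_per_weight: float,
                          bandwidth_gbps: float = A40_BANDWIDTH_GBPS) -> float:
    """Rough upper bound on tokens/second if all weights are read once per token."""
    weights_gb = n_params_billion * bits_per_weight / 8
    return bandwidth_gbps / weights_gb


# (name, parameters in billions, bits per weight, measured tokens/second)
rows = [
    ("Llama 3 8B Q4_K_M", 8, Q4_K_M_BITS, 88.95),
    ("Llama 3 8B F16", 8, 16, 33.95),
    ("Llama 3 70B Q4_K_M", 70, Q4_K_M_BITS, 12.08),
]

for name, params_b, bits, measured in rows:
    ceiling = bandwidth_ceiling_tps(params_b, bits)
    print(f"{name}: measured {measured:.2f}, ceiling ~{ceiling:.1f} tokens/s "
          f"({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions, every measured speed lands below its ceiling, which is consistent with token generation being limited mainly by how fast the weights can be streamed from memory.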

Implications for Local LLM Deployment

The data tells us that running LLMs locally on the NVIDIA A40 48GB is definitely within reach, especially for smaller models like Llama 3 8B. At roughly 89 tokens per second with Q4_K_M quantization, the 8B model is fast enough for interactive use, and even the 70B model stays usable at about 12 tokens per second.

FAQ: Answers to Your Burning LLM Questions

What are the benefits of running LLMs locally?

Local deployment offers increased privacy and security by keeping your data on your own machine. It also removes the need to rely on cloud services, reducing latency and providing more control over your environment.

How do I choose the right GPU for my LLM?

Consider factors like the size of your model, the desired token generation speed, and your budget. For smaller models, a powerful CPU might suffice. For larger models, a GPU is highly recommended, and the A40 48GB is a great option for demanding workloads.

What are some real-world applications of local LLM deployment?

Local LLMs can power interactive chatbots, personalized text generation, and even AI-assisted code writing!
